Navigating Time Zone Differences: Scheduling Ads For Maximum Impact via @sejournal, @brookeosmundson

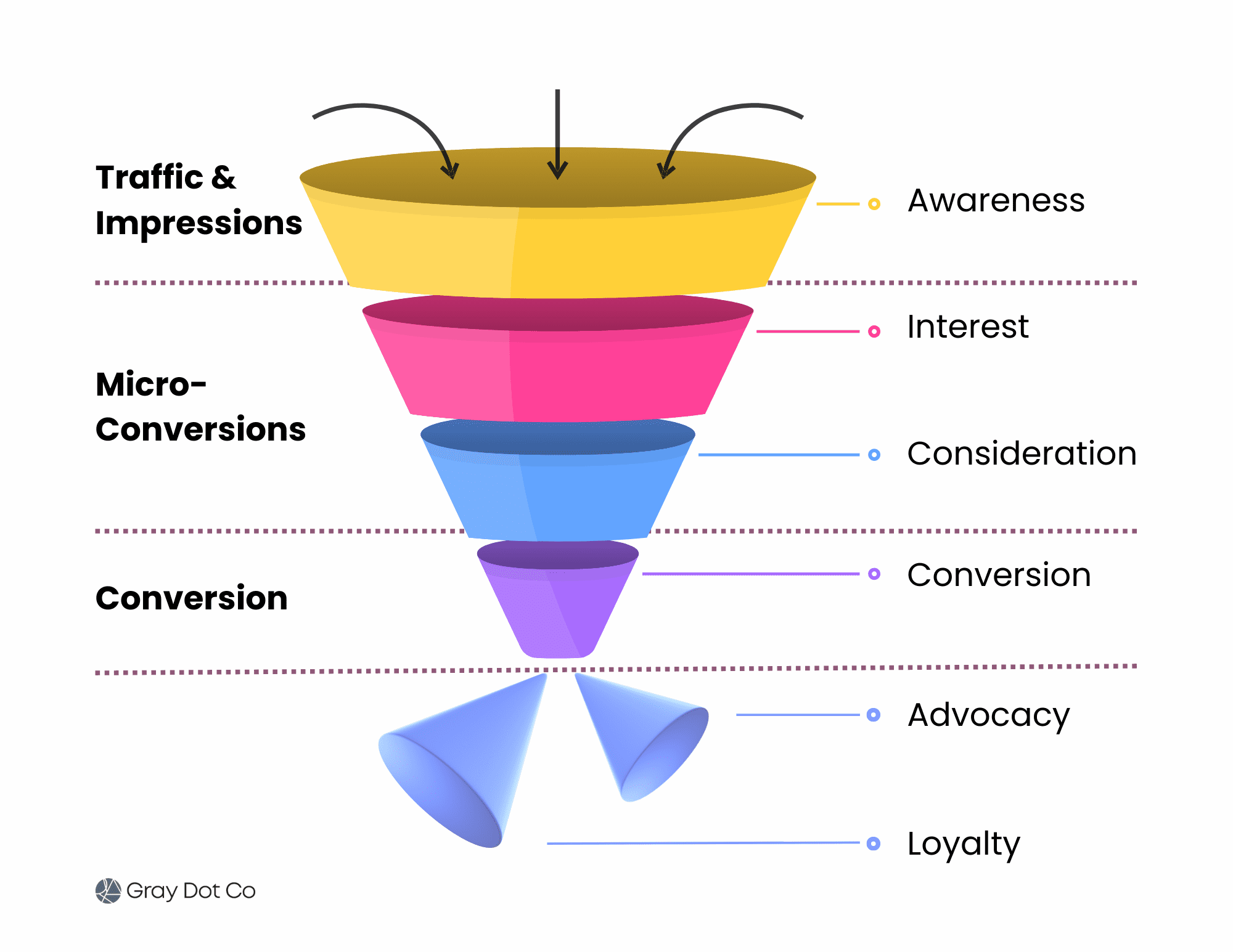

Ad scheduling is a fundamental setting in Google Ads and Microsoft Ads, but it becomes more complex when you manage campaigns across multiple time zones.

Standard scheduling tactics may not cut it if you’re advertising internationally or running campaigns across regions with different peak engagement times.

Poorly timed ads can lead to wasted budget, lower conversion rates, and missed opportunities.

This article goes beyond the basics to cover next-level strategies for scheduling ads effectively across different time zones.

We’ll explore techniques such as localized scheduling, data-driven adjustments, and automation to maximize campaign performance.

Understanding Time Zone Challenges In PPC

When advertising across multiple regions, time zone discrepancies can create challenges that impact ad delivery, engagement, and conversions.

A common pitfall is assuming that a single campaign schedule will work universally. In reality, what works in one location might be completely ineffective in another.

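For example, Google Ads applies ad schedules in the account’s time zone. If your account is set to Eastern Time but your target audience is primarily on the West Coast, a 9 a.m. to 5 p.m. schedule actually runs from 6 a.m. to 2 p.m. for that audience, so your ads start before most people are online and stop mid-afternoon, leading to suboptimal performance.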
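To make the offset concrete, here is a minimal Python sketch that converts a window on the audience's clock into the account's clock. The helper name `to_account_time`, the example date, and the chosen IANA zone names are illustrative assumptions, not part of any ad platform's API. It relies on the standard-library `zoneinfo` module (Python 3.9+) and assumes a time zone database is available on the system.

```python
from datetime import date, datetime, time
from zoneinfo import ZoneInfo


def to_account_time(local_hour, audience_tz, account_tz, on_date):
    """Convert an hour on the audience's clock to the account's clock.

    The date matters because the offset between two zones can change
    when one of them enters or leaves Daylight Saving Time.
    """
    local = datetime.combine(
        on_date, time(hour=local_hour), tzinfo=ZoneInfo(audience_tz)
    )
    return local.astimezone(ZoneInfo(account_tz))


# Hypothetical goal: reach West Coast users from 9 a.m. to 5 p.m. their time,
# using an account that is set to Eastern Time.
day = date(2025, 1, 15)
start = to_account_time(9, "America/Los_Angeles", "America/New_York", day)
end = to_account_time(17, "America/Los_Angeles", "America/New_York", day)

print(start.strftime("%H:%M"), end.strftime("%H:%M"))  # 12:00 20:00
```

In this example, the schedule entered in the Eastern Time account would need to run from noon to 8 p.m. to cover the West Coast workday.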

International campaigns require even more diligence to consider local business hours and consumer behavior patterns.

Another factor is peak engagement hours. While lunchtime or evening hours may be prime time in one country, those same hours could be completely irrelevant in another.

Understanding these nuances is essential for optimizing your ad scheduling strategy.

Advanced Strategies For Scheduling Ads Across Time Zones

Successfully managing ad scheduling across time zones requires a thoughtful approach that goes beyond the basics.

While many advertisers set simple schedules and hope for the best, the real wins come from leveraging automation, data-driven insights, and strategic segmentation.

Whether you’re running campaigns domestically across U.S. time zones or managing international PPC efforts, applying advanced techniques can help ensure your ads are served at the right time for the right audience.

Segmenting Campaigns By Time Zone For Better Control

If you’re running campaigns across multiple time zones, one of the best ways to stay in control is by creating separate campaigns for different regions.

This lets you adjust ad schedules, budgets, and bidding strategies based on local peak performance times rather than forcing a single schedule to work for every location.

For example, an ecommerce brand serving customers in the U.S. and Europe might run separate campaigns for each region.

The U.S. campaign can focus on morning and evening hours when engagement peaks, while the European campaign targets prime shopping hours in local time zones.

While this approach adds complexity, the added control often outweighs the extra management effort. Automating adjustments with rules and scripts can help streamline this process, keeping each campaign optimized without constant manual oversight.

Leveraging Automated Bidding Over Fixed Schedules

Manual ad scheduling has its place, but automated bid strategies like Target ROAS or Maximize Conversions allow you to optimize bids dynamically rather than setting fixed hours.

These AI-driven approaches adjust bids in real time, so ads are more likely to appear when conversion probability is highest, regardless of time zone differences.

For instance, if data shows that users in one region convert at a higher rate between 9 a.m. and 11 a.m. but another region performs better in the evening, automated bidding can bid more aggressively during each region’s stronger window.

Instead of manually adjusting bids every few weeks, let machine learning do the heavy lifting.

Optimizing Scheduling Based On Market-Specific Peak Hours

Different markets have different user behaviors, so it’s crucial to base your scheduling decisions on actual performance data rather than assumptions.

Google Ads’ ad schedule reports and Microsoft Ads’ time-of-day insights can help you identify when users in each region are most active.

For example, if analytics reveal that North American users are most engaged in the evening while European users peak in the morning, your campaigns should reflect that.

Instead of blanketing all markets with a generic ad schedule, tailor your approach to the engagement trends you actually observe in each market.
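As a rough illustration of that analysis, the Python sketch below picks the best-converting local hour per region from exported report rows. The row layout, the `peak_hour_by_region` name, the `min_clicks` threshold, and all of the numbers are made up for illustration; they are not real results or a real export format.

```python
from collections import defaultdict

# Hypothetical rows: (region, local_hour, clicks, conversions)
rows = [
    ("North America", 9, 200, 4),
    ("North America", 20, 180, 9),
    ("Europe", 9, 150, 9),
    ("Europe", 20, 160, 4),
]


def peak_hour_by_region(rows, min_clicks=50):
    """Return the local hour with the highest conversion rate per region.

    Hours with fewer than `min_clicks` clicks are ignored so that a
    handful of lucky clicks cannot decide the schedule.
    """
    totals = defaultdict(lambda: [0, 0])  # (region, hour) -> [clicks, conversions]
    for region, hour, clicks, conversions in rows:
        totals[(region, hour)][0] += clicks
        totals[(region, hour)][1] += conversions

    best = {}  # region -> (hour, conversion rate)
    for (region, hour), (clicks, conversions) in totals.items():
        if clicks < min_clicks:
            continue
        rate = conversions / clicks
        if region not in best or rate > best[region][1]:
            best[region] = (hour, rate)
    return {region: hour for region, (hour, _) in best.items()}


peaks = peak_hour_by_region(rows)
print(peaks)  # {'North America': 20, 'Europe': 9}
```

The click threshold is a design choice: without it, low-volume hours tend to look like winners or losers purely by chance.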

Using Labels To Manage And Adjust Scheduling

One often overlooked yet powerful feature in Google and Microsoft Ads is the use of labels.

Labels let you group campaigns, ad groups, or keywords into easily manageable categories, making it simpler to track and adjust schedules.

For example:

- Tagging campaigns by region allows for easy bulk adjustments when shifting schedules due to seasonal changes or promotional events.

- Labeling time-sensitive ads ensures that you can quickly pause or resume campaigns as needed without sifting through dozens of settings.

- Using automation scripts with labels enables automatic bid adjustments or scheduling changes based on real-time performance.

By applying labels effectively, you can streamline scheduling changes without manually editing each campaign, saving time and reducing errors.
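The idea behind label-driven bulk changes can be sketched in a few lines of Python. This is only a local model of the concept: the `Campaign` class, the label names, and `set_enabled_by_label` are hypothetical and do not call the Google Ads or Microsoft Ads APIs.

```python
from dataclasses import dataclass, field


@dataclass
class Campaign:
    name: str
    labels: set = field(default_factory=set)
    enabled: bool = True


def set_enabled_by_label(campaigns, label, enabled):
    """Pause or resume every campaign carrying `label`; return the names changed."""
    changed = []
    for campaign in campaigns:
        if label in campaign.labels:
            campaign.enabled = enabled
            changed.append(campaign.name)
    return changed


# Hypothetical account with region labels.
campaigns = [
    Campaign("US - Search", {"region:us"}),
    Campaign("UK - Search", {"region:eu", "promo"}),
    Campaign("DE - Search", {"region:eu"}),
]

paused = set_enabled_by_label(campaigns, "region:eu", enabled=False)
print(paused)  # ['UK - Search', 'DE - Search']
```

Because the change is keyed on the label rather than on campaign names, adding a new European campaign only requires tagging it correctly.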

Automating Scheduling Adjustments With Scripts

If you’re managing multiple time zones, Google Ads scripts can be a game-changer.

Rather than adjusting schedules by hand, you can use scripts to modify bids dynamically based on recent performance data.

For example, a script could be set up to boost bids by 20% during high-converting hours and reduce them by 10% when conversions drop. This keeps campaigns optimized while freeing up time to focus on strategy rather than daily bid adjustments.

Scripts also work well with labels. You can program scripts to modify bid strategies for campaigns tagged with specific labels, ensuring changes are applied only to relevant ads.
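Google Ads scripts themselves are written in JavaScript, but the decision rule described above is easy to express on its own. The Python sketch below shows one possible version; the function name `bid_modifier` and the 1.25 and 0.75 thresholds for "high-converting" and "dropping" hours are assumptions chosen for illustration.

```python
def bid_modifier(hour_rate, baseline_rate, high=1.25, low=0.75):
    """Return a bid multiplier for the current hour.

    hour_rate:     conversion rate observed for this hour of the day
    baseline_rate: average conversion rate across all hours
    high / low:    assumed ratios that count as strong or weak hours
    """
    if baseline_rate <= 0:
        return 1.0  # no baseline yet, so leave bids unchanged
    ratio = hour_rate / baseline_rate
    if ratio >= high:
        return 1.20  # boost bids by 20% in high-converting hours
    if ratio <= low:
        return 0.90  # reduce bids by 10% when conversions drop
    return 1.0


base_bid = 2.00
print(round(base_bid * bid_modifier(0.06, 0.04), 2))  # 2.4
print(round(base_bid * bid_modifier(0.02, 0.04), 2))  # 1.8
```

Leaving a neutral band between the two thresholds keeps the rule from flipping bids back and forth on small fluctuations.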

Adjusting For Daylight Saving Time Changes

Another scheduling headache is Daylight Saving Time (DST), which varies by country and can cause misalignment in ad schedules.

A campaign that ran perfectly last month might suddenly be off by an hour if a target region switches to DST on a different date than your account’s time zone does.

To avoid this, maintain a calendar of DST changes in key markets and adjust schedules proactively.

Another option is using automated rules or machine learning-based bid adjustments to handle these shifts without manual intervention.
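A DST calendar does not have to be maintained by hand. As a minimal sketch, the Python function below finds the dates in a given year on which a zone's UTC offset changes, using the standard-library `zoneinfo` module (Python 3.9+). The function name `dst_change_dates` and the two example zones are illustrative choices, and the code assumes a time zone database is available on the system.

```python
from datetime import date, datetime, timedelta
from zoneinfo import ZoneInfo


def dst_change_dates(tz_name, year):
    """Return the dates in `year` on which the zone's UTC offset changes."""
    tz = ZoneInfo(tz_name)
    changes = []
    day = date(year, 1, 1)
    previous = datetime(year, 1, 1, 12, tzinfo=tz).utcoffset()
    while day.year == year:
        # Compare the offset at local noon, after any early-morning switch.
        current = datetime(day.year, day.month, day.day, 12, tzinfo=tz).utcoffset()
        if current != previous:
            changes.append(day)
            previous = current
        day += timedelta(days=1)
    return changes


for zone in ("America/New_York", "Europe/London"):
    print(zone, [d.isoformat() for d in dst_change_dates(zone, 2025)])
```

For 2025 this lists March 9 and November 2 for New York, and March 30 and October 26 for London. During the weeks between those dates, the gap between the two zones is four hours rather than the usual five, which is exactly when a schedule tuned for one market can drift in the other.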

Budget Allocation Based On Regional Performance Trends

Rather than splitting your budget evenly across all time zones, consider allocating more spend to the highest-performing regions based on historical data.

By analyzing performance reports, you can determine which locations deliver the best ROI and adjust budgets accordingly.

For instance, if your data shows that conversions peak in the late evening for Pacific time zone users but decline in the early morning for Eastern time users, shift more budget toward the stronger-performing time periods.

This approach ensures ad spend is being used effectively rather than wasted on time slots that don’t generate conversions.
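One simple way to turn historical performance into a budget split is a proportional allocation with a guaranteed minimum per region. The Python sketch below is an assumption-laden example rather than a recommended formula: `allocate_budget`, the 10% floor, and the conversion counts are all hypothetical.

```python
def allocate_budget(total, conversions_by_region, floor_share=0.10):
    """Split `total` across regions in proportion to past conversions.

    Each region first receives `floor_share` of the total so that weaker
    regions keep collecting data; the remainder follows conversions.
    """
    regions = list(conversions_by_region)
    if floor_share * len(regions) > 1:
        raise ValueError("floor_share is too large for this many regions")

    floor = total * floor_share
    remainder = total - floor * len(regions)
    total_conversions = sum(conversions_by_region.values())

    budgets = {}
    for region in regions:
        if total_conversions > 0:
            share = conversions_by_region[region] / total_conversions
        else:
            share = 1 / len(regions)  # no history yet: split evenly
        budgets[region] = round(floor + remainder * share, 2)
    return budgets


# Hypothetical conversions from the last 30 days.
history = {"Pacific": 60, "Eastern": 30, "Central": 10}
budgets = allocate_budget(1000, history)
print(budgets)  # {'Pacific': 520.0, 'Eastern': 310.0, 'Central': 170.0}
```

The floor is there so that a region with a slow month is not starved of the traffic it would need to show improvement later.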

Mastering Ad Scheduling For Global Success

Effectively navigating time zone differences in Google and Microsoft Ads isn’t just about setting a schedule and forgetting about it.

A winning strategy requires a mix of localized segmentation, automation, and continuous data-driven adjustments.

Instead of seeing time zone variations as a challenge, think of them as an opportunity to refine and optimize your strategy.

By leveraging campaign segmentation, smart bidding, labels, and scripts, you’ll gain greater control over when and where your ads appear – without unnecessary budget waste.

At the end of the day, great PPC management isn’t about simply keeping the lights on. It’s about making smart, strategic moves that maximize impact.

Test, tweak, and refine your approach, and you’ll see the results in both efficiency and performance.

Featured Image: tovovan/Shutterstock