Being successful with restaurant SEO can be a challenge.

Whether you are short on time, wearing a lot of hats, or working without the kind of budget and return on investment (ROI) measurement that other industries have, you might be in a tough spot trying to figure out how to get it done.

No matter what your situation is or your starting point, there are specific strategic and tactical things you can do that will help move your brand forward and increase your online visibility and engagement through SEO.

There are 11 specific things that are important for restaurant SEO that I’ll unpack in this article to help you focus your time on what matters.

1. Define Your SEO & Content Strategies

Before jumping into a myriad of tools, platforms, and engagement channels, define your SEO strategy. This will help to greatly narrow your competition and give you a quicker path to driving quality traffic to your website.

Start by defining the geographic area you want to own (where most of your customers will come from because they either live or work nearby, or are visiting).

Next, research what keyword terms and phrases your audience uses through a trusted keyword research tool like Ahrefs, Moz Pro, Semrush, or others.

To learn more on how to do keyword research, read this keyword research guide.

There are a few distinct groups of terms that you want to classify properly, and each has a different level of competition.

High-Level Restaurant Terms

Terms like “restaurants” and “Kansas City restaurants” are some of the most generic variations a searcher might use.

In the keyword research tools (each tool will vary on locality options), you can set your geographic focus to the area you identified and use both the generic term by itself (“restaurants”) and geographic modifier (“Kansas City restaurants”), as well as other general variations related to what your restaurant is about.

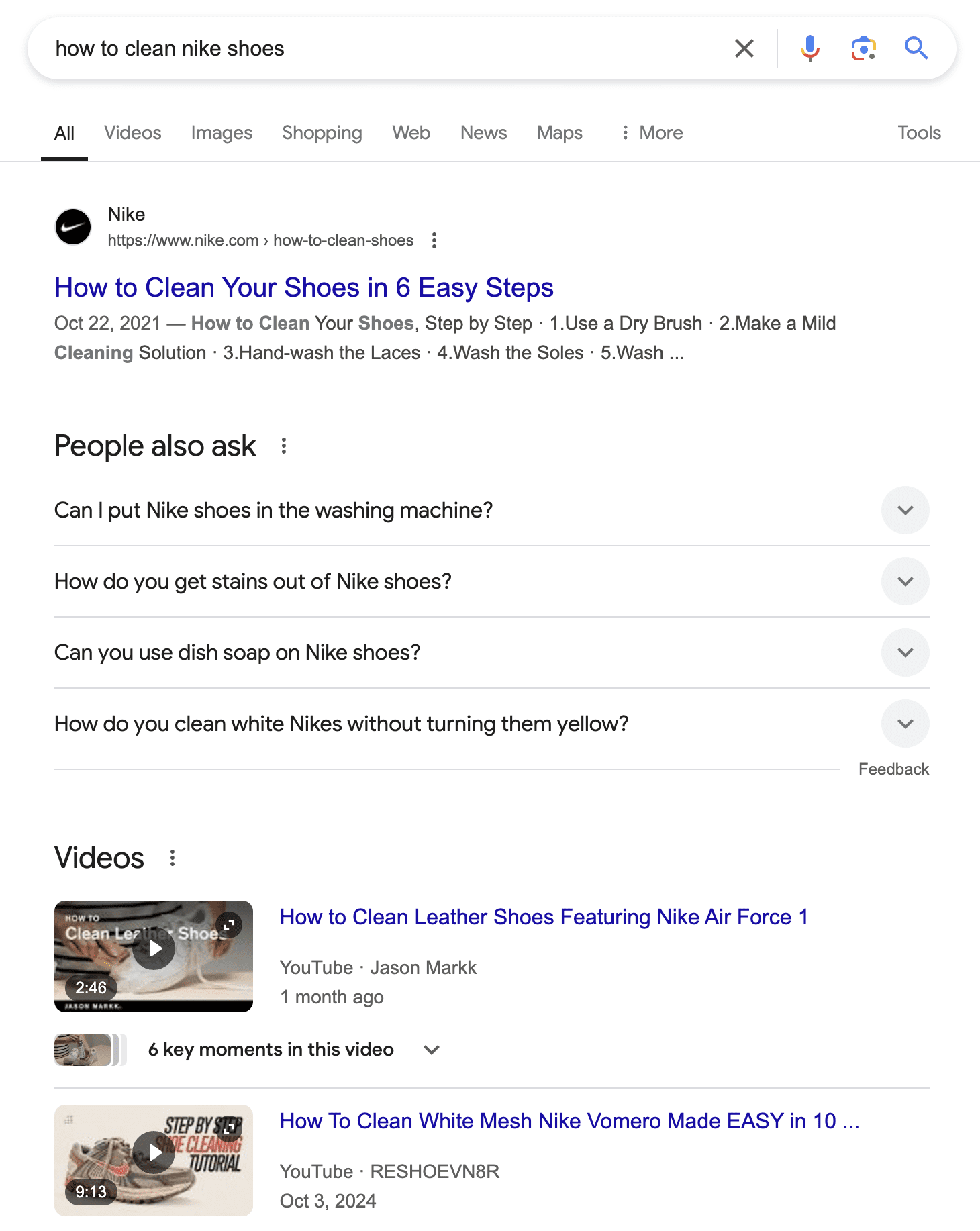

Also, don’t forget about voice search terms and variations like “restaurants near me,” which rely on location settings and the context the search engine has about the searcher. You likely want to be included in those results.

Niche-Specific Terms

The next level relates to the specific categories your restaurant would fall into.

Examples include “Mexican restaurants,” “pizza,” “romantic restaurants,” and other unique features and types of cuisine.

If you’re struggling with what specific categories or wording you should use, take a look at Google Maps (the Google Business Profile listings), Yelp, and TripAdvisor.

Use their filtering criteria in your area to see the general categories they utilize.

Brand Terms

Don’t take it for granted that you’ll automatically rise to the top of brand searches. Know how many people are searching for your restaurant by name and compare that to the high-level and niche-specific search volume.

Ensure your site outranks the directory, reservation (if applicable), and social sites in your space for your restaurant, as the value of people coming to your site is higher and trackable.

Once you’re armed with search terms and volume data, you can narrow your focus to the specific terms that fit your restaurant at the high, niche-specific, and brand levels.

Covering this spectrum helps you decide what to measure and what content to create.
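To make the three groupings concrete, here is a minimal Python sketch that sorts a keyword list into brand, niche, and high-level tiers and totals the search volume for each. The restaurant name, term lists, and volumes are all made up for illustration; in practice, the rows would come from an export of whichever keyword research tool you use.

```python
from collections import defaultdict

# Hypothetical inputs for illustration; replace with your own research.
BRAND_NAME = "luigi's trattoria"
NICHE_TERMS = {"italian", "pizza", "pasta", "romantic"}
GENERIC_TERMS = {"restaurant", "restaurants", "places to eat"}

def classify(keyword: str) -> str:
    """Assign a keyword to the brand, niche, or high-level tier."""
    text = keyword.lower()
    if BRAND_NAME in text:
        return "brand"
    # Niche is checked before generic so "italian restaurants" counts as niche.
    if any(term in text for term in NICHE_TERMS):
        return "niche"
    if any(term in text for term in GENERIC_TERMS):
        return "high-level"
    return "unclassified"

def volume_by_tier(rows):
    """Sum monthly search volume per tier from (keyword, volume) pairs."""
    totals = defaultdict(int)
    for keyword, volume in rows:
        totals[classify(keyword)] += volume
    return dict(totals)

# Made-up volumes, not real data.
sample = [
    ("kansas city restaurants", 9000),
    ("restaurants near me", 12000),
    ("italian restaurants kansas city", 1500),
    ("best pizza kansas city", 2200),
    ("luigi's trattoria menu", 300),
]
print(volume_by_tier(sample))
```

Comparing the brand total against the high-level and niche totals gives you a rough sense of how much demand already knows your name versus how much you still have to win.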

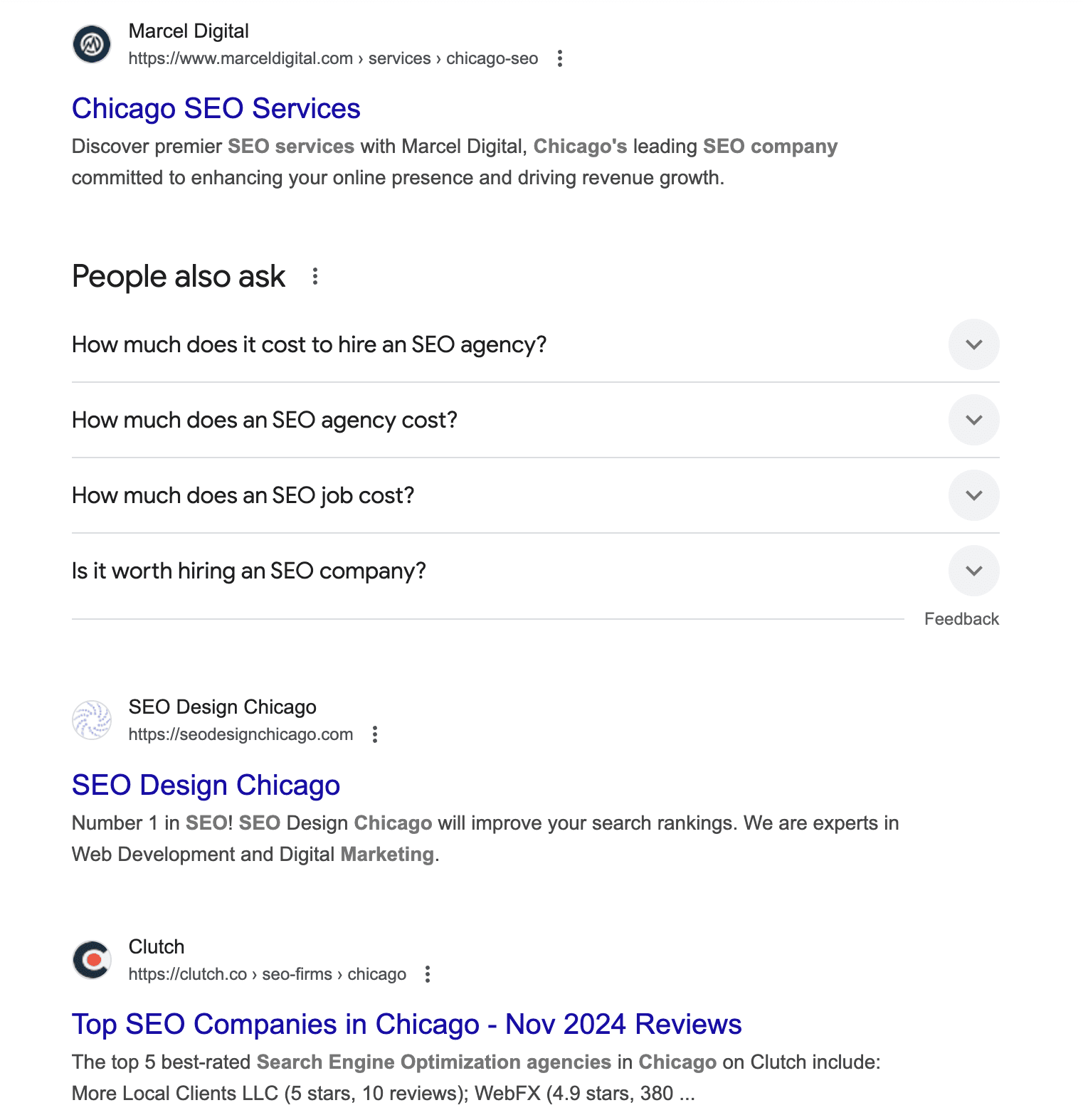

2. Dominate In Local Search

To dive into local search, start by claiming your restaurant’s listings, standardizing their data, and optimizing them across all of the major and relevant local search properties.

This includes a mix of search engine directories, social media sites, and industry-specific directory sites.

Moz Local and Yext are two popular tools (there are many others available) that can help you understand what directories and external data sources are out there so you can ensure they are updated.

Accurate NAP (name, address, phone) information that is consistent across all data sources is a critical foundational element of local SEO.
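If you keep a simple record of how each directory lists your restaurant, a short script can flag the ones that have drifted. The sketch below is one possible approach, not a standard tool: the listings, the business name, and the small abbreviation map are all hypothetical, and the phone handling assumes US numbers.

```python
import re

def normalize_phone(phone: str) -> str:
    """Keep digits only and drop a leading US country code."""
    digits = re.sub(r"\D", "", phone)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return digits

# Small, incomplete abbreviation map; extend it for your own addresses.
ABBREVIATIONS = {"street": "st", "avenue": "ave", "suite": "ste", "boulevard": "blvd"}

def normalize_text(value: str) -> str:
    """Lowercase, strip punctuation, and shorten common street words."""
    words = re.sub(r"[^\w\s]", "", value.lower()).split()
    return " ".join(ABBREVIATIONS.get(word, word) for word in words)

def normalize_nap(listing: dict) -> tuple:
    """Reduce a listing to a comparable (name, address, phone) tuple."""
    return (
        normalize_text(listing["name"]),
        normalize_text(listing["address"]),
        normalize_phone(listing["phone"]),
    )

def find_mismatches(reference: dict, listings: dict) -> list:
    """Return the sources whose NAP differs from the reference listing."""
    expected = normalize_nap(reference)
    return [source for source, data in listings.items() if normalize_nap(data) != expected]

# Hypothetical listings for illustration.
reference = {"name": "Luigi's Trattoria", "address": "123 Main Street, Suite 4", "phone": "(816) 555-0100"}
listings = {
    "google": {"name": "Luigi's Trattoria", "address": "123 Main St., Ste 4", "phone": "+1 816-555-0100"},
    "yelp": {"name": "Luigis Trattoria", "address": "123 Main Street Suite 4", "phone": "816.555.0100"},
    "tripadvisor": {"name": "Luigi's Trattoria", "address": "123 Main Street", "phone": "(816) 555-0199"},
}
print(find_mismatches(reference, listings))
```

The normalization deliberately forgives punctuation and common abbreviations so that only substantive differences are flagged, such as the missing suite and the different phone number in the last listing. You would still want to clean up the cosmetic differences by hand so that every source matches exactly.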

Beyond that, you can then work on optimizing the fields of information, like the business description and business categories, to align with your focus terms identified in your keyword research.

Put your focus on the directories that matter.

Start with Google Business Profile, then branch out to Yelp, TripAdvisor, and other restaurant-specific directories and data sources. Some of them could be obscure, niche-specific, or not seem very significant, but they add up.

Set reminders or tasks to come back to your Google Business Profile on a regular basis to update content, including photos, specials, and offers, and to monitor engagement and review activity (more on both of those topics below).

All of this will work together to grow your online visibility.

3. Engage With Customers On Social Media

Even though social media’s direct impact on SEO has long been debated, we know that social media engagement can drive users to your site.

Social media can be a powerful touchpoint of the customer journey, showcasing what customers can expect to experience at your restaurant.

A strong social presence often correlates with a strong organic search presence as content, engagement, and popularity align with the important SEO pillars of relevance and authority.

Develop a social media strategy and follow through with implementation.

Make sure to engage with followers and reply to inquiries promptly. How you communicate online sets a perception of your overall customer service and approach.

Find your audience, engage them, and get them to influence others on your behalf.

Ultimately, through engagement with fans and promoting content on social media that funnels visitors to your main website, you will see an increase in visits from social networks.

This will then correlate with the benefits from the rest of your SEO efforts.

4. Encourage Reviews & Testimonials

It’s nearly impossible to search for a restaurant and not see review and rating scores in the search results. That’s because searchers are more likely to click on listings with higher star ratings.

Reviews are often considered part of a social media strategy and are an engagement tactic, but have a broader impact on traffic to your site through search results pages as well.

Through the use of structured data markup, you can make your star ratings eligible to appear in search results, which gives users another compelling reason to click on your site versus your competitor’s.

If your online ratings don’t reflect the quality of your restaurant, come up with a review strategy now. Getting as many reviews as possible will help bring up your score before you implement the code that pulls the ratings into the search engine results pages (SERPs).

A higher star rating likely means a higher click-through rate to your site – and more foot traffic.
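To get a feel for how much review activity it takes to move a score, here is a small Python calculation. It assumes the best case, where every new review is five stars, so treat the result as a floor rather than a forecast. The review counts are made up.

```python
import math
from fractions import Fraction

def five_star_reviews_needed(review_count: int, total_stars: int, target: str) -> int:
    """Smallest number of new 5-star reviews that lifts the average to target.

    target is passed as a string such as "4.2" so the arithmetic stays exact.
    """
    goal = Fraction(target)
    if not 0 < goal < 5:
        raise ValueError("target must be between 0 and 5, exclusive")
    shortfall = goal * review_count - total_stars
    if shortfall <= 0:
        return 0  # already at or above the target
    # Each new 5-star review closes (5 - goal) stars of the shortfall.
    return math.ceil(shortfall / (5 - goal))

# 40 reviews totaling 152 stars is a 3.8 average (made-up numbers).
print(five_star_reviews_needed(40, 152, "4.2"))  # prints 20
```

In this example, a restaurant sitting at 3.8 stars across 40 reviews needs at least 20 more five-star reviews to reach 4.2, which shows why it pays to start the review strategy early.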

5. Create Unique Content

If you have a single location, your job is a lot easier than the multi-location local or national chain.

However, you have to stand out from the competition by ensuring you have enough unique content on your website.

Having a wealth of engaging and helpful content on your site will serve you well if it is valuable to your prospects and customers. Building a strong brand will translate to better rankings, higher brand recall, and greater brand affinity.

Keep in mind that content doesn’t all have to be written copy; you can present your menus, in-house promotions, and more through video, photography, and graphics.

The search engines are focused on context and not just the keywords on your site.

By identifying and regularly generating new content, you also can keep the pipeline full of engaging material that helps you stand out from your competition.

For example, if you have a niche restaurant, embrace that and set yourself apart from the generic chain down the street (no offense if you own, operate, or do marketing for a chain – you have a different challenge of scaling your efforts).

Share information about the founders, the culture, and most importantly – the product.

Give details about your menu, including sourcing of ingredients, how you developed recipes, and the compelling reason your chicken marsala is the best in town.

6. Consider Content Localization

Again, single-location restaurants have an easier road here. Based on decisions you’ve made about your market area, make sure you provide enough cues and context to users and the search engines as to where your restaurant is and what area it serves.

Sometimes, the search engines and out-of-town visitors don’t fully understand the unofficial names of neighborhoods and areas.

By providing content that is tied into the community and doesn’t simply assume that everyone knows where you’re located, you can help everyone out.

One example of this is a 100-location chain that started small with a single paragraph for each location written in a way tailored to the store, local history, neighborhood, and community engagement.

From there, we were able to find other areas to scale, and it worked well to differentiate stores from each other.

Location names and context can be nuanced and confusing, and addresses alone can be misleading, so think about how Google will interpret them.

These are important factors to consider so you aren’t trying to have a location compete with too broad of a geographic area for search rankings.

7. Apply Basic On-Page SEO Best Practices

Without going into the details of all on-page and indexing optimization techniques, I want to encourage you not to skip or ignore the best practices of on-page SEO.

Your pages need to be indexed to have any potential for visibility, and they need on-page SEO to ensure your content is classified properly.

You can spend a lot of time on a full SEO strategy, but if you’re just getting started, I recommend putting the rest aside and starting with these two areas: indexing and on-page optimization.

To ensure your site is crawled and indexed properly now and in the future, check your robots.txt and XML sitemap. Set up Google Search Console to look for errors.
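As a quick sanity check on robots.txt, the sketch below uses Python’s standard `urllib.robotparser` module to test whether your most important pages are blocked and whether a sitemap is declared. The robots.txt content, the example.com domain, and the page list are placeholders, and `site_maps()` requires Python 3.8 or later.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content; example.com and the paths are placeholders.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /menu/

Sitemap: https://www.example.com/sitemap.xml
"""

IMPORTANT_PAGES = [
    "https://www.example.com/",
    "https://www.example.com/menu/",
    "https://www.example.com/contact/",
]

def blocked_pages(robots_txt: str, urls: list, user_agent: str = "Googlebot") -> list:
    """Return the URLs that the given robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]

def declared_sitemaps(robots_txt: str) -> list:
    """Return the Sitemap URLs listed in robots.txt (empty list if none)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.site_maps() or []

print(blocked_pages(ROBOTS_TXT, IMPORTANT_PAGES))
print(declared_sitemaps(ROBOTS_TXT))
```

Here the menu page is accidentally disallowed, which is exactly the kind of mistake worth catching. To check a live site instead of a pasted string, the same parser can load the file with its `set_url()` and `read()` methods. Google Search Console remains the place to confirm how Google actually crawls and indexes the site.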

When it comes to on-page, ensure that you have unique and keyword-specific page URLs, title tags, meta description tags, headings, page copy, and image alt attributes.

This sounds like a lot, but start with your most important pages, like your home, menu, about, and contact pages, and go from there as time permits.
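For a first pass over those key pages, a small script can confirm that the basic on-page elements exist. This is a minimal sketch using Python’s built-in `html.parser`; the sample page and the specific checks are my own assumptions about what to flag, and it only tests for presence, not for quality or keyword fit.

```python
from html.parser import HTMLParser

class OnPageAudit(HTMLParser):
    """Collect the title, meta description, h1 headings, and images without alt text."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = ""
        self.h1_headings = []
        self.images_missing_alt = []
        self._current_tag = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag in ("title", "h1"):
            self._current_tag = tag
            if tag == "h1":
                self.h1_headings.append("")
        elif tag == "meta" and (attributes.get("name") or "").lower() == "description":
            self.meta_description = attributes.get("content") or ""
        elif tag == "img" and not (attributes.get("alt") or "").strip():
            self.images_missing_alt.append(attributes.get("src") or "")

    def handle_endtag(self, tag):
        if tag == self._current_tag:
            self._current_tag = None

    def handle_data(self, data):
        if self._current_tag == "title":
            self.title += data
        elif self._current_tag == "h1":
            self.h1_headings[-1] += data

def audit(html: str) -> dict:
    """Return the page title and a list of missing on-page elements."""
    parser = OnPageAudit()
    parser.feed(html)
    parser.close()
    issues = []
    if not parser.title.strip():
        issues.append("missing title tag")
    if not parser.meta_description.strip():
        issues.append("missing meta description")
    if not parser.h1_headings:
        issues.append("missing h1 heading")
    if parser.images_missing_alt:
        issues.append("images without alt text: " + ", ".join(parser.images_missing_alt))
    return {"title": parser.title.strip(), "issues": issues}

# Hypothetical page for illustration.
SAMPLE_PAGE = """
<html><head>
<title>Luigi's Trattoria | Italian Restaurant in Kansas City</title>
</head><body>
<h1>Wood-Fired Pizza and Handmade Pasta</h1>
<img src="/img/patio.jpg" alt="Patio seating at Luigi's Trattoria">
<img src="/img/marsala.jpg">
</body></html>
"""
print(audit(SAMPLE_PAGE))
```

The sample page comes back with two issues: no meta description and one image without alt text. Running the same check against your home, menu, about, and contact pages gives you a short, prioritized to-do list.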

8. Think Mobile First

Mobile accounts for a high percentage of visits to restaurant websites. Google now crawls the mobile version of a website to understand its content.

Hopefully, you have a responsive website or one that passes the necessary mobile-friendly tests.

But that’s just the beginning when it comes to mobile.

It’s also critical to think about page load speed and providing a great mobile user experience.

9. Implement Schema “Restaurant” Markup

Another area where we can build context for the search engines and gain exposure to more users in the search results is by using structured data.

In the restaurant industry, implementing the Schema.org Restaurant markup is a must.

This task requires either a developer or a website platform or content management system with the right plugins or built-in options.
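If you are wondering what that markup looks like, the Python sketch below builds a JSON-LD block using the Schema.org `Restaurant` type and a handful of its properties. The business details are hypothetical, and this covers only a basic subset; your platform or developer may generate something more complete.

```python
import json

def restaurant_jsonld(info: dict) -> str:
    """Build a Schema.org Restaurant JSON-LD string from a plain dict."""
    data = {
        "@context": "https://schema.org",
        "@type": "Restaurant",
        "name": info["name"],
        "url": info["url"],
        "telephone": info["phone"],
        "servesCuisine": info["cuisine"],
        "priceRange": info["price_range"],
        "hasMenu": info["menu_url"],
        "address": {
            "@type": "PostalAddress",
            "streetAddress": info["street"],
            "addressLocality": info["city"],
            "addressRegion": info["region"],
            "postalCode": info["postal_code"],
            "addressCountry": info["country"],
        },
        "openingHoursSpecification": [
            {
                "@type": "OpeningHoursSpecification",
                "dayOfWeek": days,
                "opens": opens,
                "closes": closes,
            }
            for days, opens, closes in info["hours"]
        ],
    }
    return json.dumps(data, indent=2)

# Hypothetical business details for illustration.
SAMPLE_INFO = {
    "name": "Luigi's Trattoria",
    "url": "https://www.example.com/",
    "phone": "+1-816-555-0100",
    "cuisine": "Italian",
    "price_range": "$$",
    "menu_url": "https://www.example.com/menu/",
    "street": "123 Main Street, Suite 4",
    "city": "Kansas City",
    "region": "MO",
    "postal_code": "64105",
    "country": "US",
    "hours": [
        (["Tuesday", "Wednesday", "Thursday", "Sunday"], "11:00", "21:00"),
        (["Friday", "Saturday"], "11:00", "23:00"),
    ],
}
print(restaurant_jsonld(SAMPLE_INFO))
```

The printed JSON goes inside a `<script type="application/ld+json">` tag on the page. Keep the name, address, and phone identical to what you use in your directory listings, and run the result through a structured data testing tool before publishing.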

10. Measure Your Efforts

This could have been tip No. 1, but I’m including it here, as it is important throughout the process. With the previous nine tips, there’s something to do and implement.

But before you embark on any aspect of optimization, make sure those efforts are measurable.

When investing in your strategy, you want to know what aspects are working, which ones aren’t, and where your efforts were (and are) best producing a return on investment.

Track visibility, engagement, and conversion metrics as deeply as you can connect them to your business.

Beyond that, you’ll need to identify the right progress metrics tied to goals to know you’re moving in the right direction.
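As one way to tie those metrics together, the sketch below rolls up impressions, clicks, and conversions by keyword tier and computes a click-through rate and a conversion rate for each. The rows are invented, and “conversion” here is whatever action you decide to track, such as a reservation click or a phone call.

```python
def summarize(rows):
    """Aggregate (tier, impressions, clicks, conversions) rows per tier."""
    summary = {}
    for tier, impressions, clicks, conversions in rows:
        totals = summary.setdefault(
            tier, {"impressions": 0, "clicks": 0, "conversions": 0}
        )
        totals["impressions"] += impressions
        totals["clicks"] += clicks
        totals["conversions"] += conversions
    for totals in summary.values():
        # Guard against division by zero for tiers with no impressions or clicks.
        totals["ctr"] = (
            totals["clicks"] / totals["impressions"] if totals["impressions"] else 0.0
        )
        totals["conversion_rate"] = (
            totals["conversions"] / totals["clicks"] if totals["clicks"] else 0.0
        )
    return summary

# Made-up monthly numbers, not real data.
rows = [
    ("brand", 1000, 400, 80),
    ("niche", 5000, 250, 25),
    ("high-level", 20000, 400, 20),
    ("niche", 3000, 150, 15),
]
for tier, totals in summarize(rows).items():
    print(f"{tier}: CTR {totals['ctr']:.1%}, conversion rate {totals['conversion_rate']:.1%}")
```

Tracking the same rollup month over month shows whether visibility gains in a tier are actually turning into visits and actions.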

11. Don’t Ignore AI

This is less of a specific recommendation or tactic and more of a wide-reaching one. AI provides a lot of opportunities to scale content, create efficiencies, and do more with less.

Whether you’re leveraging AI tools natively, using SaaS products to help you research, optimize, and measure efforts, or relying on things like AI Overviews in Google to engage with users in search, it is hard to ignore.

Know that while AI is helpful to further scale efforts and be where searchers are finding content, you don’t want to abandon your brand or generate content that is clearly generic and not human-generated.

Don’t ignore AI, but use it with care to avoid losing out on the unique value important for search and searchers for your restaurant.

Restaurant SEO Matters For Visibility And Traffic

There are unique challenges for restaurant SEO. However, if you can dedicate the time and effort to a strategy and follow through on tactics and measurement, it can be highly rewarding and profitable as well.

While you might not be able to directly attribute ROI to SEO performance, you can find correlations between a stronger online brand presence and visibility and the customer volume at your location(s).

I encourage you to nail down your strategy and dedicate focus to the tactics to see it through.

Featured Image: PeopleImages.com – Yuri A