For this week’s Ask An SEO, the question asked was:

“Do I need to rethink my content strategy for LLMs and how do I get started with that?”

To answer, I’m going to explain the non-linear path customers take through the funnel and where large language models (LLMs) show up in it.

From rethinking traffic expectations to conducting an audit on sentiment picked up by LLMs, I will talk about why brand identity matters in building the kind of reputation that both users and machines recognize as authoritative.

You can watch this week’s Ask An SEO video and read the full transcript below.

Editor’s note: The following transcript has been edited for clarity, brevity, and adherence to our editorial guidelines.

Don’t Rush Into Overhauling Your Strategy

Off the bat, I strongly advise you not to rush into this. I know there’s an extreme amount of noise and buzz and advice out there on social media saying that you need to rethink your strategy because of LLMs, but this thing is very, very far from settled.

For example, or most notably, AI Mode is still not in traditional search results. When that happens, when Google moves the AI Mode tab from being a tab into the main search results, the whole ecosystem is set for another upheaval, whatever that looks like, because we don’t actually know what that will look like.

I personally think that Google’s Gemini demo (the one they did way, way back, where they showed customized results for certain types of queries with certain answer formats) might be what AI Mode ends up resembling more than what it does right now, which is purely a text-based output that sort of aligns with ChatGPT.

I think Google will differentiate those two products once it moves AI Mode over from the tab into the main search results. So, things are not settled yet. And if you think they are, they’re not. They are not settled yet.

Rethinking Traffic Expectations From LLMs

The other thing I want you to rethink is the traffic expectations from LLMs.

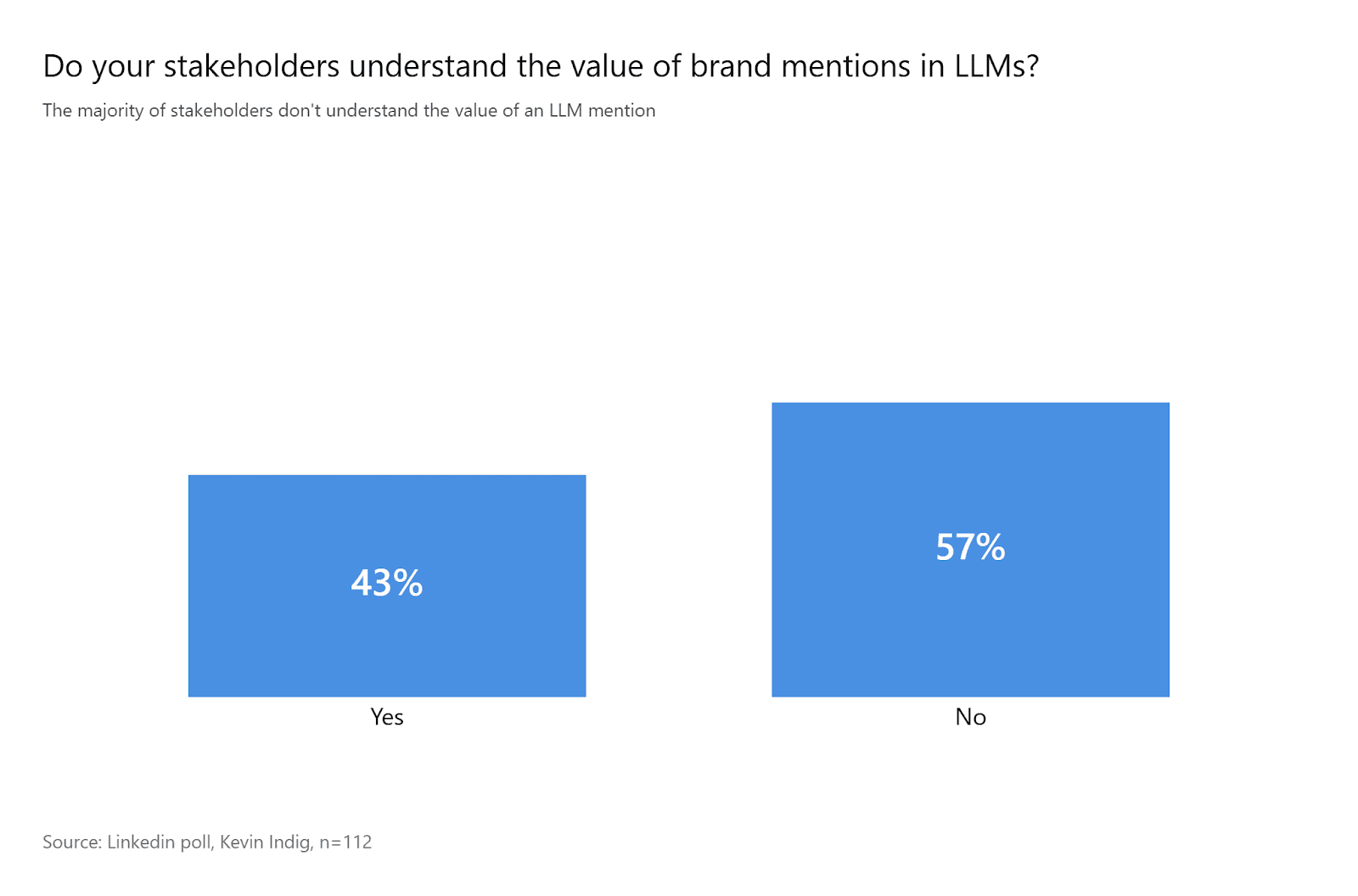

There’s been a lot of talk about citations and traffic – citations and traffic, citations and traffic. I don’t think citations, and therefore traffic, are the main diamond within the LLM ecosystem. I believe mentions are. And I don’t think that’s anything really new, by the way.

Traditionally, the funnel has been messy, and Google’s been talking about that for a long time. Now, you have an LLM that may be a starting point or a step in that messy funnel, but I don’t believe it’s fundamentally different.

I’ll give you an example. If I’m looking for a pair of shoes, I might go to Google and search, [Are these Nike shoes any good?]. I might look at a website, then go to Amazon and look at the actual product.

Then I might go to YouTube, see a review of the product, maybe watch a different one, go back to Amazon, have a look, check Google Shopping to see if it’s cheaper there, and then head back to Amazon to buy it.

Now, you have an LLM thrown into the mix, and that’s really the main difference. Maybe now, the LLM gives me the answer. Or maybe Google gives me the answer. Then I go to Amazon, look at the product, go to Google Shopping to see if it’s cheaper, watch a YouTube review, maybe switch things up a bit, go back to ChatGPT, see if it recommends something different this time, go through the whole process, and eventually buy on Amazon. That’s just me, personally.

It’s important to realize that the paradigm has been around for a while. But if you’re thinking of LLMs as a source of traffic, I highly recommend you don’t. They are not necessarily built for that.

ChatGPT, specifically, is not built for citations or to offer traffic. It’s built to provide answers and to be interactive. You’ll notice you usually don’t get a citation in ChatGPT until the third, fourth, or fifth prompt, whatever it is.

Other LLMs, like AI Mode or Perplexity, are a little bit more citation or link-based, but still, their main commodity is the output, giving you the answer and the ability to explore further.

So, I’m a big believer that the brand mention is far more important than the actual citation, per se. Also, the citation might just be the source of information. If I’m asking, “Are Nike shoes good?” I might get a review from a third-party website, say, the CNET of shoes, and even if I click there, that’s not where I’m going to buy the actual shoe.

So, the traffic in that case isn’t even the desirable outcome for the brand. You want users to end up where they can buy the shoe, not just read a review of it.

The Importance Of Synergy And Context With Content

The next thing is the importance of synergy and context with your content. I’ve heard people say that, to be successful with LLMs, the top citations are just the ones that already do well on Google. Not necessarily.

There might be a correlation, but not causation. LLMs are trying to do something different than search engines. They’re trying to synthesize the web to serve as a proxy for the entire web. So, what happens with your content across the web matters way more: How your content is talked about, where it’s talked about, who’s talking about it, and how often it’s mentioned.

That doesn’t mean what’s on your site doesn’t factor in, but it’s weighted differently than with traditional search engines. You need to give the LLM the brand context to realize that you have a digital presence in this area, that you’re someone worth mentioning or citing.

Again, I’d focus more on mentions. That’s not to say citations aren’t important (they are), but mentions tend to carry more weight in this context.

Conducting An Audit

The way to go about this, in my opinion, is to conduct an audit. You need to see how the LLM is talking about the topic.

LLMs are notoriously positive and tend to loop in tiny bits of negative sentiment within otherwise positive answers. I was looking at a recent dataset. I don’t have the formal numbers, but I can tell you they’re built to lean neutral or net positive.

For example, if I ask, “Are the Dodgers good?” the LLM (in this case, I was looking at AI Mode) will say, “Yes, the Dodgers are good…” and go on about that. If I ask, “Are the Yankees good?” and let’s say two or three weeks ago they weren’t doing well, it won’t say, “Yes, the Yankees are good.” It’ll say, “Well, if you look at this and you look at that, overall you might say the Yankees are good.”

Those are two very different answers. They’re both trying to be positive, but you have to read between the lines to understand how the LLM is actually perceiving the brand and what possible user hesitancies or skepticism are bound up in that. Or where are the gaps?

For instance, if I ask, “Is Gatorade a great drink?” and it answers one way, and then I ask, “Is Powerade a good drink?” and it answers slightly differently, you have to notice why that’s happening. Why does it say, “Gatorade is great,” but “Powerade is loved by many”? You have to dig in and understand the difference.

Running an audit helps you see how the LLM is treating your brand and your market. Is it consistently bringing up the same user points of skepticism or hesitation? If I ask, “What’s a good alternative to Folgers coffee?” AI Mode might say, “If you’re looking for a low-cost coffee, Folgers is an option. But if you want something that tastes better at a similar price, consider Brand X.”

That tells you something: There’s a negative sentiment around Folgers and its taste. That’s something you should be picking up on for your content and brand strategy. The only way to know that is to conduct an audit, read between the lines, and understand what the LLM is saying.
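As a rough illustration of what “reading between the lines” could look like at scale, here is a minimal Python sketch. It assumes you have already collected LLM answers by hand (it does not call any LLM), and the cue lists `HEDGES` and `CONCERNS`, along with the function names, are hypothetical placeholders you would tune for your own brand and market. It simply counts hedging and concern phrases per brand so differences like “is great” versus “is loved by many” stand out.

```python
from collections import Counter

# Hypothetical cue phrases; these are assumptions, not a validated lexicon.
HEDGES = ["overall", "you might say", "loved by many", "is an option",
          "if you're looking for"]
CONCERNS = ["low-cost", "tastes better", "but if you want"]


def count_cues(text, cues):
    """Count how often each cue phrase appears in one answer."""
    # Normalize curly apostrophes so pasted answers match the cue lists.
    lowered = text.lower().replace("\u2019", "'")
    return Counter({cue: lowered.count(cue) for cue in cues if cue in lowered})


def audit(responses):
    """Summarize hedging and concern cues per brand.

    `responses` maps a brand name to a list of answers copied from an LLM.
    """
    report = {}
    for brand, answers in responses.items():
        hedges, concerns = Counter(), Counter()
        for answer in answers:
            hedges += count_cues(answer, HEDGES)
            concerns += count_cues(answer, CONCERNS)
        report[brand] = {
            "answers": len(answers),
            "hedges": dict(hedges),
            "concerns": dict(concerns),
        }
    return report


# Example answers paraphrased from the scenarios above.
sample = {
    "Gatorade": ["Gatorade is great."],
    "Powerade": ["Powerade is loved by many."],
    "Folgers": [
        "If you're looking for a low-cost coffee, Folgers is an option. "
        "But if you want something that tastes better at a similar price, "
        "consider Brand X."
    ],
}
report = audit(sample)
for brand, summary in report.items():
    print(brand, summary)
```

A phrase count like this is only a starting point. It can flag which answers deserve a closer read, but you still have to read them to understand why the LLM is hedging.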

Shaping What LLMs Say About Your Brand

The way to get LLMs to say what you want about your brand is to start with a conscious point of view: What do you want LLMs to say about your brand? Which really comes down to: what do you want people to say about your brand?

And the only way to do that is to have a very strong, focused, and conscious brand identity. Who are you? What are you trying to do? Why is that meaningful? Who are you doing it for? And who is interested in you because of it?

Your brand identity is what gives your brand focus. It gives your content marketing focus, your SEO strategy focus, your audience targeting focus, and your everything focus.

If this is who you are, and that is not who you are, then you’re not going to write content that’s misaligned with who you are and what you’re trying to do. You’re not going to dilute your brand identity by creating content that’s tangential or inconsistent.

If you want third-party sites and people around the web to pick up who you are and what you’re about, to build that presence, you need a very conscious and meaningful understanding of who you are and what you do.

That way, you know where to focus, where not to, what content to create, what not to, and how to reinforce the idea around the web that you are X and relevant for X.

It sounds simple, but developing all of that, making sure it’s aligned, and auditing all the way through to ensure it’s actually happening … that’s easier said than done.

Final Thoughts

LLMs may shift how your customers find information about your brand, but chasing citations and clicks isn’t a solid strategy.

Despite the chaos in AI and search in the age of LLMs, marketers need to stick to the fundamentals: brand identity, trust, and relevance still matter.

Focus on brand identity to build your reputation, ensuring that both users and search engines recognize your brand as an authority in your niche.

Featured Image: Paulo Bobita/Search Engine Journal