It Works Until It Doesn’t: AI Content Strategies That Backfire via @sejournal, @lilyraynyc

Over the past few years, I’ve watched AI content creation tools rapidly gain adoption across the SEO/GEO industry. These tools offer the promise of leveraging AI to automate content creation, reduce headcount, cut costs, and scale output.

As someone who has spent the last decade helping companies recover from Google algorithm updates, my spidey senses started tingling the minute I heard the pitches for many of these tools. Even before AI was part of the conversation, Google already had a long history of reducing the visibility of automated content in its search results.

Despite recent advancements in the quality of AI outputs, I’ve remained skeptical that publishing AI-generated or AI-assisted content at scale can drive sustained performance in Google’s search results. This is especially true now, given how Google updated its ranking systems in recent years specifically to demote overly optimized, SEO-driven content.

Over the past several months, I have been monitoring more than 220 websites that were publicly identified, either by themselves or by their AI content vendors, as customers of various AI content creation, automation, and scaling platforms. These tools fully write articles, assist with writing them, or use AI automations and workflows to support content creation. Many of these tools also now focus on driving visibility, mentions, and citations in AI search responses (AEO/GEO).

I wanted to analyze what happens after the claims of big wins.

A consistent pattern emerged across the 220+ sites I’ve been monitoring, and I believe it is concerning enough to be worth writing about: it works, until it doesn’t.

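Below, I will share some of the trends I am observing, plus a variety of common SEO/GEO approaches I believe may be causing declines in organic search (and consequently, AI search) visibility. As a reminder, what is dangerous for SEO can also be dangerous for AI search, largely because of retrieval-augmented generation (RAG): AI search tools often pull from search engine results to ground their answers, so pages that lose search visibility are also less likely to be retrieved and cited.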

Methodology & Disclaimers

Before we dive in, it’s important to set the stage with my approach and provide some important disclaimers.

This analysis is based on third-party SEO measurement data: organic traffic estimates and organic page count time series data from Ahrefs, corroborated against the Sistrix Visibility Index data to confirm broader visibility patterns. Top-traffic URLs were identified using Ahrefs’ top-pages export. Where I describe URL patterns or percentage changes, I am quoting directly from these third-party tools as of May 2026.

The dataset covers more than 220 client domains tracked across the publicly published customer-stories pages of over a dozen AI content platforms. For many of these sites, I narrowed the analysis to a specific subfolder where the AI-assisted content had been published, either identified directly in the case study itself or inferred from a sharp increase in new pages around the time of the case study’s publication.
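To illustrate what narrowing an analysis to a subfolder can look like in practice, here is a minimal Python sketch that groups a list of URLs by their first path segment and sums estimated traffic per folder. The `(url, estimated_traffic)` row shape and the sample values are hypothetical stand-ins, not the actual columns of any tool’s export.

```python
from collections import defaultdict
from urllib.parse import urlparse

def traffic_by_subfolder(rows):
    """Sum estimated traffic per first-level path segment.

    `rows` is an iterable of (url, estimated_traffic) pairs. This shape
    is an assumption for the sketch, not a real export format.
    """
    totals = defaultdict(int)
    for url, traffic in rows:
        segments = [s for s in urlparse(url).path.split("/") if s]
        # URLs with fewer than two path segments (homepage, /pricing)
        # are grouped under the site root.
        folder = f"/{segments[0]}/" if len(segments) > 1 else "/"
        totals[folder] += traffic
    return dict(totals)

# Made-up sample rows for a hypothetical site.
sample = [
    ("https://example.com/", 1200),
    ("https://example.com/pricing", 300),
    ("https://example.com/blog/tool-a-vs-tool-b", 900),
    ("https://example.com/blog/what-is-rag", 450),
    ("https://example.com/glossary/crawl-budget", 80),
]
print(traffic_by_subfolder(sample))
```

A folder that holds a large share of pages but a shrinking share of traffic is a reasonable candidate for a closer look.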

The analysis, conclusions, and recommendations throughout this piece reflect my own professional opinions based on more than a decade of helping companies recover from Google algorithm updates. Other SEO/GEO practitioners may disagree with my findings and approaches, and individual sites and strategies will always have their own context.

3 Important Disclaimers About This Data:

First, these are third-party estimates, not first-party analytics. They are well-validated tools in the SEO industry, but they are not perfect measurements of organic search performance.

Second, the traffic declines described here could reflect many factors, including but not limited to algorithmic adjustments by Google, on-site changes by the site operators themselves, off-site competitive dynamics, brand changes, acquisitions, seasonality, and changes to internal site architecture. I am not asserting that any AI content tool directly caused any traffic outcome described in this piece. I am describing a correlation observed across many listed sites that share similar content patterns and organic traffic trajectories.

Third, vendors and specific domains are deliberately not named here. The pattern is the story, not the specific actors. Any resemblance to a specific company, vendor, or case study is incidental to the broader pattern described.

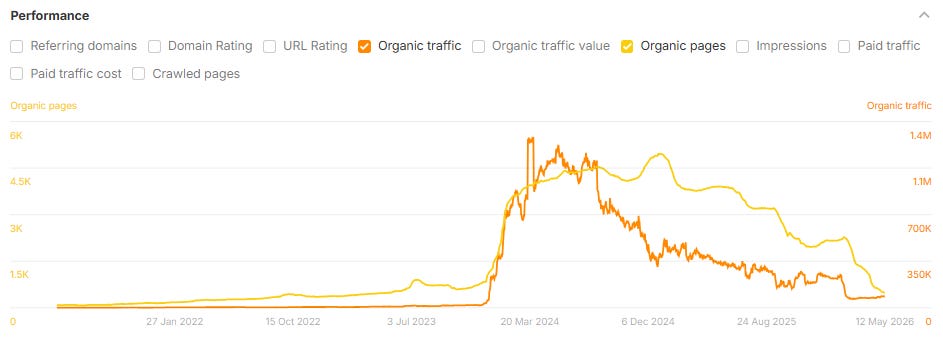

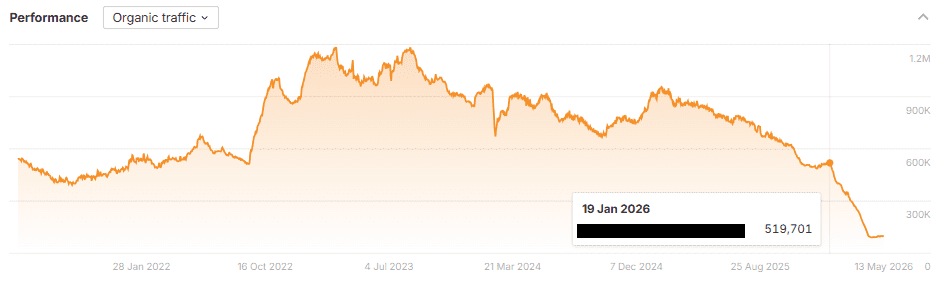

What The Data Shows: Rapid Growth Before A Steep Decline

If there is one thing the data makes clear, it is this: scaling content production with AI is not a low-risk strategy for organic search. It can produce real short-term gains in both SEO and AI search (LLMs use search engines), but across this dataset, those gains have rarely held. In many cases, the eventual loss has exceeded the initial gain, leaving traffic below where it started.

Across the group of 220+ sites and subfolders I analyzed:

- 54% lost 30% or more of their peak organic traffic.

- 39% lost 50% or more.

- 22% lost 75% or more.

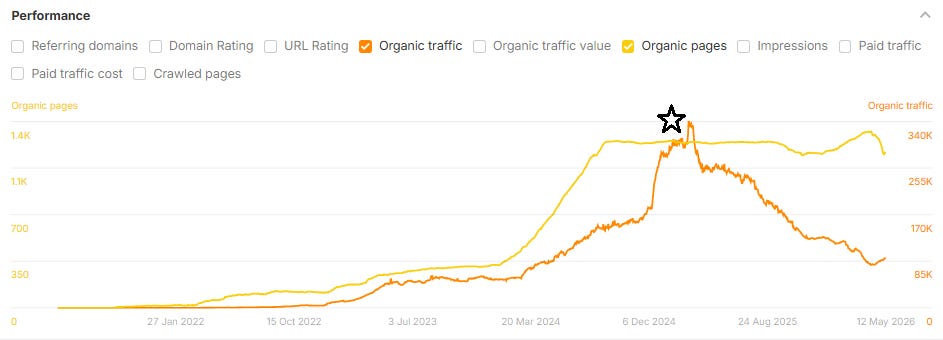
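For readers who want to run a similar check on their own time series, the sketch below shows one way to compute a peak-to-latest decline and the share of sites at or above each threshold. It assumes the decline is measured from each site’s peak to its most recent value and that the buckets are cumulative (a site that lost 75% also counts toward the 30% and 50% groups), which the descending percentages above suggest. The site names and monthly figures are made up.

```python
def peak_decline(series):
    """Fractional drop from the series' peak to its most recent value."""
    peak = max(series)
    if peak <= 0:
        return 0.0
    return (peak - series[-1]) / peak

def share_at_or_above(declines, threshold):
    """Share of sites whose decline is at least `threshold`."""
    return sum(d >= threshold for d in declines) / len(declines)

# Made-up monthly traffic estimates for four hypothetical sites.
sites = {
    "site-a": [100, 400, 900, 600, 200],
    "site-b": [500, 800, 1000, 950, 900],
    "site-c": [50, 300, 1200, 700, 480],
    "site-d": [200, 250, 300, 350, 400],
}
declines = [peak_decline(s) for s in sites.values()]
for threshold in (0.30, 0.50, 0.75):
    share = share_at_or_above(declines, threshold)
    print(f"lost {threshold:.0%} or more: {share:.0%}")
```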

Within those declines, a recurring trajectory appears: rapid growth in organic pages over six to 12 months; an organic traffic peak within roughly three to six months of the content peak; and then a steep decline in traffic that erases most of the gain (and frequently drops below the prior baseline) within the following year.

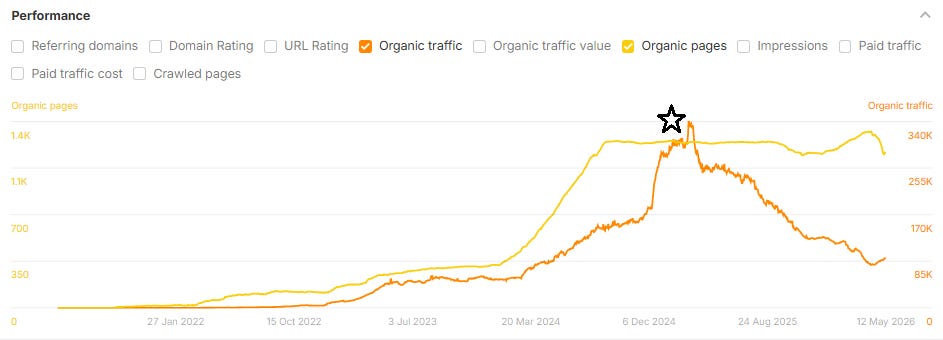

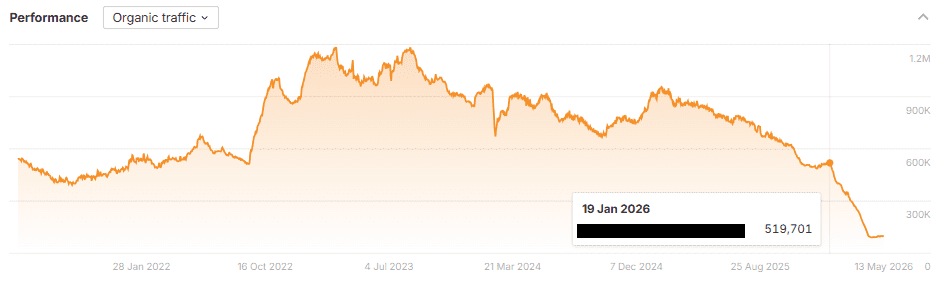
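The growth-then-decline trajectory described above can be summarized with a few numbers per site. The following Python sketch is one hedged way to do that: it reports the lag between the page-count peak and the traffic peak, how much of the traffic gain was later erased, and whether traffic ended below its starting level. Treating the first month as the “prior baseline” is an assumption of this sketch, and the sample series are invented.

```python
def describe_trajectory(pages, traffic):
    """Summarize equal-length monthly series of page counts and traffic.

    The first month is treated as the pre-scaling baseline, which is an
    assumption of this sketch.
    """
    page_peak = pages.index(max(pages))
    traffic_peak = traffic.index(max(traffic))
    baseline, latest = traffic[0], traffic[-1]
    gain = traffic[traffic_peak] - baseline
    lost = traffic[traffic_peak] - latest
    return {
        # Positive when traffic peaks after the page count does.
        "lag_months": traffic_peak - page_peak,
        # Values above 1.0 mean the loss exceeded the gain.
        "share_of_gain_erased": lost / gain if gain > 0 else 0.0,
        "below_baseline": latest < baseline,
    }

# Invented 12-month series for a hypothetical subfolder.
pages = [100, 300, 600, 900, 1200, 1500, 1500, 1400, 1300, 1200, 1000, 900]
traffic = [1000, 1500, 2500, 4000, 6000, 8000, 9000, 9500, 10000, 6000, 2000, 800]
print(describe_trajectory(pages, traffic))
```

In this invented example, traffic peaks three months after the page count and ends below its starting level, so the share of gain erased is greater than 1.0.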

Most of these traffic drops took place after the case studies were published (which also makes me wonder whether the case studies themselves could be contributing to the declines). In the example below, the case study was published in January 2025, indicated by the black star:

I am also continuously monitoring changes to organic page growth and organic traffic to these sites and subfolders over time. Looking at the updated data, a substantial number of these brands appear to have sharply reduced their content footprints in 2025 and 2026, often removing, redirecting, or 410’ing many of the same pages featured as success stories in published case studies. This could explain the recent drop in pages (yellow line) shown in the above screenshot (and potentially, the corresponding increase in organic search traffic).

In many cases, these case studies remain published to this day, but the pages they reference do not.

The Familiar Rank & Tank Playbook

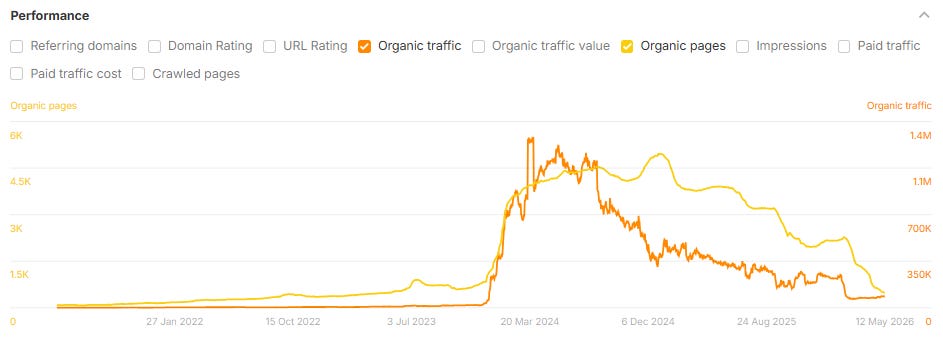

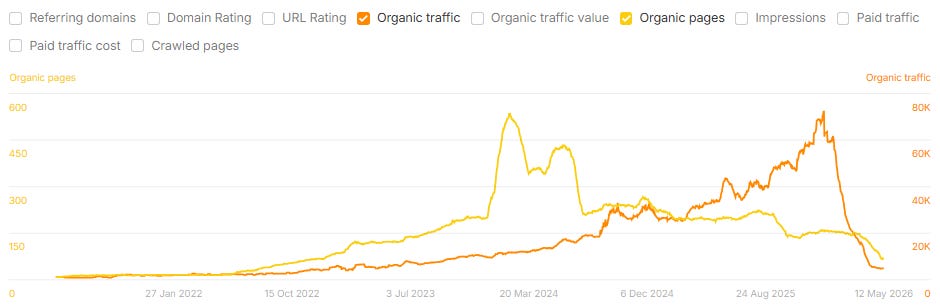

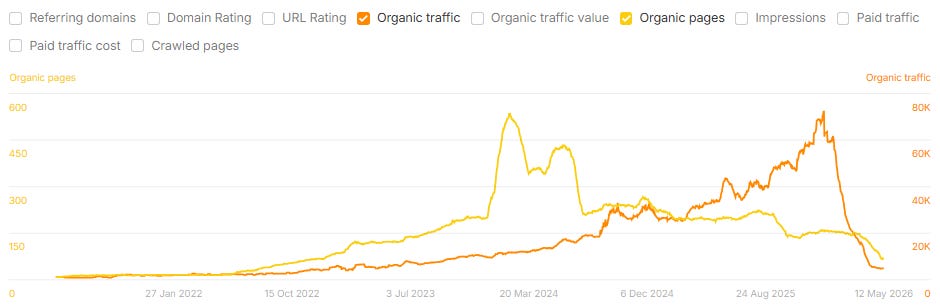

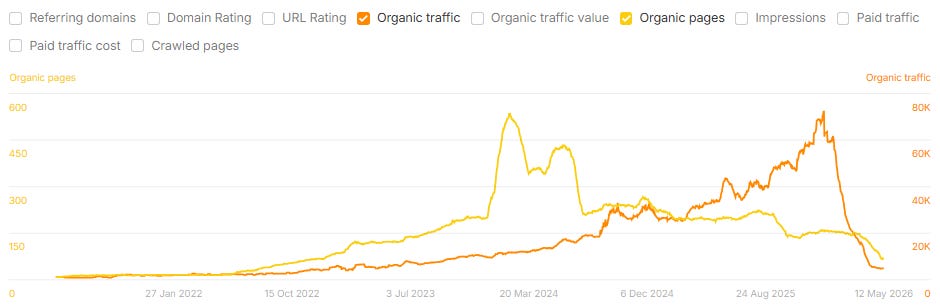

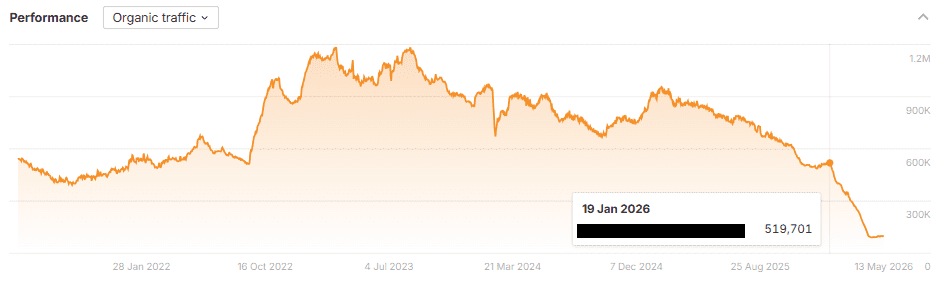

When a site starts seeing traffic drops due to sitewide content quality issues, it’s rarely a gentle decline. Glenn Gabe’s label for the resulting shape is “Mount AI”: steep growth, followed by a similarly steep drop-off in organic traffic once Google’s systems have gathered enough signals to identify what is going on.

Below are several examples of case study sites that used AI to scale content creation and saw massive drops in organic traffic after their case studies were published:

This pattern is consistent across industries, including cybersecurity, travel, marketing, SaaS, healthcare, B2B services, crypto, and consumer goods, and it shows up across vendors.

The shape of the line in the chart is similar to trajectories we have seen among many sites affected by Google’s algorithm updates in recent years. It is the same boom-bust cycle the SEO industry has watched repeatedly in different forms, accelerated this time by the speed at which AI tools have enabled site owners to scale content.

The SEO Industry Just Went Through This

What is hard to overstate is just how recently the SEO industry watched a near-identical cycle play out. Many SEOs and site owners are still licking their wounds from a brutal round of Google updates and new spam policies that obliterated many sites’ traffic a few years back.

In September 2023, Google launched the Helpful Content Update, the most aggressive crackdown it had done in years against content that, according to its announcement, “feels like it was created for search engines instead of people.”

Roughly six months later, in March 2024, it followed up with the longest core update in Google’s history, which Google states was designed to “reduce unhelpful, unoriginal content in search results by 45%.” Across two consecutive update cycles, Google’s stated target was the same thing: content produced at scale, regardless of whether the production method was human, AI, or a combination of both.

Alongside the March 2024 update, Google formalized a new spam policy called “Scaled Content Abuse,” explicitly naming the practice it was working to suppress: generating many pages to manipulate search rankings, regardless of authorship.

The SEO industry is still working through the collateral damage from those updates, including significant losses for many small publishers, some of whom were publishing original, human-written content but used excessive SEO frameworks that the updates likely flagged. The casualty list also included some publishers who had partnered with ad networks and other emerging tools offering AI content creation and scaling as a service.

Many sites affected by the HCU haven’t recovered to this day, despite their enormous efforts. I spent significant time in 2024 working and speaking with many site owners trying to dig themselves out of that hole.

Having spent hundreds of hours analyzing and presenting about those two major updates, I can say that the content I am seeing published with many of these new AI tools often looks and feels a lot like the exact type of content that was wiped off the map with these 2023 and 2024 Google updates.

8 Recurring Content Patterns That Are Risky For SEO And AI Search

So, what types of content am I seeing published by companies using AI tools to build articles that I believe are ultimately risky for SEO? I believe the answer lies in page templates that aim to influence SEO rankings, AI search responses, and/or citations in AI search, but are highly formulaic and easily repeatable by competitors.

What starts as a genuine attempt to build helpful content (and score a mention or citation) becomes a footprint Google can easily detect once enough sites publish similar pages. With AI, flooding the index with tens or hundreds of thousands of these similar pages is easier than ever.

This is exactly what Google means when it talks about writing for search engines, not humans.

Reviewing top-traffic URLs across the declining domains, eight distinct content templates appear repeatedly. Most sites seeing declines in the analysis use some combination of at least three or four. The most aggressive ones use all eight. Typically, affected sites also have hundreds or thousands of these articles, which amplifies the problem and generally leads to steeper traffic losses.

1. Comparison Pages At Scale

Pattern: /blog/[product-A]-vs-[product-B] published at scale across most reasonable head-to-head matchups in a category. Observed across the dataset for product-vs-product pairings, framework-vs-framework pairings, and, in at least one case, concept-vs-concept pairings unrelated to the publisher’s actual business.

2. The “What Is X” Glossary

Single-term, single-question pages designed to be cited by AI engines. Pattern: /resources/what-is-[term] or /glossary/[term]. Observed across the dataset, including programmatic glossaries scaled across multiple languages from a single source template. Scaling translations with AI and without human review can also frequently lead to sitewide content quality issues.

3. The “Best [X] For [Y]” Listicle

The most familiar AI-content template, with origins in the affiliate-content era. This pattern was observed across the dataset in both broad-category and narrow-niche variants.

4. The Self-Promotional Listicle

A variant of No. 3 in which the publisher is itself a competitor in the category being ranked, and frequently ranks itself No. 1 among competitors. These pages generally lack real evidence that the company tested all of the competitors in the list, which Google recommends for review pages.

I wrote about this “listicle” page template causing SEO/GEO issues in February 2026, when I found that many companies publishing dozens, hundreds, or even thousands of self-promotional listicles saw extreme traffic drops beginning on the same day (approximately Jan. 21, 2026). This pattern was observed across multiple sites in the dataset, most aggressively in B2B services.

5. The Competitor-Vs-Alternatives Page

Pattern: /blog/[competitor-brand]-alternatives, or, in the more programmatic form, dedicated landing pages built for every named competitor in a category. This approach was observed extensively across the dataset, including one case where the majority of a site’s top traffic pages were dedicated to individual competitor brand names.

6. Programmatic Location And Language Scaling

This is one of the oldest tricks in the SEO book, and one that I’ve seen sites get in trouble for with algorithm updates for at least 10 years. The approach: Use one template multiplied across every geography or language a search engine will index, with very little unique content per local landing page.

In many cases, the company publishing these pages does not have real brick-and-mortar locations in each of the neighborhoods, cities, or states the pages target.

This page type was observed across the dataset, including state-by-state content, country-by-country service pages, and the multilingual programmatic glossaries described above.

7. The FAQ Farm

Each page answers exactly one question. Pattern: /faq/[full-question]. Designed for extraction by AI engines: a clear question in the URL, the answer in the first paragraph, bullet points in the body, schema markup at the bottom.

The problem? This approach creates a lot of low-quality content and baggage for the site when implemented at scale. Scaling FAQs was also observed extensively across the dataset, including in industries where the templated tone was a noticeable mismatch with the publisher’s brand context.

Here is a screenshot of my March 2024 Amsive article advising against the same exact thing:

It’s also worth noting that just last week, Google announced it was deprecating FAQ Rich Results, which I believe might have something to do with this new influx of FAQ schema aimed at trying to earn citations and mentions in AI search.

8. Off-Topic Content Published At Scale

Publishing off-topic content at high volumes, with no apparent connection to the publisher’s actual business, is one of the fastest ways to get in trouble with search engine algorithms. This was also a huge problem during the Helpful Content Update and the March 2024 Core Update, when many sites were experimenting with publishing off-topic content, like funny quotes, jokes, baby names, horoscopes, and other high-volume articles that weren’t actually topically relevant for the publisher.

This method was used across multiple sites in the dataset, including pieces on entertainment topics on a services platform, lists of names and jokes, social-media memes on B2B websites, and historical or biographical content on business-focused sites.
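Several of the templates above leave a recognizable URL footprint, so a rough first-pass audit can be scripted. The Python sketch below tags URL paths against illustrative regexes for the comparison, glossary, “best X for Y,” alternatives, and FAQ patterns. The regexes are assumptions: the `best-[x]-for-[y]` slug in particular is a guess at how such listicles are commonly named, and self-promotional, location-scaled, or off-topic pages generally cannot be identified from the URL alone.

```python
import re
from collections import Counter

# Illustrative regexes only; real sites vary their URL structure.
TEMPLATES = [
    ("alternatives", re.compile(r"/[^/]*-alternatives/?$")),
    ("comparison", re.compile(r"/[^/]+-vs-[^/]+/?$")),
    ("glossary", re.compile(r"/glossary/[^/]+/?$|/what-is-[^/]+/?$")),
    ("best_x_for_y", re.compile(r"/best-[^/]+-for-[^/]+/?$")),
    ("faq", re.compile(r"/faq/[^/]+/?$")),
]

def classify(path):
    """Return the first matching template label, or "other"."""
    for label, pattern in TEMPLATES:
        if pattern.search(path):
            return label
    return "other"

# Hypothetical paths, e.g. taken from a top-pages export.
paths = [
    "/blog/tool-a-vs-tool-b",
    "/resources/what-is-crawl-budget",
    "/glossary/canonical-tag",
    "/blog/best-crm-for-startups",
    "/blog/acme-alternatives",
    "/faq/how-do-i-reset-my-password",
    "/pricing",
]
counts = Counter(classify(p) for p in paths)
templated_share = 1 - counts["other"] / len(paths)
print(counts, f"{templated_share:.0%} templated")
```

A high templated share is not proof of a problem, but it flags where a manual quality review is worth the time.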

The Late January 2026 Unconfirmed Google Update

A secondary pattern appears in the data around late January 2026: a wave of sites with explicitly GEO-optimized, self-promotional listicles, plus other risky SEO/GEO approaches, saw organic traffic declines between 40% and 95% over the January-April 2026 window.

Google did not announce or confirm an update by name in January 2026, but at least 40 sites I identified saw a negative trend beginning around Jan. 20, 2026. In many cases, the impact was isolated to the company’s blog or another subfolder containing a lot of new SEO-driven content. My analysis found that some of these companies were scaling dozens, hundreds, or even thousands of these self-promoting listicles, in which they ranked their own company No. 1 against competitors.

I suspect this adjustment was just the start of Google (and likely the LLM providers building on top of search) demoting this type of content in search results, and the impact appears to have extended beyond the listicles themselves. For affected sites, the entire blog or subfolder containing these articles often also saw declines. In other cases, the impact carried across the full domain.

How To Use AI Content Tools Safely

I do believe there is a way to use AI content tools safely, and a way for these tools to support the creation of high-quality content. The tools themselves are not the problem, but the implementation can be. I believe these tools should be used and overseen by experienced SEO professionals who understand the landscape of content approaches that Google has grown extremely sophisticated at penalizing and demoting over the past 10+ years. The problem often stems from a “set it and forget it” approach, or when the goal is to scale as many pages as quickly as possible without human review.

Using AI content tools for research, organization, content briefs, pulling in proprietary company data and insights, and more can be invaluable for speeding up the content creation process. But when articles are simply published “for SEO/GEO” without consideration of the risks involved with search engine ranking systems, the well-intentioned content can actually backfire for both SEO and AI search.

To perform well, I recommend that any AI-assisted content still demonstrate E-E-A-T and add original or unique information above and beyond what is offered by competing pages (information gain). Publishers should also consider being transparent about the use of AI to create the content (which is recommended by Google).

The Bottom Line

If there is one takeaway from monitoring these 220+ sites over the past several months, it’s that the playbooks being sold as “AI-first SEO” or “GEO-optimized content at scale” look remarkably similar to the playbooks that got sites flattened by the Helpful Content Update and the March 2024 Core Update. The packaging is new, but the pattern is not.

Across the dataset, the brands still growing are generally the ones whose content does not match the eight templates above. Many brands that scaled into those templates are the ones now removing pages, redirecting subfolders, and taking other steps to try to mitigate recent losses in traffic.

If you’re currently evaluating an AI content vendor, or running a program in-house, here are a few practical questions I think are worth asking before publishing another page:

- Does this page actually exist because a real customer or reader needs it, or because a search engine or LLM might cite it?

- Could a competitor publish a near-identical version of this page tomorrow using the same prompt?

- Would I be comfortable if Google, a journalist, or my own customers saw the full list of URLs in this subfolder?

- Is the article inherently biased, and if so, is the page transparent with users about those biases?

- Is there any first-party data, expertise, or original perspective on this page that isn’t available on the first ten results already ranking for the query?

None of this means AI content tools are unusable. They can be genuinely useful for research, briefs, internal data synthesis, and accelerating workflows where a human expert is still in the loop. The trouble starts when the goal becomes volume, or when the people closest to the content stop reviewing what is going out the door.

The SEO industry has already lived through this cycle once in the last few years. The sites that came out of it best were the ones that prioritized quality, originality, and topical focus over scale. I expect the same to be true of this cycle, and I’ll keep tracking the data as it plays out.

This post was originally published on Lily Ray NYC Substack.

Featured Image: Stokkete/Shutterstock