Google Explains How CDNs Impact Crawling & SEO via @sejournal, @martinibuster

Google published an explainer on how Content Delivery Networks (CDNs) affect search crawling, how they can improve SEO, and how they can sometimes cause problems.

What Is A CDN?

A Content Delivery Network (CDN) is a service that caches a web page and serves it from the data center that’s closest to the browser requesting that web page. Caching a web page means that the CDN creates a copy of the page and stores it. This speeds up web page delivery because the page is now served from a server that’s closer to the site visitor, requiring fewer “hops” across the Internet between the server and the destination (the site visitor’s browser).

CDNs Unlock More Crawling

One of the benefits of using a CDN is that Google automatically increases the crawl rate when it detects that web pages are being served from a CDN. This makes using a CDN attractive to SEOs and publishers who want to increase the number of pages that are crawled by Googlebot.

Normally, Googlebot reduces how much it crawls from a server when it detects that its requests are reaching a threshold that causes the server to slow down. This reduction in crawling is called throttling. The threshold for throttling is higher when a CDN is detected, which results in more pages being crawled.

Something to understand about serving pages from a CDN is that the first time a page is requested, it must be served directly from your origin server. Google uses an example of a site with over a million web pages:

“However, on the first access of a URL the CDN’s cache is “cold”, meaning that since no one has requested that URL yet, its contents weren’t cached by the CDN yet, so your origin server will still need serve that URL at least once to “warm up” the CDN’s cache. This is very similar to how HTTP caching works, too.

In short, even if your webshop is backed by a CDN, your server will need to serve those 1,000,007 URLs at least once. Only after that initial serve can your CDN help you with its caches. That’s a significant burden on your “crawl budget” and the crawl rate will likely be high for a few days; keep that in mind if you’re planning to launch many URLs at once.”
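The cold-cache behavior Google describes can be illustrated with a toy model. The sketch below is not how any particular CDN works; `ToyCDN` and `origin` are hypothetical names, and the cache is a plain dictionary with no expiry. It only shows that every URL reaches the origin once before the cache can help.

```python
class ToyCDN:
    """Minimal in-memory edge cache in front of a hypothetical origin."""

    def __init__(self, origin_fetch):
        self.origin_fetch = origin_fetch  # callable: url -> response body
        self.cache = {}
        self.origin_hits = 0

    def get(self, url):
        if url not in self.cache:
            # Cold cache: the origin has to serve this URL once.
            self.origin_hits += 1
            self.cache[url] = self.origin_fetch(url)
        return self.cache[url]


def origin(url):
    # Stand-in for the real origin server (assumption for illustration).
    return f"<html>content for {url}</html>"


cdn = ToyCDN(origin)
urls = [f"/product/{i}" for i in range(1000)]

for url in urls:  # first crawl: every request reaches the origin
    cdn.get(url)
first_pass_hits = cdn.origin_hits

for url in urls:  # second crawl: everything is served from the cache
    cdn.get(url)
second_pass_hits = cdn.origin_hits - first_pass_hits

print(first_pass_hits, second_pass_hits)  # 1000 0
```

Scaled up to the million-plus URLs in Google’s example, the first pass is the load that the origin server has to absorb on its own.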

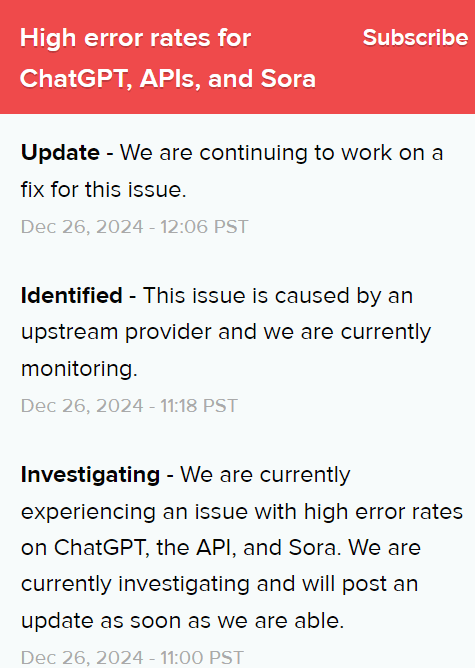

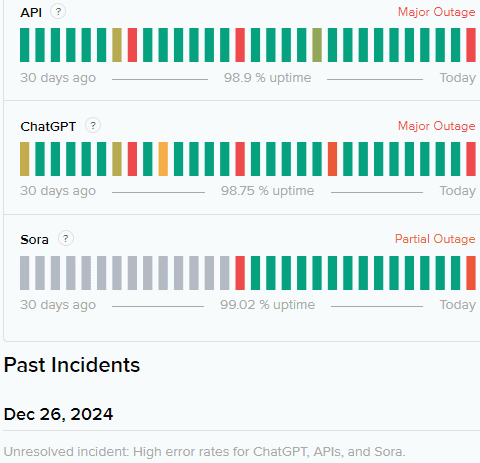

When Using CDNs Backfires For Crawling

Google advises that there are times when a CDN may put Googlebot on a blocklist and subsequently block crawling. Google describes two kinds of blocks:

1. Hard blocks

2. Soft blocks

Hard blocks happen when a CDN responds with a server error. One bad server error response is a 500 (internal server error), which signals that a major problem is happening with the server. Another is a 502 (bad gateway). Both of these responses will trigger Googlebot to slow down the crawl rate. Indexed URLs are saved internally at Google, but continued 500/502 responses can cause Google to eventually drop the URLs from the search index.

The preferred response is a 503 (service unavailable), which indicates a temporary error.

Another hard block to watch out for is what Google calls “random errors,” which is when a server sends a 200 response code (meaning the response was good) even though it’s actually serving an error page. Google will interpret those error pages as duplicates and drop them from the search index. This is a big problem because it can take time to recover from this kind of error.
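One rough way to spot this kind of response during your own checks is to flag pages that return a 200 status but whose body reads like an error page. The sketch below is only a heuristic under stated assumptions: `ERROR_MARKERS` and `looks_like_soft_error` are hypothetical names, and the marker phrases are examples that would need tuning for a real site.

```python
# Phrases that often appear on error pages (illustrative, not exhaustive).
ERROR_MARKERS = (
    "internal server error",
    "bad gateway",
    "something went wrong",
)


def looks_like_soft_error(status_code, body):
    """Return True when a 200 response appears to carry an error page."""
    if status_code != 200:
        # A real error status is not a "random error" in this sense.
        return False
    text = body.lower()
    return any(marker in text for marker in ERROR_MARKERS)


print(looks_like_soft_error(200, "<h1>502 Bad Gateway</h1>"))    # True
print(looks_like_soft_error(200, "<h1>Blue widgets on sale</h1>"))  # False
print(looks_like_soft_error(503, "<h1>Bad Gateway</h1>"))        # False
```

A simple phrase match will produce false positives on pages that legitimately discuss errors, so treat any hit as a prompt for manual review.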

A soft block can happen if the CDN shows one of those “Are you human?” pop-ups (bot interstitials) to Googlebot. Bot interstitials should send a 503 server response so that Google knows that this is a temporary issue.

Google’s new documentation explains:

“…when the interstitial shows up, that’s all they see, not your awesome site. In case of these bot-verification interstitials, we strongly recommend sending a clear signal in the form of a 503 HTTP status code to automated clients like crawlers that the content is temporarily unavailable. This will ensure that the content is not removed from Google’s index automatically.”
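As a minimal sketch of that recommendation, the function below builds a bot-verification response with a 503 status. The function name, the HTML body, and the 120-second `Retry-After` value are assumptions for illustration; `Retry-After` is a standard HTTP header but is not mentioned in Google’s quote, and how a real CDN or WAF is configured to do this depends on the vendor.

```python
from http import HTTPStatus


def interstitial_response(retry_after_seconds=120):
    """Build a bot-verification response that signals a temporary condition."""
    status = HTTPStatus.SERVICE_UNAVAILABLE  # 503, not 200
    headers = {
        "Content-Type": "text/html; charset=utf-8",
        # Optional hint to clients about when to try again (assumed value).
        "Retry-After": str(retry_after_seconds),
    }
    body = "<html><body>Checking that you are human...</body></html>"
    return int(status), headers, body


status, headers, body = interstitial_response()
print(status, headers["Retry-After"])  # 503 120
```

The point is the status code: the interstitial page itself can look however the CDN wants, as long as automated clients receive a 503 rather than a 200.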

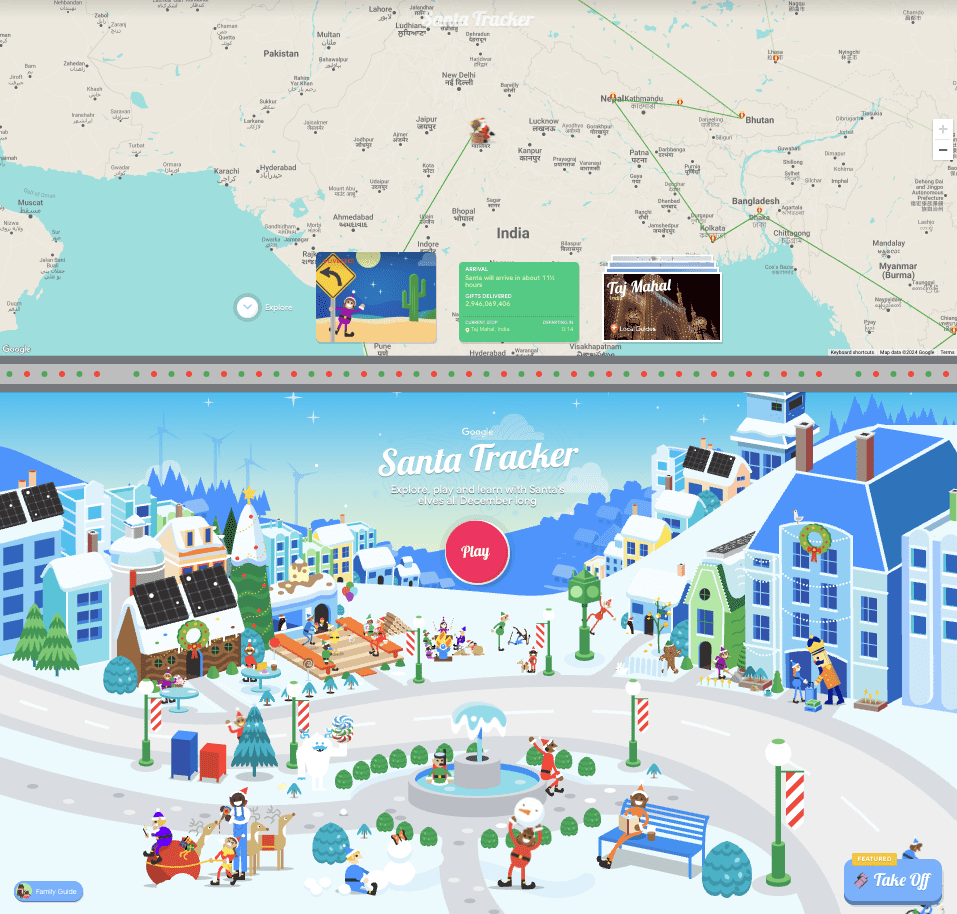

Debug Issues With URL Inspection Tool And WAF Controls

Google recommends using the URL Inspection Tool in Search Console to see how the CDN is serving your web pages. If the CDN’s firewall, called a Web Application Firewall (WAF), is blocking Googlebot by IP address, you should be able to check the blocked IP addresses and compare them to Google’s official list of IPs to see if any of them is on the list.

Google offers the following CDN-level debugging advice:

“If you need your site to show up in search engines, we strongly recommend checking whether the crawlers you care about can access your site. Remember that the IPs may end up on a blocklist automatically, without you knowing, so checking in on the blocklists every now and then is a good idea for your site’s success in search and beyond. If the blocklist is very long (not unlike this blog post), try to look for just the first few segments of the IP ranges, for example, instead of looking for 192.168.0.101 you can just look for 192.168.”
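The comparison between a WAF blocklist and Google’s published IP ranges can be sketched with Python’s standard `ipaddress` module. This is an illustration under assumptions: the `prefixes` / `ipv4Prefix` / `ipv6Prefix` layout is assumed to match the JSON file Google publishes, the two ranges in `SAMPLE_GOOGLEBOT_RANGES` are sample data that should be replaced with the current official list, and the blocklist entries are made up.

```python
import ipaddress

# Sample data only; load the current official list in real use.
SAMPLE_GOOGLEBOT_RANGES = {
    "prefixes": [
        {"ipv4Prefix": "66.249.64.0/27"},
        {"ipv6Prefix": "2001:4860:4801:10::/64"},
    ]
}


def load_networks(data):
    """Turn the assumed JSON layout into ip_network objects."""
    networks = []
    for entry in data["prefixes"]:
        cidr = entry.get("ipv4Prefix") or entry.get("ipv6Prefix")
        if cidr:
            networks.append(ipaddress.ip_network(cidr))
    return networks


def blocked_crawler_ips(blocklist, networks):
    """Return blocklist entries that fall inside any crawler range."""
    hits = []
    for raw in blocklist:
        addr = ipaddress.ip_address(raw)
        if any(addr.version == net.version and addr in net for net in networks):
            hits.append(raw)
    return hits


waf_blocklist = ["203.0.113.9", "66.249.64.5", "2001:db8::1"]  # hypothetical
networks = load_networks(SAMPLE_GOOGLEBOT_RANGES)
print(blocked_crawler_ips(waf_blocklist, networks))  # ['66.249.64.5']
```

Matching against full CIDR ranges is stricter than the prefix shortcut Google mentions for scanning a long blocklist by eye, so it is better suited to an automated, recurring check.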

Read Google’s documentation for more information:

Crawling December: CDNs and crawling

Featured Image by Shutterstock/JHVEPhoto