How To Get The Perfect Budget Mix For SEO And PPC via @sejournal, @brookeosmundson

There’s no one-size-fits-all answer when it comes to deciding how much of your marketing budget should go toward SEO versus PPC.

But that doesn’t mean the decision should be based on gut instinct or what your competitors are doing.

Marketing leaders are under more pressure than ever to show a return on every dollar spent.

So, it’s not about choosing one over the other. It’s about finding the right balance based on your goals, your timelines, and what kind of results the business expects to see.

This article walks through how to think about budget allocation between SEO and PPC with a focus on what kind of output you can reasonably expect for your spend.

What You’re Actually Paying For

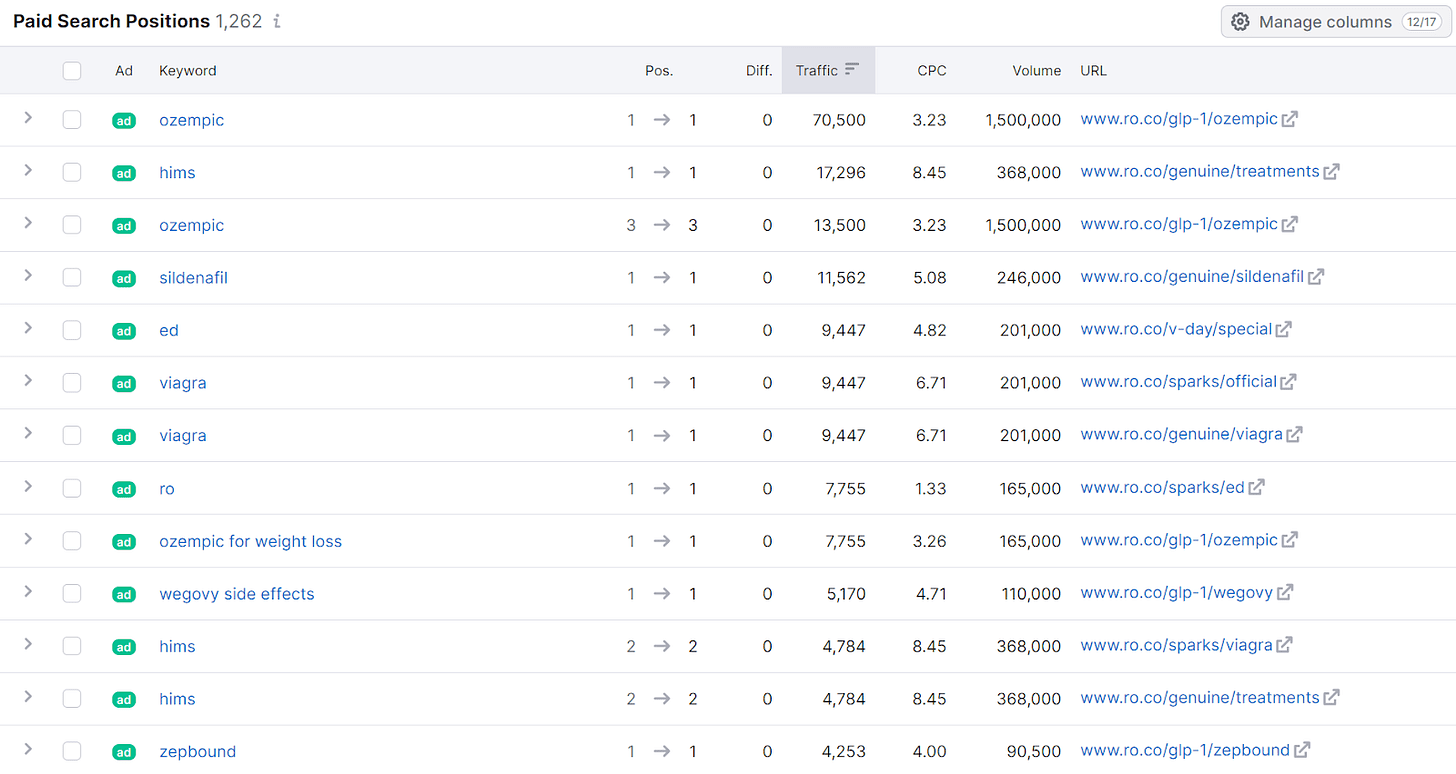

When you spend money on PPC, you’re buying immediate visibility.

Whether it’s Google Ads, Microsoft Ads, or paid social, you’re paying for clicks, impressions, and leads right now.

That cost is largely predictable and easier to forecast. For example, if your cost-per-click (CPC) is $3 and your budget is $10,000, you can expect about 3,300 clicks.
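As a quick sanity check, the click estimate above is just budget divided by CPC. The sketch below assumes a flat average CPC across the whole budget, which real campaigns rarely hold to exactly; the function name is only illustrative.

```python
def expected_clicks(budget: float, cpc: float) -> int:
    """Estimate paid clicks as budget divided by average cost per click.

    Assumes a constant average CPC, which is a simplification.
    """
    if cpc <= 0:
        raise ValueError("cpc must be positive")
    # Floor division: you cannot buy a fraction of a click.
    return int(budget // cpc)


# The article's example: $10,000 budget at a $3 CPC.
clicks = expected_clicks(budget=10_000, cpc=3.00)
print(clicks)  # 3333, i.e. "about 3,300 clicks"
```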

PPC spend can be directly tied to pipeline, which is why it’s often favored by performance-driven teams.

With SEO, you’re investing in long-term growth. You’re paying for content, technical fixes, site structure improvements, and link acquisition.

But you don’t pay for clicks or impressions. Once rankings improve, those clicks come organically.

The upside is compounding growth and reduced cost per lead over time.

The downside? It can take months to see meaningful impact, and the cost-to-output ratio is harder to predict.

It’s also worth noting that PPC costs often increase with competition, while SEO costs tend to remain relatively stable over time. That can make SEO more scalable in the long term, especially for brands in high-CPC industries.

How Urgency And Goals Influence Budget Splits

If you need leads or traffic now, PPC should probably get the bulk of your short-term budget.

Launching a new product? Trying to meet quarterly goals? Paid search and social can give you the volume you need pretty quickly.

But if you’re trying to reduce customer acquisition cost (CAC) in the long run or improve visibility in organic search to support brand awareness, SEO deserves more attention. It builds value over time and often pays dividends past the life of your campaign.

Many brands start with a 70/30 or 60/40 split favoring PPC, then shift the mix as organic efforts gain traction.

Just make sure you set clear expectations: SEO is not a quick fix, and over-promising short-term gains can backfire when the board wants results next quarter.

If you’re rebranding, expanding into new markets, or supporting a product launch, a heavier upfront PPC investment makes sense. But brands that already rank well organically or have strong content foundations can afford to rebalance the mix in favor of SEO.

Why Organic Traffic Is Getting Harder To Defend

One emerging challenge for organic marketing is the rise of AI Overviews in Google Search. More brands are seeing a dip in organic traffic even when they maintain strong rankings.

Why?

Because the search experience is shifting. AI-generated summaries are now answering questions directly on the results page, often pushing traditional organic listings further down.

That means your SEO strategy can’t just be about rankings anymore. You need to invest in content that earns visibility in AI Overviews, featured snippets, and other enhanced search features.

This may involve rethinking how content is structured, focusing more on schema markup, FAQs, and direct-answer formats that AI models tend to surface.

In practical terms, your SEO budget should now include:

- Structured content planning built around entity-based search.

- Technical SEO improvements like schema and page speed.

- Multimedia content like images and videos, which AI often pulls into results.

- Continual refresh of older content to maintain relevance in evolving search formats.

This shift doesn’t mean SEO is no longer worth it. It means you need to be more strategic in how you spend.

Ask your SEO partner or in-house team how they’re adapting to AI search changes, and make sure your budget reflects that evolution.

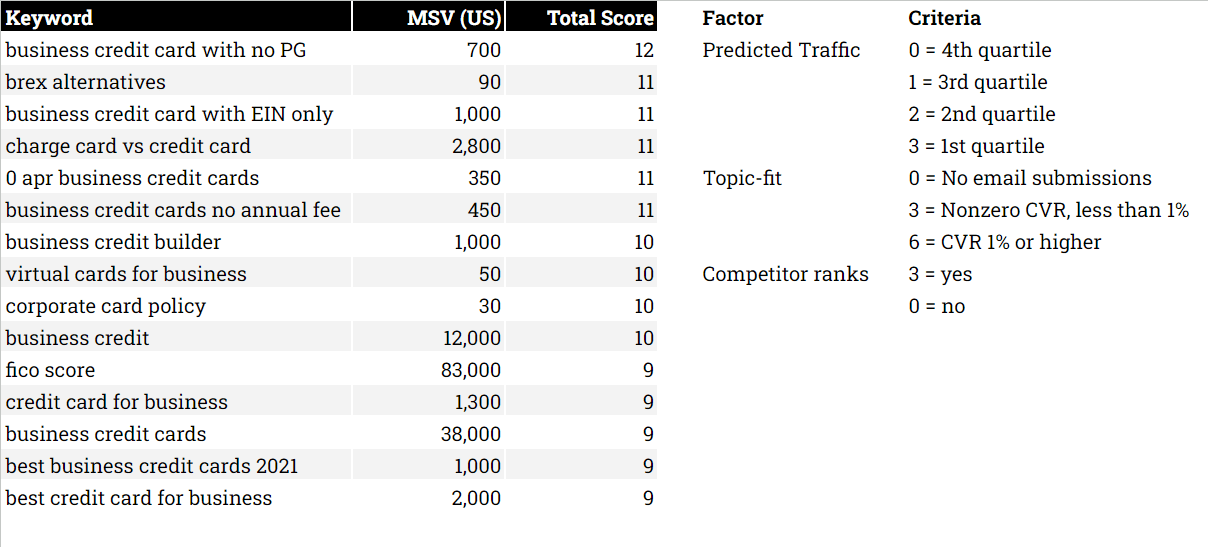

Budget Planning Based On Realistic Outputs

Let’s put this into numbers. Say you have a $100,000 annual digital marketing budget.

Putting $80,000 toward PPC might get you 25,000 paid clicks and 500 conversions (based on a fictional $3.20 CPC and 2% conversion rate).

The remaining $20,000 on SEO might buy you four high-quality articles a month, technical clean-up work, and backlink outreach.

If done well, this might start showing traction in three to six months and bring in sustained traffic over time.
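The paid side of this example can be checked with a few lines of arithmetic. This is a minimal sketch using the fictional $3.20 CPC and 2% conversion rate from above; the class and function names are illustrative, and the cost per conversion is simply derived from those same figures.

```python
from dataclasses import dataclass


@dataclass
class PpcForecast:
    spend: float
    clicks: float
    conversions: float
    cost_per_conversion: float


def forecast_ppc(spend: float, cpc: float, conversion_rate: float) -> PpcForecast:
    """Project clicks and conversions from spend, assuming flat CPC and conversion rate."""
    clicks = spend / cpc
    conversions = clicks * conversion_rate
    cost_per_conversion = spend / conversions if conversions else float("inf")
    return PpcForecast(spend, clicks, conversions, cost_per_conversion)


total_budget = 100_000
ppc_budget = 80_000
seo_budget = total_budget - ppc_budget  # the remaining $20,000

forecast = forecast_ppc(ppc_budget, cpc=3.20, conversion_rate=0.02)
print(f"Paid clicks: {forecast.clicks:,.0f}")
print(f"Conversions: {forecast.conversions:,.0f}")
print(f"Cost per conversion: ${forecast.cost_per_conversion:,.2f}")
print(f"SEO budget: ${seo_budget:,.0f}")
```

The SEO side is left as a plain dollar figure on purpose: its output depends on rankings and timing that a simple division can't capture.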

The key is to model your budget around what’s actually possible for each channel, not just what you hope will happen. SEO efforts often have a longer lag time, but PPC campaigns can run out of gas as soon as you turn off the spend.

You should also budget for maintenance and reinvestment. Even strong SEO performance requires fresh content and updates to keep rankings.

Similarly, PPC campaigns need regular optimization, creative testing, and bid adjustments to stay efficient.

You should also plan for budget allocation across different campaign types: brand vs. non-brand, search vs. display, and prospecting vs. retargeting.

Each serves a different purpose, and over-investing in one without supporting the others can limit growth.

For example, allocating part of your PPC budget to retargeting warm audiences can drastically improve efficiency compared to cold prospecting alone.

While branded search often delivers low-cost conversions, it shouldn’t be your only area of investment if you’re trying to scale.

What To Communicate To Leadership

Leadership wants to know two things: how much are we spending, and what are we getting in return?

A mixed SEO and PPC strategy gives you the ability to answer both.

PPC provides short-term wins you can report on monthly.

SEO builds long-term momentum that pays off in quarters and years.

Explain that PPC is more like a faucet you control. SEO is more like building your own well. Both are valuable.

But if you only have one or the other, you’re either stuck renting traffic or waiting too long to see the impact.

Board members and non-marketing executives often prefer hard numbers. So, when proposing a budget mix, include projected costs per acquisition, estimated traffic volumes, and timelines for ramp-up.

Make it clear where each dollar is going and what kind of return is expected.

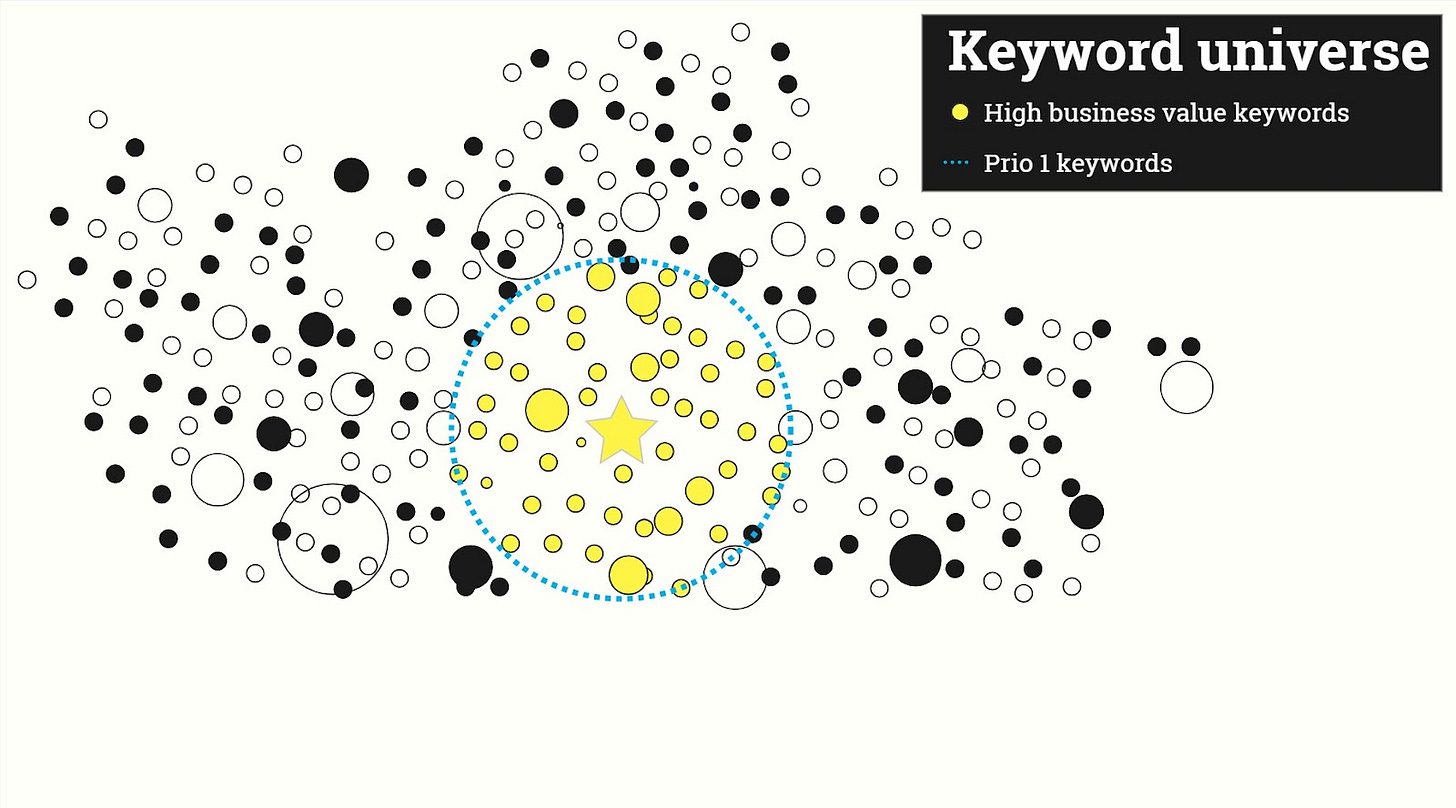

If possible, create a model that shows various scenarios: for example, what a 50/50 split versus a 70/30 split favoring PPC might look like in terms of conversions, traffic, and cost per lead over time.

Visuals help ground the conversation in data rather than preference.
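A scenario model like this doesn't need to be elaborate. The sketch below compares two splits over 12 months. The PPC inputs reuse the fictional $3.20 CPC and 2% conversion rate from earlier; every SEO parameter (the three-month lag, visits per dollar, and organic conversion rate) is a hypothetical placeholder that you would replace with your own data.

```python
def run_scenario(total_budget, ppc_share, months=12, cpc=3.20, ppc_cvr=0.02,
                 seo_lag_months=3, seo_visits_per_dollar=0.05, seo_cvr=0.02):
    """Project cumulative conversions for one budget split.

    All SEO defaults are hypothetical assumptions, not benchmarks.
    """
    ppc_monthly = total_budget * ppc_share / months
    seo_monthly = total_budget * (1 - ppc_share) / months
    cumulative = []
    running = 0.0
    for month in range(1, months + 1):
        # PPC: conversions track this month's spend directly.
        ppc_conversions = ppc_monthly / cpc * ppc_cvr
        # SEO: each month of investment starts producing visits only after
        # the lag, then keeps producing them (a crude compounding effect).
        matured_months = max(0, month - seo_lag_months)
        seo_visits = seo_monthly * matured_months * seo_visits_per_dollar
        seo_conversions = seo_visits * seo_cvr
        running += ppc_conversions + seo_conversions
        cumulative.append(running)
    cost_per_lead = total_budget / running if running else float("inf")
    return {
        "cumulative_conversions": cumulative,
        "total_conversions": running,
        "cost_per_lead": cost_per_lead,
    }


for label, share in [("50% PPC / 50% SEO", 0.50), ("70% PPC / 30% SEO", 0.70)]:
    result = run_scenario(100_000, share)
    print(f"{label}: {result['total_conversions']:.0f} conversions, "
          f"${result['cost_per_lead']:.2f} per lead")
```

The results are only as good as the assumptions, so it helps to show leadership the inputs alongside the outputs and rerun the model as real performance data comes in.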

Choosing The Right Metrics For Each Channel

One challenge with mixed-channel budget planning is deciding which key performance indicators (KPIs) to prioritize.

PPC is easier to measure in terms of direct return on investment (ROI), but SEO plays a broader role in business success.

For PPC metrics, you may want to focus on KPIs like:

- Impression share.

- Conversion rate.

- Cost per acquisition (CPA).

- Return on ad spend (ROAS).
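These four PPC metrics are simple ratios. Here is a minimal sketch using their standard definitions; the revenue and impression figures in the example are hypothetical inputs chosen only to illustrate the math.

```python
def ppc_kpis(spend, clicks, conversions, revenue, impressions, eligible_impressions):
    """Compute common PPC KPIs from raw campaign totals."""
    return {
        # Share of the impressions you were eligible for that you actually received.
        "impression_share": impressions / eligible_impressions,
        # Share of clicks that turned into conversions.
        "conversion_rate": conversions / clicks,
        # Cost per acquisition: spend divided by conversions.
        "cpa": spend / conversions,
        # Return on ad spend: revenue divided by spend.
        "roas": revenue / spend,
    }


kpis = ppc_kpis(
    spend=80_000,
    clicks=25_000,
    conversions=500,
    revenue=240_000,              # hypothetical
    impressions=400_000,          # hypothetical
    eligible_impressions=1_000_000,  # hypothetical
)
for name, value in kpis.items():
    print(f"{name}: {value:.2f}")
```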

For SEO metrics, you may want to focus on:

- Organic traffic growth over time.

- Ranking improvements.

- Page engagement.

- Assisted conversions.

When reporting to leadership, show how the two channels complement each other.

For example, paid search might drive immediate clicks, but your top-converting landing page could rank organically and reduce spend over time.

When To Adjust Your Budget Mix

Your initial budget allocation isn’t set in stone. It should evolve based on performance data, market shifts, and internal needs.

If PPC costs rise but conversion rates drop, that could be a cue to pull back and invest more in organic.

If you’re seeing strong rankings but low engagement, it may be time to shift some SEO funds into conversion rate optimization (CRO) or paid retargeting.

Seasonality and campaign cycles also matter. Retailers may lean heavily on PPC during Q4, while B2B companies might invest more in SEO during longer sales cycles.

Set quarterly review points where you re-evaluate performance and make adjustments. That level of agility shows leadership you’re making informed decisions, not just sticking to arbitrary ratios.
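The two performance signals described above could be encoded as a simple check to run at each quarterly review. This is only a sketch: the function name and both thresholds are hypothetical, and the output is a prompt for discussion rather than an automatic reallocation.

```python
def review_signals(cpc_change, cvr_change, avg_rank, engagement_rate,
                   strong_rank_threshold=5.0, low_engagement_threshold=0.30):
    """Flag budget-mix adjustments worth discussing this quarter.

    cpc_change and cvr_change are quarter-over-quarter changes (0.10 = +10%).
    Both thresholds are hypothetical defaults; tune them to your own data.
    """
    suggestions = []
    # Rising PPC costs combined with falling conversion rates.
    if cpc_change > 0 and cvr_change < 0:
        suggestions.append("Consider pulling back on PPC and investing more in organic.")
    # Strong rankings (a lower position number is better) but weak engagement.
    if avg_rank <= strong_rank_threshold and engagement_rate < low_engagement_threshold:
        suggestions.append("Consider shifting some SEO funds into CRO or paid retargeting.")
    return suggestions


for suggestion in review_signals(cpc_change=0.10, cvr_change=-0.05,
                                 avg_rank=3.0, engagement_rate=0.20):
    print(suggestion)
```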

Avoiding Common Budget Mistakes

Some companies go all-in on SEO, expecting miracles. Others burn through paid budgets with nothing left to sustain organic efforts. Both approaches are risky.

A healthy mix means budgeting for:

- Immediate lead gen (PPC).

- Long-term traffic growth (SEO).

- Regular testing and performance analysis.

Don’t forget to budget for what happens after the click: landing page development, CRO, and reporting tools that tie it all together.

Another mistake is treating SEO as a one-time project instead of an ongoing investment. If you only fund it during a site migration or a content sprint, you’ll lose momentum.

The same goes for PPC: without a proper landing page experience or conversion tracking, even high-performing ads won’t deliver meaningful results.

Balancing Short-Term Wins With Long-Term Growth

There is no universal perfect split between SEO and PPC. But there is a perfect mix for your goals, stage of growth, and available resources.

Take the time to assess what you actually need from each channel and what you can realistically afford. Make sure your projections align with internal timelines and expectations.

And most importantly, keep reviewing your mix as performance data rolls in. The right budget allocation today might look very different six months from now.

Smart marketing leaders don’t choose sides. They choose what makes sense for the business today, and build flexibility into their strategy for tomorrow.

Featured Image: Jirapong Manustrong/Shutterstock