The Pentagon is planning for AI companies to train on classified data, defense official says

The Pentagon is discussing plans to set up secure environments for generative AI companies to train military-specific versions of their models on classified data, MIT Technology Review has learned.

AI models like Anthropic’s Claude are already used to answer questions in classified settings; applications include analyzing targets in Iran. But allowing models to train on and learn from classified data would be a new development that presents unique security risks. It would mean sensitive intelligence like surveillance reports or battlefield assessments could become embedded into the models themselves, and it would bring AI firms into closer contact with classified data than before.

Training versions of AI models on classified data is expected to make them more accurate and effective in certain tasks, according to a US defense official who spoke on background with MIT Technology Review. The news comes as demand for more powerful models is high: The Pentagon has reached agreements with OpenAI and Elon Musk’s xAI to operate their models in classified settings and is implementing a new agenda to become “an ‘AI-first’ warfighting force” as the conflict with Iran escalates. (The Pentagon did not comment on its AI training plans as of publication time.)

Training would be done in a secure data center that’s accredited to host classified government projects, and where a copy of an AI model is paired with classified data, according to two people familiar with how such operations work. Though the Department of Defense would remain the owner of the data, personnel from AI companies might in rare cases access the data if they have appropriate security clearance, the official said.

Before allowing this new training, though, the official said, the Pentagon intends to evaluate how accurate and effective models are when trained on nonclassified data, like commercially available satellite imagery.

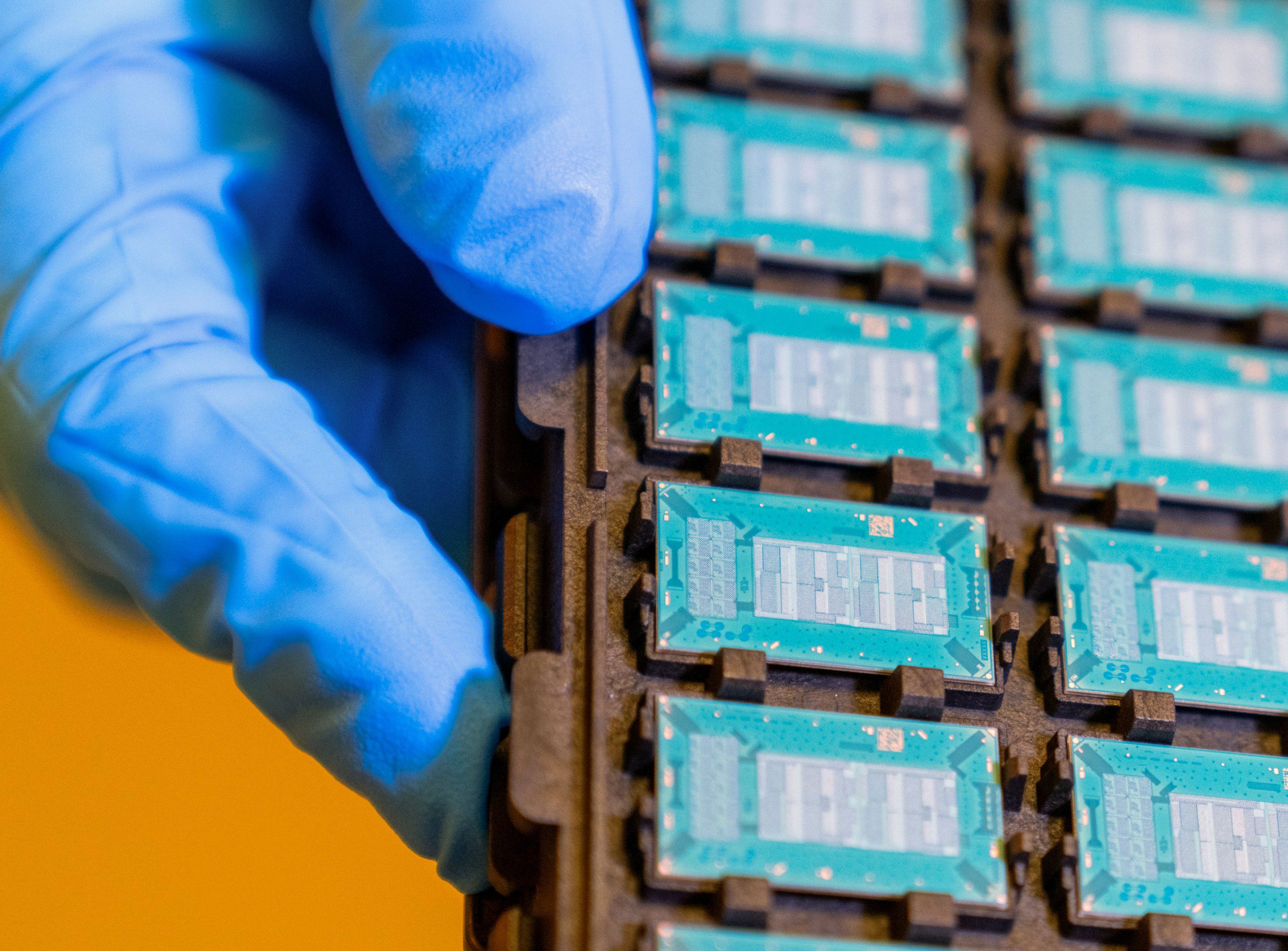

The military has long used computer vision models, an older form of AI, to identify objects in images and footage it collects from drones and airplanes, and federal agencies have awarded contracts to companies to train AI models on such content. And AI companies building large language models (LLMs) and chatbots have created versions of their models fine-tuned for government work, like Anthropic’s Claude Gov, which are designed to operate across more languages and in secure environments. But the official’s comments are the first indication that AI companies building LLMs, like OpenAI and xAI, could train government-specific versions of their models directly on classified data.

Aalok Mehta, who directs the Wadhwani AI Center at the Center for Strategic and International Studies and previously led AI policy efforts at Google and OpenAI, says training on classified data, as opposed to just answering questions about it, would present new risks.

The biggest of these, he says, is that classified information these models train on could be resurfaced to anyone using the model. That would be a problem if lots of different military departments, all with different classification levels and needs for information, were to share the same AI.

“You can imagine, for example, a model that has access to some sort of sensitive human intelligence—like the name of an operative—leaking that information to a part of the Defense Department that isn’t supposed to have access to that information,” Mehta says. That could create a security risk for the operative, one that’s difficult to perfectly mitigate if a particular model is used by more than one group within the military.

However, Mehta says, it’s not as hard to keep information contained from the broader world: “If you set this up right, you will have very little risk of that data being surfaced on the general internet or back to OpenAI.” The government has some of the infrastructure for this already; the security giant Palantir has won sizable contracts for building a secure environment through which officials can ask AI models about classified topics without sending the information back to AI companies. But using these systems for training is still a new challenge.

The Pentagon, spurred by a memo from Defense Secretary Pete Hegseth in January, has been racing to incorporate more AI. The technology has been used in combat, where generative AI has ranked lists of targets and recommended which to strike first, and in more administrative roles, like drafting contracts and reports.

There are lots of tasks currently handled by human analysts that the military might want to train leading AI models to perform and that would require access to classified data, Mehta says. That could include learning to identify subtle clues in an image the way an analyst does, or connecting new information with historical context. The classified data could be pulled from the unfathomable amounts of text, audio, images, and video, in many languages, that intelligence services collect.

It’s really hard to say which specific military tasks would require AI models to train on such data, Mehta cautions, “because obviously the Defense Department has lots of incentives to keep that information confidential, and they don’t want other countries to know what kind of capabilities we have exactly in that space.”

If you have information about the military’s use of AI, you can share it securely via Signal (username jamesodonnell.22).