For roughly two decades, the SEO discipline operated on a quiet assumption that turned out to be one of its most valuable features. Guidance from one search engine traveled. If Google said sitemaps mattered, Bing said sitemaps mattered. If Bing said structured data deserved real effort, Google said the same. Practitioners optimized for Google with reasonable confidence that the work would carry across the other engines, and most of the time it did. That portability was not luck. It was the product of a structurally large overlap layer that the major search engines had jointly built, brick by brick, over twenty years.

That world doesn’t exist in LLM-land. The major providers train on different corpora, run different crawlers under different policies, route different queries through different retrieval systems, and apply different alignment processes that shape the final response in ways the upstream signals can’t predict. Guidance from any one provider, including Google’s guidance about its own Gemini products, is one data point. Practitioners carrying the SEO habit forward, the habit of treating one engine’s guidance as roughly the whole map, will optimize confidently for one platform and miss the others.

Sidebar: As I was finalizing this piece, Google published fresh guidance on optimizing for their generative AI features. Their framing is explicit: from Google Search’s perspective, optimizing for AI search is still SEO. That framing is accurate for Google Search. It does not extend to ChatGPT, Claude, Perplexity, or any other LLM, and that is precisely the trap this article is about.

The Shared Standards That Made SEO Guidance Portable

The era of portable guidance was built on actual collaboration, not coincidence. The Sitemaps protocol became the joint property of Google, Yahoo, and Microsoft in November 2006, when the three engines formally agreed to support a common protocol at version 0.90, building on Google’s earlier Sitemaps 0.84 from June 2005. Five years later, on June 2, 2011, the same three engines launched Schema.org, with Yandex joining shortly after, to create a common vocabulary for structured data markup. That was the announcement that got made on stage at SMX Advanced. I was on the Bing team at the time, and what struck me then is what still matters now. The engines were competitors, but they had decided that a shared vocabulary served them all. Webmasters got one set of rules. The web got cleaner data. The engines got better signals. Everybody won.

The pattern repeated with robots.txt, the 1994 convention that became RFC 9309 at the IETF in 2022, formalizing what every serious crawler already honored. And it repeated again, more recently, with IndexNow, the protocol Microsoft Bing and Yandex launched in October 2021. IndexNow is now supported by Bing, Yandex, Naver, Seznam, and Yep. Google has been testing the protocol since 2021 but has not adopted it.

That overlap layer is exactly why Google’s guidance felt safe to follow, even if you cared about Bing traffic. The signals the engines used were not identical, but the inputs they accepted, the protocols they honored, and the standards they advertised were. Optimization had a shared substrate.

Where The LLM Stacks Actually Diverge

The LLM environment doesn’t have a shared substrate of comparable size. The differences are not cosmetic, and they are not temporary. They are baked into how the systems are built.

Start with training data. OpenAI has signed disclosed licensing deals with News Corp worth up to $250 million over five years, Axel Springer at roughly $13 million per year, Reddit at an estimated $70 million per year, plus the Financial Times, Condé Nast, Hearst, Vox Media, The Atlantic, the Associated Press, Le Monde, and others. Google has its own Reddit deal, estimated at $60 million per year, granting real-time data API access. Anthropic has not publicly disclosed equivalent publisher licensing deals, and that undisclosed status is itself the practitioner-facing point. The corpora that fed these models, and that continue to refresh them, are not the same documents. Practitioners cannot know what any given provider has paid for and what it hasn’t.

The crawler infrastructure diverges next. OpenAI runs three separate bots: GPTBot for training, OAI-SearchBot for search indexing, and ChatGPT-User for user-initiated retrieval. Anthropic runs three of its own: ClaudeBot for training, Claude-SearchBot for search, and Claude-User for user-initiated retrieval. Perplexity runs PerplexityBot and Perplexity-User. Google introduced Google-Extended in September 2023 as the user-agent that controls whether Google can use a site’s content to train Gemini, separate entirely from the Googlebot that handles traditional search indexing. There is no single AI user-agent. Every provider requires a separate rule, and the rules don’t translate cleanly across providers because the bots don’t do equivalent jobs in equivalent ways.
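To make the per-provider point concrete, here is a minimal Python sketch built on a hypothetical robots.txt. The allow/deny choices are invented for illustration. Only the user-agent tokens come from the list above. It uses the standard library's urllib.robotparser to show that each token resolves to its own group, so a rule written for one provider's bot does nothing for another's. This only checks what the file says. Whether a given bot actually honors it, particularly the user-initiated fetchers, is up to each provider.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy for illustration only. The tokens are the ones the
# providers document; which ones are allowed or blocked is made up.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /

User-agent: ClaudeBot
Disallow: /

User-agent: Claude-SearchBot
Allow: /

User-agent: Claude-User
Allow: /

User-agent: PerplexityBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""

AGENTS = [
    "GPTBot", "OAI-SearchBot", "ChatGPT-User",
    "ClaudeBot", "Claude-SearchBot", "Claude-User",
    "PerplexityBot", "Perplexity-User",
    "Google-Extended", "Googlebot",
]


def check_access(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Return whether each user-agent token may fetch `url` under `robots_txt`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse from a string, no network fetch
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    results = check_access(ROBOTS_TXT, AGENTS, "https://example.com/blog/post")
    for agent, allowed in results.items():
        print(f"{agent:18} {'allowed' if allowed else 'blocked'}")
```

Two things fall out of this. Blocking PerplexityBot leaves Perplexity-User governed by the catch-all group. Disallowing Google-Extended has no effect on Googlebot. Each provider's tokens need their own lines.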

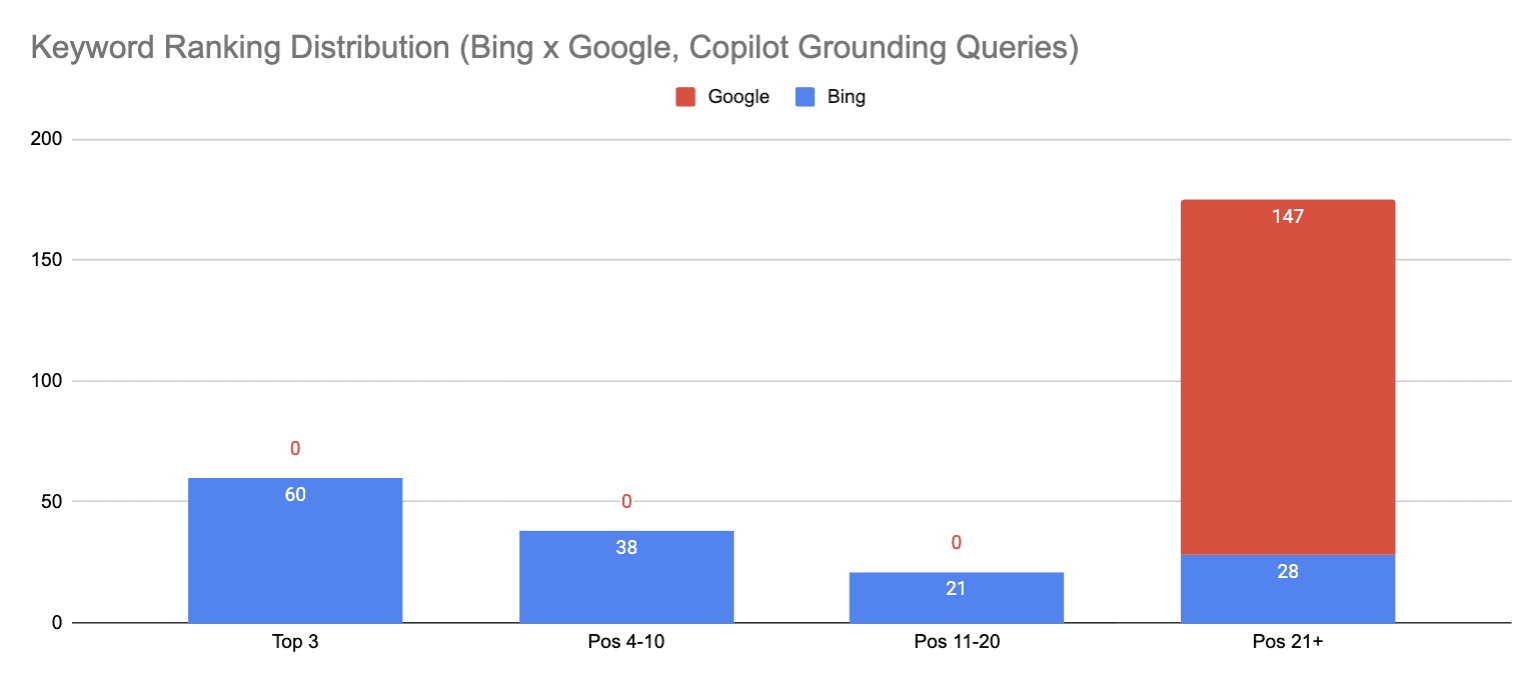

The retrieval architectures diverge structurally. ChatGPT has historically used Bing’s index as its primary web search source, and that connection appears to still be primary, though OpenAI continues to build out additional infrastructure alongside it. Perplexity built its retrieval system on a Vespa-based pipeline that treats documents and sub-document chunks as first-class retrievable units. Google’s Gemini uses Google’s own index plus Knowledge Graph grounding. Claude uses Brave Search as a retrieval partner. Same query, four different retrieval systems, four different views of which sources exist and which sources are worth surfacing.

Then comes the alignment layer, which is where SEO had no equivalent at all. After a model is trained on its corpus, providers run post-training to shape how the model actually behaves: tone, refusal patterns, format, safety posture, what counts as a good answer. OpenAI’s primary approach has been RLHF, or Reinforcement Learning from Human Feedback, where human raters score model outputs and the model learns to produce highly rated responses. Anthropic developed Constitutional AI, which trains models to critique and revise their own outputs against a written set of principles. These methodologies produce demonstrably different behavior in the final products. The same retrieved content, fed into two models aligned by two methodologies, can yield two materially different responses about the same brand.

When One Provider’s Guidance Demonstrably Fails To Port

The clearest single example of guidance that doesn’t port is llms.txt. Jeremy Howard of Answer.AI proposed the file in September 2024 as a markdown manifest, placed at a site’s root, that would guide LLMs to the most important content. The proposal got picked up across the SEO community. Yoast built a generator. Agencies added llms.txt creation to their service catalogs. Conference speakers declared it essential.

As of mid-2026, no major LLM provider has confirmed that it consumes the file. Not OpenAI. Not Anthropic. Not Google. Server-log analyses across hundreds of thousands of domains show major AI crawlers don’t routinely request /llms.txt at all. Google’s John Mueller publicly compared it to the deprecated meta keywords tag. Gary Illyes confirmed at Search Central Live in July 2025 that Google does not support llms.txt and is not planning to.
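If you want to check your own server against that finding, a rough first pass is to count which AI crawlers hit your site at all, and how many of those hits were for /llms.txt. The sketch below assumes access logs in Combined Log Format. The sample lines, IP addresses, and user-agent strings are made up for illustration and are not any provider's exact strings. User-agent headers can also be spoofed, so treat the counts as approximate.

```python
import re
from collections import Counter

# Combined Log Format:
# host ident user [time] "METHOD path PROTOCOL" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

# Substrings to look for in the User-Agent header. Google-Extended is left out
# because it is a robots.txt control token, not a separate crawler user-agent.
AI_TOKENS = (
    "GPTBot", "OAI-SearchBot", "ChatGPT-User",
    "ClaudeBot", "Claude-SearchBot", "Claude-User",
    "PerplexityBot", "Perplexity-User",
)


def ai_crawler_hits(lines, target_path="/llms.txt"):
    """Count requests per AI crawler token, overall and for `target_path`."""
    total, target = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match is None:
            continue  # skip lines that aren't in Combined Log Format
        ua = match.group("ua").lower()
        token = next((t for t in AI_TOKENS if t.lower() in ua), None)
        if token is None:
            continue  # not one of the AI crawlers tracked here
        total[token] += 1
        if match.group("path").split("?", 1)[0] == target_path:
            target[token] += 1
    return total, target


# Made-up sample lines; real user-agent strings vary by provider and version.
SAMPLE_LOG = [
    '203.0.113.5 - - [01/Jan/2026:12:00:00 +0000] "GET /blog/post HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.6 - - [01/Jan/2026:12:01:00 +0000] "GET /llms.txt HTTP/1.1" 404 0 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [01/Jan/2026:12:02:00 +0000] "GET /llms.txt HTTP/1.1" 200 812 "-" "curl/8.5.0"',
]

if __name__ == "__main__":
    total, target = ai_crawler_hits(SAMPLE_LOG)
    for token, count in total.items():
        print(f"{token:18} total={count} llms.txt={target[token]}")
```

Against a real log, passing an open file object works the same way, since iterating over it yields one line at a time.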

I’ve written about this elsewhere, so I won’t repeat the technicalities here. What matters for this argument is the structural lesson. Schema.org succeeded because three engines built it together and then enforced it together. The llms.txt file was proposed by one researcher, picked up by tooling vendors, and ignored by the platforms it was supposed to serve. The shared-standards model that gave SEO its portable guidance is not available to LLM practitioners at the same scale, because the platforms are not building the standards together. They are building their own pipelines.

The Gemini Inversion

The cleanest illustration of how far guidance portability has degraded sits inside one company. Google publishes its own SEO documentation at Search Central, the canonical guidance the industry has followed for two decades. Those documents emphasize traditional ranking signals, E-E-A-T, content quality, technical accessibility, and structured data. That guidance is still useful for Google Search itself.

Google also makes Gemini, the model that powers AI Overviews and Google’s separate AI Mode surface. And the citation behavior of those surfaces does not appear to track the guidance the same company publishes for its own search results.

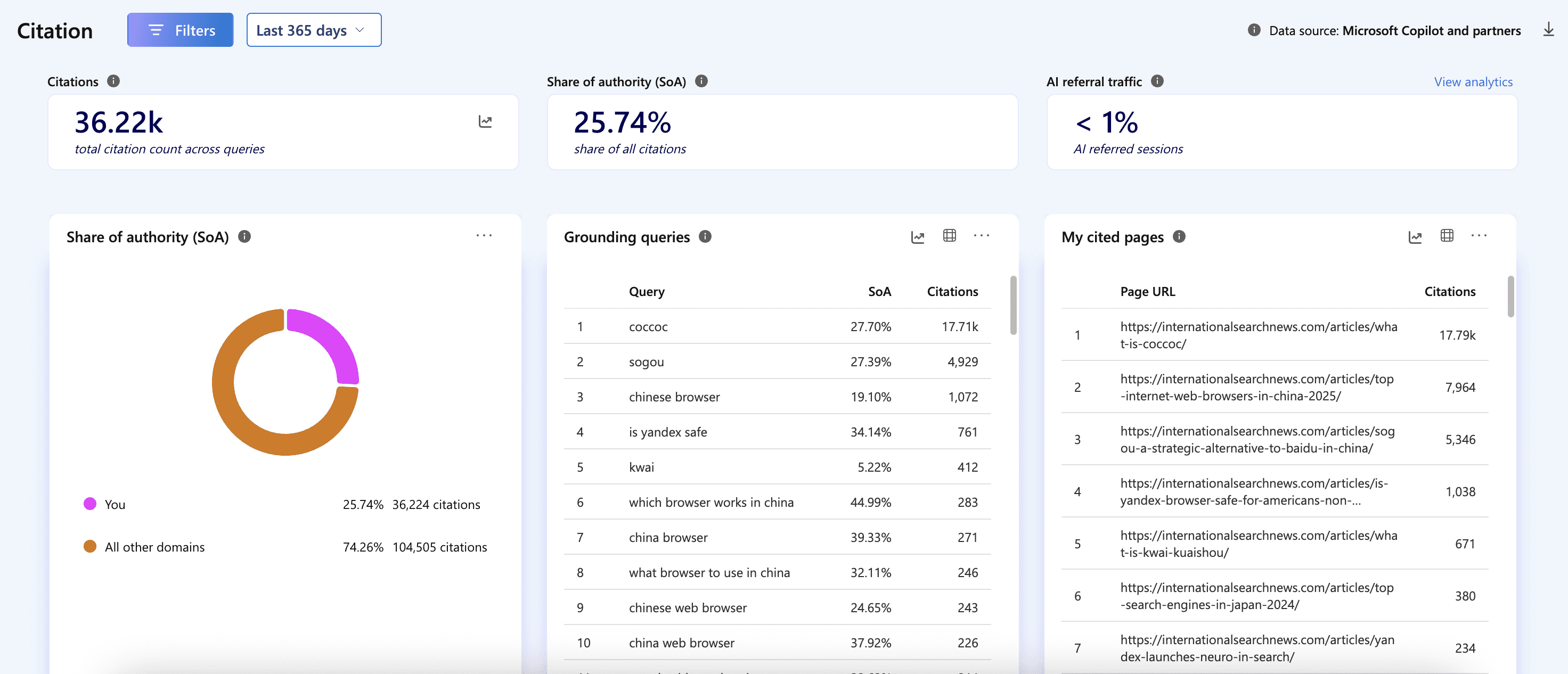

In late 2024, roughly three-quarters of pages cited in AI Overviews also ranked in Google’s top 12 for the same query. By early 2026, after Google upgraded AI Overviews to Gemini 3 in January, Ahrefs analyzed 4 million AI Overview URLs and found that only 38% of cited pages also appeared in the top 10 for the same query. A separate BrightEdge analysis put the overlap closer to 17%. SE Ranking’s post-upgrade work found that Gemini 3 replaced approximately 42% of the domains previously cited under earlier model versions and generates 32% more sources per response.

The gap widens further when you look at Google’s AI Mode, which is a separate conversational surface that runs on the same Gemini family. Semrush data shows AI Mode and AI Overviews reach semantically similar conclusions 86% of the time, but cite the same URLs only 13.7% of the time. Only 14% of AI Mode citations rank in Google’s traditional top 10.

It appears, so far, that the canonical relationship has shifted. Google’s published SEO guidance is still the cleanest path to ranking in Google Search. But that ranking is no longer a reliable proxy for being cited by Google’s own AI surfaces. The same guidance, the same content, the same domain, can produce three meaningfully different outcomes across Google Search, AI Overviews, and AI Mode, even though all three live inside the same company. The old playbook of following the search engine’s guidance and trusting that the engine’s other surfaces would behave consistently does not appear to be delivering the same returns it used to.

What Still Ports, And Why It’s Smaller Than It Looks

A universal layer does survive. Crawler accessibility still matters across every provider. Primary-source factual content still wins more citations than aggregator restatement. Clean retrievable structure still helps every system understand what a page is about. Presence on the high-authority sources that all major LLMs disproportionately cite (Wikipedia, YouTube, Reddit, major news outlets) still functions as a force multiplier across platforms. Earning visibility on those sources gives content a chance to surface in any LLM that draws on them.

But the universal layer is much smaller than it was in the SEO era. Qwairy’s analysis of 118,000 AI responses across ChatGPT, Perplexity, Google AI Mode, and Claude found that only 11% of cited domains appeared across multiple platforms. The other 89% were platform-specific. A brand that wins citations on Perplexity may be largely invisible on Claude. A brand that’s a regular reference on ChatGPT may not show up in AI Overviews at all. The same content can be the right answer for one system and the wrong answer for the system next to it.
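One way to measure this for your own brand is to record which domains each platform cites for the same set of prompts, then compute the share of cited domains that show up on more than one platform. The sketch below assumes you have already collected those citations. The platform data is hypothetical, and the metric is a simple definition of my own, not necessarily the methodology Qwairy used. Pairwise Jaccard overlap is included to show which pairs of platforms diverge most.

```python
from collections import Counter
from itertools import combinations


def cross_platform_share(citations: dict[str, set[str]]) -> float:
    """Share of distinct cited domains that appear on two or more platforms."""
    seen_on = Counter(domain for domains in citations.values() for domain in domains)
    if not seen_on:
        return 0.0
    shared = sum(1 for count in seen_on.values() if count >= 2)
    return shared / len(seen_on)


def pairwise_jaccard(citations: dict[str, set[str]]) -> dict[tuple[str, str], float]:
    """Jaccard overlap (|A ∩ B| / |A ∪ B|) of cited domains for each platform pair."""
    scores = {}
    for a, b in combinations(sorted(citations), 2):
        union = citations[a] | citations[b]
        scores[(a, b)] = len(citations[a] & citations[b]) / len(union) if union else 0.0
    return scores


# Hypothetical citations collected for one prompt set.
observed = {
    "ChatGPT": {"example-a.com", "wikipedia.org", "example-b.com"},
    "Perplexity": {"wikipedia.org", "example-c.com", "example-d.com"},
    "Claude": {"example-e.com", "example-f.com"},
}

if __name__ == "__main__":
    print(f"cross-platform share: {cross_platform_share(observed):.0%}")
    for pair, score in pairwise_jaccard(observed).items():
        print(pair, f"{score:.2f}")
```

With the sample data, only wikipedia.org is cited on more than one platform, so the share is 1 of 7 distinct domains, and Claude shares nothing with either of the others.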

What This Means For The Work

The practical implication is not that the situation is hopeless. It is that practitioners need to stop treating any single LLM provider’s guidance as the universal map and start treating it as one input among several. Read what every major provider publishes about their own systems. Test your visibility across platforms, not just on the platform you happen to use most. Treat divergence as the default and overlap as the exception, not the other way around.

This is not how SEO worked, and the difference matters. The old reflex was to optimize for Google and trust the portability. The new reality is that following one LLM’s guidance, even Google’s guidance about Gemini, will leave you optimized for a slice of the landscape and potentially blind to the rest. The discipline is being rebuilt on platform-specific work that didn’t exist in the SEO era, and the practitioners who recognize that first are going to spend the next two years setting the standards everyone else follows.

The overlap has shrunk. You now have more work than ever to accomplish.

If you have thoughts on where the divergence between providers is sharpest in your own work, reach out directly. I’d genuinely like to hear what’s showing up in the data.


This post was originally published on Duane Forrester Decodes.

Featured Image: Rawpixel.com/Shutterstock; Paulo Bobita/Search Engine Journal