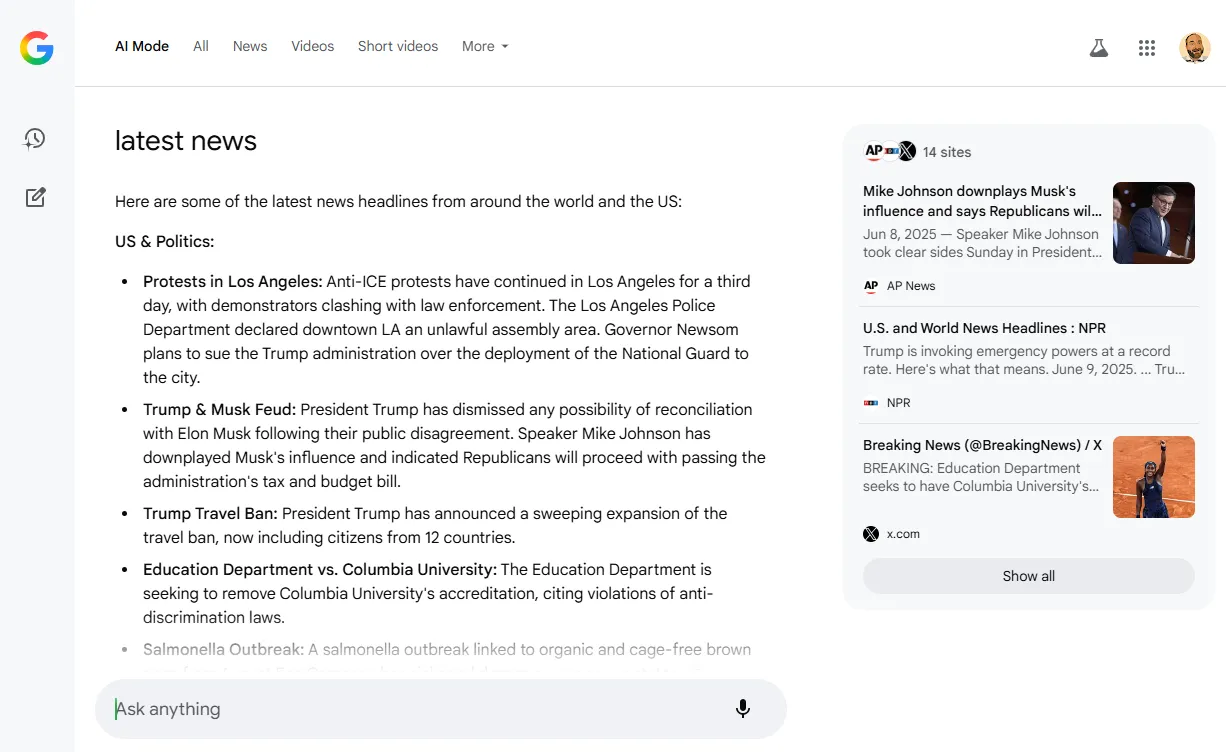

Global advertising expenditure has surpassed the $1 trillion mark for the first time.

Digital advertising continues to dominate this growth, with digital channels encompassing search and social media forecast to account for 72.9% of total ad revenue by the end of the year.

From a platform perspective, Google, Meta, Amazon, and Alibaba are expected to capture more than half of global ad revenues this year.

In-house and agency-side paid media teams are working harder than ever to grow ecommerce businesses efficiently, and the amount of data being used day-to-day (even hour-to-hour) is enormous.

With this growth and investment, something is clearly working. Brands can map new and returning audiences to their advertising funnel and serve ads across billions of auctions, so it’s a lever that millions of businesses pull.

However, with budgets being split across channels (search, social, out-of-home, etc.) and brands using CRM data, analytics platforms, third-party attribution tools, and more to define their “source of truth,” fragmentation begins to appear in reporting. Only 32% of executives feel they fully capitalize on their performance marketing data for this reason.

With data being spread across several sources, ad platforms having different attribution models, and the C-suite likely asking, “Which source of truth is correct?”, reporting paid media performance for ecommerce isn’t the most straightforward task.

This post digs into key performance indicators, platform attribution & modeling, business goals, and how to bring it all together for a holistic view of your advertising efficacy.

Key Performance Indicators (KPIs)

Navigating paid media reporting starts with the KPIs that each account optimizes toward and how these feed into channel performance.

Each KPI has a purpose, benefits, limitations, and practical use cases, and each should be viewed through the lens of the attribution model unique to each platform.

Short-Term Performance

Return On Ad Spend (ROAS)

- Definition: revenue/cost.

This metric measures the revenue generated for every dollar spent on advertising.

If your total ad cost was $1,000 and you drove $18,500 revenue, your ROAS would be 18.5.

- Benefits: Direct measure of advertising efficiency and helps provide a snapshot of campaign profitability.

- Limitations: Does not account for customer acquisition costs (CACs), margin, LTV, returns, shipping, etc.

Cost Per Acquisition (CPA)

- Definition: cost/number of sales (or leads).

This metric shows the average cost to generate a sale (or a lead, depending on the goal, e.g., an ecommerce brand could use CPA to measure the cost of signing up new customers for an event).

For example, if your total ad cost was $5,000 and you drove 180 sales, your CPA would be $27.78.

- Benefits: Easy to monitor over time and helps assess efficiency.

- Limitations: Neglects revenue, customer acquisition cost, margin, LTV, etc., and treats all sales equally regardless of value.

Cost Of Sale (CoS)

- Definition: total ad spend/revenue.

This metric measures what % of revenue is spent on advertising.

Say a brand spends $20,000 on Meta Ads and generates $100,000 in revenue; the resulting CoS would be 20%.

- Benefits: Useful for margin-sensitive businesses and marketplaces where prices and/or Average Order Value (AOV) are volatile.

- Limitations: Can mask unprofitable sales (in some scenarios) if margin, returns, shipping, etc., are not considered.
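
The three short-term formulas above can be sketched in a few lines of Python. This is a minimal illustration using the figures from the worked examples (with the CoS example treated as a single currency); the function names are made up for this sketch and don't come from any ad platform's API.

```python
def roas(revenue: float, ad_cost: float) -> float:
    """Return on ad spend: revenue generated per unit of ad cost."""
    return revenue / ad_cost


def cpa(ad_cost: float, conversions: int) -> float:
    """Cost per acquisition: average ad cost per sale (or lead)."""
    return ad_cost / conversions


def cos(ad_cost: float, revenue: float) -> float:
    """Cost of sale: share of revenue spent on advertising (the inverse of ROAS)."""
    return ad_cost / revenue


print(roas(18_500, 1_000))            # 18.5
print(round(cpa(5_000, 180), 2))      # 27.78
print(f"{cos(20_000, 100_000):.0%}")  # 20%
```

Note that CoS is simply 1/ROAS, so a 20% CoS and a ROAS of 5 describe the same performance from two angles.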

Mid-Term Efficiency

Customer Acquisition Cost (CAC)

- Definition: total marketing costs spent on acquiring new customers/total number of new customers.

- Detailed definition: total marketing costs spent on acquiring new customers + wages + software costs + agency/consultancy fees + overheads/total number of new customers.

This metric may reflect either marketing costs associated with driving new customer acquisition or a holistic view of all costs associated with acquiring new customers.

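Let’s say a business has a CAC of $175 and an AOV of $58. Revenue from a new customer only covers the acquisition cost after roughly three orders ($175/$58 ≈ 3), and it takes more once margin is factored in, so repeat purchases are what make acquisition profitable.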

- Benefits: Holistic view of acquisition cost, ideal for longer-term profitability analysis for paid media investment.

- Limitations: Not always the most suitable for channel-specific reporting (think account structuring, audiences, etc.), and can be a lagging metric as it doesn’t reflect short-term changes in performance like ROAS or CPA would.
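
As a rough sketch of the two CAC definitions and the break-even arithmetic, assuming hypothetical spend and customer numbers chosen so the detailed CAC lands on the $175 used above (the helper names and the 50% margin are illustrative assumptions, not figures from a real account):

```python
import math


def cac(acquisition_ad_spend: float, new_customers: int, other_costs: float = 0.0) -> float:
    """CAC. Leave other_costs at 0 for the simple definition; pass wages,
    software, fees, and overheads for the detailed one."""
    return (acquisition_ad_spend + other_costs) / new_customers


def orders_to_cover_cac(cac_value: float, aov: float, gross_margin: float = 1.0) -> int:
    """Whole orders needed before cumulative (margin-adjusted) order value covers CAC."""
    return math.ceil(cac_value / (aov * gross_margin))


# Hypothetical month: $30,000 acquisition ad spend, $5,000 other costs, 200 new customers.
print(cac(30_000, 200))                     # 150.0 (simple)
print(cac(30_000, 200, other_costs=5_000))  # 175.0 (detailed)

# Three orders at $58 bring in $174, one dollar short of $175, so rounding up gives 4.
print(orders_to_cover_cac(175, 58))                    # 4
print(orders_to_cover_cac(175, 58, gross_margin=0.5))  # 7
```

The gap between the revenue view (four orders) and the margin view (seven orders) is one reason CAC is best read alongside margin and repeat-rate data.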

Marketing Efficiency Ratio (MER)

- Definition: Sometimes referred to as blended ROAS, MER is calculated by dividing total revenue by total ad spend across all channels.

This metric shows how efficiently your total ad spend is converting into revenue, regardless of the channel.

Where MER is especially useful is when brands are active on multiple ad networks, all of which contribute in some way to the final sale, and where siloed platform attribution is inconsistent.

- Benefits: Captures topline performance from a transactional perspective and simplifies multi-channel reporting.

- Limitations: Neglects exactly where the sales and revenue came from and obscures channel efficiency, especially important for search, social, etc.
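
A minimal sketch of MER, assuming made-up spend figures per channel and a total revenue number taken from the store or finance system rather than from any ad platform:

```python
def mer(total_revenue: float, spend_by_channel: dict[str, float]) -> float:
    """Marketing efficiency ratio: total revenue divided by total ad spend."""
    return total_revenue / sum(spend_by_channel.values())


# Hypothetical monthly spend per ad network.
spend = {"google_ads": 12_000, "meta_ads": 8_000, "pinterest_ads": 5_000}

# Hypothetical total store revenue for the same period (not platform-attributed revenue).
total_revenue = 100_000

print(mer(total_revenue, spend))  # 4.0
```

Because the numerator is total revenue, MER sidesteps the question of which platform "owns" each sale, which is also why it can't tell you which channel to scale.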

Long-Term Strategic

Customer Lifetime Value (CLV Or CLTV)

- Definition: This metric estimates the total net revenue a customer brings over their relationship with a brand.

Used alongside CAC, this metric is essential for understanding the true value of both acquisition and retention, which is important for almost all ecommerce models, and especially important for brands looking to capitalize on repeat purchases and subscription-based models.

- Benefits: Builds a foundation for tying performance marketing to long-term outcomes while helping give room to CAC targets across valuable customer segments.

- Limitations: Takes a fair amount of work to set up and maintain, and requires clean cohort and repeat-purchase data. Additionally, when brands introduce new products or services, accurate CLV forecasts are hard to produce until enough purchase history has built up.
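
There are many ways to estimate CLV, and the article doesn't prescribe one. The sketch below assumes one common simplified form (AOV × orders per year × years retained × gross margin) with hypothetical inputs, and compares the result to the $175 CAC from the earlier example:

```python
def simple_clv(aov: float, orders_per_year: float, years_retained: float, gross_margin: float) -> float:
    """Simplified CLV: margin-adjusted revenue over the expected customer lifespan.
    Assumes constant AOV and purchase frequency, which real cohorts rarely show."""
    return aov * orders_per_year * years_retained * gross_margin


# Hypothetical inputs: $58 AOV, 4 orders a year, retained for 3 years, 50% gross margin.
clv = simple_clv(aov=58, orders_per_year=4, years_retained=3, gross_margin=0.5)
print(clv)  # 348.0

# CLV:CAC ratio against the $175 CAC used earlier.
print(round(clv / 175, 2))  # 1.99
```

In practice, the inputs would come from cohort data rather than single averages, which is where most of the setup work mentioned above goes.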

So, which one should you be reporting on for your ecommerce brand?

Speaking from experience, there isn’t a right or wrong answer, nor is there a blueprint for which KPIs you should be reporting on.

Having a multifaceted approach will enable more informed decision making, combining short-, medium-, and long-term KPIs to form a holistic model for measuring performance that feeds into your reports.

However, even after choosing your KPIs, different attribution models across advertising platforms add another layer of complexity, as does the ever-evolving customer journey involving multiple touchpoints across devices, channels, etc.

The Ad Platforms

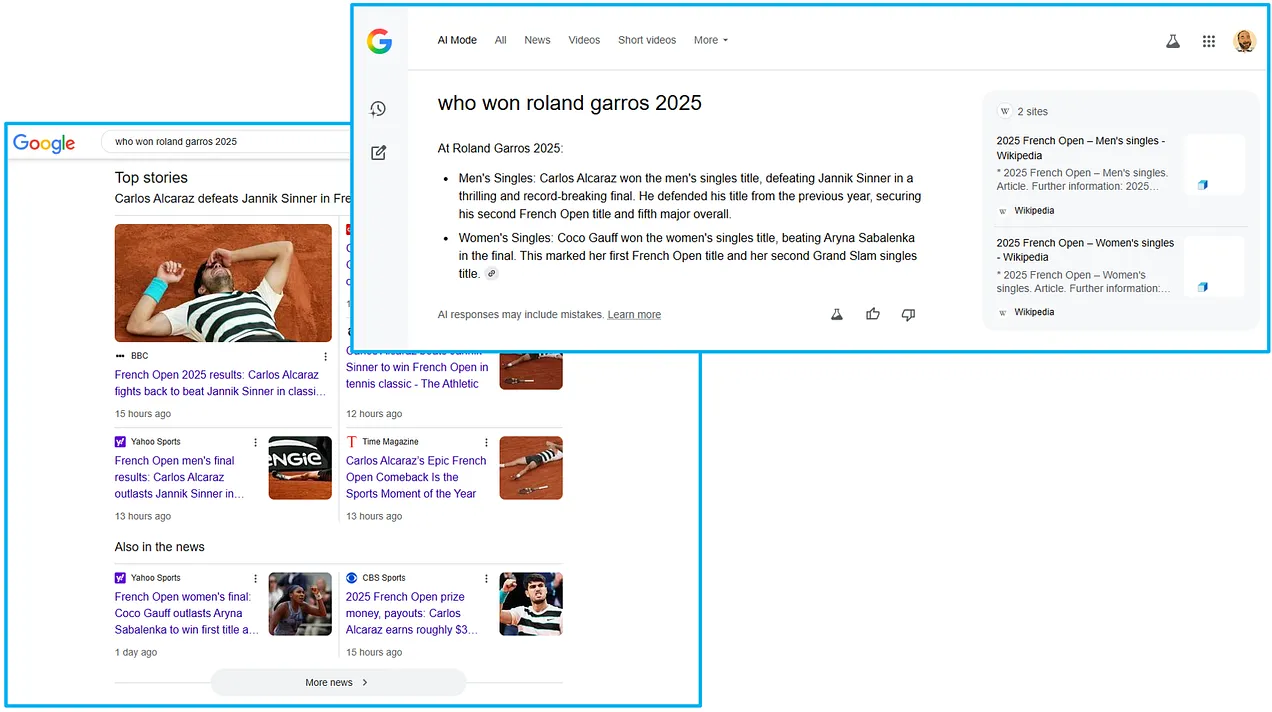

Each ad platform handles attribution and tracking differently.

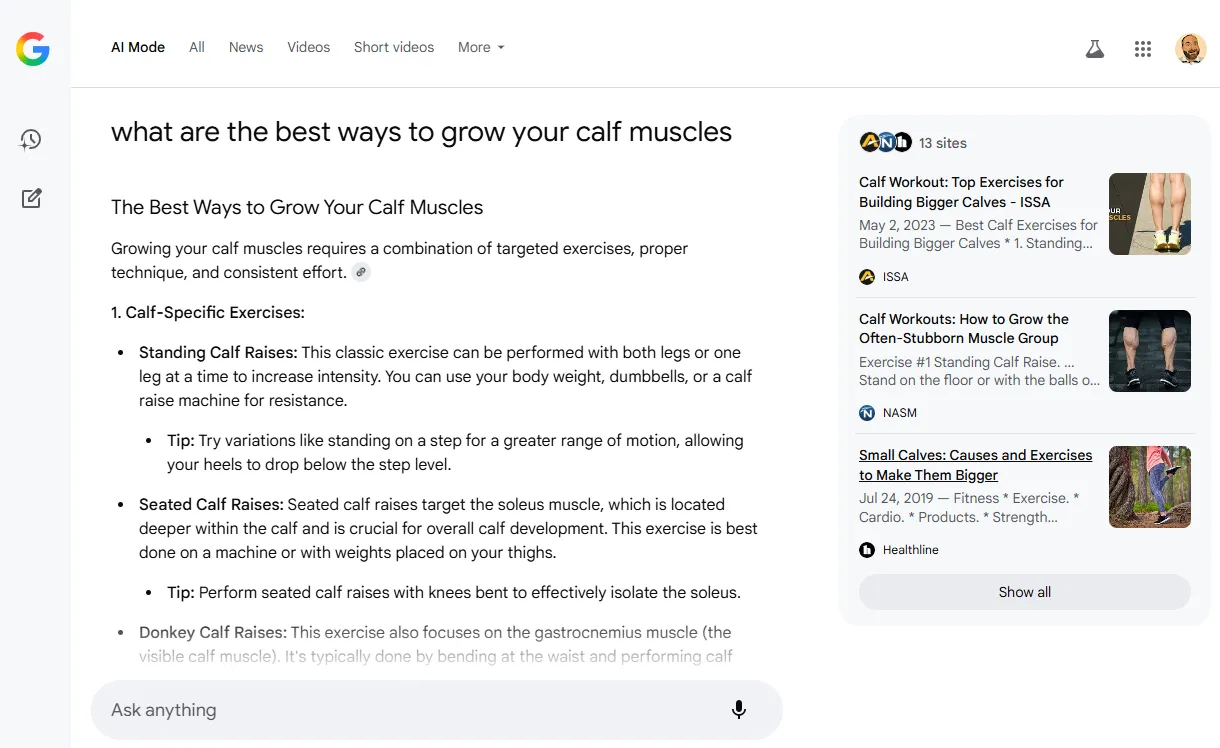

Take Google Ads, for example. The default model is Data-Driven Attribution (DDA), and when using the Google Ads pixel, only paid channels receive credit.

Then, with a GA4 integration to Google Ads, both paid and organic are eligible to receive credit for sales.

Click-through windows, value, count, etc., can all be customized to provide a view of performance that feeds into your Google Ads campaigns.

Using the Google Ads pixel, say a user clicks a shopping ad, then a search ad, and then returns via organic to make the purchase. In this case, 40% of the credit could go to the shopping ad and 60% to the search ad.

With the GA4 integrated conversion, shopping could receive 30%, search 40%, and organic visit 30%, resulting in 70% of the value being attributed back to the campaigns in-platform.

Now, comparing this to Meta Ads, which uses a seven-day click and one-day view attribution window by default, when a user converts within this time frame, 100% of the credit will be attributed to Meta.

This is why the narrative for conversion tracking on Meta is one of overrepresentation, with brands seeing inflated revenue numbers vs. other channels. This is even more pronounced with loose audience targeting, where campaign types such as Advantage+ Shopping Campaigns (ASC) can serve assets to audiences who have already interacted with your brand.
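
To see why platform totals rarely reconcile, here is a small sketch that puts the Google Ads and Meta examples above side by side for one order. The $100 order value is hypothetical, and the sketch assumes the same user also clicked or viewed a Meta ad inside Meta's default window, which the Google example doesn't state.

```python
ORDER_VALUE = 100.0  # hypothetical single order

# Credit splits from the Google examples above, as percentages of one conversion.
GOOGLE_ADS_PIXEL = {"shopping": 40, "search": 60}
GA4_IMPORT = {"shopping": 30, "search": 40, "organic": 30}

PAID_GOOGLE_CHANNELS = {"shopping", "search"}


def google_reported_revenue(order_value: float, split: dict[str, int]) -> float:
    """Revenue credited back to Google Ads campaigns (paid touchpoints only)."""
    paid_pct = sum(pct for channel, pct in split.items() if channel in PAID_GOOGLE_CHANNELS)
    return order_value * paid_pct / 100


def meta_reported_revenue(order_value: float, touched_within_window: bool) -> float:
    """Meta claims the full order if the user clicked or viewed within the window."""
    return order_value if touched_within_window else 0.0


google = google_reported_revenue(ORDER_VALUE, GA4_IMPORT)
meta = meta_reported_revenue(ORDER_VALUE, touched_within_window=True)

print(google)         # 70.0
print(meta)           # 100.0
print(google + meta)  # 170.0 reported across platforms for a single $100.0 order
```

With the Google Ads pixel split instead of the GA4 import, the two platforms would report $200 between them for the same order.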

Then, when you dig into third-party analytics, the comparisons between Google Ads, Meta Ads, Pinterest Ads, etc., are almost the complete opposite.

So, what should this data be used for, and how does it factor into the bigger picture?

In-platform metrics are best viewed as directional.

They help optimize within the walls of that specific platform to identify high-performing audiences, auctions, creatives, and placements, but they rarely reflect the true incremental value of paid media to your business.

The data in Google, Meta, Pinterest, etc. is a platform-specific lens on performance, and the goal shouldn’t be to pick one or ignore these metrics.

It should be to interpret these for what they are and how they play into the overarching strategy.

The Bigger Picture

KPIs such as ROAS and CPA offer immediate insights but provide a fragmented view of paid media performance.

To gain a comprehensive understanding, brands must combine medium- to long-term KPIs with broader modeling and tests that account for the multifaceted nature of performance marketing, while considering how complex customer journeys are in this day and age.

Marketing Mix Modeling (MMM)

Introduced in the 1950s, MMM is a statistical analysis that evaluates the effectiveness of marketing channels over time.

By analyzing historical data, MMM helps advertisers understand how different marketing activities contribute to sales and can guide budget allocation.

A 2024 Nielsen study found that 30% of global marketers cite MMM as their preferred method of measuring holistic ROI.

The very short version of how to get started with MMM includes:

- Collecting aggregated data (roughly speaking, at least two years of weekly data across all channels), mapped out with every possible variable (e.g., pricing, promotions, weather, social trends, etc.).

- Defining the dependent variable, which for ecommerce will be sales or revenue.

- Running regression modeling to isolate the contribution of each variable to sales (adjusting for overlaps, lags, etc.).

- Analyzing, optimizing, and reporting on the coefficients to understand the relative impact and ROI of your paid media activity as a whole.
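
The regression step above can be illustrated with a deliberately tiny, dependency-free sketch. It fits an ordinary least squares model to eight weeks of synthetic data (in thousands of dollars) where the true effects are known, so the recovered coefficients can be checked. Everything here is an assumption made for illustration: a real MMM would use far more data, a statistics library, and adjustments such as lagged and saturating ad effects that this plain linear model ignores.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_ols(features, target):
    """Ordinary least squares via the normal equations (X'X) beta = X'y."""
    x = [[1.0, *row] for row in features]  # leading 1.0 is the intercept (baseline sales)
    k = len(x[0])
    xtx = [[sum(r[i] * r[j] for r in x) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(x, target)) for i in range(k)]
    return solve_linear_system(xtx, xty)


# Hypothetical weekly rows: (search spend, social spend, promo-week flag), spend in $ thousands.
weeks = [
    (1.0, 0.5, 0), (1.2, 0.4, 0), (0.9, 0.7, 1), (1.5, 0.6, 0),
    (1.1, 0.9, 1), (1.3, 0.3, 0), (0.8, 0.8, 0), (1.4, 1.0, 1),
]

# Synthetic, noise-free revenue built from known effects so the fit can be verified.
revenue = [5 + 3.0 * search + 1.5 * social + 2.0 * promo for search, social, promo in weeks]

coefficients = fit_ols(weeks, revenue)
print([round(c, 3) for c in coefficients])  # [5.0, 3.0, 1.5, 2.0]
```

Read the output as baseline revenue, then revenue per extra dollar of search spend, per extra dollar of social spend, and the uplift in a promo week. Real data is noisy, so the coefficients come with uncertainty that this sketch doesn't estimate.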

Unlike platform attribution, this doesn’t rely on user-level tracking, which is especially useful with privacy restrictions now and in the future.

From a tactical standpoint, your chosen KPIs will still lead campaign optimizations for your day-to-day management, but at a macro level, MMM will determine where to invest your budget and why.

Incrementality Testing

Instead of relying on attribution models, this uses controlled experiments to isolate the impact of your paid media campaigns on actual business outcomes.

This kind of testing aims to answer the question, “Would these sales have happened without the paid media investment?”

This involves:

- Defining an objective, i.e., the outcome (dependent) variable you want to measure (e.g., sales, revenue, etc.).

- Creating test and control groups. This could be by audience or geography: one will be exposed to the campaigns and the other will not.

- Running the experiment while keeping all other conditions equal across both groups.

- Comparing the outcomes, analyzing performance, and calculating the impact.
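
The final comparison step can be sketched as below. The group sizes, conversion counts, AOV, and test spend are all hypothetical, and the sketch skips the statistical significance check a real experiment would need before acting on the result.

```python
def incrementality(test_users: int, test_conversions: int,
                   control_users: int, control_conversions: int) -> tuple[float, float]:
    """Return (incremental conversions in the test group, relative lift vs. control)."""
    test_rate = test_conversions / test_users
    control_rate = control_conversions / control_users
    incremental_conversions = (test_rate - control_rate) * test_users
    relative_lift = (test_rate - control_rate) / control_rate
    return incremental_conversions, relative_lift


# Hypothetical geo test: 10,000 users exposed to ads, 10,000 held out.
incremental, lift = incrementality(10_000, 320, 10_000, 250)
print(round(incremental), f"{lift:.0%}")  # 70 28%

# Incremental ROAS, assuming a $58 AOV and $2,000 spent on the test group.
incremental_roas = incremental * 58 / 2_000
print(round(incremental_roas, 2))  # 2.03
```

Here the platform might report all 320 conversions, while the test suggests only around 70 of them would not have happened without the ads.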

This isn’t a test you run every week, but from a strategic point of view, these tests help to validate the actual performance of paid media and direct where and how much spend should be allocated across ad platforms.

Operational Factors

These are just as important (if not more so) for ecommerce reporting and absolutely need to be considered when setting KPIs and beginning to think about modeling, testing, etc.

- Product margin.

- AOV variability.

- Shipping costs.

- Return rates.

- Repeat rates.

- Discounting and promotions.

- Cancelled and/or failed payments.

- Stock availability.

- Attribute availability (e.g., size, color, model).

- Pixels and tracking.

Without considering these factors, brands will use inaccurate data from the get-go.

Think about the impact of buy now, pay later. Providers such as Klarna or Clearpay can lead to higher return rates, as bundle buying and impulsive purchases become more accessible.

Without considering operational factors, using this example and a basic in-platform ROAS, brands would be optimizing toward incorrect checkout data with higher AOVs and no consideration of returns, restocking, etc.
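
As a rough sketch of how much operational factors can move the picture, the example below starts from the earlier ROAS figures ($18,500 revenue on $1,000 ad cost) and applies a hypothetical 20% return rate, 50% gross margin, 300 orders, and $4 shipping per order. All of those rates are assumptions for illustration, and the sketch ignores restocking, payment failures, and similar costs.

```python
def kept_revenue(gross_revenue: float, return_rate: float) -> float:
    """Revenue left after returned orders are refunded."""
    return gross_revenue * (1 - return_rate)


def net_contribution(gross_revenue: float, return_rate: float, gross_margin: float,
                     orders: int, shipping_cost_per_order: float, ad_cost: float) -> float:
    """Gross profit on kept revenue, minus shipping and ad costs."""
    gross_profit = kept_revenue(gross_revenue, return_rate) * gross_margin
    return gross_profit - orders * shipping_cost_per_order - ad_cost


platform_roas = 18_500 / 1_000
roas_after_returns = kept_revenue(18_500, return_rate=0.2) / 1_000
contribution = net_contribution(18_500, return_rate=0.2, gross_margin=0.5,
                                orders=300, shipping_cost_per_order=4.0, ad_cost=1_000)

print(platform_roas)                 # 18.5
print(round(roas_after_returns, 2))  # 14.8
print(round(contribution, 2))        # 5200.0
```

The same campaign looks like an 18.5 ROAS in-platform, 14.8 after returns, and $5,200 of contribution once margin, shipping, and ad cost are included.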

Ultimately, building a true picture of paid media performance means stepping beyond the platform KPIs and metrics to consider all factors involved and how best to model the data to uncover not just “what” is happening, but “why” it is and how this impacts the wider business.

Bringing It All Together

No single tool or model tells the full story.

You’ll need to compare platform data, internal analytics, and external modeling to build a more reliable view of performance.

The first step is getting watertight KPIs nailed down that consider every possible operational factor so you know the platforms are being fed the correct data, and if you need to modify these based on platform nuances due to differing attribution models, do it.

Once these are nailed down, find a model that you trust and that will show you the holistic impact of your paid media spend on overall business performance.

You could explore the use of third-party attribution tools that aim to blend data together, but even with these, you’ll still require clear and accurate KPIs and reliable tracking.

Then, when it comes to the visual side of reporting, the world is your oyster.

Looker Studio, Tableau, and Datorama are among the long list of well-known platforms, and with most brands using three to four business intelligence tools and 67% of analysts relying on multiple dashboards, don’t stress if you can’t get everything under one lens.

When all of this is in place and prioritized over the short-term ebbs and flows of paid media performance, you can begin connecting media spend to profit.

Featured Image: Surasak_Ch/Shutterstock