A rare warm spell in January melted enough snow to uncover Cornell University’s newest athletic field, built for field hockey. Months before, it was a meadow teeming with birds and bugs; now it’s more than an acre of synthetic turf roughly the color of the felt on a pool table, almost digital in its saturation. The day I walked up the hill from a nearby creek to take a look, the metal fence around the field was locked, but someone had left a hallway-size piece of the new simulated grass outside the perimeter. It was bristly and tough, but springy and squeaky under my booted feet. I could imagine running around on it, but it would definitely take some getting used to.

My companion on this walk seemed even less favorably disposed to the thought. Yayoi Koizumi, a local environmental advocate, has been fighting synthetic-turf projects at Cornell since 2023. A petite woman dressed that day in a faded plum coat over a teal vest, with a scarf the colors of salmon, slate, and sunflowers, Koizumi compulsively picked up plastic trash as we walked: a red Solo cup, a polyethylene Dunkin’ container, a five-foot vinyl panel. She couldn’t bear to leave this stuff behind to fragment into microplastic bits—as she believes the new field will. “They’ve covered the living ground in plastic,” she said. “It’s really maddening.”

The new pitch is one part of a $70 million plan to build more recreational space at the university. As of this spring, Cornell plans to install something like a quarter million square feet of synthetic grass—what people have colloquially called “astroturf” since the middle of the last century. University PR says it will be an important part of a “health-promoting campus” that is “supportive of holistic individual, social, and ecological well-being.” Koizumi runs an anti-plastic environmental group called Zero Waste Ithaca, which says that’s mostly nonsense.

This fight is more than just the usual town-versus-gown tension. Synthetic turf used to be the stuff of professional sports arenas and maybe a suburban yard or two; today communities across the United States are debating whether to lay it down on playgrounds, parks, and dog runs. Proponents say it’s cheaper and hardier than grass, requiring less water, fertilizer, and maintenance—and that it offers a uniform surface for more hours and more days of the year than grass fields, a competitive advantage for athletes and schools hoping for a more robust athletic program.

But while new generations of synthetic turf look and feel better than that mid-century stuff, it’s still just plastic. Some evidence suggests it sheds bits that endanger users and the environment, and that it contains PFAS “forever chemicals”—per- and polyfluoroalkyl substances, which are linked to a host of health issues. The padding within the plastic grass is usually made from shredded tires, which might also pose health risks. And plastic fields need to be replaced about once a decade, creating lots of waste.

Yet people are buying a lot of the stuff. In 2001, Americans installed just over 7 million square meters of synthetic turf, just shy of 11,000 metric tons. By 2024, that number was 79 million square meters—enough to carpet all of Manhattan and then some, almost 120,000 metric tons. Synthetic turf covers 20,000 athletic fields and tens of thousands of parks, playgrounds, and backyards. And the US is just 20% of the global market.
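
As a rough consistency check on those figures, the sketch below divides the quoted tonnage by the quoted area for each year. It treats the rounded numbers in the text ("just over," "just shy of," "almost") as exact, which is an assumption, and the variable names are purely illustrative.

```python
# Back-of-the-envelope check using only the figures quoted in the text.
# Assumption: the rounded values are treated as exact.
area_2001_m2 = 7_000_000      # "just over 7 million square meters"
mass_2001_t = 11_000          # "just shy of 11,000 metric tons"
area_2024_m2 = 79_000_000     # "79 million square meters"
mass_2024_t = 120_000         # "almost 120,000 metric tons"

KG_PER_METRIC_TON = 1_000

# Implied mass of turf per square meter installed, in kilograms.
kg_per_m2_2001 = mass_2001_t * KG_PER_METRIC_TON / area_2001_m2
kg_per_m2_2024 = mass_2024_t * KG_PER_METRIC_TON / area_2024_m2

# How many times larger the installed area was in 2024 than in 2001.
area_growth = area_2024_m2 / area_2001_m2

print(f"2001: {kg_per_m2_2001:.2f} kg per square meter")
print(f"2024: {kg_per_m2_2024:.2f} kg per square meter")
print(f"Area growth: {area_growth:.1f}x")
```

Both years work out to roughly a kilogram and a half of plastic per square meter, so the tonnage and area figures are consistent with each other, and the installed area grew about elevenfold.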

Those increases worry folks who study microplastics and environmental pollution. Any actual risk is hard to parse; the plastic-making industry insists that synthetic fields are safe if properly installed, but lots of researchers think that isn’t so. “They’re very expensive, they contain toxic chemicals, and they put kids at unnecessary risk,” says Philip Landrigan, a Boston College epidemiologist who has studied environmental toxins like lead and microplastics.

But at Cornell, where real estate is limited and demand for athletic facilities is high, synthetic turf was a tempting option. As Frank Rossi, a professor of turf science at Cornell, told me: “It all comes down to land and demand.”

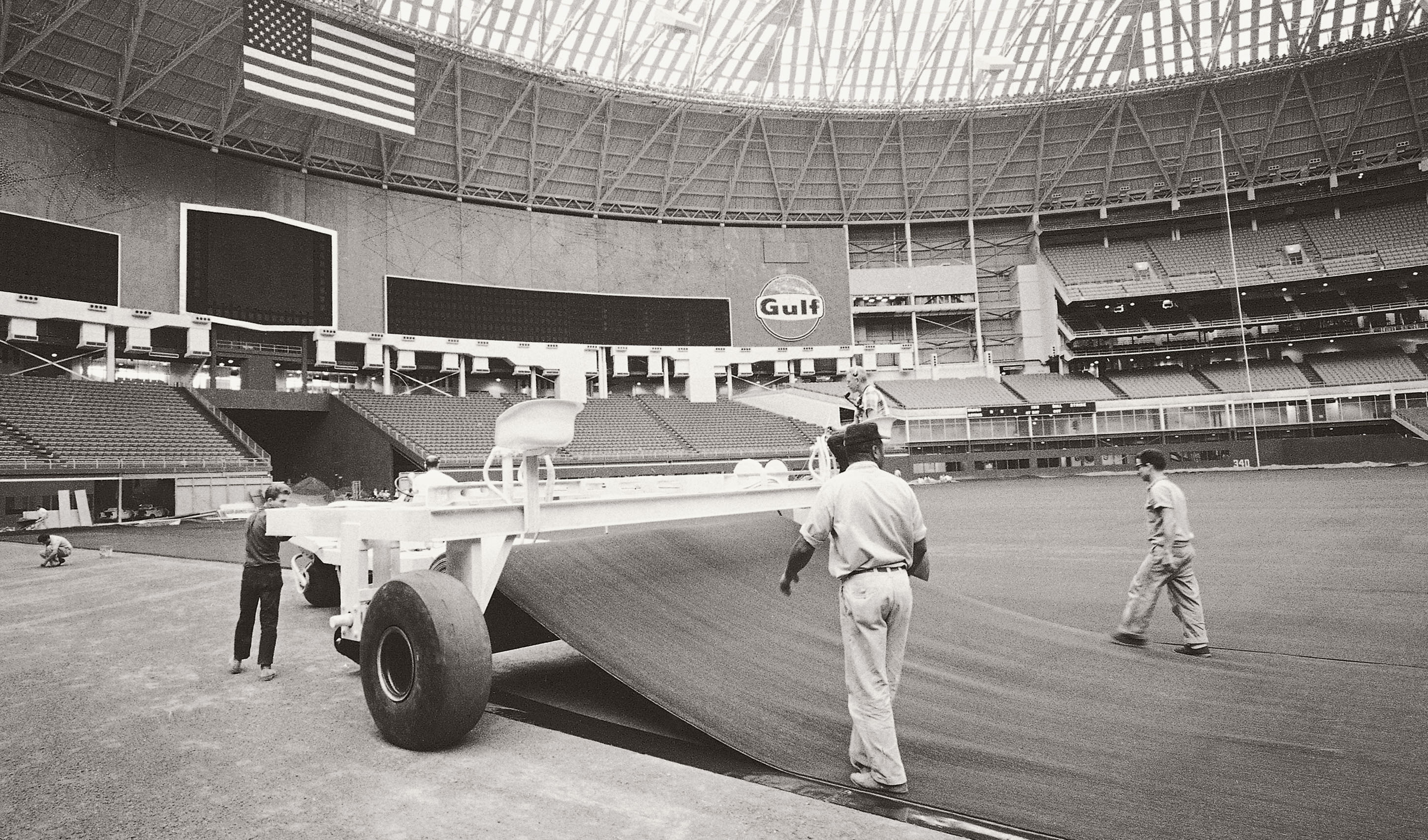

In 1965, Houston’s new, domed baseball stadium was an icon of space-age design. But the Astrodome had a problem: the sun. Deep in the heart of Texas, it shone through the Astrodome’s skylights so brightly that players kept missing fly balls. So the club painted over the skylights. Denied sunlight, the grass in the outfield withered and died.

A replacement was already in the works. In the late 1950s a Ford Foundation–funded educational laboratory had determined that a soft, grasslike surface material would give city kids more places to play outside and had prevailed upon the Monsanto corporation to invent one. The result was clipped blades of nylon stuck to a rubber base, which the company called ChemGrass. Down it went into Houston’s outfield, where it got a new, buzzier name: AstroTurf.

That first generation of simulated lawn was brittle and hard, but quality has improved. Today, there are a few competing products, but they’re all made by extruding a petroleum-based polymer—that’s plastic—through tiny holes and then stitching or fusing the resulting fibers to a carpetlike bottom. That gets attached to some kind of padding, also plastic. In the 1970s the industry started layering that over infill, usually sand; by the 1990s, “third generation” synthetic turf had switched to softer fibers made of polyethylene. Beneath that, they added infill that combined sand and a soft, cheap shredded rubber made from discarded automobile tires, which pile up by the hundreds of millions every year. This “crumb rubber” provides padding and fills spaces between the blades and the backing.

In the early 1980s, nearly half the professional baseball and football fields in the US had synthetic turf. But many players didn’t like it. It got hotter than real grass, gave the ball different action, and seemed to be increasing the rate of injuries among athletes. Since the 1990s, most pro sports have shifted back toward grass—water and maintenance costs pale in comparison to the importance of keeping players happy or sparing them the risk of injury.

But at the same time, more universities and high schools are buying the artificial stuff. The advantages are clear, especially in places where it rains either too much or not enough. A natural-grass field is usable for a little more than 800 hours a year at the most, spread across just eight months in the cooler, wetter northern US. An artificial-turf field can see 3,000 hours of activity per year. For sports like lacrosse, which begins in late winter, this makes artificial turf more appealing. Most lacrosse pitches are now synthetic. So are almost all field hockey pitches; players like the way the even, springy turf makes the ball bounce.

Furthermore, supporters say synthetic turf needs less maintenance than grass, saving money and resources. That’s not always true; workers still have to decompact the playing surface and hose it off to remove bird poop or cool it down. Sometimes the infill needs topping up. But real grass allows less playing time, and because grass athletic fields often need to be rotated to avoid damage, synthetic ground cover can require less space. Hence the market’s explosive growth in the 21st century.

The city and town of Ithaca—two separate political entities with overlapping jurisdiction over Cornell construction projects—held multiple public meetings about the university’s new synthetic fields: the field hockey pitch and a complex called the Meinig Fieldhouse. Koizumi’s group turned up in force, and a few folks who worked at Cornell came to oppose the idea too—submitting pages of citations and studies on the risks of synthetic grass.

At two of those meetings, dozens of Cornell athletes turned out to support the turf. Representatives of the university and the athletic department declined to speak with me for this story, citing an ongoing lawsuit from Zero Waste Ithaca. But before that, Nicki Moore, Cornell’s director of athletics, told a local newspaper that demand from campus groups and sports teams meant the fields were constantly overcrowded. “Activities get bumped later and later, and sometimes varsity teams won’t start practicing until 10 at night, you know?” Moore told the paper. “Availability of all-weather space should normalize scheduling a great deal.”

That argument wasn’t universally convincing. “It’s a bad idea, but that’s from the environmental perspective,” says Marianne Krasny, director of Cornell’s Civic Ecology Lab and one of the speakers at those hearings. “Obviously the athletic department thinks it’s a great idea.”

Members of Cornell on Fire, a climate action group with members from both the university and the town, joined in opposing the use of artificial turf, citing the fossil-fuel origins of the stuff. They described the nominal support of the project from student athletes as inauthentic, representing not grassroots support but, yes, an astroturf campaign.

Sorting out the actual science here isn’t simple. Over time, the plastic that synthetic turf is made of sheds bits of itself into the environment. In one study, published in 2023 in the journal Environmental Pollution, researchers found that 15% of the medium-size and microplastic particles in a river and the Mediterranean Sea outside Barcelona, Spain, came from artificial turf, mostly in the form of tiny green fibers. Back in 2020, the European Chemicals Agency estimated that infill material from artificial-turf fields in the European Union was contributing 16,000 metric tons of microplastics to the environment each year—38% of all annual microplastic pollution. Most of that came from the crumb rubber infill, which Europe now plans to ban by 2031.

This pollution worries the Cornell activists. Ithaca is famous for scenic gorges and waterways. The new field hockey pitch is uphill from a local creek that empties into Cayuga Lake, the longest of the Finger Lakes and the source of drinking water for over 40,000 people.

And it’s not just the plastic bits. When newer generations of synthetic turf switched to durable high-density polyethylene, the new material gunked up the extruders used in the manufacturing process. So turf makers started adding fluorinated polymers—a type of PFAS. Some of these environmentally persistent “forever chemicals” cause cancer, disrupt the endocrine system, or lead to other health problems. Research in several different labs has found PFAS in many types of plastic grass.

But the key to assessing the threat here is exposure. Heather Whitehead, an analytical chemist then at the University of Notre Dame, found PFAS in synthetic turf at levels around five parts per billion—but estimated it’d be in water running off the fields at three parts per trillion; for context, the US Environmental Protection Agency’s legal drinking-water limit on one of the most widespread and dangerous PFAS chemicals is four parts per trillion. “These chemicals will wash off in small amounts for long periods of time,” says Graham Peaslee, Whitehead’s advisor and an emeritus nuclear physicist who studies PFAS concentrations. “I think it’s reason enough not to have artificial turf.”
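
The units in that comparison are easy to mix up, so here is a minimal sketch that puts the three quoted numbers on the same scale. It uses only the values given above. One caveat is an assumption worth stating loudly: the turf figure describes a solid material and the other two describe water, so the ratio between them is an illustration of scale, not a measured dilution.

```python
# Put the concentrations quoted in the text on a common scale.
# Assumption: parts-per values for solid turf and for water are compared
# only to illustrate orders of magnitude, not as a measured dilution.
PPT_PER_PPB = 1_000       # one part per billion = 1,000 parts per trillion

turf_ppb = 5.0            # PFAS found in the turf material
runoff_ppt = 3.0          # estimated PFAS in water running off the field
epa_limit_ppt = 4.0       # EPA drinking-water limit cited in the text

turf_ppt = turf_ppb * PPT_PER_PPB
runoff_fraction_of_limit = runoff_ppt / epa_limit_ppt
turf_to_runoff_ratio = turf_ppt / runoff_ppt

print(f"Turf: {turf_ppt:,.0f} ppt")
print(f"Runoff is {runoff_fraction_of_limit:.0%} of the EPA limit")
print(f"Turf level is about {turf_to_runoff_ratio:,.0f} times the runoff estimate")
```

On these numbers, the estimated runoff sits at three-quarters of the drinking-water limit, while the concentration in the turf itself is more than a thousand times higher than the runoff estimate.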

This gets confusing, though. There are over 16,000 different types of PFAS, few have been well studied, and different companies use different manufacturing techniques. Companies represented by the Synthetic Turf Council now “use zero intentionally added PFAS,” says Melanie Taylor, the group’s president. “This means that as the field rolls off the assembly line, there are zero PFAS-formulated materials present.”

Some researchers are skeptical of the industry’s assurances. They’re hard to confirm, especially because there are a lot of ways to test for PFAS. The type of synthetic turf going onto the new field hockey pitch at Cornell is called GreenFields TX; the university had a sample tested using an EPA method that looks for 40 different PFAS compounds. It came back negative for all of them. The local activists countered that the test doesn’t detect the specific types they’re most concerned about, and in 2025 they paid for three more tests on newly purchased synthetic turf. Two clearly found fluorine—the F in “PFAS”—and one identified two distinct PFAS compounds. (The company that makes GreenFields TX, TenCate, declined to comment, citing ongoing litigation.)

PFAS isn’t the only potential problem. There’s also the crumb rubber made from tires. A billion tires get thrown out every year worldwide, and if they aren’t recycled they sit in giant piles that make great habitats for rats and mosquitoes; they also occasionally catch fire. Lots of the tires that go into turf are made of styrene-butadiene rubber, or SBR. In bulk, that’s bad. Butadiene is a carcinogen that causes leukemia, and fumes from styrene can cause nervous system damage. SBR also contains high levels of lead.

But how much of that comes out of synthetic-turf infill? Again, that’s hotly debated. Researchers around the world have published suggestive studies finding potentially dangerous levels of heavy metals like zinc and lead in synthetic turf, with possible health risks to people using the fields. But a review of many of the relevant studies on turf and crumb rubber from Canada’s National Collaborating Centre for Environmental Health determined that most well-conducted health risk assessments over the last decade found exposures below levels of concern for cancer and certain other diseases. A 2017 report by the European Chemicals Agency—the same people who found all those microplastics in the environment—“found no reason to advise people against playing sports on synthetic turf containing recycled rubber granules as infill material.” And a multiyear study from the EPA, published in 2024, found much the same thing—although the researchers said that levels of certain synthetic chemicals were elevated inside places that used indoor artificial turf. They also stressed that the paper was not a risk assessment.

The problem is, the kinds of cancers these chemicals can cause may take decades to show up. Long-term studies haven’t been done yet. All the evidence available so far is anecdotal—like a series for the Philadelphia Inquirer that linked the deaths of six former Phillies players from a rare type of brain cancer called glioblastoma to years spent playing on PFAS-containing artificial turf. That’d be about three times the usual rate of glioblastoma among adult men, but the report comes with a lot of cautions—small sample size, lots of other potential causes, no way to establish causation.

Synthetic turf has one negative that no one really disputes: It gets very hot in the sun—as hot as 150 °F (66 °C). This can actually burn players, so they often want to avoid using a field on very hot days.

Athletes playing on artificial turf also have a higher rate of foot and ankle injuries, and elite-level football players seem to be more predisposed to knee injuries on those surfaces. But other studies have found rates of knee and hip injury to be roughly comparable on artificial and natural turf—a point the landscape architect working on the Cornell project made in the information packet the university sent to the city. Athletic departments and city parks departments say that the material’s upsides make it worthwhile, given that there’s no conclusive proof of harm.

Back in Ithaca, Cornell hired an environmental consulting firm called Haley & Aldrich to assess the evidence. The company concluded that none of the university’s proposed installations of artificial turf would have a negative environmental impact. People from Cornell on Fire and Zero Waste Ithaca told me they didn’t trust the firm’s findings; representatives from Haley & Aldrich declined to comment.

Longtime activists say that as global consumption of fossil fuels declines, petrochemical companies are desperate to find other markets. That means plastics. “There’s a big push to shift more petrochemicals into plastic products for an end market,” says Jeff Gearhart, a consumer product researcher at the Ecology Center. “Industry people, with a vested interest in petrochemicals, are looking to expand and build out alternative markets for this stuff.”

All that and more went before the decision-makers in Ithaca. In September 2024, the City of Ithaca Planning Board unanimously issued a judgment that the Meinig Fieldhouse would not have a significant environmental impact and thus would not need to complete a full environmental impact assessment. Six months later, the town made the same determination for the field hockey pitch.

Zero Waste Ithaca sued in New York’s supreme court, which ruled against the group. Koizumi and lawyers from Pace University’s Environmental Litigation Clinic have appealed. She says she’s still hopeful the court might agree that Ithaca authorities made a mistake by not requiring an environmental impact statement from the college. “We have the science on our side,” she says.

Ithaca is a pretty rarefied place, an Ivy League university town. But these same tensions—potential long-term environmental and public health consequences versus the financial and maintenance concerns of the now—are pitting worried citizens against their representatives and city agencies around the country.

New York City has 286 municipal synthetic-turf fields, with more under construction. In Inwood, the northernmost neighborhood in Manhattan, two fields were approved via Zoom meetings during the pandemic, and Massimo Strino, a local artist who makes kaleidoscopes, says he found out only when he saw signs announcing the work on one of his daily walks in Inwood Hill Park, along the Hudson River. He joined a campaign against the plan, gathering more than 4,300 signatures. “I was canvassing every weekend,” Strino says. “You can count on one hand, literally, the number of people who said they were in favor.”

But that doesn’t include the group that pushed for one of those fields in the first place: Uptown Soccer, which offers free and low-cost lessons and games to 1,000 kids a year, mostly from underserved immigrant families. “It was turning an unused community space into a usable space,” says David Sykes, the group’s executive director. “That trumped the sort of abstract concerns about the environmental impacts. I’m not an expert in artificial turf, but the parks department assured me that there was no risk of health effects.”

New York City councilmember Christopher Marte disagrees. He has introduced a bill to ban new artificial turf from being installed in parks, and he hopes the proposal will be taken up by the Parks Committee this spring. Last session, the bill had 10 cosponsors—that’s a lot. Marte says he expects resistance from lobbyists, but there’s precedent. The city of Boston banned artificial turf in 2022.

Upstate, in a Rochester suburb called Brighton, the school district included synthetic-turf baseball and softball diamonds in a wide-ranging February 2024 capital improvement proposition. The measure passed. In a public meeting in November 2025, the school board acknowledged the intent to use synthetic grass—or, as concerned parents had it, “to rip up a quarter million square feet of this open space and replace it with artificial turf,” says David Masur, executive director of the environmental group PennEnvironment, whose kids attend school in Brighton. Parents and community members mobilized against the plan, further angered when contractors also cut down a beloved 200-year-old tree. School superintendent Kevin McGowan says it’s too late to change course. Masur has been working to oppose the plan nevertheless—he says school boards are making consequential decisions about turf without sharing information or getting input, even though these fields can cost millions of dollars of taxpayer money.

In short, the fights can get tense. On Martha’s Vineyard, in Massachusetts, a meeting about plans to install an artificial field at a local high school had to be ended early amid verbal abuse. A staffer for the local board of health who voiced concern about PFAS in the turf quit the board after discovering bullet casings in her tote bag, she said, which she perceived as a death threat. After an eight-year fight, the board eventually banned artificial turf altogether.

What happens next? Well, outdoor artificial turf lasts only eight to 12 years before it needs to be taken up and replaced. The Synthetic Turf Council says it’s at least partially recyclable and cites a company called BestPLUS Plastic Lumber as a purveyor of products made from recycled turf. The company says one of its products, a liner called GreenBoard that artificial turf can be nailed into, is at least 40% recycled from fake grass. Joseph Sadlier, vice president and general manager of plastics recycling at BestPLUS, says the company recycles over 10 million pounds annually.

Yet the material is piling up. In 2021, a Danish company called Re-Match announced plans to open a recycling plant in Pennsylvania and began amassing thousands of tons of used plastic turf in three locations. The company filed for bankruptcy in 2025.

In Ithaca, university representatives told planning boards that it would be possible to recycle the old artificial turf they ripped out to make way for the Meinig Fieldhouse. That didn’t happen. An anonymous local activist tracked the old rolls to a hauling company a half-hour’s drive south of campus and shared pictures of them sitting on the lot, where they stayed for months. It’s unclear what their ultimate fate will be.

That’s the real problem: Artificial turf just doesn’t go away. “You’re going to be paying to get rid of it,” says Peaslee, the PFAS expert. “Somebody will have to take it to a dump, where it will sit for a thousand years.” At minimum, real grass is a net carbon sink, even including installation and maintenance. Synthetic turf releases greenhouse gases. One life-cycle analysis of a 2.2-acre synthetic field in Toronto determined that it would emit 55 metric tons of carbon dioxide over a decade. Plastic fields need less water to maintain, but it takes water to make plastic, and natural grass lets rainwater seep into the ground. Synthetic turf sends most of it away as runoff.

It’s a boggling set of issues to factor into a decision. Rossi, the Cornell turf scientist, says he can understand why a school in the northern United States might go plastic, even when it cares about its students’ health. “It was the best bad option,” he says. Concerns about microplastics and PFAS are “significant issues we have not fully addressed.” And they need to be.

Douglas Main is a journalist and former senior editor and writer at National Geographic.