How To Write SEO Reports That Get Attention From Your CMO via @sejournal, @AdamHeitzman

We’ve all been there. You spend weeks optimizing content, fixing technical issues, and building quality links – only to have your client skim through your report and ask, “But how is this affecting our bottom line?”

And they’re right to ask. As experienced SEO managers, we need to move beyond traffic numbers and keyword rankings.

Your clients don’t care about impressions or even clicks if they can’t see how those metrics translate to actual business results.

I’ve learned this lesson the hard way. After losing a major client despite significantly improving their rankings (it turns out they weren’t ranking for terms that actually drove revenue), I completely revamped our reporting approach.

Now, I focus on connecting every SEO effort to business outcomes that my clients genuinely care about: revenue growth, reduced acquisition costs, and competitive advantages.

The truth is, with AI reshaping search and budgets under constant scrutiny, proving SEO’s business value isn’t optional anymore. It’s essential for keeping your clients and justifying your fees.

So, let’s talk about how to transform standard SEO reports into strategic assets that make your clients see you as indispensable.

1. Traffic: Beyond Volume To Value

Let’s be real: Your clients aren’t getting bonuses for traffic increases alone anymore.

Yes, traffic is still foundational, but your CMO clients are being hammered about return on investment (ROI) in every meeting.

They need ammunition to defend their budgets, and “we got more visitors” doesn’t cut it in the boardroom.

When I start client reports now, I immediately connect traffic to dollars.

Here’s how to transform this section from a traffic report to a value demonstration: First, ditch the habit of leading with “traffic went up X%.”

Instead, start with: “Organic search generated $X in revenue this quarter through Y new customers.” This immediately frames SEO as a revenue channel, not a vanity metrics game.

Here’s what your traffic section should include:

- Traffic that matters: Break down traffic by buying intent. 10,000 visitors with purchase intent beats 100,000 tire-kickers every time. Show this segmentation.

- Revenue story: What actual money did this traffic generate?

- Comparison value: “This organic traffic would have cost $X through paid channels” is powerful. Report an organic traffic value estimate, meaning what the same clicks would have cost at paid search rates.

- Mobile: Mobile now accounts for 64% of organic searches (up from 56% in 2021). If your mobile performance lags, you’re leaving money on the table. Highlight this gap!

- Customer Journey Insights: Show where organic visitors enter the funnel and how they move through it. This tells a much richer story than pure traffic numbers.

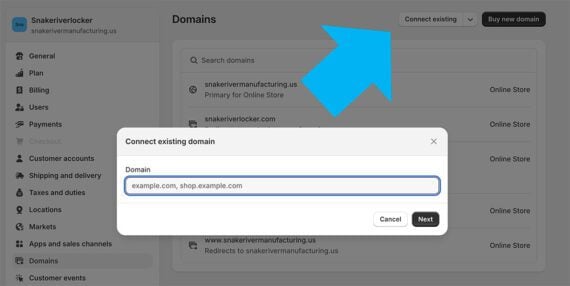

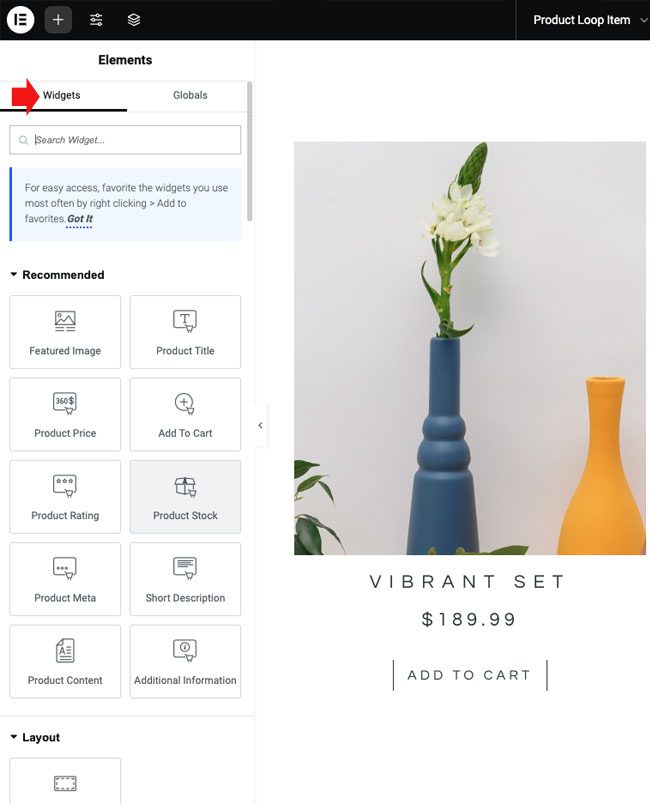

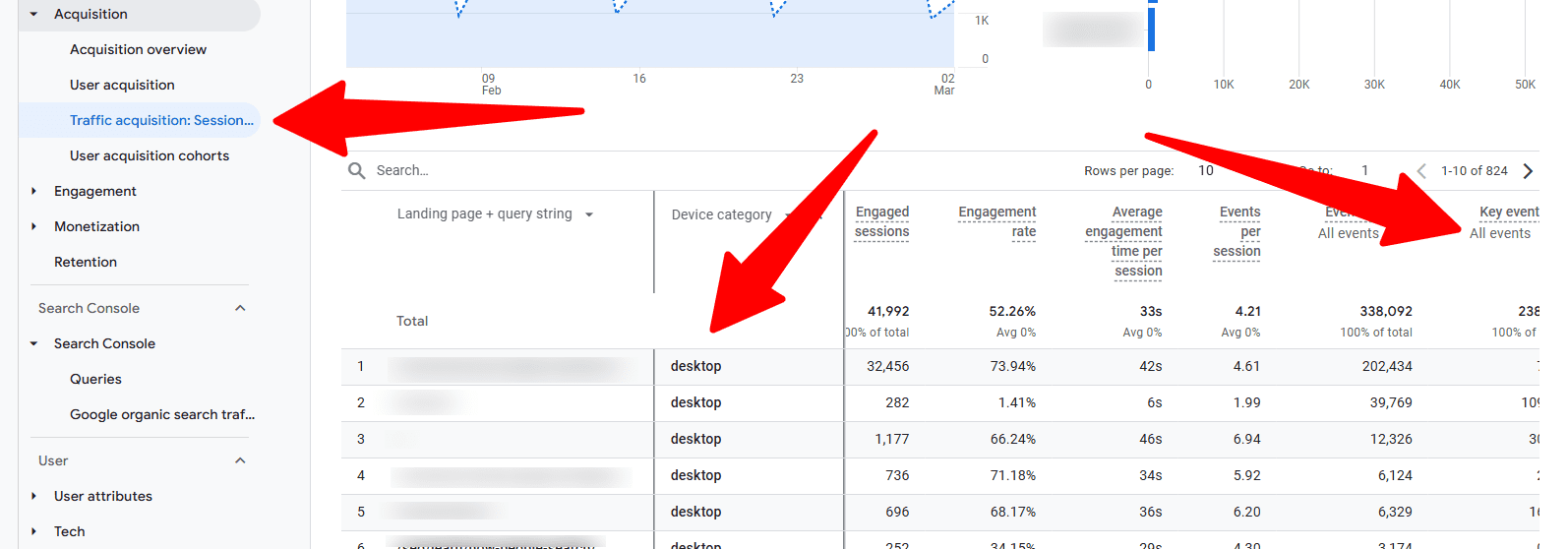

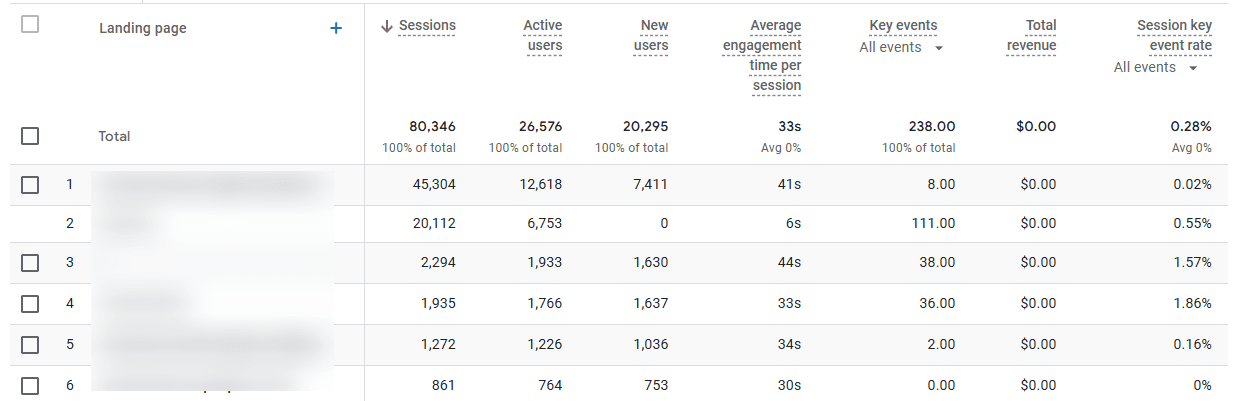

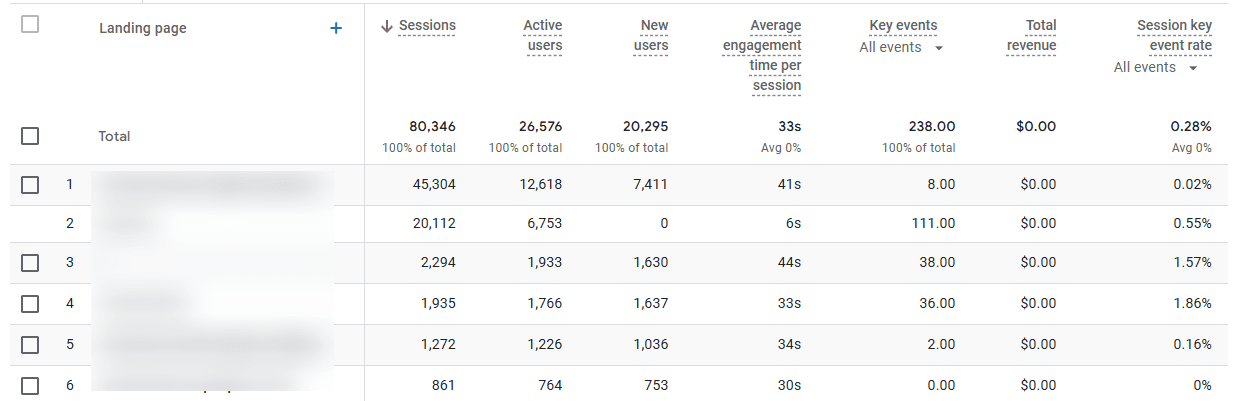

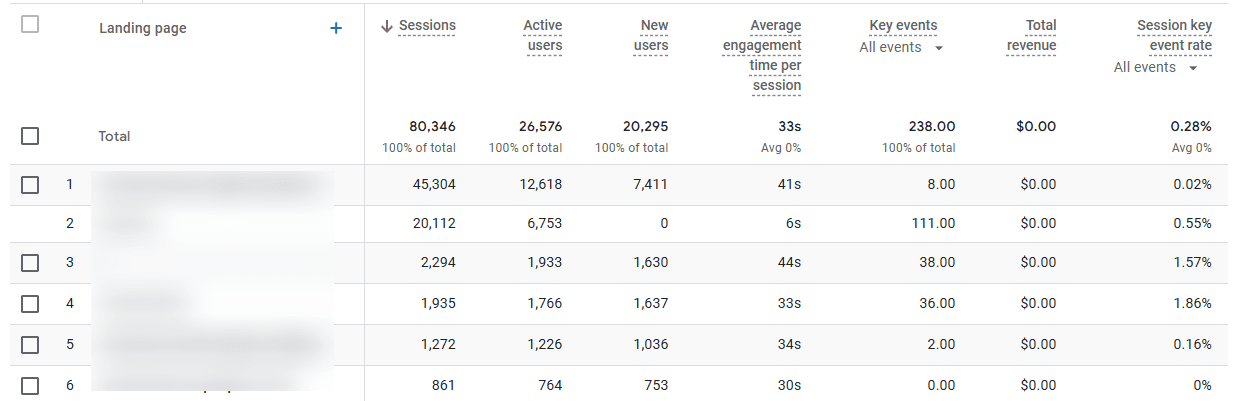

My favorite Google Analytics 4 report for this: Go to Acquisition > Traffic Acquisition, then add “Landing Page” and “Device Category” as secondary dimensions.

Export this data, then merge it with your conversion values. Suddenly, you have a powerful view of which entry points and devices are actually generating business.
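To make that merge concrete, here is a minimal Python sketch. It assumes you have already exported the GA4 rows and have an average value per conversion for each landing page; the row layout, the page paths, the dollar values, and the function name `revenue_by_entry_point` are all hypothetical placeholders, not GA4's export format.

```python
# Hypothetical rows from a GA4 export:
# (landing page, device category, sessions, key events)
ga4_rows = [
    ("/guides/how-to-install", "mobile", 1200, 48),
    ("/guides/how-to-install", "desktop", 900, 18),
    ("/pricing", "mobile", 400, 12),
    ("/pricing", "desktop", 600, 30),
]

# Hypothetical average value per conversion, supplied by the client
value_per_conversion = {
    "/guides/how-to-install": 150.0,
    "/pricing": 400.0,
}


def revenue_by_entry_point(rows, values):
    """Return estimated revenue and conversion rate per (page, device)."""
    report = {}
    for page, device, sessions, conversions in rows:
        value = values.get(page, 0.0)  # pages with no known value count as zero
        report[(page, device)] = {
            "sessions": sessions,
            "conversion_rate": conversions / sessions if sessions else 0.0,
            "revenue": conversions * value,
        }
    return report


report = revenue_by_entry_point(ga4_rows, value_per_conversion)

# Print segments from highest to lowest estimated revenue
ranked = sorted(report.items(), key=lambda kv: kv[1]["revenue"], reverse=True)
for (page, device), stats in ranked:
    print(f"{page:<26} {device:<8} {stats['conversion_rate']:.1%}  ${stats['revenue']:,.0f}")
```

Sorting by revenue rather than sessions is the point: the entry points at the top of this list are the ones worth leading the report with.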

Screenshot from Google Analytics 4, March 2025

Example:

- Old Way: “Organic traffic increased by 15% month-over-month.”

- New Way: “Organic search delivered 42% of new customer acquisitions this quarter, generating $267,000 in attributed revenue. This traffic would have cost approximately $85,000 through paid search; measured against that paid-equivalent cost, that’s a 214% return. Interestingly, mobile visitors from our how-to content are converting at twice the rate of desktop visitors, suggesting we should prioritize mobile experience for these high-value entry points.”
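For anyone checking the arithmetic, the 214% figure in the example is consistent with (revenue − cost) ÷ cost when the $85,000 paid-equivalent cost is the denominator. The short Python sketch below shows that calculation; the function name is my own, and if you want a true return on SEO spend, you would swap in your actual program cost instead.

```python
def paid_equivalent_roi(attributed_revenue, paid_equivalent_cost):
    """ROI relative to what the same traffic would have cost via paid search."""
    if paid_equivalent_cost <= 0:
        raise ValueError("paid_equivalent_cost must be positive")
    return (attributed_revenue - paid_equivalent_cost) / paid_equivalent_cost


# Figures from the example above
roi = paid_equivalent_roi(267_000, 85_000)
print(f"{roi:.0%}")
```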

2. Conversion Impact & Business Goal Alignment

I once spent three months improving a client’s conversion rate from 2.7% to 3.4% and excitedly presented this in our quarterly meeting. The CMO’s response? “So what does that mean?”

That painful moment taught me something crucial: Conversion rates only matter when tied to business goals the C-suite actually cares about.

Your client’s executives don’t wake up thinking about conversion rates. They worry about acquisition costs, revenue targets, and competitive pressures. Your reports need to speak this language.

Here’s how to make your conversion metrics matter:

- Start with their goals, not yours: Begin this section by restating the client’s specific business objectives: “Your Q1 goal was to reduce customer acquisition costs by 20% while maintaining volume. Here’s how our SEO work delivered on that…”

- Cost comparison is king: I’ve found nothing gets more positive reactions than showing how much cheaper SEO-acquired customers are compared to paid channels. This is pure gold for CMOs defending budgets.

- Lifetime value is your secret weapon: A friend at a major direct-to-consumer (DTC) brand was about to have their SEO budget cut until they showed that organic search customers had a 31% higher lifetime value than social media acquisitions. The budget was not only saved but increased.

- Multi-touch reality: Today, the attribution game has changed. Use GA4’s Advertising workspace > Conversion paths to show how organic search contributes throughout the journey, not just on last-click conversions.

- Cross-channel impact: Show how SEO supports other channels. When I demonstrated to a client that organic content influenced 34% of their paid social conversions, their perspective on SEO completely changed.

Here’s my favorite method: Try to get access to your client’s customer relationship management (CRM) data (even a sample will do) and match it with GA4’s customer acquisition source data.

This lets you compare not just conversion rates but actual customer value by channel.
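Here is a rough Python sketch of that comparison. It assumes you can match CRM customers to an acquisition source through some shared key (a customer ID here), which is often the hardest part in practice. All of the data, the channel names, and the `channel_summary` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical CRM sample: customer ID -> lifetime value to date
crm_customers = {"c1": 1200.0, "c2": 800.0, "c3": 500.0, "c4": 700.0}

# Hypothetical acquisition source per customer, matched on the same ID
acquisition_source = {
    "c1": "organic",
    "c2": "organic",
    "c3": "paid_search",
    "c4": "paid_search",
}

# Hypothetical spend per channel over the same period
channel_spend = {"organic": 600.0, "paid_search": 900.0}


def channel_summary(customers, sources, spend):
    """Average lifetime value and acquisition cost per channel."""
    totals = defaultdict(lambda: {"customers": 0, "ltv_sum": 0.0})
    for customer_id, ltv in customers.items():
        channel = sources.get(customer_id)
        if channel is None:
            continue  # skip customers we cannot match to a source
        totals[channel]["customers"] += 1
        totals[channel]["ltv_sum"] += ltv

    summary = {}
    for channel, t in totals.items():
        summary[channel] = {
            "customers": t["customers"],
            "avg_ltv": t["ltv_sum"] / t["customers"],
            "cac": spend.get(channel, 0.0) / t["customers"],
        }
    return summary


summary = channel_summary(crm_customers, acquisition_source, channel_spend)
for channel, stats in summary.items():
    print(f"{channel:<12} CAC ${stats['cac']:,.0f}  avg LTV ${stats['avg_ltv']:,.0f}")
```

Even a small matched sample like this lets you state acquisition cost and lifetime value side by side, which is the comparison executives ask for.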

Example:

- Old Way: “Conversion rate increased from 2.7% to 3.4% this quarter.”

- New Way: “Our SEO program is now your most cost-efficient customer acquisition channel, with customer acquisition costs 27% lower than paid search and 42% lower than social. Even better, these organic search customers have a 22% higher lifetime value, adding an additional $142,000 to your annual customer base value. This directly supports your stated Q1 objective of improving customer acquisition efficiency while maintaining growth.”

3. Top Performing Content

I remember when top-performing pages just meant a list of URLs with the most traffic.

Content isn’t just content anymore; it’s a collection of strategic assets with different roles in your business.

Some content drives revenue directly; some builds trust; some answers key questions that remove purchase barriers. Your reporting needs to reflect this.

Here’s how to report on this to move from a simple traffic list to a strategic analysis:

- Track content ROI by type: I’ve started categorizing content by purpose (consideration, conversion, retention) and tracking the ROI of each type. For one client, we found that their buying guides delivered five times the ROI of their how-to content, completely changing our content strategy.

- Face the AI reality: With Google’s AI Overviews (formerly Search Generative Experience, or SGE) and other AI systems affecting visibility, you need to show how your content performs in these environments. One trick: Track featured snippet capture rates alongside traditional rankings. For many queries, if you’re not in position zero, you’re invisible.

- Map the customer journey: Don’t just report which pages get traffic; show how different content types move people through the funnel.

- Quantify content gaps: When I find a competitor ranking for high-value terms we’re missing, I estimate the potential revenue based on search volume, our average conversion rates, and customer value. This turns content gaps from “maybe we should write about X” into “$125,000 annual revenue opportunities.”

Here’s my favorite method: Export GA4 landing page data with key event metrics, then join it with Google Search Console (GSC) query data to see which types of search intent drive the most value.

This often reveals surprising insights about what content actually drives business results versus what just gets traffic.
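One way to sketch that join in Python is below. Two assumptions are baked in and both are mine: intent is guessed from simple keyword markers, and each page’s revenue is split across its queries in proportion to clicks. Neither is a standard method, and the pages, queries, and numbers are hypothetical.

```python
from collections import defaultdict

# Hypothetical GA4 export: landing page -> revenue from organic sessions
page_revenue = {"/best-crm-tools": 9000.0, "/how-to-import-contacts": 1000.0}

# Hypothetical GSC export rows: (page, query, clicks)
gsc_rows = [
    ("/best-crm-tools", "best crm for small business", 300),
    ("/best-crm-tools", "what is a crm", 100),
    ("/how-to-import-contacts", "how to import contacts", 200),
]

# Simplistic marker rules, for illustration only
INTENT_RULES = [
    ("commercial", ("best", "vs", "review", "pricing")),
    ("informational", ("how to", "what is", "guide")),
]


def classify_intent(query):
    """Guess intent from whole-word markers in the query."""
    padded = f" {query.lower()} "
    for intent, markers in INTENT_RULES:
        if any(f" {marker} " in padded for marker in markers):
            return intent
    return "other"


def revenue_by_intent(revenue, rows):
    """Split each page's revenue across its queries by click share."""
    clicks_per_page = defaultdict(int)
    for page, _query, clicks in rows:
        clicks_per_page[page] += clicks

    totals = defaultdict(float)
    for page, query, clicks in rows:
        if page not in revenue or clicks_per_page[page] == 0:
            continue  # no revenue data or no clicks to apportion
        share = clicks / clicks_per_page[page]
        totals[classify_intent(query)] += revenue[page] * share
    return dict(totals)


intent_revenue = revenue_by_intent(page_revenue, gsc_rows)
print(intent_revenue)
```

The click-share split is crude, so I would present the output as directional rather than exact.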

Example:

- Old Way: “Your blog posts about [topic] received the most traffic this quarter.”

- New Way: “Your product comparison content delivers the highest ROI of all content investments at 382%, generating $93,500 in quarterly revenue while capturing 64% of available featured snippets in this category. Meanwhile, our analysis identified a strategic content gap in the [specific topic] area, representing a $125,000 annual revenue opportunity that your competitors are currently capitalizing on. I recommend we prioritize closing this gap in Q3.”

4. Technical Performance

I used to dread the technical SEO section of client reports.

Eyes would glaze over at the first mention of “crawl budget optimization” or “Core Web Vitals.” Then, I learned a simple trick: Translate everything into dollars and cents.

In most cases, clients don’t care about technical SEO. They care about making money and saving money. When you frame technical improvements in those terms, suddenly, everyone starts paying attention.

Here’s how to make technical SEO sexy (yes, it’s possible!):

- Connect speed to money: Stop reporting PageSpeed scores in isolation. Instead, show the revenue impact. Show a calculation demonstrating that even a minimal improvement in load time was worth $XXX, based on the resulting conversion rate lift. That will get their developer resources allocated quickly.

- Quantify technical debt: I’ve started putting actual dollar values on technical issues based on their estimated impact on search performance and conversions. Instead of an issue “severity” score, I now show “revenue at risk,” and it completely changes the conversation.

- Schema implementation as a revenue driver: For one retail client, adding product schema increased click-through rate (CTR) by 16% and drove a 7% increase in product page traffic value. When presented in revenue terms, they immediately asked how quickly we could expand this to all category pages.

- Mobile experience in dollars: With mobile now dominating search, any mobile experience gaps translate directly to lost revenue. Show the conversion rate difference between devices and calculate the revenue impact of closing that gap.

Here’s my favorite method: Join Screaming Frog’s crawl data with your analytics data to quantify the impact of technical issues.
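The device gap calculation mentioned above can be sketched in a few lines of Python. This is a simplified model that assumes mobile sessions and average order value stay constant as the gap closes; the function name, the parameters, and the sample numbers are hypothetical.

```python
def revenue_from_closing_gap(mobile_sessions, mobile_cr, desktop_cr,
                             avg_order_value, fraction_closed=1.0):
    """Estimate extra revenue if mobile conversion rate moves toward desktop's.

    fraction_closed is the share of the gap you expect to close (0.0 to 1.0).
    """
    gap = max(desktop_cr - mobile_cr, 0.0)  # no upside if mobile already leads
    extra_conversions = mobile_sessions * gap * fraction_closed
    return extra_conversions * avg_order_value


# Hypothetical quarter: 50,000 mobile sessions, 1.5% mobile vs. 2.5% desktop
# conversion rate, $80 average order, and half the gap closed
estimate = revenue_from_closing_gap(50_000, 0.015, 0.025, 80.0, fraction_closed=0.5)
print(f"${estimate:,.0f}")
```

Showing a conservative `fraction_closed` alongside the full-gap number keeps the estimate credible when a finance team pushes back.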

Example:

- Old Way: “Your mobile PageSpeed score improved from 72 to 92.”

- New Way: “Our Core Web Vitals optimization closed the mobile conversion gap by 18%, delivering an estimated $56,000 in additional quarterly revenue. This means our technical optimization work has already paid for itself 2.8 times over in just 90 days. Based on this ROI, I recommend we allocate resources to implement similar optimizations on the category pages next, which could unlock an additional $87,000 in annual revenue.”

5. Competitive Intelligence

Nothing motivates clients more than beating their competitors. Trust me on this.

I’ve seen lukewarm reactions to impressive performance improvements suddenly turn enthusiastic when I frame the same data in competitive terms.

There’s something about “we’re taking market share from Company X” that gets executives excited in a way that pure metrics never will.

Here’s how to transform competitive reporting from basic rank tracking to strategic intelligence:

- Think market share, not rankings: Track search visibility market share trends over time. This gives executives the big picture they care about.

- SERP feature strategy: Feature ownership has become critical. I track which competitors dominate different SERP features and develop strategies to capture these high-visibility positions.

- Topic authority positioning: Instead of thousands of keywords, I now organize reporting around key topic clusters and show authority positioning in each. This makes the competitive landscape much clearer and helps focus resources where they’ll have the biggest impact.

- Opportunity mining: My favorite approach is identifying where competitors are slipping. When I spot a competitor losing visibility in a valuable category, I quantify the revenue opportunity based on search volume and our conversion benchmarks. This creates clear, compelling opportunities.

- AI competitive intelligence: With AI reshaping search, I’ve added comparison metrics showing how often our content appears in AI-generated responses compared to competitors.
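If your rank tracker doesn’t report visibility market share directly, one rough way to approximate it is to weight each tracked keyword by search volume and an estimated CTR for the ranking position. The Python sketch below does that. The CTR curve, the domains, and the keywords are all hypothetical, and real CTR curves vary widely by query type and SERP layout.

```python
from collections import defaultdict

# Hypothetical CTR-by-position curve; positions not listed count as zero
CTR_BY_POSITION = {1: 0.30, 2: 0.15, 3: 0.10}

# Hypothetical rank-tracking rows:
# (keyword, monthly search volume, {domain: ranking position})
tracked_keywords = [
    ("crm software", 1000, {"us.example": 1, "rival.example": 2}),
    ("crm pricing", 500, {"us.example": 3, "rival.example": 1}),
]


def visibility_share(rows, ctr_curve):
    """Share of estimated clicks per domain across the tracked keyword set."""
    estimated_clicks = defaultdict(float)
    for _keyword, volume, positions in rows:
        for domain, position in positions.items():
            estimated_clicks[domain] += volume * ctr_curve.get(position, 0.0)

    total = sum(estimated_clicks.values())
    if total == 0:
        return {}
    return {domain: clicks / total for domain, clicks in estimated_clicks.items()}


share = visibility_share(tracked_keywords, CTR_BY_POSITION)
for domain, value in sorted(share.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{domain:<16} {value:.1%}")
```

Note that the share is relative to the domains and keywords you track, so keep that set consistent from report to report.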

Tip: Don’t just track competitive metrics – turn them into opportunity estimates.

When I find a competitor’s weakness, I calculate the potential value using: [Search Volume] × [Estimated CTR] × [Average Conversion Rate] × [Average Order Value].

This transforms competitive insights into concrete business opportunities.
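The formula above translates directly into a small Python function. The function name and the sample inputs are hypothetical. The result covers whatever period your search volume covers, so monthly volume gives a monthly estimate.

```python
def opportunity_value(search_volume, estimated_ctr, conversion_rate, avg_order_value):
    """[Search Volume] x [Estimated CTR] x [Avg Conversion Rate] x [Avg Order Value]."""
    return search_volume * estimated_ctr * conversion_rate * avg_order_value


# Hypothetical inputs: 10,000 monthly searches, 10% CTR,
# 2% conversion rate, $150 average order value
monthly_value = opportunity_value(10_000, 0.10, 0.02, 150.0)
annual_value = monthly_value * 12  # assumes volume is steady across the year
print(f"${monthly_value:,.0f} per month, ${annual_value:,.0f} per year")
```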

Example:

- Old Way: “We’re now ranking higher than Competitor A for these 28 keywords.”

- New Way: “Our search visibility market share has increased to 23% this quarter (+4% YoY) while Brand X has declined to 27% (-6% YoY), putting us on track to become the market leader by Q4. We’ve identified a significant opportunity in the [specific category] where Competitor B has unexpectedly lost 42% visibility. Based on search volume and our conversion benchmarks, this represents a $220,000 annual revenue opportunity we can capture with a targeted content and optimization strategy.”

6. AI Adaptation

AI is starting to disrupt our traditional world as SEO professionals. If you’re not talking about it in your reports, you’re doing your clients a disservice.

I remember the panic when SGE first rolled out, and clients started seeing their click data change.

Here’s how I approach the AI section of reports:

- Be honest about the zero-click reality: I start by acknowledging the elephant in the room. Yes, some traditional clicks are gone forever, but then, I pivot to what we’re doing about it.

- AI visibility tracking: If you’re not already using AI visibility tracking tools, start now. I like what Knowatoa and Nightwatch are both doing.

7. Strategic Recommendations

This is where you earn your money.

Anyone can present data. The real value comes from translating that data into action and showing the likely business outcomes.

This section is your chance to prove you’re not just an SEO technician but a strategic business partner.

I learned this the hard way. I once delivered a report with 27 detailed recommendations without any prioritization or impact estimates.

The client’s response? “This is overwhelming. Where do we even start?” Now, my approach is different.

Here’s how to make your recommendations section actually valuable today:

- Prioritize by ROI: No more than three to five key recommendations, ranked by projected return. I calculate the expected ROI for every suggestion and only present the highest-impact items.

- Size each opportunity in dollars: Executives speak the language of money. I estimate the revenue potential for each recommendation based on historical performance data. This transforms “we should do X” into “this $30,000 investment could generate $120,000 in annual revenue.”

- Get specific about resources: Vague recommendations get vague results. I specify exactly what resources are needed (developer hours, content creation time, etc.) and when. This prevents the “great idea, but we don’t have the resources” response.

- Connect to competitive pressure: When appropriate, I frame recommendations as competitive responses: “Company X is gaining visibility in this category; here’s how we counter their strategy.” This creates urgency and executive interest.

- Include AI strategies: With search changing, I now include specific recommendations for adapting to upcoming AI changes. This demonstrates foresight and positions you as strategic.
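The prioritization step can be sketched in Python as a simple rank-and-trim. The recommendation names and dollar figures below are hypothetical, apart from the $30,000 to $120,000 example used above, and projected revenue is whatever estimate you derive from historical performance.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    name: str
    investment: float                # estimated cost in dollars
    projected_annual_revenue: float  # estimate from historical performance

    @property
    def roi(self):
        """Projected return relative to investment."""
        return (self.projected_annual_revenue - self.investment) / self.investment


def top_recommendations(recommendations, limit=5):
    """Rank by projected ROI and keep only the highest-impact items."""
    ranked = sorted(recommendations, key=lambda r: r.roi, reverse=True)
    return ranked[:limit]


candidates = [
    Recommendation("Expand buying guides", 30_000, 120_000),
    Recommendation("Refresh how-to content", 10_000, 25_000),
    Recommendation("Roll out product schema", 5_000, 30_000),
    Recommendation("Redesign blog templates", 20_000, 22_000),
]

shortlist = top_recommendations(candidates, limit=3)
for rec in shortlist:
    print(f"{rec.name:<26} ROI {rec.roi:.0%}")
```

Everything that falls below the cutoff can go in an appendix, so the client sees only the three to five items that matter.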

A Final Note: Demonstrating SEO’s Strategic Value

The most effective SEO reports tell a business story that clearly demonstrates how your SEO efforts drive meaningful business outcomes.

By connecting SEO metrics to revenue, customer acquisition, and competitive advantage, you position yourself as a strategic business partner rather than just a tactical service provider.

When creating your reports, remember that consistency in tracking methodologies is essential for showing progress over time, while flexibility to address emerging opportunities is equally important.

Establish a baseline reporting framework that evolves with the changing search landscape while maintaining core business metrics that executives care about.

By focusing on business impact rather than technical metrics alone, you elevate SEO from a channel tactic to a strategic business asset that drives value.

Featured Image: tsyhun/Shutterstock