New Ecommerce Tools: February 6, 2025

We publish a rundown each week of new products from companies offering services to ecommerce merchants. This installment includes updates on AI agents, product pages, marketing models, point-of-sale solutions, payment platforms, and conversational commerce.

Got an ecommerce product release? Email releases@practicalecommerce.com.

New Tools for Merchants

Palona AI launches a platform for AI sales agents. Palona has released its suite of AI tools to accelerate the growth of direct-to-consumer and other consumer-facing businesses. The company also announced $10 million in seed funding from investors, including UpHonest Capital, Fusion Fund, Maynard Webb, NEO Investment Partners, and others. Palona’s mission is to give customers AI they can trust with key parts of any business: their brand, customer relationships, and sales.

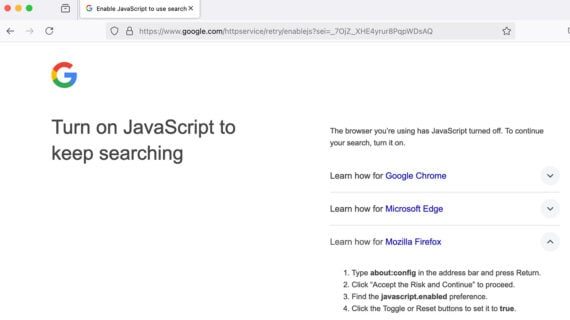

Google launches an open-source marketing mix model. Google has launched Meridian, an open-source marketing mix model available to all marketers and data scientists. Google has also introduced a partner program with over 20 certified measurement partners. Meridian analyzes campaign results based on a business’s key performance indicators, such as sales, website visits, profit, and conversions.

Poshmark marketplace debuts Smart List AI. Poshmark, a fashion resale marketplace, has unveiled Smart List AI to streamline the listing process with the power of generative AI. According to Poshmark, Smart List AI acts as a co-pilot for sellers. It uses artificial intelligence to generate listing details across any category and department from a single photo. The AI also automates the creation of key listing elements such as title, description, and category.

Paymob partners with Woo to help MENA merchants. Paymob, a financial services provider in the Middle East and North Africa, has partnered with Woo, the open-source ecommerce platform. Using Paymob, WooCommerce merchants can integrate over 50 global and local payment methods, including Apple Pay, Google Pay, and regional alternatives. According to Paymob, the integration aims to simplify the checkout process by providing a secure and seamless experience with embedded 3D Secure and PCI compliance.

Qeen.ai raises $10 million to provide AI agents for ecommerce businesses. Qeen.ai, a provider of AI tools for ecommerce, has raised $10 million in funding, led by Prosus Ventures, with participation from existing investors, including Wamda Capital, 10x Founders, and Dara Holdings. Qeen.ai develops AI agents that autonomously execute tasks and optimize outcomes based on observed user behavior. Ecommerce businesses can leverage Qeen.ai’s agents to carry out functions such as content creation, marketing, and conversational sales.

Vengo AI partners with AppSumo to launch an AI sales platform. Vengo AI, a platform for customizable AI sales agents, is now a featured partner on the AppSumo marketplace. Vengo AI says it enables businesses to create AI sales agents that reflect their unique voice and branding, available 24/7 through text and voice. Businesses can add Vengo AI to any website or platform to capture and track customer data.

Alloy.ai and CloudPaths partner on supply chain planning for consumer products. Alloy.ai, a data integration and retail analytics platform for consumer brands, has joined forces with CloudPaths, an SAP partner, to offer supply chain planning, guidance, and implementation. According to the companies, the partnership enables consumer product businesses to transform their supply chain and planning by combining CloudPaths’ SAP expertise with Alloy’s real-time data integration platform.

Kepler launches AI-powered tools for ecommerce product pages. Kepler, a digital marketing agency, has launched PDP+ for ecommerce product pages. Kepler’s AI engine optimizes product-page content, identifying high-performing keywords, restructuring titles for better discoverability, and prioritizing product features based on consumer search patterns. Kepler’s AI-powered engine elevates basic product photography, turning standard background shots into immersive imagery and video. A team of brand and creative experts crafts and reviews the enhancements, Kepler says.

Bookshop.org launches an ebook platform for indie bookstores. Bookshop.org, a site promoting independent bookstores, has launched a platform enabling independent bookstores to sell ebooks. According to Bookshop.org, the digital books initiative enables local bookstores to sell digital products and retain 100% of the profits. The platform, available through Bookshop.org, launches with 3 million ebooks.

Moniepoint tests a POS that manages inventory. Moniepoint, a digital payment company, is testing a point-of-sale service that combines payment and transaction processing with inventory management. Following its acquisition of Grocel, a provider of inventory management tools, Moniepoint has integrated Grocel’s features into its POS terminals. Moniepoint’s new integrated POS will manage the combination of business processes and record keeping.

Gallabox raises $3.5 million for AI-driven business on WhatsApp. Gallabox, a no-code conversational commerce platform, has raised $3.5 million to address how businesses leverage WhatsApp for marketing and sales. The seed round was led by Fuse, with participation from existing investors Prime Venture Partners and Neon Fund. Gallabox says its platform for WhatsApp automation enables businesses to create AI chatbots for lead qualification, deploy drip marketing campaigns, and manage team collaboration through shared inboxes.