The Download: inside the Vitalism movement, and why AI’s “memory” is a privacy problem

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology.

Meet the Vitalists: the hardcore longevity enthusiasts who believe death is “wrong”

Last April, an excited crowd gathered at a compound in Berkeley, California, for a three-day event called the Vitalist Bay Summit. It was part of a longer, two-month residency that hosted various events to explore tools—from drug regulation to cryonics—that might be deployed in the fight against death.

One of the main goals, though, was to spread the word of Vitalism, a somewhat radical movement established by Nathan Cheng and his colleague Adam Gries a few years ago. Consider it longevity for the most hardcore adherents—a sweeping mission to which nothing short of total devotion will do.

Although interest in longevity has certainly taken off in recent years, not everyone in the broader longevity space shares Vitalists’ commitment to actually making death obsolete. And the Vitalists feel that momentum is building, not just for the science of aging and the development of lifespan-extending therapies, but for the acceptance of their philosophy that defeating death should be humanity’s top concern. Read the full story.

—Jessica Hamzelou

This is the latest in our Big Story series, the home for MIT Technology Review’s most important, ambitious reporting. You can read the rest of the series here.

What AI “remembers” about you is privacy’s next frontier

—Miranda Bogen, director of the AI Governance Lab at the Center for Democracy & Technology, & Ruchika Joshi, fellow at the Center for Democracy & Technology specializing in AI safety and governance

The ability to remember you and your preferences is rapidly becoming a big selling point for AI chatbots and agents.

Personalized, interactive AI systems are built to act on our behalf, maintain context across conversations, and improve our ability to carry out all sorts of tasks, from booking travel to filing taxes.

But their ability to store and retrieve increasingly intimate details about their users over time introduces alarming, and all-too-familiar, privacy vulnerabilities––many of which have loomed since “big data” first teased the power of spotting and acting on user patterns. Worse, AI agents now appear poised to plow through whatever safeguards had been adopted to avoid those vulnerabilities. So what can developers do to fix this problem? Read the full story.

How the grid can ride out winter storms

The eastern half of the US saw a monster snowstorm over the weekend. The good news is the grid has largely been able to keep up with the freezing temperatures and increased demand. But there were some signs of strain, particularly for fossil-fuel plants.

One analysis found that PJM, the nation’s largest grid operator, saw significant unplanned outages in plants that run on natural gas and coal. Historically, these facilities can struggle in extreme winter weather.

Much of the country continues to face record-low temperatures, and the possibility is looming for even more snow this weekend. What lessons can we take from this storm, and how might we shore up the grid to cope with extreme weather? Read the full story.

—Casey Crownhart

This article is from The Spark, MIT Technology Review’s weekly climate newsletter. To receive it in your inbox every Wednesday, sign up here.

The must-reads

I’ve combed the internet to find you today’s most fun/important/scary/fascinating stories about technology.

1 Telegram has been flooded with deepfake nudes

Millions of users are creating and sharing falsified images in dedicated channels. (The Guardian)

2 China has executed 11 people linked to Myanmar scam centers

The members of the “Ming family criminal gang” caused the death of at least 14 Chinese citizens. (Bloomberg $)

+ Inside a romance scam compound—and how people get tricked into being there. (MIT Technology Review)

3 This viral personal AI assistant is a major privacy concern

Security researchers are sounding the alarm on Moltbot, formerly known as Clawdbot. (The Register)

+ It requires a great deal more technical know-how than most agentic bots. (TechCrunch)

4 OpenAI has a plan to keep bots off its future social network

It’s putting its faith in biometric “proof of personhood” promised by the likes of World’s eyeball-scanning orb. (Forbes)

+ We reported on how World recruited its first half a million test users back in 2022. (MIT Technology Review)

5 Here’s just some of the technologies ICE is deploying

From facial recognition to digital forensics. (WP $)

+ Agents are also using Palantir’s AI to sift through tip-offs. (Wired $)

6 Tesla is axing its Model S and Model X cars

Its Fremont factory will switch to making Optimus robots instead. (TechCrunch)

+ It’s the latest stage of the company’s pivot to AI… (FT $)

+ …as profit falls by 46%. (Ars Technica)

+ Tesla is still struggling to recover from the damage of Elon Musk’s political involvement. (WP $)

7 X is rife with weather influencers spreading misinformation

They’re whipping up hype ahead of massive storms hitting. (New Yorker $)

8 Retailers are going all-in on AI

But giants like Amazon and Walmart are taking very different approaches. (FT $)

+ Mark Zuckerberg has hinted that Meta is working on agentic commerce tools. (TechCrunch)

+ We called it—what’s next for AI in 2026. (MIT Technology Review)

9 Inside the rise of the offline hangout

No phones, no problem. (Wired $)

10 Social media is obsessed with 2016

…why, exactly? (WSJ $)

Quote of the day

“The amount of crap I get for putting out a hobby project for free is quite something.”

—Peter Steinberger, the creator of the viral AI agent Moltbot, complains about the backlash his project has received from security researchers pointing out its flaws in a post on X.

One more thing

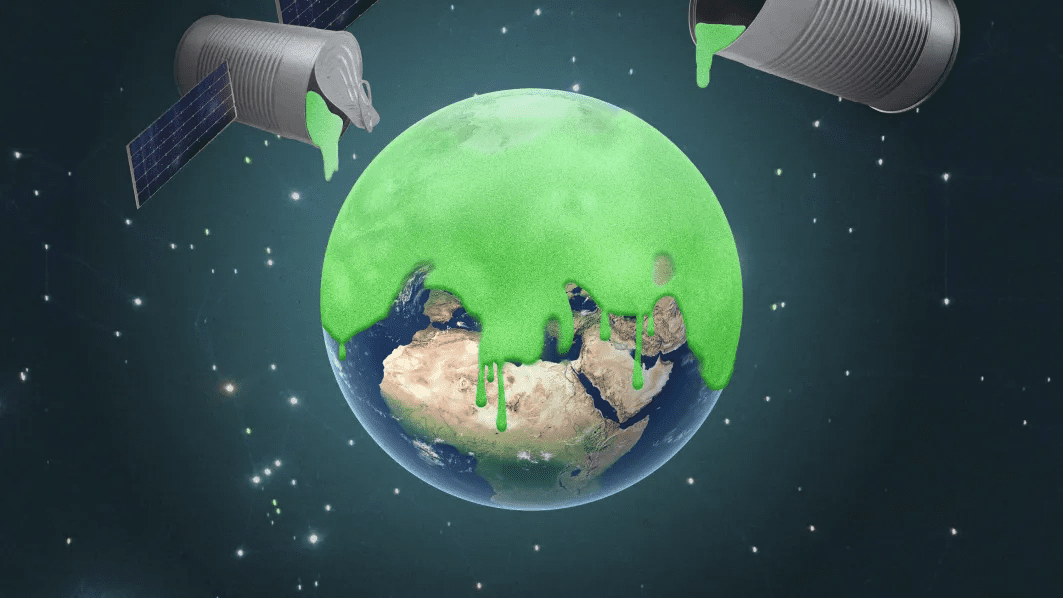

The flawed logic of rushing out extreme climate solutions

Early in 2022, entrepreneur Luke Iseman says, he released a pair of sulfur dioxide–filled weather balloons from Mexico’s Baja California peninsula, in the hope that they’d burst miles above Earth.

It was a trivial act in itself, effectively a tiny, DIY act of solar geoengineering, the controversial proposal that the world could counteract climate change by releasing particles that reflect more sunlight back into space.

Entrepreneurs like Iseman invoke the stark dangers of climate change to explain why they do what they do—even if they don’t know how effective their interventions are. But experts say that urgency doesn’t create a social license to ignore the underlying dangers or leapfrog the scientific process. Read the full story.

—James Temple

We can still have nice things

A place for comfort, fun and distraction to brighten up your day. (Got any ideas? Drop me a line or skeet ’em at me.)

+ The hottest thing in art right now? Vertical paintings.

+ There’s something in the water around Monterey Bay—a tail walking dolphin!

+ Fed up of hairstylists not listening to you? Remember these handy tips the next time you go for a cut.

+ Get me a one-way ticket to Japan’s tastiest island.