The digital advertising landscape is constantly changing, and a recent announcement from Google has shifted things yet again.

On July 22, 2024, Google made a surprising U-turn on its long-standing plan to phase out third-party cookies in Chrome.

This decision comes after years of back-and-forth between Google, regulatory bodies, and the advertising industry.

Advertisers have relied on third-party cookies (small pieces of data stored in users' browsers by domains other than the site being visited) to track online behaviour, build detailed user profiles, and serve targeted ads across the web.

The initial plan to remove these cookies was driven by growing privacy concerns and regulations such as Europe’s General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA) in the US.

However, Google's recent announcement doesn't mean the cookieless future has been permanently averted. Instead, it signals a more gradual, user-choice-driven transition that keeps cookies around a little longer.

Google now plans to introduce a new experience in Chrome that will enable users to make informed choices about their web browsing privacy, which they can adjust at any time, thus giving control back to the user.

This change in approach emphasizes the ongoing tension between privacy concerns and the need for effective digital advertising.

While third-party cookies may stick around longer than initially expected, the trend toward more privacy-focused solutions continues. As such, it’s crucial for businesses running PPC campaigns to stay informed and adaptable.

In this article, we’ll examine the debate surrounding the elimination of cookies for enhanced privacy, explore the potential alternatives to third-party cookies, and discuss how these changes might shape the future of PPC campaigns in an evolving digital landscape.

Should We Get Rid Of Cookies For Enhanced Privacy?

The digital advertising industry has been debating this question for years.

Despite Google’s recent decision to keep third-party cookies in Chrome, the overall direction of the industry is moving towards more privacy-focused solutions.

Other major browsers, including Safari and Firefox, have already implemented restrictions on third-party cookies, underlining the industry trend toward increased privacy for users.

Of course, whether cookieless is the best path to greater privacy is still debated.

On the one hand, removing third-party cookies would reduce some forms of tracking. On the other hand, it could spur the development of arguably even more invasive tracking methods, such as browser fingerprinting.

Cookies also serve user-friendly purposes, such as storing login information and user preferences.

As the industry continues to debate these questions, one thing is clear: the future of digital advertising will be a dance between user privacy and effective ad targeting.

Whatever the case, only time will tell whether the answer lies in accepting the eventual phase-out of third-party cookies or in developing new technologies that make privacy protection user-friendly.

What Options Are There To Replace Third-Party Cookies?

The urgency to find replacements eased after Google announced that Chrome would retain third-party cookies while adding more controls for users.

However, Google is still moving forward with its Privacy Sandbox initiative, which aims to develop privacy-preserving alternatives to third-party cookies.

The Privacy Sandbox is a collective name given to ongoing collaborative efforts to create new technologies designed to protect user privacy while ensuring digital ads are as effective as possible.

Over time, Google has announced a raft of APIs under this umbrella, including the Topics API, Protected Audience API, and Attribution Reporting API.

These technologies are designed to offer a subset of the functionality of third-party cookies in a far more privacy-friendly manner.

While Google decided to retain third-party cookies for the time being, it is worth noting that the company is still investing in these alternative technologies.

This reflects the long-term trend toward a more privacy-centric web, even if the transition is happening more slowly than initially planned.

In mid-2023, Google announced the release of six new APIs for Chrome version 115, designed to replace some functionalities of third-party cookies:

- The Topics API lets the browser share broad interest categories, or “topics,” that users care about with the sites they visit, so ads can be chosen without tracking users individually. For example, topics could include fitness, travel, and books and literature.

- Protected Audience API enables interest-based advertising by allowing an “interest group owner” to ask a user’s Chrome browser to add a membership for a specific interest group.

- Attribution Reporting API helps advertisers measure which ads lead to conversions without revealing individual user data.

- Private Aggregation API produces aggregated reports from cross-site data used in Protected Audience and Shared Storage, similar to Attribution Reporting.

- Shared Storage API allows advertisers to show relevant ads without accessing visitors’ personal information.

- Fenced Frames API enables websites to display ads in a privacy-safe manner without tracking or collecting visitor information.
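To make the Topics idea more concrete, here is a toy Python model of how a browser might turn weekly browsing into a handful of coarse labels. It is a simplified sketch under stated assumptions, loosely following Google's public description (weekly "epochs," the top five topics per epoch, at most one topic from each of the last three epochs, and a small chance of a random topic). The five-item taxonomy and the function names are hypothetical; the real API is called from JavaScript inside Chrome, uses a taxonomy of several hundred topics, and applies further rules this sketch omits.

```python
import random
from collections import Counter

# Hypothetical, tiny stand-in for Chrome's real taxonomy (several hundred topics).
TAXONOMY = ["Fitness", "Travel", "Books & Literature", "Cooking", "Autos"]


def top_topics(epoch_visits: list[str], k: int = 5) -> list[str]:
    """Return up to k of the most frequent topics observed in one epoch (a week)."""
    return [topic for topic, _ in Counter(epoch_visits).most_common(k)]


def browsing_topics(epochs: list[list[str]], rng: random.Random,
                    random_rate: float = 0.05) -> list[str]:
    """Toy model: choose at most one topic from each of the last three epochs.

    With probability `random_rate`, a random taxonomy topic replaces the real
    one, so no single returned topic can be trusted as a true interest.
    """
    chosen = []
    for visits in epochs[-3:]:
        top = top_topics(visits)
        if not top:
            continue  # nothing observed in this epoch
        if rng.random() < random_rate:
            chosen.append(rng.choice(TAXONOMY))
        else:
            chosen.append(rng.choice(top))
    return sorted(set(chosen))  # only coarse labels are shared, never the visit history


# Each inner list holds the topics inferred from one week of browsing.
history = [
    ["Fitness", "Fitness", "Travel"],
    ["Books & Literature", "Travel"],
    ["Fitness", "Cooking"],
    ["Autos"],
]
print(browsing_topics(history, random.Random(42)))
```

The point of the design is that an ad tech caller receives a few broad labels rather than a list of sites, and the random substitution gives every user plausible deniability for any single topic.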

It’s important to note that these APIs are still evolving, and more may be developed in the future.

The UK’s Competition and Markets Authority (CMA) has raised concerns about various aspects of these APIs, including user consent interfaces, the potential for abuse, and impacts on competition in the digital advertising market.

As a digital marketer, it’s crucial to stay informed about these developments and be prepared to adapt your strategies as these new technologies roll out.

While they aim to provide privacy-friendly alternatives to third-party cookies, they will likely require new approaches to targeting, measuring, and optimizing your PPC campaigns.

First-Party Data

As third-party cookies slowly become a thing of the past, first-party data becomes increasingly important. First-party data is information you collect directly from your audience or customers, such as:

- Website or app usage patterns.

- Purchase history.

- Email newsletter subscriptions.

- Customer feedback forms and online surveys.

- Social media engagement with your brand.

When it is collected with users' consent, first-party data is easier to use in compliance with privacy regulations.

It also provides direct insights into your customers and their interactions with your brand, enabling more accurate and relevant targeting.

Alternative Tracking Methods

As the industry moves away from third-party cookies, several new tracking and measurement methods are emerging:

Consent Mode V2: A feature that adjusts Google tags based on user consent choices. When a user doesn’t consent to cookies, Consent Mode automatically adapts tag behavior to respect the user’s preference while still providing some measurement capabilities. This approach gives users more control over their data and its use, balancing user privacy and advertisers’ data needs.
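As an illustration of the consent signals involved, the sketch below maps a user's cookie-banner choices to the four consent types Consent Mode v2 works with (`ad_storage`, `analytics_storage`, and the two v2 additions, `ad_user_data` and `ad_personalization`) and renders the corresponding `gtag('consent', 'default' | 'update', {...})` call, for example from a server-rendered page. The helper names and the banner-choice dictionary are hypothetical, and Python is used only to keep this article's examples in one language; in practice, a consent management platform usually emits these calls for you.

```python
import json

# Consent types used by Consent Mode v2; ad_user_data and ad_personalization
# were the two added in v2.
CONSENT_TYPES = ("ad_storage", "analytics_storage", "ad_user_data", "ad_personalization")


def consent_state(choices: dict[str, bool]) -> dict[str, str]:
    """Map banner choices to Consent Mode values, defaulting every type to 'denied'."""
    return {t: "granted" if choices.get(t, False) else "denied" for t in CONSENT_TYPES}


def gtag_consent_snippet(command: str, choices: dict[str, bool]) -> str:
    """Render a gtag('consent', ...) call; command must be 'default' or 'update'."""
    if command not in ("default", "update"):
        raise ValueError("command must be 'default' or 'update'")
    payload = json.dumps(consent_state(choices))  # JSON is also a valid JS object literal
    return f"gtag('consent', '{command}', {payload});"


# Before the user decides: deny everything.
print(gtag_consent_snippet("default", {}))
# After the user accepts analytics cookies only.
print(gtag_consent_snippet("update", {"analytics_storage": True}))
```

Defaulting every type to `denied` until the user chooses is the conservative design: tags then adapt their behavior instead of setting cookies before consent is given.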

Enhanced Conversions: Implementing this improves conversion measurement accuracy using first-party data. It uses hashed customer data like email addresses to connect online activity with actual conversions, even when cookies are limited. By utilizing secure hashing to protect user data while improving measurement, Enhanced Conversions offers a privacy-focused solution for tracking conversions.
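The hashing step behind Enhanced Conversions can be sketched in a few lines of Python. This assumes the common normalization of trimming whitespace and lowercasing an email address before applying SHA-256 and hex-encoding the result; Google's formatting guidelines include additional rules for some fields, so check the current documentation before relying on this. The function names are hypothetical.

```python
import hashlib


def normalize_email(email: str) -> str:
    """Trim surrounding whitespace and lowercase the address before hashing."""
    return email.strip().lower()


def hash_email(email: str) -> str:
    """Return the hex-encoded SHA-256 digest of the normalized email."""
    return hashlib.sha256(normalize_email(email).encode("utf-8")).hexdigest()


print(hash_email("  Jane.Doe@Example.com "))
```

Normalizing first matters because hashing is exact: `" Jane@Example.com"` and `"jane@example.com"` would otherwise produce different digests and fail to match.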

Server-Side Tracking: This method sends data from the user's browser to a server you control, which processes it and then forwards it to analytics and advertising platforms. Instead of running multiple third-party tracking pixels or scripts in the user's browser, data is collected and processed on the server side. This method reduces user data exposure in the browser, improving security and website performance while allowing for effective tracking.
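One practical benefit of the server-side approach is data minimization: your server decides exactly what reaches each vendor. The sketch below assumes a hypothetical event format and allow-list; it keeps only approved fields and reduces the page URL to its path, so query-string parameters (which sometimes carry emails or IDs) never leave your server.

```python
from urllib.parse import urlsplit

# Hypothetical allow-list of fields that may be forwarded to ad or analytics vendors.
ALLOWED_FIELDS = ("event_name", "value", "currency")


def minimize_event(raw_event: dict) -> dict:
    """Return a vendor-safe copy of an event received from the browser."""
    clean = {k: raw_event[k] for k in ALLOWED_FIELDS if k in raw_event}
    if "page_url" in raw_event:
        # Keep the path only; drop the query string and fragment.
        clean["page_path"] = urlsplit(raw_event["page_url"]).path
    return clean


event = {
    "event_name": "purchase",
    "value": 49.99,
    "currency": "USD",
    "page_url": "https://shop.example/checkout/thanks?email=jane%40example.com",
    "ip_address": "203.0.113.7",
    "user_agent": "Mozilla/5.0",
}
print(minimize_event(event))
# {'event_name': 'purchase', 'value': 49.99, 'currency': 'USD', 'page_path': '/checkout/thanks'}
```

An allow-list is safer than a block-list here: any new field added to browser events is dropped by default until someone deliberately approves it.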

Customer Lists: This utilizes first-party data for audience targeting and remarketing. Advertisers can upload hashed lists of customer information, like email addresses, to platforms for targeting or measurement purposes. This approach relies on data that customers have directly provided to the business rather than third-party tracking, making it a more privacy-conscious method of audience targeting.
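Preparing a customer list for upload usually means cleaning, deduplicating, and hashing the data first. The sketch below assumes emails are normalized by trimming and lowercasing, then SHA-256 hashed, and writes a one-column CSV. The `Email` column header is an assumption; match it to the template your ad platform currently provides.

```python
import csv
import hashlib
import io


def hashed_customer_list(emails: list[str]) -> list[str]:
    """Normalize, deduplicate, and SHA-256-hash emails for a customer list upload."""
    seen = set()
    hashes = []
    for email in emails:
        normalized = email.strip().lower()
        if not normalized or normalized in seen:
            continue  # skip blank rows and duplicates
        seen.add(normalized)
        hashes.append(hashlib.sha256(normalized.encode("utf-8")).hexdigest())
    return hashes


def to_csv(hashes: list[str], header: str = "Email") -> str:
    """Write the hashes as a one-column CSV string (header name is an assumption)."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow([header])
    writer.writerows([h] for h in hashes)
    return buffer.getvalue()


crm_export = ["Jane@Example.com", " jane@example.com", "", "sam@example.org"]
print(to_csv(hashed_customer_list(crm_export)))
```

Deduplicating after normalization, rather than before, catches entries that differ only in case or stray spaces.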

Offline Conversion Tracking: OCT connects online ad interactions with offline conversions. It uses unique identifiers to link clicks on online ads to offline actions such as phone calls or in-store purchases. This method provides a more holistic view of the customer journey without relying on extensive online tracking, bridging the gap between digital advertising and real-world conversions.
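With Google Ads, a common unique identifier for this is the `gclid` parameter that auto-tagging appends to landing-page URLs. The sketch below captures it at click time and later builds an upload row once an offline sale closes. The column names mirror Google Ads' offline conversion import template as we understand it and should be checked against the current template; the lead record and conversion name are hypothetical.

```python
from datetime import datetime, timezone
from typing import Optional
from urllib.parse import parse_qs, urlsplit


def extract_gclid(landing_url: str) -> Optional[str]:
    """Return the gclid parameter that Google Ads auto-tagging appends to ad clicks."""
    values = parse_qs(urlsplit(landing_url).query).get("gclid")
    return values[0] if values else None


def offline_conversion_row(gclid: str, conversion_name: str, when: datetime,
                           value: float, currency: str) -> dict[str, object]:
    """Build one upload row; column names are assumptions to check against the template."""
    return {
        "Google Click ID": gclid,
        "Conversion Name": conversion_name,
        "Conversion Time": when.strftime("%Y-%m-%d %H:%M:%S%z"),
        "Conversion Value": value,
        "Conversion Currency": currency,
    }


# 1. At click time, store the gclid with the lead record (e.g. in your CRM).
lead = {"name": "Jane", "gclid": extract_gclid("https://shop.example/quote?gclid=EAIaIQ-example")}

# 2. When the sale later closes by phone or in store, build the upload row.
sale_time = datetime(2024, 8, 1, 14, 30, tzinfo=timezone.utc)
print(offline_conversion_row(lead["gclid"], "Phone sale", sale_time, 250.0, "USD"))
```

The key step is storing the identifier with the lead as soon as it arrives; without it, there is nothing to join the offline sale back to.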

Small businesses, which tend to be adaptable, are well placed to navigate these changes.

Though no single method is a perfect replacement for third-party cookies, together these alternatives can supply similar functionality for advertisers while addressing the privacy concerns that prompted plans to deprecate them.

Advertisers are likely to need this combination of methods to achieve desired advertising and measurement goals in the era beyond cookies.

Long-Term Strategies For Small Businesses

1. First-Party Data Collection Strategy

Shift your focus to collecting data directly from your customers:

- Add email sign-up forms to your website.

- Create loyalty programs or share valuable content in return for information about your customers.

- Use tools like Google Analytics to track how users interact with your website.

- Run customer feedback surveys to understand how customers view your business and to learn more about them.

This process only succeeds if you build trust:

- Be open and transparent about how you collect and use customers' data.

- Clearly communicate the value customers get in return for their information.

- Give customers an easy way to opt out. Customers must have control over their data.

- Provide regular training to raise employee awareness about privacy regulations and best practices for handling customer data.

Invest in a robust CRM system to help organize and manage first-party data effectively.

2. Diversify Your Marketing Channels

Businesses shouldn't keep all their eggs in one basket.

PPC will always have a place, but in light of these changes, it is important to diversify your marketing efforts across channels.

Diversification allows you to reach customers through numerous touchpoints and reduces your reliance on any single platform or technology.

Remember that the rule of seven states that a prospect needs to “hear” (or see) the brand’s message at least seven times before they take action to buy that product or service.

3. Embrace Contextual Targeting

Contextual targeting displays ads based on the content of a webpage rather than on user profiles. To use this approach:

- Choose relevant, meaningful keywords and topics aligned with your products or services.

- Choose placements your target audience is most likely to visit.

- Produce ad creatives tailored to each context to increase relevance.

Pros Of Contextual Targeting

- Privacy-friendly since it does not utilize personal data.

- When done well, it can be highly effective because it reaches people who are actively engaging with related subjects.

Cons Of Contextual Targeting

- Targeting may be less precise than audience-based targeting methods.

- Requires planning and analysis of content.
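The keyword-matching idea at the heart of contextual targeting can be illustrated with a toy scorer that counts how often each ad group's keywords appear on a page. This is a deliberately simple sketch; the ad groups, keywords, and `min_hits` threshold are hypothetical, and real ad platforms classify page content with far more sophisticated methods.

```python
import re
from collections import Counter
from typing import Optional

# Hypothetical ad groups and the keywords that signal a matching page context.
AD_GROUPS = {
    "running_shoes": {"running", "marathon", "trainers", "jogging"},
    "travel_bags": {"luggage", "travel", "backpack", "flight"},
}


def best_ad_group(page_text: str, min_hits: int = 2) -> Optional[str]:
    """Return the ad group whose keywords appear most often on the page, or None."""
    words = Counter(re.findall(r"[a-z]+", page_text.lower()))
    scores = {group: sum(words[k] for k in keywords) for group, keywords in AD_GROUPS.items()}
    group, score = max(scores.items(), key=lambda item: item[1])
    return group if score >= min_hits else None  # too few signals: show no contextual ad


print(best_ad_group("Marathon training plan: choose running shoes and start jogging"))
```

The `min_hits` threshold reflects the planning cost noted above: a page with only a passing mention of a topic is usually a weak placement.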

4. Use Tracking Solutions With A Focus On Privacy

Next comes server-side tracking and conversion APIs (refer to this article’s Alternative Tracking Methods section for more information). These methods shift data collection from the user’s browser to your server.

Pros

- Improved data accuracy: Server-side tracking can capture events that client-side tracking might miss due to ad blockers or browser restrictions.

- Cross-device tracking capabilities: Server-side solutions can more easily track user interactions across different devices and platforms.

- Future-proofing: As browser restrictions on cookies and client-side tracking increase, server-side solutions will likely remain more stable and effective in the long term.

- Ability to enrich data: Server-side tracking allows data integration from multiple sources before sending it to analytics platforms, potentially providing richer insights.

Cons

- Increased complexity: Server-side tracking and conversion APIs are more technically complex than traditional client-side methods, potentially requiring specialized skills or resources to implement and maintain.

- Potential latency issues: Server-side tracking may introduce slight delays in data processing, which could impact real-time analytics or personalization efforts.

- Ongoing maintenance: Server-side solutions often require more regular updates and maintenance to ensure they remain effective and compliant with evolving privacy regulations.

If these solutions are too technical for your team, consider partnering with a developer or an agency to implement them.

5. Invest In Creative Optimization

With reduced accuracy in targeting, your ad creative is more crucial than ever:

- Design creative, eye-catching visuals.

- Write bold, clear ad copy that delivers your value proposition quickly.

- Test different ad formats to find out which ones resonate with your audience.

- Run A/B tests on ad variations, such as images, headlines, or CTAs.
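When an A/B test compares click-through rates, a two-proportion z-test is one standard way to judge whether the gap between two ad variations is likely to be real or just noise. The sketch below implements that test using only the Python standard library; the function name and the example figures are hypothetical.

```python
import math


def ctr_z_test(clicks_a: int, impressions_a: int,
               clicks_b: int, impressions_b: int) -> tuple[float, float]:
    """Two-proportion z-test comparing the CTRs of ad A and ad B.

    Returns (z, two_sided_p_value), using the pooled CTR under the null
    hypothesis that both ads share the same true click-through rate.
    """
    p_a = clicks_a / impressions_a
    p_b = clicks_b / impressions_b
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all (e.g. zero clicks): nothing to compare
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: headline B got 150 clicks vs. 100 for A, each on 10,000 impressions.
z, p = ctr_z_test(100, 10_000, 150, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

Pick the significance threshold (commonly 0.05) before the test starts, and avoid stopping the moment the result first looks significant, since repeated peeking inflates false positives.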

6. Embrace Privacy-First Solutions

Track the numerous efforts underway within Google’s Privacy Sandbox and other fast-developing privacy-centric solutions.

Be prepared to test these tools and to scale up their adoption upon release to stay ahead of the curve.

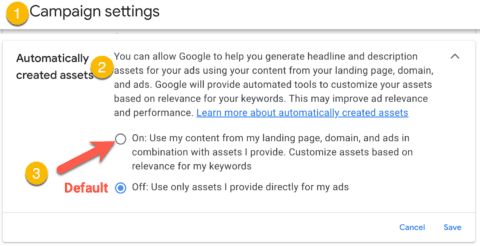

For now, enable Enhanced Conversions in Google Ads to get a more accurate picture of your return on ad spend (ROAS) using hashed first-party data.
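ROAS itself is a simple ratio: conversion value attributed to ads divided by ad spend. The sketch below shows how conversions that would otherwise go unmeasured change the reported figure. The numbers are purely hypothetical and are not a claim about the uplift Enhanced Conversions typically delivers.

```python
def roas(conversion_value: float, ad_spend: float) -> float:
    """Return on ad spend: conversion value attributed to ads divided by ad cost."""
    if ad_spend <= 0:
        raise ValueError("ad_spend must be positive")
    return conversion_value / ad_spend


# Hypothetical campaign: same spend, before and after more conversions are matched.
spend = 1_000.0
print(roas(3_000.0, spend))  # 3.0
print(roas(3_600.0, spend))  # 3.6
```

The spend is unchanged in both cases; only the measured conversion value differs, which is why better measurement can shift budget decisions even when actual performance stays the same.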

7. Train And Educate Employees

Provide continuous training to your workforce:

- Educate your employees about data privacy and security.

- Keep them updated with all the latest privacy regulations and their impact on businesses.

- Conduct training on best practices in collecting, storing, and using customer data.

- Embed a culture of privacy awareness across the organization.

8. Collaborate With Experts

Navigating a cookieless future can be tricky.

A PPC agency or consultant can help you keep up with the latest changes and best practices, implement advanced tracking and targeting solutions, and optimize your campaigns in this new landscape.

When choosing an agency:

- Check for experience in privacy-first campaigns.

- Ask about their approach to first-party data and alternative targeting methods.

- Look for a track record of adapting to industry changes.

Start Now And Be Flexible As Digital Advertising Changes

Google’s decision to keep third-party cookies in Chrome while adding more user controls represents a significant shift in the digital advertising landscape.

While this move gives advertisers who rely heavily on third-party cookies some breathing room, it doesn't change the overall trend toward user privacy and control over personal data.

The strategies outlined in this article – focusing on first-party data collection, diversifying marketing channels, embracing contextual targeting, and investing in privacy-focused solutions – remain relevant for long-term success in digital advertising.

These approaches will help you navigate the current landscape and prepare you for a future where user privacy is increasingly prioritized.

Yes, third-party cookies are sticking around longer than initially expected, but the push to find more privacy-friendly advertising solutions continues.

By implementing these strategies now, you’ll be better positioned to adapt to further changes down the road, whether they come from regulatory bodies, browser policies, or changing consumer expectations.

The time to start future-proofing is now. Start by auditing your existing strategies, building first-party data assets, and testing new targeting and measurement capabilities.

Stay informed about developments in privacy-preserving technologies like Google’s Privacy Sandbox, and be prepared to test and implement these new tools when they become available.

Taking a proactive, strategic approach that puts users' privacy and trust first will help your PPC campaigns continue to thrive.

The future of digital advertising may be uncertain, but with the appropriate strategies and respect for users' privacy, you can turn these challenges into opportunities for growth and innovation.


Featured Image: BestForBest/Shutterstock