The Download: what’s next for electricity, and living in the conspiracy age

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology.

Three things to know about the future of electricity

The International Energy Agency recently released the latest version of the World Energy Outlook, the annual report that takes stock of the current state of global energy and looks toward the future.

It contains some interesting insights and a few surprising figures about electricity, grids, and the state of climate change. Let’s dig into some numbers.

—Casey Crownhart

This article is from The Spark, MIT Technology Review’s weekly climate newsletter. To receive it in your inbox every Wednesday, sign up here.

How to survive in the new age of conspiracies

Everything is a conspiracy theory now. Our latest series “The New Conspiracy Age” delves into how conspiracies have gripped the White House, turning fringe ideas into dangerous policy, and how generative AI is altering the fabric of truth.

If you’re interested in hearing more about how to survive in this strange new age, join our features editor Amanda Silverman and executive editor Niall Firth today at 1pm ET for an subscriber-exclusive Roundtable conversation. They’ll be joined by conspiracy expert Mike Rothschild, who’s written a fascinating piece for us about what it’s like to find yourself at the heart of a conspiracy theory. Register now to join us!

The must-reads

I’ve combed the internet to find you today’s most fun/important/scary/fascinating stories about technology.

1 Donald Trump is poised to ban AI state laws

The US President is considering signing an order to give the federal government unilateral power over regulating AI. (The Verge)

+ It would give the Justice Department power to sue dissenting states. (WP $)

+ Critics claim the draft undermines trust in the US’s ability to make AI safe. (Wired $)

+ It’s not just America—the EU fumbled its attempts to rein in AI, too. (FT $)

2 The CDC is making false claims about a link between vaccines and autism

Despite previously spending decades fighting misinformation connecting them. (WP $)

+ The National Institutes of Health is parroting RFK Jr’s messaging, too. (The Atlantic $)

3 China is going all-in on autonomous vehicles

Which is bad news for its millions of delivery drivers. (FT $)

+ It’s also throwing its full weight behind its native EV industry. (Rest of World)

5 Major music labels have inked a deal with an AI streaming service

Klay users will be able to remodel songs from the likes of Universal using AI. (Bloomberg $)

+ What happens next is anyone’s guess. (Billboard $)

+ AI is coming for music, too. (MIT Technology Review)

5 How quantum sensors could overhaul GPS navigation

Current GPS is vulnerable to spoofing and jamming. But what comes next? (WSJ $)

+ Inside the race to find GPS alternatives. (MIT Technology Review)

6 There’s a divide inside the community of people in relationships with chatbots

Some users assert their love interests are real—to the concern of others. (NY Mag $)

+ It’s surprisingly easy to stumble into a relationship with an AI chatbot. (MIT Technology Review)

7 There’s still hope for a functional cure to HIV

Even in the face of crippling funding cuts. (Knowable Magazine)

+ Breakthrough drug lenacapavir is being rolled out in parts of Africa. (NPR)

+ This annual shot might protect against HIV infections. (MIT Technology Review)

8 Is it possible to reverse years of AI brainrot?

A new wave of memes is fighting the good fight. (Wired $)

+ How to fix the internet. (MIT Technology Review)

9 Tourists fell for an AI-generated Christmas market outside Buckingham Palace

If it looks too good to be true, it probably is. (The Guardian)

+ It’s unclear who is behind the pictures, which spread on Instagram. (BBC)

10 Here’s what people return to Amazon

A whole lot of polyester clothing, by the sounds of it. (NYT $)

Quote of the day

“I think we’re in an LLM bubble, and I think the LLM bubble might be bursting next year.”

—Hugging Face co-founder and CEO Clem Delangue has a slightly different take on the reports we’re in an AI bubble, TechCrunch reports.

One more thing

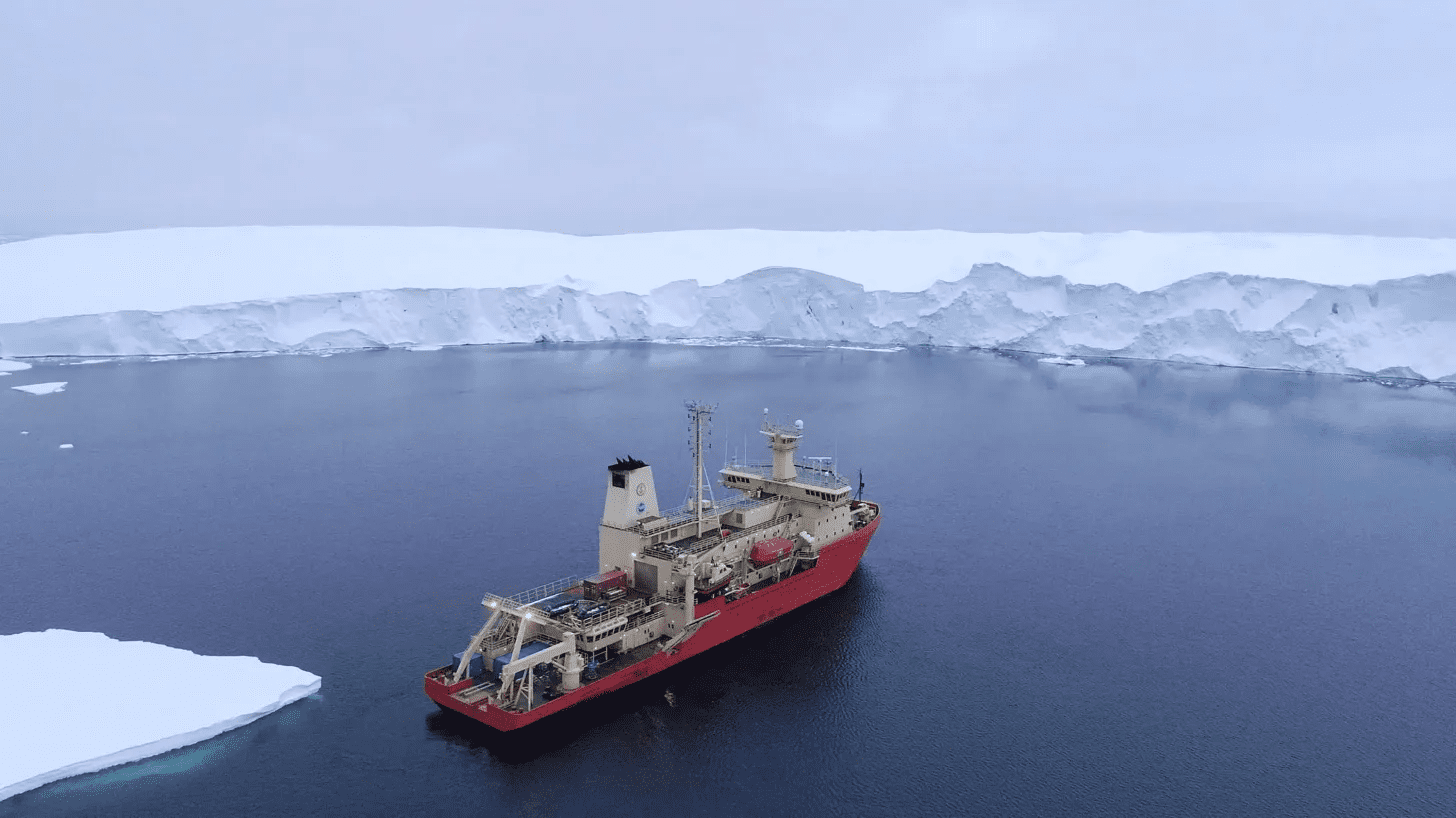

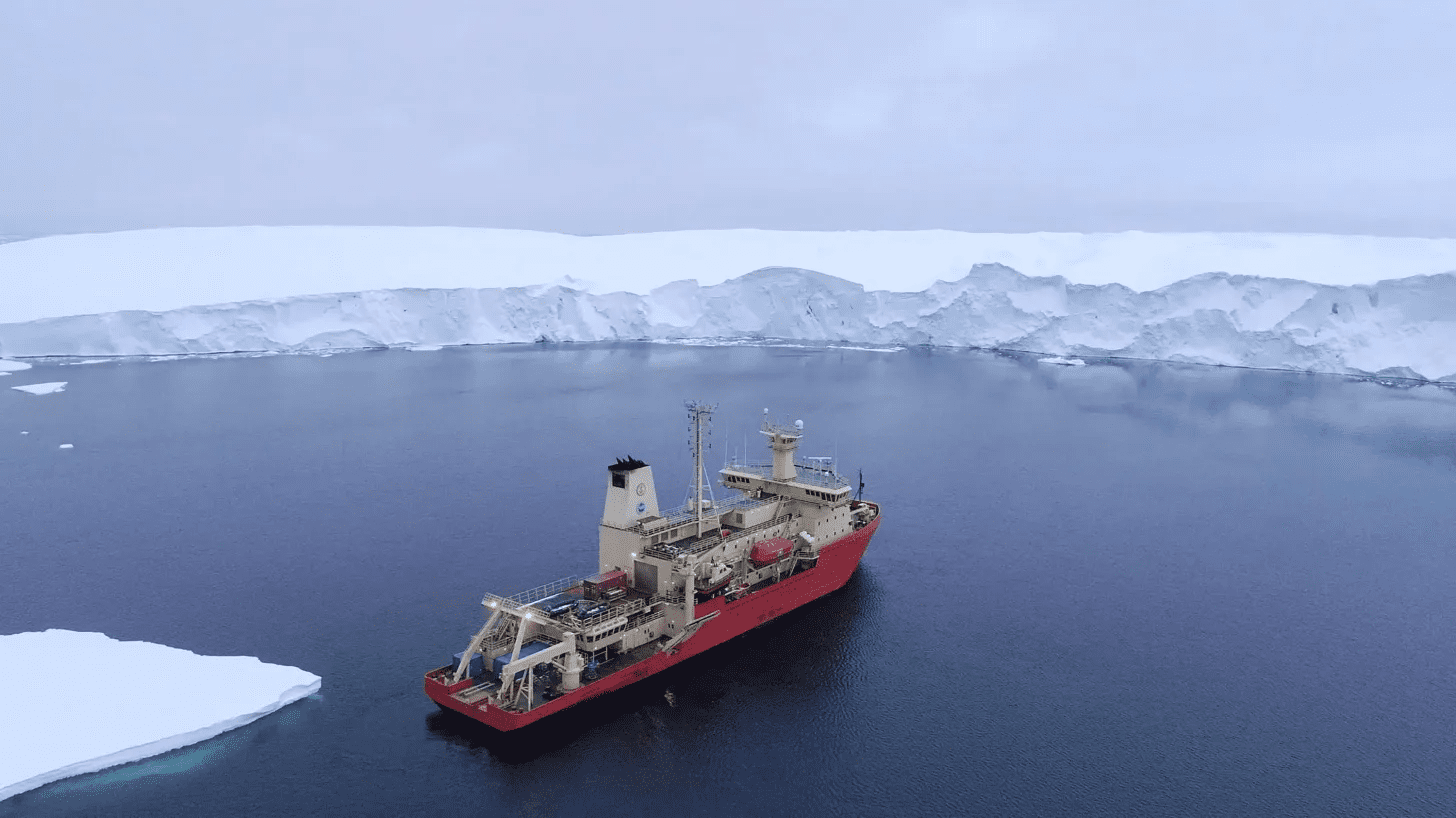

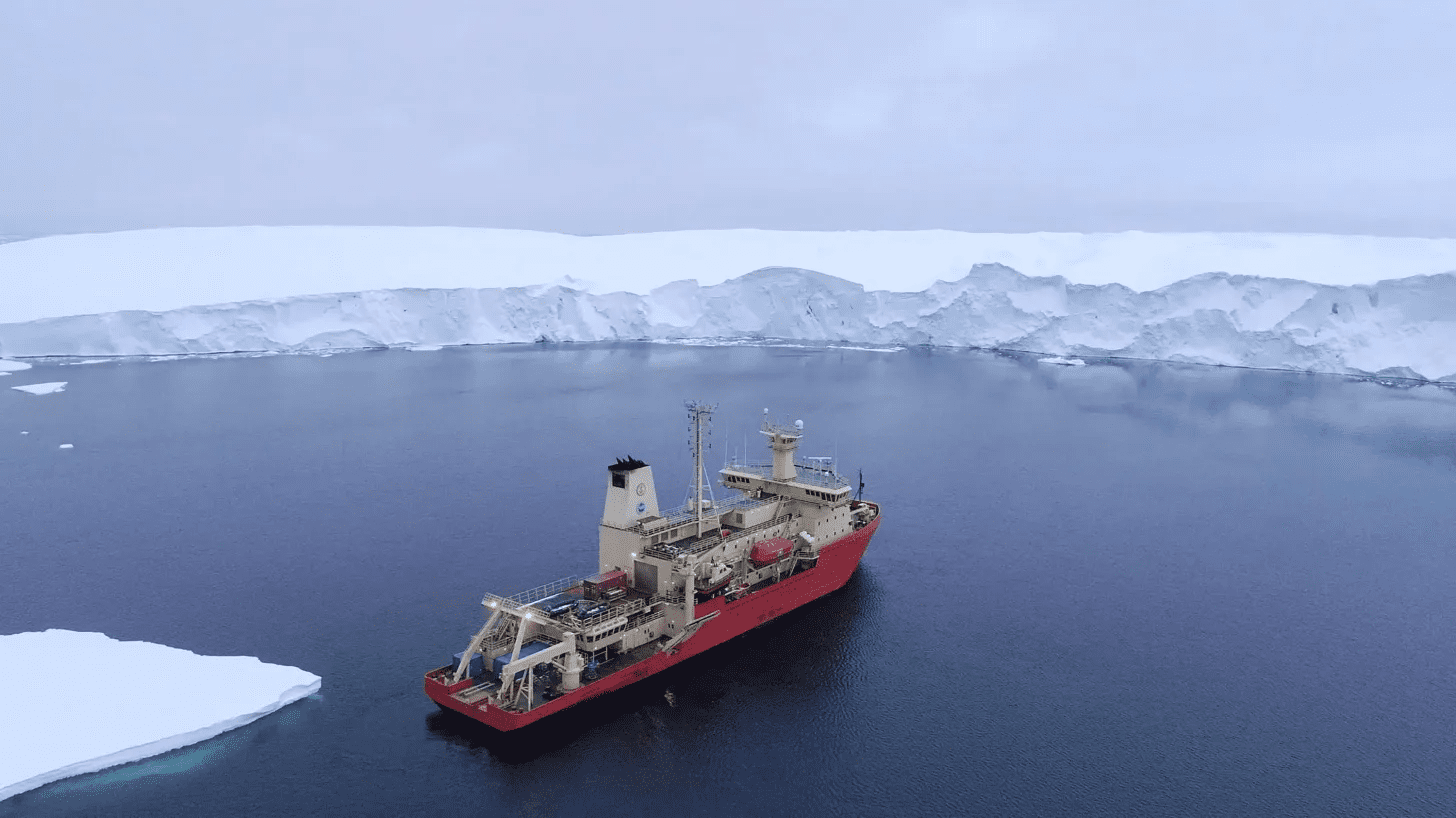

Inside a new quest to save the “doomsday glacier”

The Thwaites glacier is a fortress larger than Florida, a wall of ice that reaches nearly 4,000 feet above the bedrock of West Antarctica, guarding the low-lying ice sheet behind it.

But a strong, warm ocean current is weakening its foundations and accelerating its slide into the sea. Scientists fear the waters could topple the walls in the coming decades, kick-starting a runaway process that would crack up the West Antarctic Ice Sheet, marking the start of a global climate disaster. As a result, they are eager to understand just how likely such a collapse is, when it could happen, and if we have the power to stop it. Read the full story.

—James Temple

We can still have nice things

A place for comfort, fun and distraction to brighten up your day. (Got any ideas? Drop me a line or skeet ’em at me.)

+ As Christmas approaches, micro-gifting might be a fun new tradition to try out.

+ I’ve said it before and I’ll say it again—movies are too long these days.

+ If you’re feeling a bit existential this morning, these books are a great starting point for finding a sense of purpose.

+ This is a fun list of the internet’s weird and wonderful obsessive lists.