Big Tech’s big bet on a controversial carbon removal tactic

Over the last century, much of the US pulp and paper industry crowded into the southeastern corner of the nation, setting up mills amid sprawling timber forests to strip the fibers from juvenile loblolly, longleaf, and slash pine trees.

Today, after the factories chip the softwood and digest it into pulp, the leftover lignin, spent chemicals, and remaining organic matter form a dark, syrupy by-product known as black liquor. It’s then concentrated into a biofuel and burned, which heats the towering boilers that power the facility—and releases carbon dioxide into the air.

Microsoft, JPMorgan Chase, and a tech company consortium that includes Alphabet, Meta, Shopify, and Stripe have all recently struck multimillion-dollar deals to pay paper mill owners to capture at least hundreds of thousands of tons of this greenhouse gas by installing carbon scrubbing equipment in their facilities.

The captured carbon dioxide will then be piped down into saline aquifers more than a mile underground, where it should be sequestered permanently.

Big Tech is suddenly betting big on this form of carbon removal, known as bioenergy with carbon capture and storage, or BECCS. The sector also includes biomass-fueled power plants, waste incinerators, and biofuel refineries that add carbon capturing equipment to their facilities.

Since trees and other plants absorb carbon dioxide through photosynthesis and these factories will trap emissions that would have gone into the air, together they can theoretically remove more greenhouse gas from the atmosphere than was released, achieving what’s known as “negative emissions.”

The companies that pay for this removal can apply that reduction in carbon dioxide to cancel out a share of their own corporate pollution. BECCS now accounts for nearly 70% of the announced contracts in carbon removal, a popularity due largely to the fact that it can be tacked onto industrial facilities already operating on large scales.

“If we’re balancing cost, time to market, and ultimate scale potential, BECCS offers a really attractive value proposition across all three of those,” says Brian Marrs, senior director of energy and carbon removal at Microsoft, which has become by far the largest buyer of carbon removal credits as it races to balance out its ongoing emissions by the end of the decade.

But experts have raised a number of concerns about various approaches to BECCS, stressing they may inflate the climate benefits of the projects, conflate prevented emissions with carbon removal, and extend the life of facilities that pollute in other ways. BECCS could also create greater financial incentives to log forests or convert them to agricultural land.

When greenhouse-gas sources and sinks are properly tallied across all the fields, forests, and factories involved, it’s highly difficult to achieve negative emissions with many approaches to BECCS, says Tim Searchinger, a senior research scholar at Princeton University. That undermines the logic of dedicating more of the world’s limited land, crops, and woods to such projects, he argues.

“I call it a ‘BECCS and switch,’” he says, adding later: “It’s folly at some level.”

The logic of BECCS

For a biomass-fueled power plant, BECCS works like this:

A tree captures carbon dioxide from the atmosphere as it grows, sequestering the carbon in its bark, trunk, branches, and roots while releasing the oxygen. Someone then cuts it down, converts it into wood pellets, and delivers it to a power plant that, in turn, burns the wood to produce heat or electricity.

Usually, that facility will produce carbon dioxide as the wood incinerates. But under both European Union and US rules, the burning of the wood is generally treated as carbon neutral, so long as the timber forests are managed in sustainable ways and the various operations abide by other regulations. The argument is that the tree pulled CO2 out of the air in the first place, and new plant growth will bring that emissions debt back into balance over time.

If that same power plant now captures a significant share of the greenhouse gas produced in the process and pumps it underground, the process can potentially go from carbon neutral to carbon negative.

But the starting assumption that biomass is carbon neutral is fundamentally flawed, because it doesn’t fully take into account other ways that emissions are released throughout the process, according to Searchinger.

Among other things, a proper analysis must also ask: How much carbon is left behind in roots or branches on the forest floor that will begin to decompose and release greenhouse gases after the plant is removed? How much fossil fuel was burned in the process of cutting, collecting, and distributing the biomass? How much greenhouse gas was produced while converting timber into wood pellets and shipping them elsewhere? And how long will it take to grow back the trees or plants that would have otherwise continued capturing and storing carbon?

“If you’re harvesting wood, it’s essentially impossible to get negative emissions,” Searchinger says.
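The accounting questions above can be made concrete with a minimal sketch. It is not Searchinger's model or any registry's method: the class, its field names, and every number are hypothetical, chosen only to show how the result can flip sign once the terms omitted by the carbon-neutral assumption are counted.

```python
from dataclasses import dataclass


@dataclass
class BeccsLedger:
    """Annual CO2 flows in tons. Every field and figure here is hypothetical."""
    combustion: float       # CO2 produced when the biomass is burned
    capture_rate: float     # fraction of combustion CO2 captured and stored
    regrowth_uptake: float  # CO2 reabsorbed by regrowth inside the accounting window
    residue_decay: float    # CO2 from roots and branches left to decompose
    supply_chain: float     # fossil CO2 from cutting, pelletizing, and shipping
    foregone_uptake: float  # CO2 the harvested trees would otherwise have absorbed

    def net_emissions(self) -> float:
        """Positive means net CO2 added to the air; negative means net removal."""
        uncaptured = self.combustion * (1 - self.capture_rate)
        released = (uncaptured + self.residue_decay
                    + self.supply_chain + self.foregone_uptake)
        return released - self.regrowth_uptake


# Carbon-neutral assumption: regrowth fully offsets combustion, nothing else counts.
neutral_case = BeccsLedger(1000, 0.9, 1000, 0, 0, 0)

# Fuller tally, with made-up values for the terms the assumption leaves out.
fuller_case = BeccsLedger(1000, 0.9, 400, 150, 120, 200)

print(round(neutral_case.net_emissions()))  # -900
print(round(fuller_case.net_emissions()))   # 170
```

With identical capture equipment, the first case looks like 900 tons of removal while the second is a net source of 170 tons. The outcome depends entirely on the illustrative inputs, which is the point critics make about how sensitive these claims are to what gets counted.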

Burning biomass, or the biofuels created from it, can also produce other forms of pollution that can harm human health, including particulate matter, volatile organic compounds, sulfur dioxide, and carbon monoxide.

Preventing carbon dioxide emissions at a given factory may necessitate capturing certain other pollutants as well, notably sulfur dioxide. But it doesn’t necessarily filter out all the other pollution floating out of the flue stack, notes Emily Grubert, an associate professor of sustainable energy policy at the University of Notre Dame who focuses on carbon management issues and the transition away from fossil fuels.

Driving demand

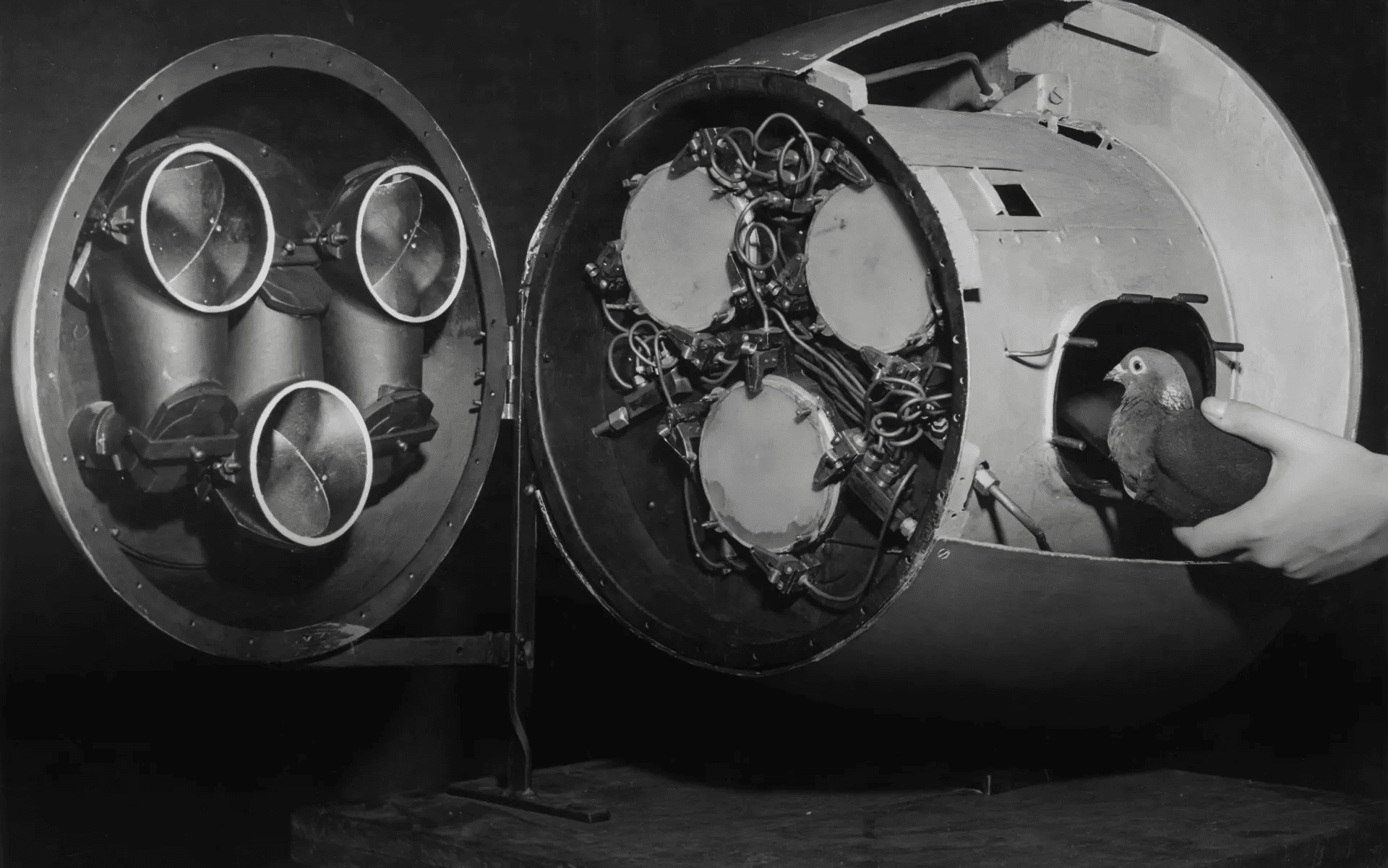

The idea that we might be able to use biomass to generate energy and suck down carbon dates back decades. But as global temperatures and emissions both continued to rise, climate modelers found that more and more BECCS or other types of carbon removal would be needed to prevent the planet from tipping past increasingly dangerous warming thresholds.

In addition to dramatic cuts in emissions, the world may need to suck down 11 billion tons of carbon dioxide per year by 2050 and 20 billion by 2100 to limit warming to 2 °C over preindustrial levels, according to a 2022 UN climate panel report. That’s a threshold we’re increasingly likely to blow past.

These grave climate warnings sparked growing interest and investments in ways to draw carbon dioxide out of the atmosphere. Companies sprang up offering to sink seaweed, bury biomass, develop carbon-sucking direct air capture factories, and add alkaline substances to agricultural fields or the oceans.

But BECCS purchases have dwarfed those other approaches.

For companies with fast-approaching climate deadlines, BECCS is one of the few options for removing hundreds of thousands of tons over the next few years, says Robert Höglund, who cofounded CDR.fyi, a public-benefit corporation that analyzes the carbon removal sector.

“If you have a target you want to meet in 2030 and you want durable carbon removal, that’s the thing you can buy,” he says.

That’s chiefly because these projects can harness the infrastructure of existing industries. At least for now, you don’t have to finance, permit, and develop new facilities.

“They’re not that hard to build, because it’s often a retrofitting of an existing plant,” Höglund says.

BECCS is also substantially less expensive for buyers than, say, direct air capture, with weighted average prices of $210 a ton compared with $490 among the deals to date, according to CDR.fyi. That’s in part because capturing the carbon dioxide from, say, a pulp and paper mill, where it makes up around 15% of flue gas, takes far less energy than plucking CO2 molecules out of the open air, where they account for just 0.04%.
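As a quick check on the scale of those gaps, the figures quoted above can be turned into ratios. This is only arithmetic on the article's numbers; the variable names are illustrative.

```python
# Figures quoted above (CDR.fyi weighted average prices; approximate CO2 shares).
BECCS_PRICE_PER_TON = 210   # USD
DAC_PRICE_PER_TON = 490     # USD
FLUE_GAS_CO2_PERCENT = 15   # pulp and paper mill flue gas
AIR_CO2_PERCENT = 0.04      # open air

price_ratio = DAC_PRICE_PER_TON / BECCS_PRICE_PER_TON
concentration_ratio = FLUE_GAS_CO2_PERCENT / AIR_CO2_PERCENT

print(f"Direct air capture costs about {price_ratio:.1f}x as much per ton")
print(f"Flue gas is about {concentration_ratio:.0f}x richer in CO2 than air")
```

The concentration ratio is only a rough indicator of why flue-gas capture is easier. It is not a measure of the energy saved.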

Microsoft’s big BECCS bet

In 2020, Microsoft announced plans to become carbon negative by the end of this decade and, by midcentury, to remove all the emissions the company generated directly and from electricity use throughout its corporate history.

It’s leaning particularly heavily on BECCS to meet those climate commitments, with the category accounting for 76% of its known carbon removal purchases to date.

In April, the company announced it would purchase 3.7 million tons of carbon dioxide that a paper and pulp mill, located at some unspecified site in the southern US, will eventually capture and store over a 12-year period. It reached the deal through CO280, a startup based in Vancouver, British Columbia, that is forming joint ventures with paper and pulp mill companies in the US and Canada to finance, develop, and operate the projects.

It was the biggest carbon removal purchase on record—until four days later, when Microsoft revealed it had agreed to buy 6.75 million tons of carbon removal from AtmosClear, CDR.fyi noted. That company is building a biomass power plant at the Port of Greater Baton Rouge in Louisiana, which will run largely on sugarcane bagasse (a by-product of sugar production) and forest trimmings. AtmosClear says the facility will be able to capture 680,000 tons of carbon dioxide per year.
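To put the two deals on a comparable footing, the totals above can be converted to rough annual figures. The article gives no contract term for the AtmosClear deal, so the second calculation assumes, purely for illustration, that the facility's entire stated capture capacity goes toward Microsoft's purchase.

```python
CO280_DEAL_TONS = 3_700_000
CO280_DEAL_YEARS = 12
ATMOSCLEAR_DEAL_TONS = 6_750_000
ATMOSCLEAR_CAPTURE_PER_YEAR = 680_000  # AtmosClear's stated capture capacity

# Average annual volume implied by the CO280 deal.
co280_tons_per_year = CO280_DEAL_TONS / CO280_DEAL_YEARS

# Years needed to deliver the AtmosClear deal at full stated capacity
# (an assumption; the actual delivery schedule is not given).
atmosclear_years = ATMOSCLEAR_DEAL_TONS / ATMOSCLEAR_CAPTURE_PER_YEAR

print(f"CO280 deal: about {co280_tons_per_year:,.0f} tons per year")
print(f"AtmosClear deal: about {atmosclear_years:.1f} years at full capacity")
```

On those assumptions, the CO280 deal averages a little over 300,000 tons a year, and the AtmosClear purchase would take roughly a decade of the plant's full capture output.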

“What we’ve seen is a lot of these BECCS projects have been very helpful, if not transformational, for providing investment in rural economies,” Marrs says. “We look at our BECCS deals, in Louisiana with AtmosClear and some other Gulf State providers, like CO280, as a real means of helping support these economies, while at the same time promoting sustainable forestry practices.”

In earlier quarters, Microsoft also made substantial purchases from Orsted, which operates power plants that burn wood pellets; Gaia, which runs facilities that convert municipal waste into energy; and Arbor, whose plants are fueled by “overgrown brush, crop residues, and food waste.”

Don’t let waste go to waste

Notably, at least three of these projects rely on some form of waste, a category distinct from fresh-cut timber or crops grown for the purpose of fueling BECCS projects. Solid waste, agricultural residues, logging leftovers, and plant material removed from forests to prevent fires present some of the ripest opportunities for BECCS—as well as some difficult questions of carbon accounting.

A 2019 report from the National Academy of Sciences estimated that the US could achieve more than 500 million tons of carbon removal a year through BECCS by 2040, while the world could exceed 3.5 billion tons, by relying just on agricultural by-products, logging residues, and organic waste—without needing to grow crops dedicated to energy.

Roger Aines, chief scientist of the energy program at Lawrence Livermore National Laboratory, argues we should at least be putting these sources to use rather than burning them or leaving them to decompose in fields. (Aines coauthored a similar analysis focused on California’s waste biomass and contributed to a 2022 lab report prepared for Microsoft to evaluate costs and options for carbon removal purchases.)

He stresses that the BECCS sector can learn a lot from using that waste material. For example, it should help to provide a sharper sense of whether the carbon math will work if more land, forests, and crops are dedicated to these sorts of purposes.

“The point is you won’t grow new material to do this in most cases, and won’t have to for a very long time, because there’s so much waste available,” Aines says. “If we get to that point, long into the future, we can address that then.”

Wonky accounting

But the critical question that emerges with waste is: Would it otherwise have been burned or allowed to decompose, or might some of it have been used in some other way that kept the carbon out of the atmosphere?

Sugarcane bagasse, for instance, is or could also be used to produce recyclable packaging and paper, biodegradable food packaging and cutlery, building materials, or soil amendments that add nutrients back to agricultural fields.

“A lot of the time those materials are being used for something else already, so the accounting gets wonky really quickly,” Grubert says.

Some fear that the financial incentives to pursue BECCS could also compel companies to trim away more trees and plants than is truly necessary to, say, manage forests or prevent fires—particularly as more and more BECCS plants create greater and greater demand for the limited supplies of such materials.

“Once you start capturing waste, you create an incentive to produce waste, so you have to be very careful about the perverse incentives,” says Danny Cullenward, a researcher and senior fellow at the Kleinman Center for Energy Policy at the University of Pennsylvania who studies carbon markets.

Due diligence

Like other big tech companies, Microsoft has lost some momentum when it comes to its climate goals, in large part because of the surging energy demands of its AI data centers.

But the company has generally earned a reputation for striving to clean up its direct emissions where possible and for seeking out high-quality approaches to carbon removal. It has consulted extensively with critically minded researchers at advisory firms like Carbon Direct and demonstrated a willingness to pay higher prices to support more credible projects.

Marrs says the company has extended that scrutiny to its BECCS deals.

“We want as much positive environmental impact as possible from every project,” he says.

“We’re doing months and months of technical due diligence with teams that visit the site, that interview stakeholders, that produce a report for us that we go through in depth with a third-party engineering provider or technical perspective provider,” he adds.

In a follow-up statement, Microsoft stressed that it strives to validate that every BECCS project it supports will achieve negative emissions, whatever the fuel source.

“Across all of these projects, we conducted substantial due diligence to ensure that BECCS feedstocks would otherwise return carbon to the atmosphere in a few years,” the company said.

Likewise, Jonathan Rhone, the cofounder and chief executive of CO280, stresses that they’ve worked with consultants, carbon market registries, and pulp and paper mills “to make sure we’re adopting the best standards.” He says they strive to conservatively assess the release and uptake of greenhouse gases across the supply chain of the mills they work with, taking into account the type of biomass used by a given plant, the growth rate of the forests it’s harvested from, the distance trucks drive to ship the timber or sawmill residues, the total emissions of the facility, and more.

Rhone says the company’s typical projects will capture and store away on the order of 850,000 to 900,000 tons of carbon dioxide per year. How much that would make up of the plant’s total emissions would vary, based in part on how much of the facility’s energy comes from by-product biomatter and how much comes from fossil fuels. For its first projects, the company will aim to capture 50% to 65% of the CO2 emissions at the pulp and paper mills, but it eventually hopes to exceed 90%.

In a follow-up email, Rhone said the carbon capture equipment at the mills it works with will also prevent “substantial levels” of particulate matter and sulfur dioxide emissions and might reduce emissions of other pollutants as well.

The company is in active discussions with 10 pulp and paper mills on the Gulf Coast and in Canada. Each carbon capture and storage project could cost hundreds of millions of dollars.

“What we’re trying to do at CO280 is show and demonstrate that we can create a stable, repeatable playbook for developing projects that are low risk and provide the market with what it wants, with what it needs,” Rhone says.

Proponents of BECCS say we could leverage biomass to deliver substantial volumes of carbon removal, so long as appropriate industry standards are put in place to prevent, or at least minimize, bad behavior.

The question is whether that will be the case—or whether, as the BECCS sector matures, it will veer closer to the pattern of carbon offset markets.

Studies and investigations have consistently shown that loosely regulated or poorly designed carbon credit and offset programs have allowed, if not invited, companies to significantly exaggerate the climate benefits of tree planting, forest preservation, and similar projects.

“It appears to me to be something that will be manageable but that we’ll always have to keep an eye on,” Aines says.

Magic

Even with all these carbon accounting complexities, BECCS projects can often deliver climate benefits, particularly for existing plants.

Adding carbon capture to an operating paper and pulp mill, power plant, or refinery is at least an improvement over the status quo from a climate perspective, insofar as it prevents emissions that would otherwise have continued.

But ambitions for BECCS are already growing beyond existing plants: Last year Drax, the controversial UK power giant, announced plans to launch a Houston-based division tasked with developing enough new BECCS projects to deliver 6 million tons of carbon removal per year, in the US or elsewhere.

Numerous other companies have also built or proposed biomass power plants in recent years, with or without carbon capture systems—decisions driven in part by policies that classify them as carbon neutral.

But if biomass isn’t carbon neutral, as Searchinger and others argue it can’t be in many applications, then these new unfiltered power plants are just adding more emissions to the atmosphere—and BECCS projects aren’t drawing any out of the air. And if that’s the case, it raises tough questions about corporate climate claims that depend on its doing so and the societal trade-offs involved in building lots of new plants dedicated to these purposes.

That’s because crops grown for energy require land, fertilizer, insecticides, and human labor that might otherwise go toward producing food for an expanding global population. And greater demand for wood invites the timber industry to chop down more and more of the world’s forests, which are already sucking up and storing away vast amounts of carbon dioxide and providing homes for immense varieties of plants and animals.

If these projects are merely preventing greenhouse gas from floating into the atmosphere but not drawing any down, we’re better off adding carbon capture and storage (CCS) equipment to an existing natural-gas plant instead, Searchinger argues.

Companies may think that harnessing nature to draw carbon dioxide out of the sky sounds better than cutting the emissions of a fossil-fuel turbine. But the electricity from the latter plant would cost dramatically less, the carbon capture system would reduce emissions more for the same amount of energy generated, and it would avoid the added pressures to cut down trees, he says.

“People think some magic happens—this magic combination of using biomass and CCS creates something bigger than its parts,” Searchinger says. “But it’s not magic; it’s simply the sum of the two.”