AI tools can do a lot of SEO now. Draft content. Suggest keywords. Generate metadata. Flag potential issues. We’re well past the novelty stage.

But for all the speed and surface-level utility, there’s a hard truth underneath: AI still gets things wrong. And when it does, it does it convincingly.

It hallucinates stats. Misreads query intent. Asserts outdated best practices. Repeats myths you’ve spent years correcting. And if you’re in a regulated space (finance, healthcare, law), those errors aren’t just embarrassing. They’re dangerous.

The business stakes around accuracy aren’t theoretical; they’re measurable and growing fast. More than 200 false advertising class actions were filed each year from 2020 to 2022 in the food and beverage industry alone, compared with 53 in 2011. That’s roughly a 4x increase in one sector.

Across all industries, California district courts saw over 500 false advertising cases in 2024. Class actions and government enforcement lawsuits collected more than $50 billion in settlements in 2023. Recent industry analysis shows false advertising penalties in the United States have doubled in the last decade.

This isn’t just about embarrassing mistakes anymore. It’s about legal exposure that scales with your content volume. Every AI-generated product description, every automated blog post, every algorithmically created landing page is a potential liability if it contains unverifiable claims.

And here’s the kicker: The trend is accelerating. Legal experts report “hundreds of new suits every year from 2020 to 2023,” with industry data showing significant increases in false advertising litigation. Consumers are more aware of marketing tactics, regulators are cracking down harder, and social media amplifies complaints faster than ever.

The math is simple: As AI generates more content at scale, the surface area for false claims grows with it. Without verification systems, you’re not just automating content creation; you’re automating legal risk.

What marketers want is fire-and-forget content automation (write product descriptions for these 200 SKUs, for example) that can be trusted by people and machines. Write it once, push it live, move on. But that only works when you can trust the system not to lie, drift, or contradict itself.

And that level of trust doesn’t come from the content generator. It comes from the thing sitting beside it: the verifier.

Marketers want trustworthy tools: data that’s accurate and verifiable, and results that are repeatable. In the past, we had Google’s algorithm updates to manage and dance around. Now, as the recent GPT-5 rollout has shown, it’s model updates, which can affect everything from the answers people see to how the tools built on those models operate and perform.

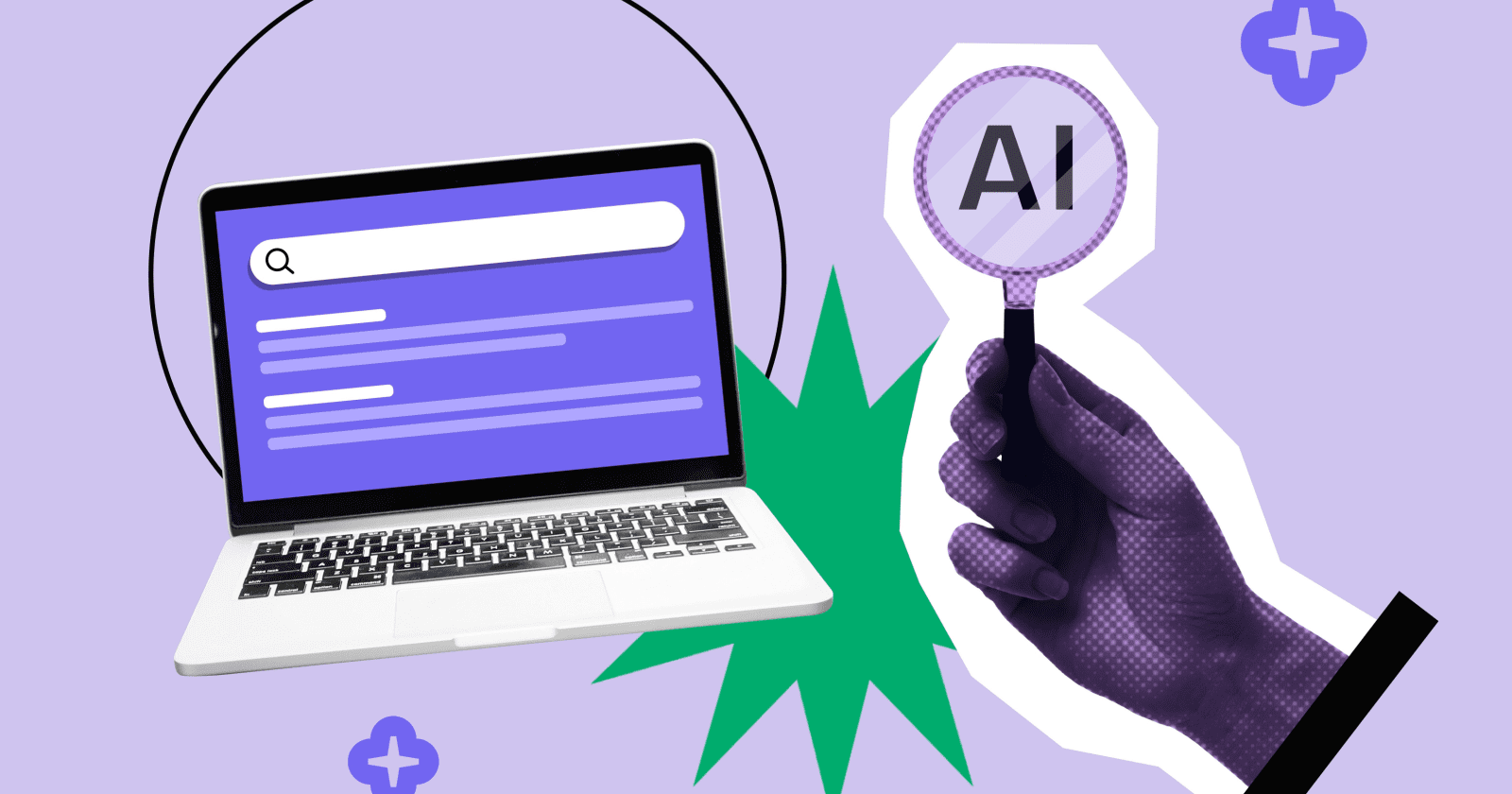

To build trust in these models, the companies behind them are building Universal Verifiers.

A universal verifier is an AI fact-checker that sits between the model and the user. It’s a system that checks AI output before it reaches you, or your audience. It’s trained separately from the model that generates content. Its job is to catch hallucinations, logic gaps, unverifiable claims, and ethical violations. It’s the machine version of a fact-checker with a good memory and a low tolerance for nonsense.

Technically speaking, a universal verifier is model-agnostic. It can evaluate outputs from any model, even if it wasn’t trained on the same data or doesn’t understand the prompt. It looks at what was said, what’s true, and whether those things match.

In the most advanced setups, a verifier wouldn’t just say yes or no. It would return a confidence score. Identify risky sentences. Suggest citations. Maybe even halt deployment if the risk was too high.

That’s the dream. But it’s not reality yet.

Industry reporting suggests OpenAI is integrating universal verifiers into GPT-5’s architecture, with recent leaks indicating this technology was instrumental in achieving gold medal performance at the International Mathematical Olympiad. OpenAI researcher Jerry Tworek has reportedly suggested this reinforcement learning system could form the basis for general artificial intelligence. OpenAI officially announced the IMO gold medal achievement, but public deployment of verifier-enhanced models is still months away, with no production API available today.

DeepMind has developed the Search-Augmented Factuality Evaluator (SAFE), which agreed with human fact-checkers 72% of the time; when the two disagreed, SAFE was correct 76% of the time. That’s promising for research – not good enough for medical content or financial disclosures.

Across the industry, prototype verifiers exist, but only in controlled environments. They’re being tested inside safety teams. They haven’t been exposed to real-world noise, edge cases, or scale.

If you’re thinking about how this affects your work, you’re early. That’s a good place to be.

This is where it gets tricky. What level of confidence is enough?

In regulated sectors, that number is high. A verifier needs to be correct 95 to 99% of the time. Not just overall, but on every sentence, every claim, every generation.

In less regulated use cases, like content marketing, you might get away with 90%. But that depends on your brand risk, your legal exposure, and your tolerance for cleanup.
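A quick back-of-the-envelope calculation shows why the bar has to hold on every claim and not just on average. The sketch below assumes each claim is checked independently and with the same accuracy. Both are simplifications, not properties of any real verifier. The function name and the 20-claim page are illustrative.

```python
def p_all_claims_correct(per_claim_accuracy: float, n_claims: int) -> float:
    """Chance that every claim on a page is handled correctly.

    Assumption (not from any real verifier): errors on individual claims
    are independent and equally likely, so the probabilities multiply.
    """
    if not 0.0 <= per_claim_accuracy <= 1.0:
        raise ValueError("per_claim_accuracy must be between 0 and 1")
    if n_claims < 0:
        raise ValueError("n_claims must be non-negative")
    return per_claim_accuracy ** n_claims


if __name__ == "__main__":
    # A hypothetical page containing 20 checkable claims.
    for accuracy in (0.90, 0.95, 0.99):
        clean = p_all_claims_correct(accuracy, 20)
        print(f"{accuracy:.0%} per claim -> {clean:.1%} chance the page is fully clean")
    # 90% per claim -> 12.2% chance the page is fully clean
    # 95% per claim -> 35.8% chance the page is fully clean
    # 99% per claim -> 81.8% chance the page is fully clean
```

Under those assumptions, a 20-claim page checked at 95% per-claim accuracy comes out fully clean only about a third of the time. Small per-claim error rates compound quickly across a page, and faster still across a site.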

Here’s the problem: Current verifier models aren’t close to those thresholds. Even DeepMind’s SAFE system, which represents the state of the art in AI fact-checking, agrees with human evaluators only 72% of the time. That’s not trust. That’s a little better than a coin flip. (Technically, it’s 22 percentage points better than a coin flip, but you get the point.)

So today, trust still comes from one place: a human in the loop, because universal verifiers aren’t even close.

Here’s a disconnect no one’s really surfacing: Universal verifiers likely won’t live in your SEO tools. They don’t sit next to your content editor. They don’t plug into your CMS.

They live inside the LLM.

So even as OpenAI, DeepMind, and Anthropic develop these trust layers, that verification data doesn’t reach you, unless the model provider exposes it. Which means that today, even the best verifier in the world is functionally useless to your SEO workflow unless it shows its work.

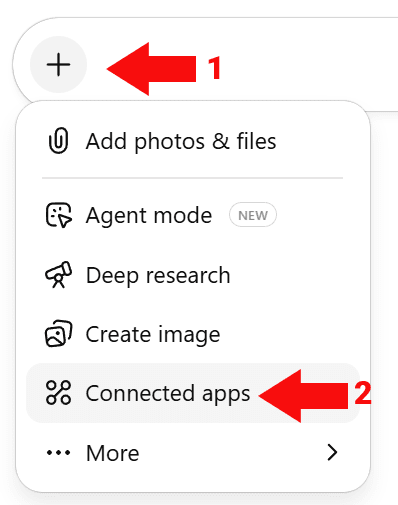

Here’s how that might change:

Verifier metadata becomes part of the LLM response. Imagine every completion you get includes a confidence score, flags for unverifiable claims, or a short critique summary. These wouldn’t be generated by the same model; they’d be layered on top by a verifier model.

SEO tools start capturing that verifier output. If your tool calls an API that supports verification, it could display trust scores or risk flags next to content blocks. You might start seeing green/yellow/red labels right in the UI. That’s your cue to publish, pause, or escalate to human review.

Workflow automation integrates verifier signals. You could auto-hold content that falls below a 90% trust score. Flag high-risk topics. Track which model, which prompt, and which content formats fail most often. Content automation becomes more than optimization. It becomes risk-managed automation.

Verifiers influence ranking-readiness. If search engines adopt similar verification layers inside their own LLMs (and why wouldn’t they?), your content won’t just be judged on crawlability or link profile. It’ll be judged on whether it was retrieved, synthesized, and safe enough to survive the verifier filter. If Google’s verifier, for example, flags a claim as low-confidence, that content may never enter retrieval.

Enterprise teams could build pipelines around it. The big question is whether model providers will expose verifier outputs via API at all. There’s no guarantee they will – and even if they do, there’s no timeline for when that might happen. If verifier data does become available, that’s when you could build dashboards, trust thresholds, and error tracking. But that’s a big “if.”
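If verifier output ever does reach your tools, the routing logic described above could be quite small. The sketch below is hypothetical throughout: no model provider exposes a response shaped like `VerifierResult` today, and the field names, the `route` function, and the 70% escalation cutoff are all assumptions made for illustration. Only the 90% hold threshold comes from the scenario above.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    PUBLISH = "green"    # safe to go live
    PAUSE = "yellow"     # hold for a closer look
    ESCALATE = "red"     # send to human review


@dataclass
class VerifierResult:
    """Hypothetical verifier metadata attached to one content block."""
    confidence: float                      # trust score from 0.0 to 1.0
    flagged_claims: list[str] = field(default_factory=list)


def route(result: VerifierResult,
          hold_below: float = 0.90,
          escalate_below: float = 0.70) -> Action:
    """Map a verifier result to a publish / pause / escalate decision."""
    if escalate_below > hold_below:
        raise ValueError("escalate_below must not exceed hold_below")
    if result.confidence < escalate_below:
        return Action.ESCALATE
    # Any unverifiable claim holds the content, even if the score is high.
    if result.confidence < hold_below or result.flagged_claims:
        return Action.PAUSE
    return Action.PUBLISH


if __name__ == "__main__":
    samples = [
        VerifierResult(0.96),
        VerifierResult(0.93, ["'Doubles conversion rates' has no source"]),
        VerifierResult(0.55),
    ]
    for sample in samples:
        print(sample.confidence, route(sample).value)
```

The thresholds are parameters on purpose: a regulated team might hold anything under 0.99, while a content marketing team might accept the defaults.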

So no, you can’t access a universal verifier in your SEO stack today. But your stack should be designed to integrate one as soon as it’s available.

Because when trust becomes part of ranking and content workflow design, the people who planned for it will win. And this gap in availability will shape who adopts first, and how fast.

The first wave of verifier integration won’t happen in ecommerce or blogging. It’ll happen in banking, insurance, healthcare, government, and legal.

These industries already have review workflows. They already track citations. They already pass content through legal, compliance, and risk before it goes live.

Verifier data is just another field in the checklist. Once a model can provide it, these teams will use it to tighten controls and speed up approvals. They’ll log verification scores. Adjust thresholds. Build content QA dashboards that look more like security ops than marketing tools.

That’s the future. It starts with the teams that are already being held accountable for what they publish.

You can’t install a verifier today. But you can build a practice that’s ready for one.

Start by designing your QA process like a verifier would:

- Fact-check by default. Don’t publish without source validation. Build verification into your workflow now so it becomes automatic when verifiers start flagging questionable claims.

- Track which parts of AI content fail reviews most often. That’s your training data for when verifiers arrive. Are statistics always wrong? Do product descriptions hallucinate features? Pattern recognition beats reactive fixes.

- Define internal trust thresholds. What’s “good enough” to publish? 85%? 95%? Document it now. When verifier confidence scores become available, you’ll need these benchmarks to set automated hold rules.

- Create logs. Who reviewed what, and why? That’s your audit trail. These records become invaluable when you need to prove due diligence to legal teams or adjust thresholds based on what actually breaks.

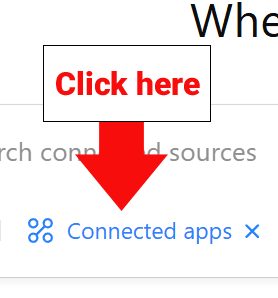

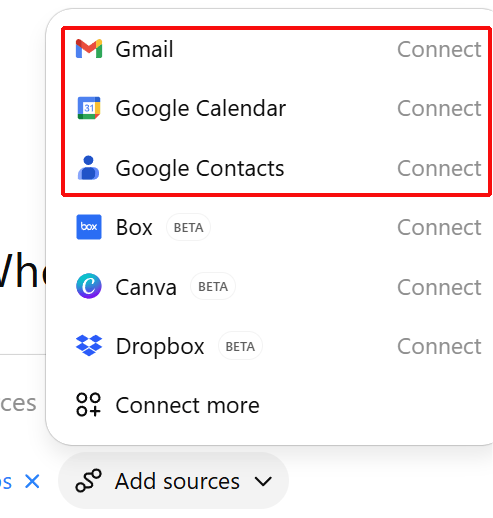

- Audit your tools. When you evaluate a new tool for your AI SEO work, ask the vendor how they are thinking about verifier data. If it becomes available, will their tools be ready to ingest and use it?

- Don’t expect verifier data in your tools anytime soon. While industry reporting suggests OpenAI is integrating universal verifiers into GPT-5, there’s no indication that verifier metadata will be exposed to users through APIs. The technology might be moving from research to production, but that doesn’t mean the verification data will be accessible to SEO teams.

This isn’t about being paranoid. It’s about being ahead of the curve when trust becomes a surfaced metric.
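The logging and failure-tracking habits in the checklist above can start as something very simple. Here is a minimal sketch of a review log that doubles as an audit trail and a failure-rate report. The record fields, claim categories, and function names are illustrative assumptions, not part of any existing tool.

```python
import json
from collections import Counter
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class ReviewRecord:
    """One human review decision: who reviewed what, and why."""
    content_id: str
    claim_type: str    # e.g. "statistic" or "product_feature" (hypothetical labels)
    passed: bool
    reviewer: str
    reason: str
    reviewed_at: str   # ISO 8601 timestamp, UTC


def make_record(content_id: str, claim_type: str, passed: bool,
                reviewer: str, reason: str) -> ReviewRecord:
    """Create a record stamped with the current UTC time."""
    now = datetime.now(timezone.utc).isoformat()
    return ReviewRecord(content_id, claim_type, passed, reviewer, reason, now)


def failure_rates(records: list[ReviewRecord]) -> dict[str, float]:
    """Share of reviewed claims that failed, per claim type."""
    totals = Counter(r.claim_type for r in records)
    failures = Counter(r.claim_type for r in records if not r.passed)
    return {claim_type: failures[claim_type] / totals[claim_type]
            for claim_type in totals}


def to_jsonl(records: list[ReviewRecord]) -> str:
    """Serialize records as JSON Lines, one review per line."""
    return "\n".join(json.dumps(asdict(r)) for r in records)


if __name__ == "__main__":
    log = [
        make_record("sku-101", "statistic", False, "alex", "No source for the figure"),
        make_record("sku-101", "product_feature", True, "alex", "Matches spec sheet"),
        make_record("sku-102", "statistic", True, "sam", "Source verified"),
    ]
    print(failure_rates(log))
    print(to_jsonl(log))
```

A plain log like this answers the questions in the checklist directly: which claim types fail most often, who signed off, and on what grounds.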

People hear “AI verifier” and assume it means the human reviewer goes away.

It doesn’t. What happens instead is that human reviewers move up the stack.

You’ll stop reviewing line-by-line. Instead, you’ll review the verifier’s flags, manage thresholds, and define acceptable risk. You become the one who decides what the verifier means.

That’s not less important. That’s more strategic.

The verifier layer is coming. The question isn’t whether you’ll use it. It’s whether you’ll be ready when it arrives. Start building that readiness now, because in SEO, being six months ahead of the curve is the difference between competitive advantage and playing catch-up.

Trust, as it turns out, scales differently than content. The teams who treat trust as a design input now will own the next phase of search.

This post was originally published on Duane Forrester Decodes.

Featured Image: Roman Samborskyi/Shutterstock