The SEO Industry Is Teaching The Wrong Skills via @sejournal, @DuaneForrester

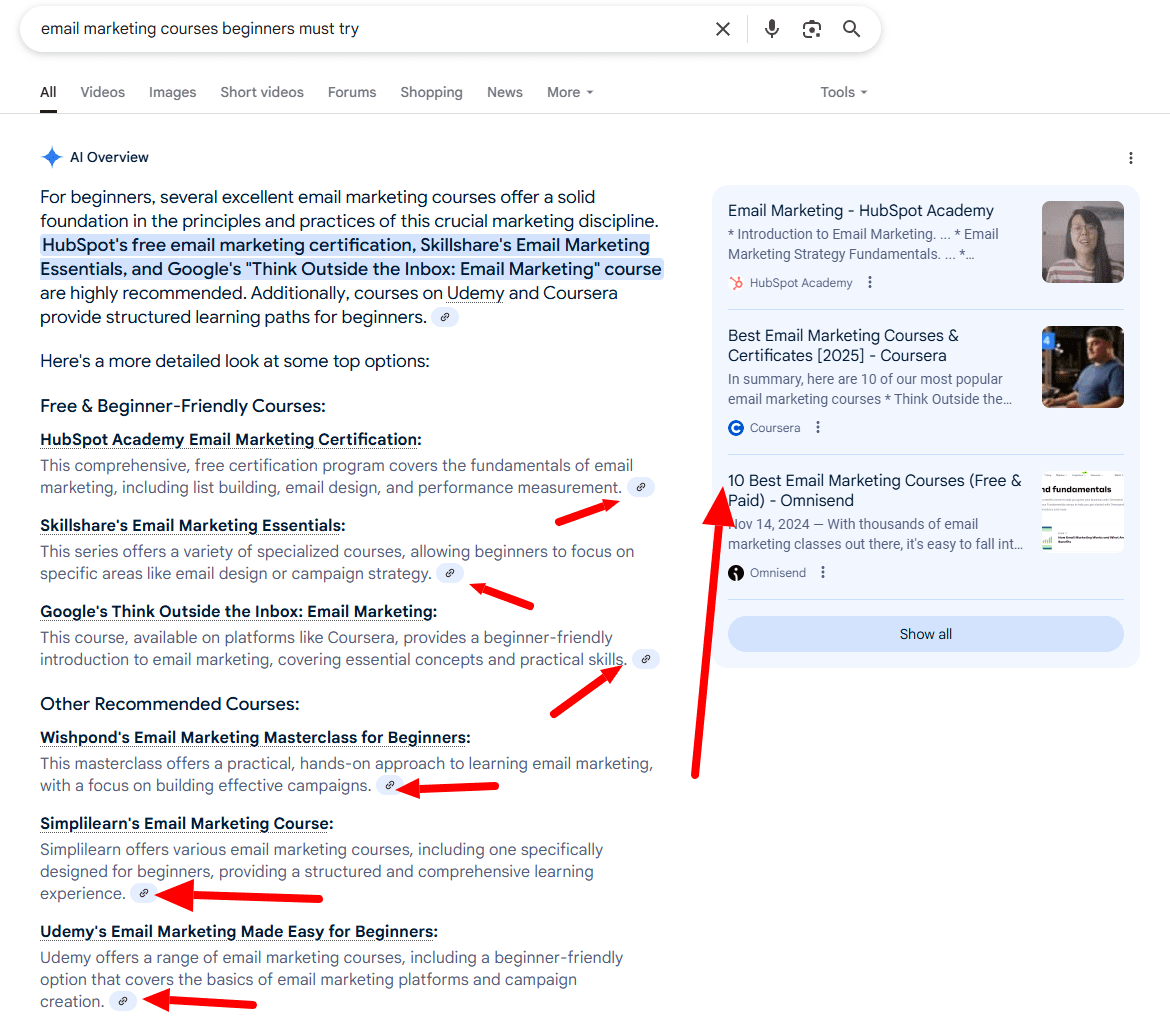

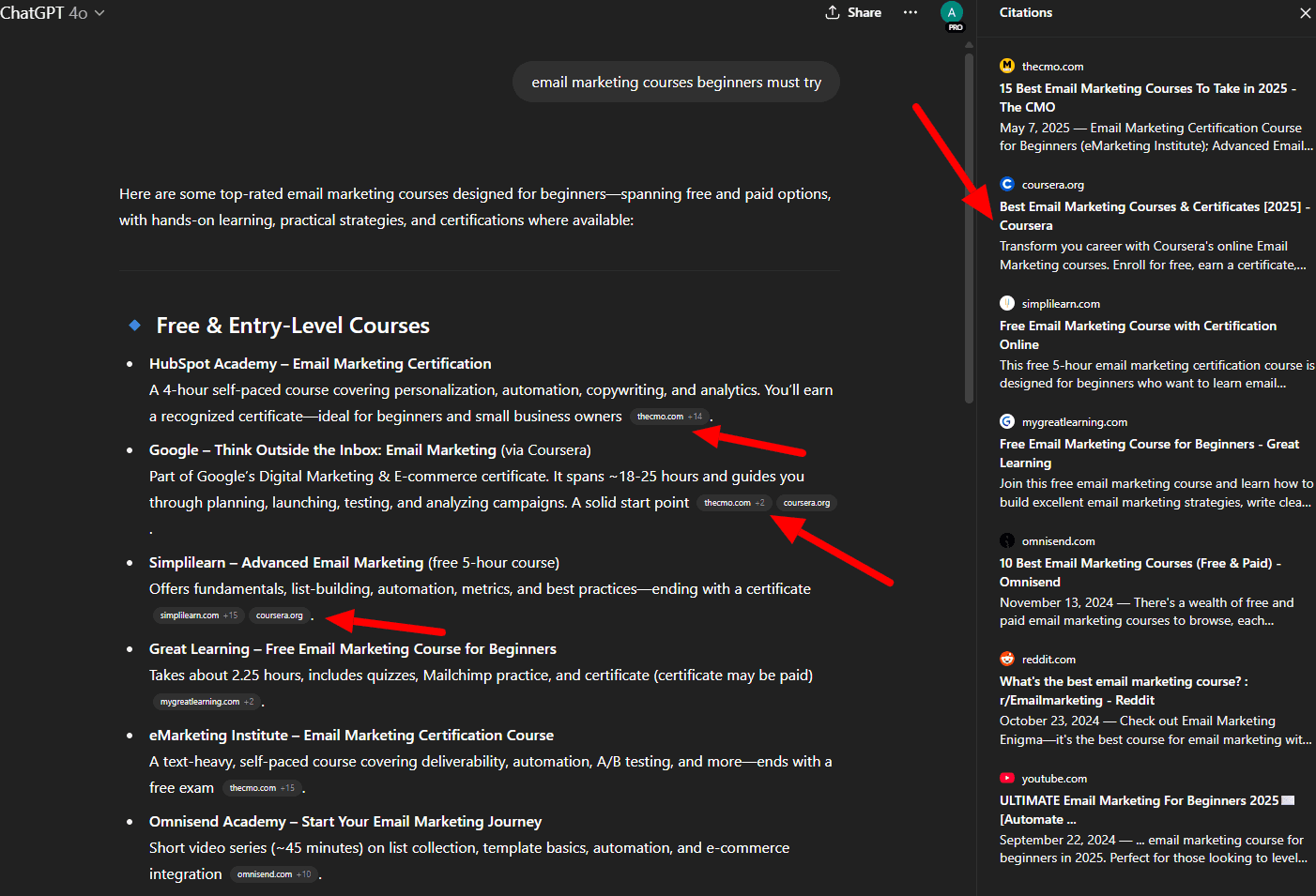

Generative AI-driven search isn’t a trend; it’s the new baseline. Tools like Gemini and ChatGPT have already replaced traditional queries for millions of users.

Your audience doesn’t just search anymore: They ask. They expect answers. And those answers are being assembled, ranked, and cited by AI systems that don’t care about title tags or keyword placement. They care about trust, structure, and retrievability.

Most SEO training programs haven’t caught up. They’re still built around tactics designed for a ranking algorithm, not a generative model. The gap isn’t closing; it’s widening.

And this isn’t speculation. Research from multiple firms now shows that conversational AI is becoming a dominant discovery interface.

Microsoft, Google, Meta, OpenAI, and Amazon are all restructuring their product ecosystems around AI-powered answers, not just ranked links.

The tipping point has already passed. If your training still revolves around keyword targeting and domain authority, you’re falling behind, not gradually but right now.

The uncomfortable reality is that many marketers are now trained in a playbook from the early 2010s, while the engines have moved on to an entirely different game.

At this point, are we even optimizing for “search engines” anymore – or have they become “discovery assistants” or “search assistants” built to curate, cite, and synthesize?

How SEO Fell Behind (Historical Context)

Traditional SEO has always adapted, from Google’s Panda and Penguin algorithms, which prioritized content quality and penalized low-quality links, to Hummingbird’s semantic understanding of user intent.

But today’s generative search landscape is an entirely new paradigm. Google Gemini, ChatGPT, and other conversational interfaces don’t simply rank pages; they synthesize answers from the most retrievable chunks of content available.

This is not a gradual shift. This is the biggest leap in SEO’s history, and most training programs haven’t caught up yet.

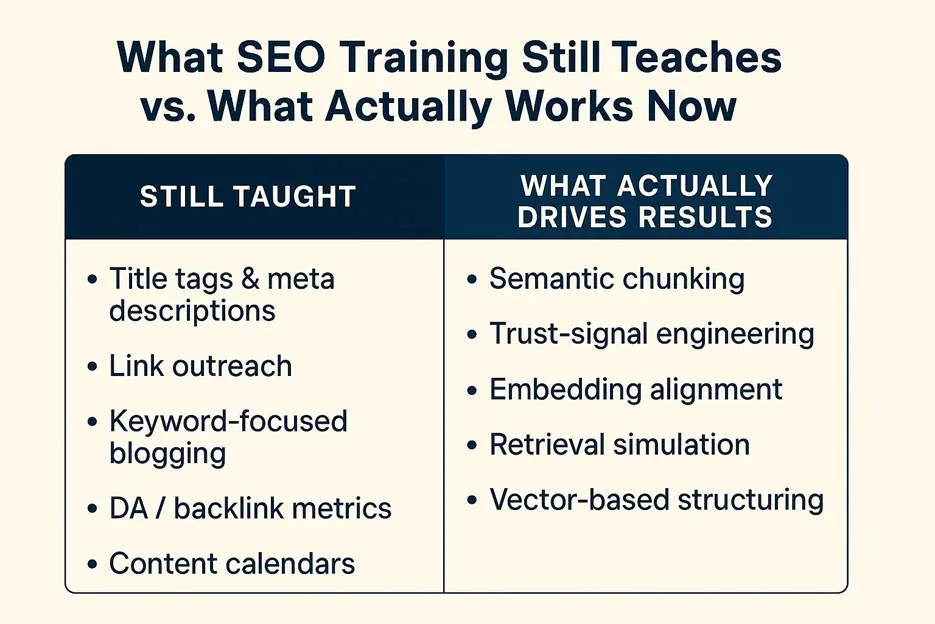

The Old Curriculum: What We’re Still Teaching (And Shouldn’t Be)

Traditional SEO curriculums typically emphasize:

- Title Tags & Meta Descriptions: Despite Google rewriting around 60-75% of these (source: Zyppy SEO study), these remain foundational to most SEO training programs.

- Link Outreach & Link Building: Still focused on quantity and domain authority, even though AI-driven search systems focus more on contextual relevance and content (and author) trustworthiness.

- Keyword-Focused Blogging & Content Calendars: Rigid editorial calendars and keyword-driven articles are becoming obsolete in an AI-driven search era.

- Technical SEO: While still useful for traditional search engines, modern AI-based systems care far less about the technical structure of a webpage, and more about the accessibility of the content, and how it displays entities and relationships.

Example:

Take a common assignment from SEO training programs: “Write a blog post targeting the keyword ‘best hiking boots for 2025’.”

You’re taught to select a primary keyword, structure your headers around related phrases, and write a long-form post designed to rank in traditional SERPs.

That approach might still work for Google’s blue links, but in a generative AI context, it fails.

Ask Gemini or ChatGPT the same query, and your content likely won’t appear. Not because it’s low quality, but because it wasn’t structured to be retrieved.

It lacks semantic chunking, embedding alignment, and explicit trust signals.

The AI systems are selecting content blocks they can understand, rank by relevance, and cite. If your article is built to match human scan patterns instead of machine retrieval cues, it’s simply invisible.

Image credit: Duane Forrester

The New SEO Work: What Actually Drives Results Now

Real SEO today revolves around structured, retrievable, semantically rich content:

1. Semantic Chunking

Creating content structured into clearly defined, self-contained chunks optimized for large language models (LLMs).

2. Vector Modeling & Embeddings

Placing content into semantic clusters inside vector databases, ensuring each piece of content is closely aligned with user intent and query vectors.

3. Trust-Signal Engineering

Implementing structured citations, schema markup, clear attribution, and credibility signals that AI-driven models trust enough to cite explicitly.

4. Retrieval Simulation & Prediction

Using tools such as RankBee, SERPRecon, and Waikay.io to actively simulate how your content surfaces within AI-driven answers.

5. RRF Tuning & Model Optimization

Fine-tuning content performance across generative models like Perplexity, Gemini, and ChatGPT, ensuring maximum retrievability in various conversational contexts.

6. Zero-Click Optimization

Optimizing content not just for clicks but to be featured directly in generative AI responses.
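To make chunking and vector alignment concrete, here is a minimal sketch, not a description of how any AI system actually retrieves content. It assumes headings are marked with a leading `#`, and it uses a bag-of-words count vector as a toy stand-in for a learned embedding. The function names and the sample article are hypothetical.

```python
import math
import re
from collections import Counter


def chunk_by_heading(text):
    """Split text into (heading, body) chunks. Assumes a heading line starts with '#'."""
    chunks = []
    heading, body = "Introduction", []
    for line in text.splitlines():
        if line.startswith("#"):
            if body:
                chunks.append((heading, " ".join(body)))
            heading, body = line.lstrip("# ").strip(), []
        elif line.strip():
            body.append(line.strip())
    if body:
        chunks.append((heading, " ".join(body)))
    return chunks


def vectorize(text):
    """Toy stand-in for an embedding: term counts, not a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_chunks(text, query):
    """Return (score, heading) pairs, best match first."""
    q = vectorize(query)
    scored = [(cosine(vectorize(h + " " + b), q), h) for h, b in chunk_by_heading(text)]
    return sorted(scored, reverse=True)


# Hypothetical sample content, for illustration only.
ARTICLE = """# Waterproofing
Leather hiking boots stay waterproof when treated with wax twice a season.
# Sizing
Hiking boots should fit a half size larger than street shoes.
"""

if __name__ == "__main__":
    for score, heading in rank_chunks(ARTICLE, "how should hiking boots fit"):
        print(f"{score:.2f}  {heading}")
```

In this toy run, the "Sizing" chunk scores highest for the sizing question because it is self-contained and shares the query's vocabulary. A production setup would swap `vectorize` for a real embedding model, but the shape of the exercise stays the same: chunk, vectorize, compare.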

Backlinko’s guide on LLM Seeding introduces a practical framework for getting cited by large language models like ChatGPT and Gemini.

It emphasizes creating chunkable, trustworthy content designed to be surfaced in AI-generated answers – marking a fundamental shift from optimizing for rankings to optimizing for retrieval.

Consider leading brands engaging with AI-first discovery themes:

- Zapier has published educational content on vector embeddings and how they underpin tools like ChatGPT and semantic search (source). While that article doesn’t detail their internal SEO strategies, it shows how marketing teams can start unpacking the concepts that underpin retrieval-based visibility.

→ Correction: An earlier version of this article suggested Zapier had implemented semantic chunking and retrieval optimization. That was an editing error on my part: there’s no public evidence to support that claim.

- Shopify, meanwhile, uses its Shopify Magic tool to generate SEO-optimized product descriptions at scale, integrating generative workflows into day-to-day content ops (source).

→ Takeaway: Shopify ties generative tooling directly to scalable, structured content designed for discovery.

These examples don’t suggest perfect alignment – but they point to how modern teams are beginning to integrate AI thinking into real workflows. That’s the shift: from content creation to content retrieval architecture.

Why The Disconnect Exists (And Persists)

1. Educational Inertia

Updating curriculums is expensive, difficult, and risky for educators.

Many course creators and educational institutions are overwhelmed or ill-equipped to rapidly pivot their syllabi toward advanced semantic optimization and vector embeddings.

2. Hiring Practices & Organizational Habits

Job ads often still emphasize outdated skills, perpetuating the inertia by attracting talent trained in legacy SEO methods rather than future-oriented techniques.

3. Legacy Toolsets

Major SEO platforms like Moz, Semrush, and Ahrefs continue to emphasize metrics like domain authority, keyword volumes, and traditional backlink counts, reinforcing outdated optimization practices.

The Fix: An Outcome-Driven SEO Training Model

To address these problems, SEO training must now shift toward measurable KPIs, clear roles, and task-based learning:

New KPI-Driven Framework:

- Embedding retrieval rate (AI-driven visibility).

- GenAI attribution percentage (citations in AI outputs).

- Vector presence and semantic alignment.

- Trust-signal effectiveness (schema and structured data).

- Re-ranking lift via Reciprocal Rank Fusion (RRF).
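For readers new to the last KPI: RRF is usually expanded as Reciprocal Rank Fusion, a simple way to merge several ranked lists by summing `1 / (k + rank)` for each document across the lists. The sketch below is illustrative only. The page IDs and the two input rankings are hypothetical, `k=60` is a commonly used default rather than a tuned value, and `rank_lift` is a helper invented here to show one way "re-ranking lift" could be measured.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists: score(d) = sum over lists of 1 / (k + rank of d)."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for stable output.
    return sorted(scores, key=lambda d: (-scores[d], d))


def rank_lift(doc_id, baseline, fused):
    """Hypothetical KPI helper: positions gained after fusion (positive = moved up)."""
    return baseline.index(doc_id) - fused.index(doc_id)


# Hypothetical rankings of the same pages from two retrieval methods.
KEYWORD_RANKING = ["page-a", "page-b", "page-c"]
VECTOR_RANKING = ["page-c", "page-a", "page-d"]
FUSED = reciprocal_rank_fusion([KEYWORD_RANKING, VECTOR_RANKING])

if __name__ == "__main__":
    print(FUSED)  # ['page-a', 'page-c', 'page-b', 'page-d']
    print(rank_lift("page-c", KEYWORD_RANKING, FUSED))  # 1
```

Here `page-c` moves up one position relative to the keyword-only ranking because the vector ranking placed it first. Pages that appear near the top of several lists are the ones fusion rewards.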

New Roles And Responsibilities:

- Digital GEOlogist: Optimizes content placement and semantic structure for retrieval. (I know, the title is a joke, but you get the point.)

- Trust-Signal Strategist: Implements schema, citations, and structured credibility signals.

- Cheditor (Chunk Editor): Optimizes chunks of content specifically for LLM consumption and retrievability. If you’re an Editor, you need to be a Cheditor.
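As one example of the trust-signal work described above, here is a small sketch that builds schema.org `Article` markup as JSON-LD with explicit author attribution and cited sources. The helper name `article_jsonld` and every value passed to it are hypothetical. Which properties a given AI system actually reads is an assumption, not something this article documents.

```python
import json


def article_jsonld(headline, author_name, date_published, citations):
    """Build schema.org Article markup as a JSON-LD string (hypothetical helper)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date string
        "citation": list(citations),      # sources the article relies on
    }
    return json.dumps(data, indent=2)


# Placeholder values, for illustration only.
MARKUP = article_jsonld(
    headline="How Hiking Boots Should Fit",
    author_name="Jane Example",
    date_published="2025-01-15",
    citations=["https://example.com/boot-sizing-study"],
)

if __name__ == "__main__":
    # Typically embedded in the page inside a script tag of type application/ld+json.
    print(MARKUP)
```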

Task-Based SEO Education:

- Simulate retrieval via ChatGPT/Perplexity prompt engineering.

- Perform semantic embedding audits to measure content similarity against successful retrieval outputs.

- Conduct regular A/B tests on chunk structures and semantic signals, evaluating real-world retrievability.
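A retrieval test like the ones above can be scored with very little code. The sketch below assumes you have already collected AI answers by hand (for example, by pasting the same prompts into ChatGPT or Perplexity before and after restructuring a page) and saved them as plain strings. It then measures how often a domain is cited in each batch. The function names, sample answers, and `example.com` domain are all hypothetical, and no AI service is called.

```python
import re
from urllib.parse import urlparse

# Rough URL matcher; stops at whitespace, closing brackets, and quotes.
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")


def cited_domains(answer):
    """Return the set of hostnames linked in one answer (leading 'www.' dropped)."""
    hosts = set()
    for url in URL_PATTERN.findall(answer):
        host = urlparse(url.rstrip(".,;")).netloc.lower()
        hosts.add(host[4:] if host.startswith("www.") else host)
    return hosts


def attribution_rate(answers, domain):
    """Share of answers (0.0 to 1.0) that cite `domain` at least once."""
    if not answers:
        return 0.0
    hits = sum(1 for answer in answers if domain in cited_domains(answer))
    return hits / len(answers)


# Hypothetical answers collected for the same prompts, before and after a rewrite.
VARIANT_A = [
    "Try waxing your boots (https://example.com/boots).",
    "See https://other.example.org/guide for sizing advice.",
]
VARIANT_B = [
    "Per https://www.example.com/boots-v2, size up by half.",
    "Source: https://example.com/boots-v2",
]

if __name__ == "__main__":
    print(attribution_rate(VARIANT_A, "example.com"))  # 0.5
    print(attribution_rate(VARIANT_B, "example.com"))  # 1.0
```

Two answers per variant is far too few to conclude anything. A real test would need many prompts and repeated runs, since generative answers vary from one request to the next.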

How To Take Charge: You Are The Resource Now

The reality is stark but empowering: No one’s coming to save your career. Not your company, which may move slowly, nor traditional schools, nor third-party platforms with outdated content.

You won’t find this in a course catalog. If your company hasn’t caught up (and most haven’t), it’s on you to take the lead.

Here’s a practical roadmap to start building your own AI-SEO expertise from the ground up:

Month 1: Build Your Foundation

- Complete foundational AI courses:

- Share key learnings internally.

Month 2: Tactical Skill-Building

- Complete practical SEO-specific courses:

- Start sharing actionable tips via Slack or internal newsletters.

Month 3: Community And Collaboration

- Organize “Lunch & Learns” or internal SEO Labs focused on semantic chunking, embeddings, and trust-signal engineering.

- Engage actively in external communities (Discord groups, LinkedIn SEO groups, online forums like Moz Q&A) to deepen your knowledge.

Month 4: Institutionalize Your Expertise

- Formally propose and launch an internal “AI-SEO Center of Excellence.”

- Run practical retrieval simulations, document results, and showcase tangible improvements to secure ongoing investment and visibility internally.

Turning Learning Into Leadership

Once you’ve built momentum with personal upskilling, don’t stop at silent improvement. Make your learning visible, and valuable, by creating change around you:

- Host SEO-AI Micro Sessions: Run short, focused sessions (15-20 minutes) on topics like semantic chunking, retrieval testing, or schema design. Keep them informal, repeatable, and useful.

- Run Retrieval Audits: Pick three to five high-priority URLs and test them in ChatGPT, Gemini, or Perplexity. Which content blocks surface? What gets ignored? Share your findings openly.

- Build a Knowledge Hub: Use Notion, Google Docs, or Confluence to create a centralized space for SEO-AI strategies, test results, tools, and templates.

- Create a Weekly AI Digest: Curate key updates from the field – citations appearing in generative answers, new tools, useful prompts – and circulate them internally.

- Recruit Allies: Invite collaborators to contribute retrieval tests, co-host sessions, or flag examples of your content appearing in AI answers. Leadership scales faster with support.

This is how you shift from learner to leader. You’re no longer just upskilling; you’re operationalizing AI search inside your company.

You Are The Catalyst: Take Action Now

The roles of traditional SEO specialists will shift (or fade?), replaced by experts fluent in semantic optimization and retrievability.

Become the person who educates your company because you educated yourself first.

Your role isn’t just to keep up; it’s to lead. The responsibility, and the opportunity, sit with you right now.

Don’t wait for your company to catch up or for course platforms to get current. Take action. The new discovery systems are already here, and the people who learn to work with them will define the next era of visibility.

- If you teach SEO, rewrite your courses around these new KPIs and roles.

- If you hire SEO talent, demand modern optimization skills: semantic embeddings knowledge, chunk structuring experience, retrieval simulation approaches.

- If you practice SEO, proactively shift your efforts toward retrieval testing, embedding audits, and semantic optimization immediately.

SEO isn’t dying; it’s evolving.

And you have an opportunity, right now, to be at the forefront of this evolution.


This post was originally published on Duane Forrester Decodes.

Featured Image: Rawpixel.com/Shutterstock