Turning data into actionable insights: a data-driven SEO strategy

Modern SEO is all about data. Rankings and user behavior can change overnight, and search engines increasingly use AI to power their results. To respond, base your decisions on real, measurable insights. This article offers a practical way to turn SEO data into actionable insights.

The role of data in modern SEO

The search landscape is more complex than ever, so you need all the help you can get. By analyzing data, SEOs and business owners can learn what works and what doesn’t. Metrics from tools like Google Analytics and Search Console show how visitors behave, which keywords they use, and how pages perform. Using data to make decisions takes the guesswork out of SEO.

Good data gives you a clear picture of user engagement. For instance, tracking engagement time, engagement rates, and click-through rates will reveal whether content meets audience needs. Insights like these uncover gaps that might hinder performance, and they help you decide what to focus on and what to prioritize.

Data doesn’t just identify issues, but also opportunities. Trends in keyword performance or a shift in traffic sources can lead to new content ideas or a new market to target. This is data-driven marketing, as you are making decisions based on evidence instead of hunches. These insights will lead to strategies focused on real user behaviors, which should lead to better results.

The goal isn’t to find interesting stats — it’s to find what you can do next. In SEO and AI-driven search, the data that matters is the data that leads to action: fix this page, shift that content, change how you’re showing up. If your insights don’t lead to decisions, they’re just noise.

Carolyn Shelby – Principal SEO at Yoast

A Yoast example

Let’s take a simple example from Yoast. We noticed one of our articles (What is SEO?) was gradually losing traffic and slipping in the rankings for key terms. The content hadn’t been updated for a while, so we took a closer look. We analyzed the search results and compared our article with those from competitors. We looked at intent, structures, relevance, and freshness. It was easy to see that our article lacked depth and context in key areas.

We wrote a good brief for the article and detailed the work needed. Then, we rewrote sections, updated examples, improved internal linking, and made it generally easier to read. We also added new custom graphics and on-topic expert quotes from our in-house Principal SEO, Alex Moss.

After republishing, the article quickly regained visibility and climbed back towards the top of the search results, which brought in extra traffic. This was a clear reminder for us: when data shows a drop, improving the quality of the content, backed by a good analysis, can still win.

Turning data into insights

You need a process to turn raw data into valuable insights quickly and systematically. These insights come once you ask the right SEO questions, gather the data, analyze it, and plan accordingly.

Start with your goals, then ask: what’s holding us back? Actionable insights live in the gap between where you are and where you’re trying to go. That gap is different for every site and that’s what makes good analysis so powerful.

Carolyn Shelby – Principal SEO at Yoast

Step 1: What do you want to know?

Start by writing down the SEO questions you want answered. Do you want to improve performance, get more organic traffic, increase engagement, or analyze a traffic drop? For instance, an online store owner might want to understand why certain product pages don’t convert as well as expected. Thinking these things through before you start digging into the data makes it easier to focus on the metrics that matter.

Step 2: Gather the relevant data

Collect the data you need using tools like Google Analytics, Semrush, Wincher, Ahrefs, or other platforms that can power your data-driven SEO strategy. If you’d like to investigate a product page with subpar performance, you’ll look at page views, click-through rates, average engagement times, and engagement rates in GA4. Data like this should help you find and address the issues.

Step 3: Analyze and spot trends

Dive into the data and try to spot patterns and trends. For example, an educational site might notice that articles on a particular topic get a lot of traffic but low engagement. Digging deeper might reveal that the titles of the articles attract visitors, but for some reason, the content doesn’t keep them interested. Trends like these help turn that data into insights that you can act upon. You can also use segmentation to compare groups of visitors, for example people from specific regions, who could engage wildly differently with your content.
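The "lots of traffic, little engagement" pattern can be checked with a few lines of Python. This is only a minimal sketch: the page paths and numbers are made up, the rows stand in for whatever per-page export your analytics tool gives you, and the 0.5 engagement threshold is an arbitrary assumption you would tune for your own site.

```python
from statistics import median

# Hypothetical per-page export: (page path, views, engagement rate from 0 to 1)
pages = [
    ("/guides/keyword-research", 12000, 0.31),
    ("/guides/link-building", 9500, 0.62),
    ("/blog/site-speed-tips", 800, 0.28),
    ("/blog/what-is-seo", 15000, 0.66),
    ("/blog/schema-basics", 700, 0.71),
]


def flag_attention_gaps(rows, min_engagement=0.5):
    """Return pages with above-median views but engagement below the threshold."""
    median_views = median(views for _, views, _ in rows)
    return [
        path
        for path, views, engagement in rows
        if views > median_views and engagement < min_engagement
    ]


# Pages that attract visitors but do not hold their attention
flagged = flag_attention_gaps(pages)
print(flagged)
```

Pages that end up in `flagged` are candidates for a closer look at whether the content delivers what the title promises.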

Step 4: Turn findings into actions

Once you’ve pinpointed the issues, it’s time to decide what you want to do. For instance, if you’ve found that an article has a low engagement rate because the page takes too long to load, you could optimize the images and scripts on the page. If you find that some keywords get traffic but no conversions, you might need to improve the CTA on the page, or you might have a search intent mismatch to fix. Deciding on a concrete fix is what turns insights from data into actionable insights.

This is a nicely structured way of getting the insights needed to inform your data-driven SEO strategy. You can use every piece of information you find to improve your work as you go. This will not only help you understand the data but also make it easier to make the improvements needed to reach your SEO and business goals.

An example: Addressing brand performance in LLMs

For this example, think of a tech publisher named Digital Mosaic. It’s a reputable source for in-depth news from the tech industry. Recently, their marketing team noticed something off. Users interacting with AI search engines and large language models (LLMs) like Google Gemini or ChatGPT rarely saw mentions of the Digital Mosaic brand. In other words, even when asked for the latest tech insights, the AI-driven sources and answers often omitted Digital Mosaic in favor of other options.

After finding the issue, the team started analyzing data from various analytics platforms, brand mention trackers, and user surveys. They found their SEO and content work was pretty good, but the content was not properly optimized to help LLMs surface it. The data showed that their content lacked the language and brand signals needed to help LLMs understand the brand’s authority.

With this finding in hand, the team got to work on improving how LLMs perceive their content:

Improving brand signals

The content team added clearer brand signals to their content, and each post received better metadata and structured data. The goal was to clearly tie the brand to the content to help LLMs recognize the sources.

Changes in content

Next, the team restructured certain articles to include branded segments, such as “Digital Mosaic Exclusive Analysis” or “Today’s Tech Insights by Digital Mosaic”. This made the brand more visible to users and gave LLMs a chance to associate the content with the brand as a trusted source.

Investing in partnerships and collaboration

The publisher set up a series of collaborations with well-known tech influencers and other outlets. They made co-branded content and were mentioned in many podcasts and webinars. This helped improve the brand’s presence in online conversations, which matters because LLMs often draw on what third-party sites say about a brand when generating responses.

Rinse and repeat

The team reviewed the impact of the changes to see whether brand mentions in LLM output improved. They used AI brand monitoring tools to monitor and simulate LLM outputs and check whether the work was effective. Based on their findings, they fine-tuned their work and continued to improve performance.
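To make this kind of monitoring concrete, here is a minimal sketch of one way to track brand mentions over time. It assumes you have already saved a set of AI answers to the same prompts before and after your changes; the sample answers, the other outlet names, and the `mention_rate` helper are all hypothetical, and this is not how any particular monitoring tool works.

```python
import re


def mention_rate(responses, brand):
    """Share of responses that mention the brand (case-insensitive match)."""
    if not responses:
        return 0.0
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    hits = sum(1 for text in responses if pattern.search(text))
    return hits / len(responses)


# Hypothetical saved answers to the same prompts, before and after the changes
before = [
    "For tech news, try Outlet A or Outlet B.",
    "Outlet A publishes in-depth analysis.",
    "Digital Mosaic and Outlet B both cover this.",
    "Outlet B is a common recommendation.",
]
after = [
    "Digital Mosaic offers in-depth tech reporting.",
    "Try Outlet A or digital mosaic for analysis.",
    "Outlet B is a common recommendation.",
    "Sources include Digital Mosaic and Outlet A.",
]

rate_before = mention_rate(before, "Digital Mosaic")
rate_after = mention_rate(after, "Digital Mosaic")
print(f"Mention rate: {rate_before:.0%} before, {rate_after:.0%} after")
```

A handful of answers is far too small a sample to draw conclusions from; in practice you would want many prompts, repeated over several weeks.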

Within a few months, the results were encouraging. LLMs were increasingly showing content from and mentioning Digital Mosaic, and the brand’s footprint in LLMs was steadily improving. This did not just help visibility and increase the brand’s authority in the industry, but also led to a new source of traffic from AI search interfaces.

This fictional example shows how a publisher can use data insights to overcome a very specific challenge. Mixing traditional SEO solutions with new technologies helped Digital Mosaic turn data into actionable insights. Not only did it help the brand’s visibility right now, but it also prepared it for the AI-powered future.

Read more: How to optimize content for AI LLM comprehension using Yoast’s tools.

You need the right tools to turn data into actionable insights. This will be a mix of the tools we all know and love, and more specific ones to understand user behavior and site performance.

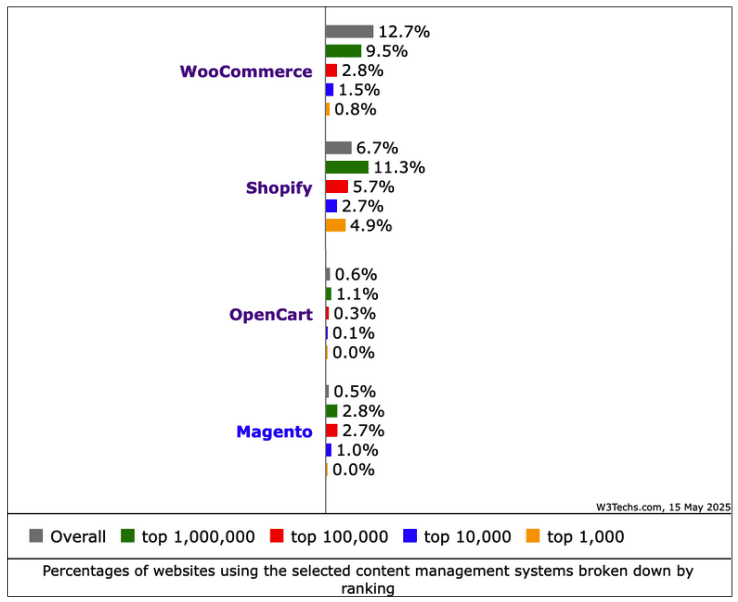

We all start with Google Analytics 4 and Search Console. GA4 tracks many metrics, including user engagement, event counts, and traffic sources. Properly set up, it gives you a good overview of how users use your site. Search Console shows how your site performs in the SERPs, including keyword rankings, indexing status, and crawl errors.

Tools like Ahrefs and Semrush provide information about backlinks, rankings, and search trends. These search marketing tools also have many features for competitive analysis and keyword research. You’ll get a big database of historical data, so you can spot and interpret trends over time. This data helps you with your data-driven marketing on all fronts.

Advanced techniques and technologies

There are many ways to dive deeper into your data and find the insights you need. Beyond the basics, you can use:

- Segmentation: It could help to break up your data into specific audience segments. For instance, you could look at visitor behavior based on demographics, location, or the type of device they use. Segmenting data helps you understand why certain groups behave differently. For instance, if mobile users show lower engagement than desktop users, there might be something wrong with your mobile site.

- Trend analysis: Don’t just focus on looking at data for a specific day. It’s often better to look at metrics over different time periods. Look at the monthly or quarterly performance. This gives you an idea of the long-term impact of changes.

- Build dashboards to visualize data: Make a dashboard with data from various sources. Use tools like Looker Studio to combine Google data with data from SEO tools like Semrush and Ahrefs. This gives you reports that show all key data at a glance. A dashboard makes it easier to understand data and communicate it to other team members or management.

- Big data: Big data is becoming increasingly important for data-driven SEO. Huge data sets can reveal insights that smaller sets miss. They allow you to examine user behavior, search trends, and site performance at scale. With machine learning and automation, you can use big data to get better and faster results to inform your SEO strategy.
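Segmentation and trend analysis both come down to grouping the same rows by a different column. The sketch below illustrates that under stated assumptions: the rows are invented, and the column layout (month, device, sessions, engaged sessions) is a hypothetical export format. Engagement rate is calculated as engaged sessions divided by sessions, which is how GA4 defines it.

```python
from collections import defaultdict

# Hypothetical rows: (month, device, sessions, engaged sessions)
rows = [
    ("2024-01", "desktop", 1000, 640),
    ("2024-01", "mobile", 1500, 600),
    ("2024-02", "desktop", 1100, 715),
    ("2024-02", "mobile", 1600, 560),
    ("2024-03", "desktop", 1050, 672),
    ("2024-03", "mobile", 1700, 510),
]


def engagement_by(rows, key_index):
    """Aggregate engagement rate (engaged / sessions) by the chosen column."""
    totals = defaultdict(lambda: [0, 0])  # key -> [sessions, engaged]
    for row in rows:
        totals[row[key_index]][0] += row[2]
        totals[row[key_index]][1] += row[3]
    return {key: engaged / sessions for key, (sessions, engaged) in totals.items()}


by_device = engagement_by(rows, key_index=1)  # segmentation
by_month = engagement_by(rows, key_index=0)   # trend over time

for label, rates in (("device", by_device), ("month", by_month)):
    for key, rate in sorted(rates.items()):
        print(f"{label} {key}: {rate:.1%}")
```

In this made-up data, mobile engagement is well below desktop and the overall rate declines month over month, which is the kind of signal that would prompt a closer look at the mobile experience.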

Iterative optimization and reporting

SEO is an ongoing process, and you’ll have to adjust course regularly. Don’t treat your site’s performance as a snapshot, but as something dynamic that evolves over time. Regularly looking at your data keeps you on top of things, from changes in user behavior to emerging search trends.

Make it a routine

Schedule your data reviews. This might mean daily checks for urgent work or weekly checks to track short-term changes. For long-term trends, do monthly or quarterly deep dives. Routine analysis helps you spot patterns that might not be so obvious at first glance.

Test and experiment

With an iterative optimization approach, you test what works. For example, you could A/B test different page layouts, CTA buttons, or various meta titles. You might also try different content formats to see what gets more engagement. These tests will get you the data and insights needed to make the most of your SEO work.
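When a test compares two variants on a rate such as click-through, a two-proportion z-test is one common way to judge whether the difference is more than noise. The sketch below is only an illustration: the click and view counts are invented, and it assumes traffic was split randomly and comparably between the variants, which is often hard to guarantee for changes that affect how a page appears in search results.

```python
from math import erf, sqrt


def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Return (z, two-sided p-value) for the difference between two rates."""
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b
    # Pooled rate under the assumption that both variants perform the same
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (rate_b - rate_a) / std_err
    # Standard normal CDF expressed through the error function
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)


# Hypothetical results: variant A is the current CTA, variant B the new one
z, p_value = two_proportion_z_test(
    clicks_a=200, views_a=10_000, clicks_b=260, views_b=10_000
)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value suggests the difference is unlikely to be chance alone, but it says nothing about whether the gain is large enough to matter for your goals.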

Feedback loop

A true feedback loop helps validate your improvements. After turning data into actionable insights, implement the changes in your content or technical SEO work. Keep updating your data to see if you need to refine your strategy. If a new tactic works, adopt it as a standard practice. But if it doesn’t work as intended, find out why and try a variation of it. Measuring the results of trial and error and adapting your tactics keeps you flexible and responsive.

Towards a data-driven SEO strategy

Using the knowledge you gain from turning data into actionable insights can greatly improve your SEO performance. Be sure to structure the data-gathering process: ask the right questions, collect the right data, analyze the trends, and create a system that turns those insights into action.

What exactly you change on your site matters less; it might be updating metadata, improving content, or diving into technical SEO aspects. What matters is that the change answers the questions you set out to answer.

Every insight can lead to big improvements in rankings and user engagement. Use this data-driven marketing approach to make the right decisions that will keep your SEO strategy effective in the future.