Who should get a uterus transplant? Experts aren’t sure.

This article first appeared in The Checkup, MIT Technology Review’s weekly biotech newsletter.

Earlier this year, a boy in Sweden celebrated his 10th birthday. Reproductive scientists and doctors marked the occasion too. This little boy’s birth had been special. He was the first person to be born from a transplanted uterus.

The boy was born in 2014 after his mother, a 35-year-old woman who had been born without a uterus, received a donated uterus from a 61-year-old close family friend. At the time, she was one of only 11 women who had undergone the experimental procedure.

A decade on, over 135 uterus transplants have been performed globally, resulting in the births of over 50 healthy babies. The surgery has had profound consequences for these families—the recipients would not have been able to experience pregnancy any other way.

But legal and ethical questions continue to surround the procedure, which is still considered experimental. Who should be offered a uterus transplant? Could the procedure ever be offered to transgender women? And if so, who should pay for these surgeries?

These issues were raised at a recent virtual event run by Progress Educational Trust, a UK-based charity that aims to provide information to the public on genomics and infertility. One of the speakers was Mats Brännström, who led the team at the University of Gothenburg that performed the first successful transplant.

For Brännström, the story of uterus transplantation begins in 1998. While traveling in Australia, he said, he met a 27-year-old woman called Angela, who longed to be pregnant but lacked a functional uterus. She suggested to Brännström that her mother could donate hers. “I was amazed I hadn’t thought of it before,” he said.

According to Brännström, around 1 in 500 women experience infertility due to what’s known as absolute uterine factor infertility, or AUFI, meaning they do not have a functional uterus. Uterus transplants could offer them a way to get pregnant.

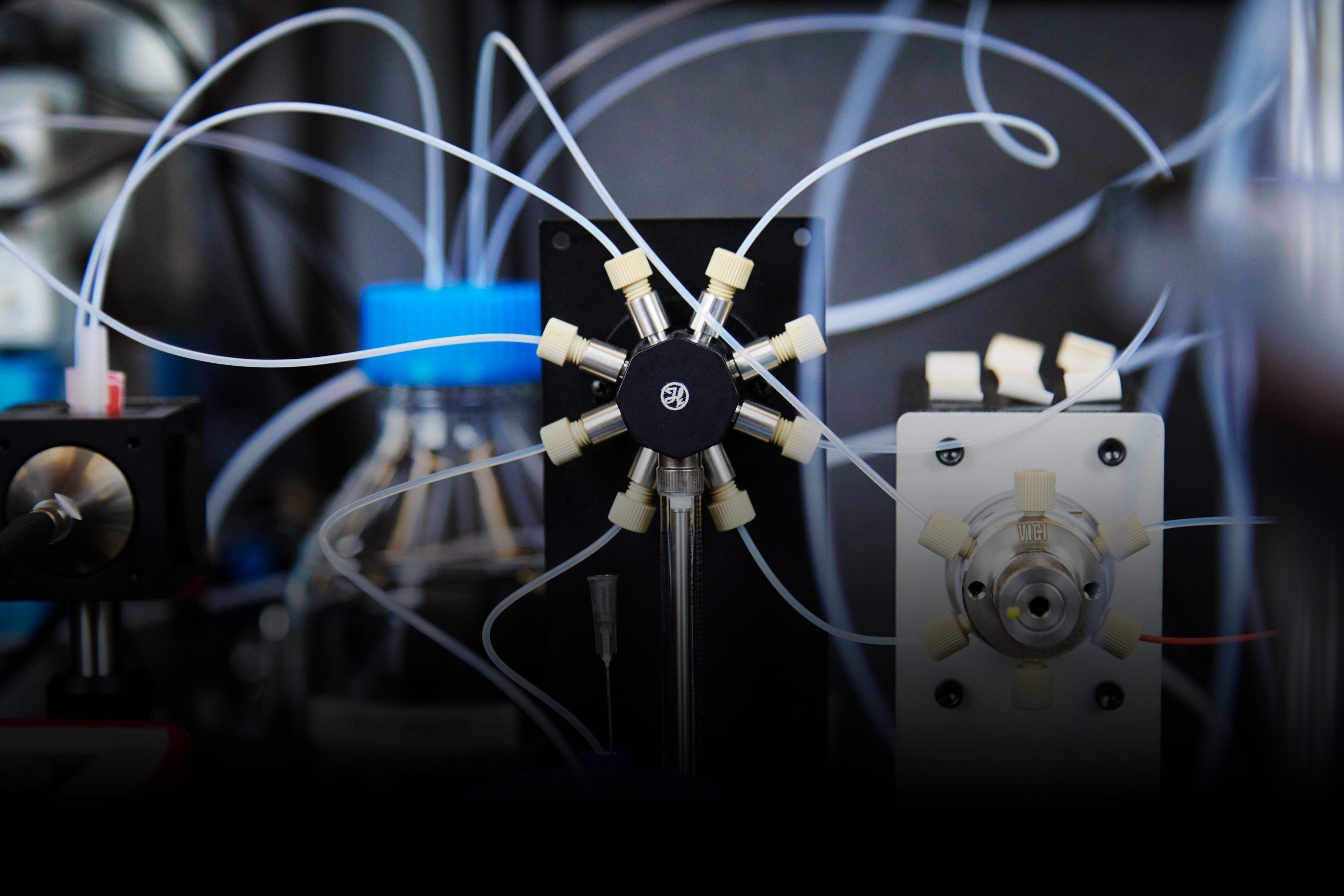

His meeting with Angela kick-started a research project that began in mice and eventually moved on to pigs, sheep, and baboons. Brännström’s team began performing uterus transplants in women in 2012 as part of a small clinical trial. In that trial, all the donors were living, and in many cases they were the mothers or aunts of the recipients.

The surgeries ended up being more complicated than he had anticipated, said Brännström. The operation to remove a donor’s uterus was expected to take between three and four hours. It ended up taking between eight and 11 hours.

In that first trial, Brännström’s team transplanted uteruses into nine women, each of whom had IVF to create and store embryos beforehand. The woman who was the first to give birth had IVF over a 12-month period, which ended six months before her surgery. It took a little over 10 hours to remove the uterus from the donor, and just under five hours to stitch it into the recipient.

The recipient started getting her period 43 days after her transplant. Doctors transferred one of her embryos into the uterus a year after her surgery. Three weeks later, a pregnancy test confirmed she was pregnant.

At 31 weeks, she was admitted to hospital with preeclampsia, a serious medical condition that can develop during pregnancy, and her baby was delivered by C-section 16 hours later. She was discharged from hospital after three days, although the baby spent 16 days in the hospital’s neonatal unit.

Despite those difficulties, her story is considered a success. Other uterus recipients have also experienced pregnancy complications, and some have had surgical complications. And all transplant recipients must adhere to a regimen of immunosuppressant drugs, which can have side effects.

The uteruses aren’t intended to last forever, either. Surgeons remove them once the recipients have completed their families, often after one or two children. The removal is also a significant operation.

Given all that, uterus transplants are not to be taken lightly. And there are other paths to parenthood. Some ethicists are concerned that in pursuing uterus transplantation as a fertility treatment, we might reinforce ideas that define a woman’s value in terms of her reproductive potential, Natasha Hammond-Browning, a legal scholar at Cardiff University in Wales, said at the event. “There is debate around whether we should be giving greater preference to adoption, to surrogacy, and to supporting children who already exist and who need care,” she said.

We also need to consider whether there is a “right to gestate,” and if there is, who has that right, said Hammond-Browning. And these concerns need to be balanced with the importance of reproductive autonomy—the idea that people have the right to decide and control their own reproductive efforts.

Further questions remain over whether uterus transplants might ever be an option for trans women, who not only lack a uterus but also have a different pelvic anatomy. I asked the speakers if the surgery might ever be feasible. They weren’t hugely optimistic that it would be, at least in the near future.

“I personally think that the transgender community have been given … false hope for responsible transplantation in the near future,” was the response of J. Richard Smith of Imperial College London, who co-led the first uterus transplant performed in the UK. Even cisgender women who have needed surgery to create “neovaginas” aren’t eligible for the uterus transplants his team are offering as part of a clinical study. They have an altered vaginal microbiome that appears to increase the risk of miscarriage, he said.

“There is a huge amount of work to be done before this work can be translated to the transgender community,” Smith said. Brännström agreed but added that he thinks the surgery will be available at some point—just after a lot more research.

And then there are the legal and ethical questions, none of which have easy answers. Hammond-Browning pointed out that clinical teams would first need to determine what the goal of such an operation would be. Is it about reproduction or gender realignment, for example? And how might that goal influence decisions over who should get a donated uterus, and why?

Considering that only about 135 human uterus transplants have ever been carried out, we still have a lot to learn about the best way to perform them. (For context, more than 25,000 kidney transplants were carried out in 2023 in the US alone.) Researchers are still figuring out how uteruses from deceased donors differ from those of living ones, and how to minimize complications in young, healthy women. Since that little boy was born 10 years ago, only about 50 other children have been born in a similar way. It’s still early days.

Now read the rest of The Checkup

Read more from MIT Technology Review

The first birth following the transplantation of a uterus from a dead donor happened in 2017. A team in Brazil transferred the uterus of a 45-year-old donor, who had died from a brain hemorrhage, to a 32-year-old recipient born without a uterus.

Researchers are working on artificial wombs—“biobags” designed to care for premature babies. They have been tested on lambs and piglets. Now FDA advisors are figuring out how to move the technology into human trials.

An alternative type of artificial womb is being used to grow mouse embryos. Jacob Hanna at the Weizmann Institute of Science and his colleagues say they’ve been able to grow embryos in this environment for 11 or 12 days—around half the animal’s gestational period.

Research is underway to develop new fertility options for transgender men. Some of these men are put off by existing approaches, which tend to involve pausing hormone therapy and undergoing potentially distressing procedures.

From around the web

People on Ozempic, Wegovy, and similar drugs are losing their appetite for sugary, ultraprocessed foods. The food industry will have to adapt. (TIL Nestlé has already started a line of frozen meals targeted at people on these weight-loss drugs.) (The New York Times Magazine)

People who have a history of obesity can find it harder to lose weight. That might be because the fat cells in our bodies seem to “remember” that history and have an altered response to food. (The Guardian)

Robert F. Kennedy Jr. took leave as chairman of Children’s Health Defense, a nonprofit known for spreading doubt about vaccines, to run for US president last year. But he is still involved in legal cases filed by the group. And several of its cases remain open, including ones against the Food and Drug Administration, the Centers for Disease Control and Prevention, and the National Institutes of Health—all agencies Kennedy would lead if his nomination for head of Health and Human Services is confirmed. (STAT)

Researchers are among the millions of new users of Bluesky, a social media alternative to X (formerly known as Twitter). “There is this pent-up demand among scientists for what is essentially the old Twitter,” says one researcher who found that the number of influential scientists using the platform doubled between August and November. (Science)

Since 2016, a team of around 100 scientists have been working to catalogue the 37 trillion or so cells in the human body. This week, the Human Cell Atlas published a collection of studies that represents a significant first step toward that goal—including maps of cells in the nervous system, lungs, heart, gut, and immune system. (Nature)