How conspiracy theories infiltrated the doctor’s office

As anyone who has googled their symptoms and convinced themselves that they’ve got a brain tumor will attest, the internet makes it very easy to self-(mis)diagnose your health problems. And although social media and other digital forums can be a lifeline for some people looking for a diagnosis or community, when that information is wrong, it can put their well-being and even lives in danger.

Unfortunately, this modern impulse to “do your own research” became even more pronounced during the coronavirus pandemic.

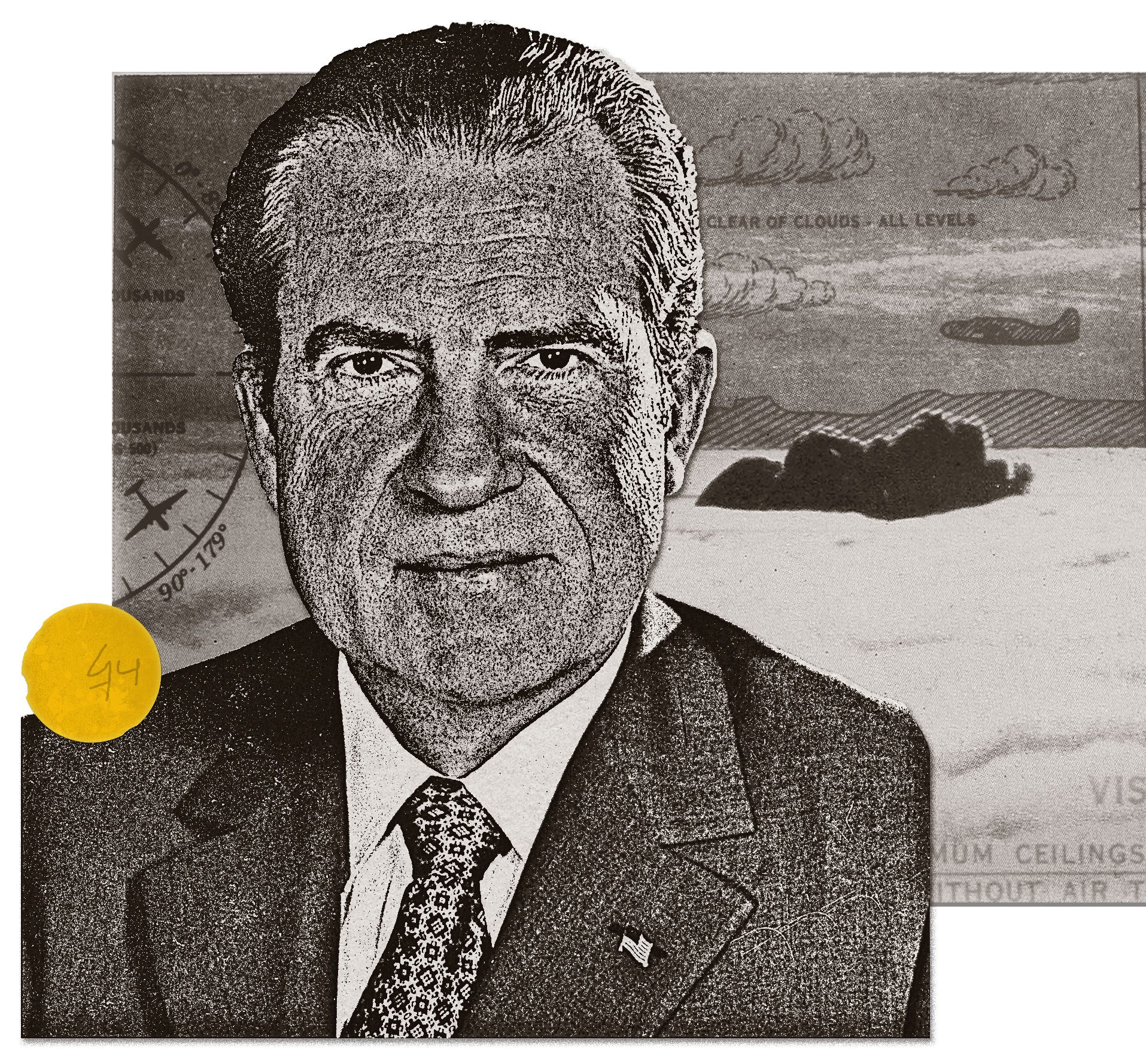

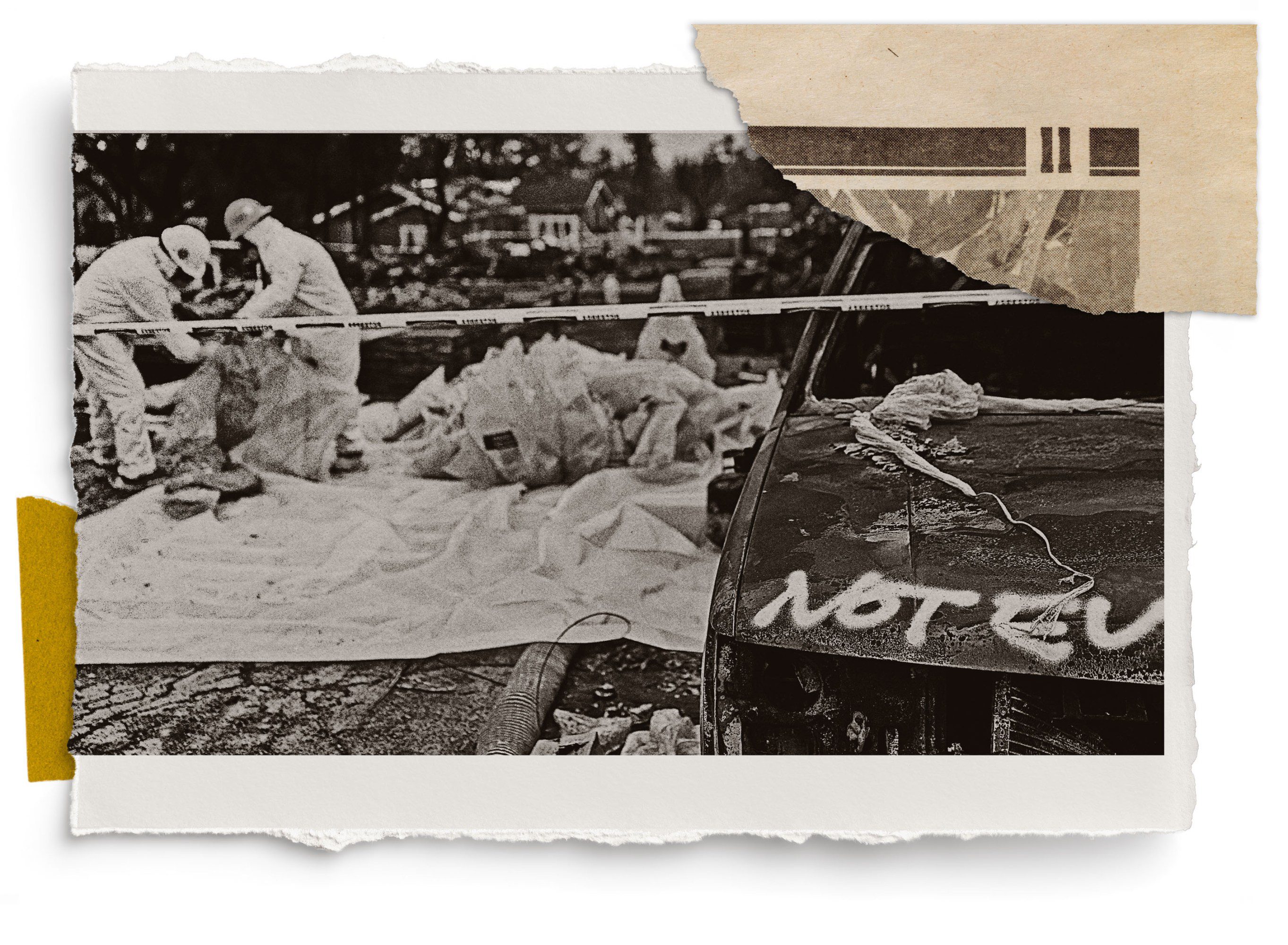

This story is part of MIT Technology Review’s series “The New Conspiracy Age,” on how the present boom in conspiracy theories is reshaping science and technology.

We asked a number of health-care professionals about how this shifting landscape is changing their profession. They told us that they are being forced to adapt how they treat patients. It’s a wide range of experiences: Some say patients tell them they just want more information about certain treatments because they’re concerned about how effective they are. Others hear that their patients just don’t trust the powers that be. Still others say patients are rejecting evidence-based medicine altogether in favor of alternative theories they’ve come across online.

These are their stories, in their own words.

Interviews have been edited for length and clarity.

The physician trying to set shared goals

David Scales

Internal medicine hospitalist and assistant professor of medicine, Weill Cornell Medical College

New York City

Every one of my colleagues has stories about patients who have rejected care, or had very peculiar perspectives on what their care should be. Sometimes that’s driven by religion. But I think what has changed is people, not necessarily with a religious standpoint, having very fixed beliefs that are sometimes, based on all the evidence that we have, in contradiction with their health goals. And that is a very challenging situation.

I once treated a patient with a connective tissue disease called Ehlers-Danlos syndrome. While there’s no doubt that the illness exists, there’s a lot of doubt and uncertainty over which symptoms can be attributed to Ehlers-Danlos. This means it can fall into what social scientists call a “contested illness.”

Contested illnesses used to be causes for arguably fringe movements, but they have become much more prominent since the rise of social media in the mid-2010s. Patients often search for information that resonates with their experience.

This patient was very hesitant about various treatments, and it was clear she was getting her information from, I would say, suspect sources. She’d been following people online who were not necessarily trustworthy, so I sat down with her and we looked them up on Quackwatch, a site that lists health myths and misconduct.

She was still accepting of treatment, was extremely knowledgeable, and had done a lot of her own research. But she struggled to tell the difference between good and bad sources, and to recognize fixed beliefs that overemphasize particular things, like which symptoms might be attributable to other causes.

Physicians have the tools to work with patients who are struggling with these challenges. The first is motivational interviewing, a counseling technique that was developed for people with substance-use disorders. It’s a nonjudgmental approach that uses open-ended questions to draw out people’s motivations, and to find where there’s a mismatch between their behaviors and their beliefs. It’s highly effective in treating people who are vaccine-hesitant.

Another is an approach called shared decision-making. First we work out what the patient’s goals are and then figure out a way to align those with what we know about the evidence-based way to treat them. It’s something we use for end-of-life care, too.

What’s concerning to me is that there seems to be a dynamic of patients coming in with fixed beliefs about how their illness should be diagnosed and how their symptoms should be treated, in a way that’s completely divorced from the kinds of medicine you’d find in textbooks, and that the same dynamic is starting to extend to other illnesses, too.

The therapist committed to being there when the conspiracy fever breaks

Damien Stewart

Psychologist

Warsaw, Poland

Before covid, I hadn’t really had any clients bring conspiracy theories into my practice. But once the pandemic began, they went from being fun or harmless to something dangerous.

In my experience, vaccines were the topic where I first really started to see some militancy—people who were looking down the barrel of losing their jobs because they wouldn’t get vaccinated. At one point, I had an out-and-out conspiracy theorist say to me, “I might as well wear a yellow star like the Jews during the Holocaust, because I won’t get vaccinated.”

I felt pure anger, and I reached a point in my therapeutic journey I didn’t know would ever occur—I’d found that I had a line that could be crossed by a client that I could not tolerate. I spoke in a very direct manner he probably wasn’t used to and challenged his conspiracy theory. He got very angry and hung up the call.

It made me figure out how I was going to deal with this in future, and to develop an approach: not to challenge the conspiracy theory, but to gently talk through it, to provide alternative points of view and ask questions. I try to find the therapeutic value in the information, in the conversations we’re having. My belief, and the evidence seems to bear this out, is that people believe in conspiracy theories because there’s something wrong in their life that is inexplicable, and they need something to explain what’s happening to them. And even if I have no belief or agreement whatsoever in what they’re saying, I think I need to sit here and have this conversation, because one day this person might snap out of it, and I need to be here when that happens.

As a psychologist, you have to remember that these people who believe in these things are extremely vulnerable. So my anger around these conspiracy theories has changed from being directed toward the deliverer—the person sitting in front of me saying these things—to the people driving the theories.

The emergency room doctor trying to get patients to reconnect with the evidence

Luis Aguilar Montalvan

Attending emergency medicine physician

Queens, New York

The emergency department is essentially the pulse of what is happening in society. That’s what really attracted me to it. And I think the job of the emergency doctor, particularly amid shifting political views or beliefs about Western medicine, is to try to reconnect with someone: to create the experience that you need to prime someone to hopefully reconsider their relationship with evidence-based medicine.

When I was working in the pediatrics emergency department a few years ago, we saw a resurgence of diseases we thought we had eradicated, like measles. I typically framed it by saying to the child’s caregiver: “This is a disease we typically use vaccines for, and they can prevent it in the majority of people.”

The sentiment among my adult patients who are reluctant to get vaccinated or take certain medications seems to stem from a mistrust of the government or “The System” rather than from anything Robert F. Kennedy Jr. says directly, for example. I’m definitely seeing more patients these days asking me what they can take to manage a condition or pain that’s not medication. I tell them that the knowledge I have is based on science, and explain the medications I’d typically give other people in their situation. I try to give them autonomy while reintroducing the idea of sticking with the evidence, and for the most part they’re appreciative and courteous.

The role of doctor has changed in recent years—there’s been a cultural change. My understanding is that back in the day, what the doctor said, the patient did. Some doctors used to shame parents who hadn’t vaccinated their kids. Now we’re shifting away from that, and the doctor is now more like a consultant or a customer service provider than the authority. I think that could be because we’ve seen a lot of bad actors in medicine, so the power dynamic has changed.

I think if we had a more unified approach at a national level, if they had an actual unified and transparent relationship with the population, that would set us up right. But I’m not sure we’ve ever had it.

The psychologist who supported severely mentally ill patients through the pandemic

Michelle Sallee

Psychologist, board certified in serious mental illness psychology

Oakland, California

I’m a clinical psychologist who only works with people who have been in the hospital three or more times in the last 12 months. I do both individual therapy and a lot of group work, and several years ago during the pandemic, I wrote a 10-week program for patients about how to cope with sheltering in place, following safety guidelines, and their concerns about vaccines.

My groups were very structured around evidence-based practice, and I had rules for the groups. First, I would tell people that the goal was not to talk them out of their conspiracy theory; my goal was not to talk them into a vaccination. My goal was to provide a safe place for them to be able to talk about things that were terrifying to them. We wanted to reduce anxiety, depression, thoughts of suicide, and the need for psychiatric hospitalizations.

Half of the group was pro–public health requirements, and their paranoia and fear for safety was around people who don’t get vaccinated; the other half might have been strongly opposed to anyone other than themselves deciding they need a vaccination or a mask. Both sides were fearing for their lives—but from each other.

I wanted to make sure everybody felt heard, and it was really important to be able to talk about what they believed—like, some people felt like the government was trying to track us and even kill us—without any judgment from other people. My theory is that if you allow people to talk freely about what’s on their mind without blocking them with your own opinions or judgment, they will find their way eventually. And a lot of times that works.

People have been stuck on their conspiracy theory, or their paranoia has been stuck on it, for a long time because they’re always fighting with people about it and everyone’s telling them that this is not true. So we would just have an open discussion about these things.

I ran the program four times for a total of 27 people, and the thing that I remember the most was how respectful and tolerant and empathic, but still honest about their feelings and opinions, everybody was. At the end of the program, most participants reported a decrease in pandemic-related stress. Half reported a decrease in general perceived stress, and half reported no change.

I’d say that the rate of how much vaccines are talked about now is significantly lower, and covid doesn’t really come up anymore. But other medical illnesses come up—patients saying, “My doctor said I need to get this surgery, but I know who they’re working for.” Everybody has their concerns, but when a person with psychosis has concerns, it becomes delusional, paranoid, and psychotic.

I’d like to see more providers be given more training around severe mental illness. These are not just people who just need to go to the hospital to get remedicated for a couple of days. There’s a whole life that needs to get looked at here, and they deserve that. I’d like to see more group settings with a combination of psychoeducation, evidence-based research, skills training, and process, because the research says that’s the combination that’s really important.

Editor’s note: Sallee works for a large HMO psychiatry department, and her account here is not on behalf of, endorsed by, or speaking for any larger organization.

The epidemiologist rethinking how to bridge differences in culture and community

John Wright

Clinician and epidemiologist

Bradford, United Kingdom

I work in Bradford, the fifth-biggest city in the UK. It has a big South Asian population and high levels of deprivation. Before covid, I’d say there was growing awareness about conspiracies. But during the pandemic, I think that lockdown, isolation, fear of this unknown virus, and then the uncertainty about the future came together in a perfect storm to highlight people’s latent attraction to alternative hypotheses and conspiracies—it was fertile ground. I’ve been a National Health Service doctor for almost 40 years, and until recently, the NHS had a great reputation, with great trust, and great public support. The pandemic was the first time that I started seeing that erode.

It wasn’t just conspiracies about vaccines or new drugs, either—it was also an undermining of trust in public institutions. I remember an older woman who had come into the emergency department with covid. She was very unwell, but she just wouldn’t go into hospital despite all our efforts, because there were conspiracies going around that we were killing patients in hospital. So she went home, and I don’t know what happened to her.

The other big change in recent years has been social media and social networks that have obviously amplified and accelerated alternative theories and conspiracies. That’s been the tinder that’s allowed the wildfires to spread with these sorts of conspiracy theories. In Bradford, particularly among ethnic minority communities, there have been stronger links between them, allowing this to spread quicker, but also a more structural distrust.

Vaccination rates have fallen since the pandemic, and we’re seeing lower uptake of the meningitis and HPV vaccines in schools among South Asian families. Ultimately, this needs a bigger societal approach than individual clinicians putting needles in arms. We started a project called Born in Bradford in 2007 that’s following more than 13,000 families, including around 20,000 teenagers as they grow up. One of the biggest focuses for us is how they use social media and how it links to their mental health, so we’re asking them to donate their digital media to us so we can examine it in confidence. We’re hoping it could allow us to explore conspiracies and influences.

The challenge for the next generation of resident doctors and clinicians is: How do we encourage health literacy in young people about what’s right and what’s wrong without being paternalistic? We also need to get better at engaging with people as health advocates to counter some of the online narratives. The NHS website can’t compete with how engaging content on TikTok is.

The pediatrician who worries about the confusing public narrative on vaccines

Jessica Weisz

Pediatrician

Washington, DC

I’m an outpatient pediatrician, so I do a lot of preventative care, checkups, and sick visits, treating coughs and colds and those sorts of things. I’ve had specific training in how to support families in clinical decision-making related to vaccines. Every family wants what’s best for their child, so supporting them is part of my job.

I don’t see specific articulation of conspiracy theories, but I do think there are more questions about vaccines, in conversations I’ve not typically had to have before. I’ve found that parents and caregivers do ask general questions about the risks and benefits of vaccines. We just try to reiterate that vaccines have been studied, that they are intentionally scheduled to protect an immature immune system when it’s the most vulnerable, and that we want everyone to be safe, healthy, and strong. That’s how we can provide protection.

I feel that the narrative in the public space is unfairly confusing to families when over 90% of families still want their kids to be vaccinated. The families who are not as interested in that, or have questions—it typically takes multiple conversations to support that family in their decision-making. It’s very rarely one conversation.

I think what’s confusing is that distress is being sowed in headlines when most patients, families, and caregivers are motivated and want to be vaccinated. For example, some of the headlines around recent changes the CDC is making make it sound like it’s making a huge clinical change, when it’s actually not a huge change from what people are typically doing. In my standard clinical practice, we don’t give the combined MMRV vaccine to children under four years old, and that’s been standard practice in all of the places I’ve worked on the Eastern Seaboard. [Editor’s note: In early October, the CDC updated its recommendation that young children receive the varicella vaccine separately from the combined vaccine for measles, mumps, and rubella. Many practitioners, including Weisz, already offer the shots separately.]

If you look at public surveys, pediatricians are still the most trusted [among health-care providers], and I do live in a jurisdiction with pretty strong policy about school-based vaccination. I think that people are getting information from multiple sources, but at the end of the day, in terms of both the national rates and also what I see in clinical practice, we really are seeing most families wanting vaccines.