When Advertising Shifts To Prompts, What Should Advertisers Do? via @sejournal, @siliconvallaeys

When I last wrote about Google AI Mode, my focus was on the big differentiators: conversational prompts, memory-driven personalization, and the crucial pivot from keywords to context.

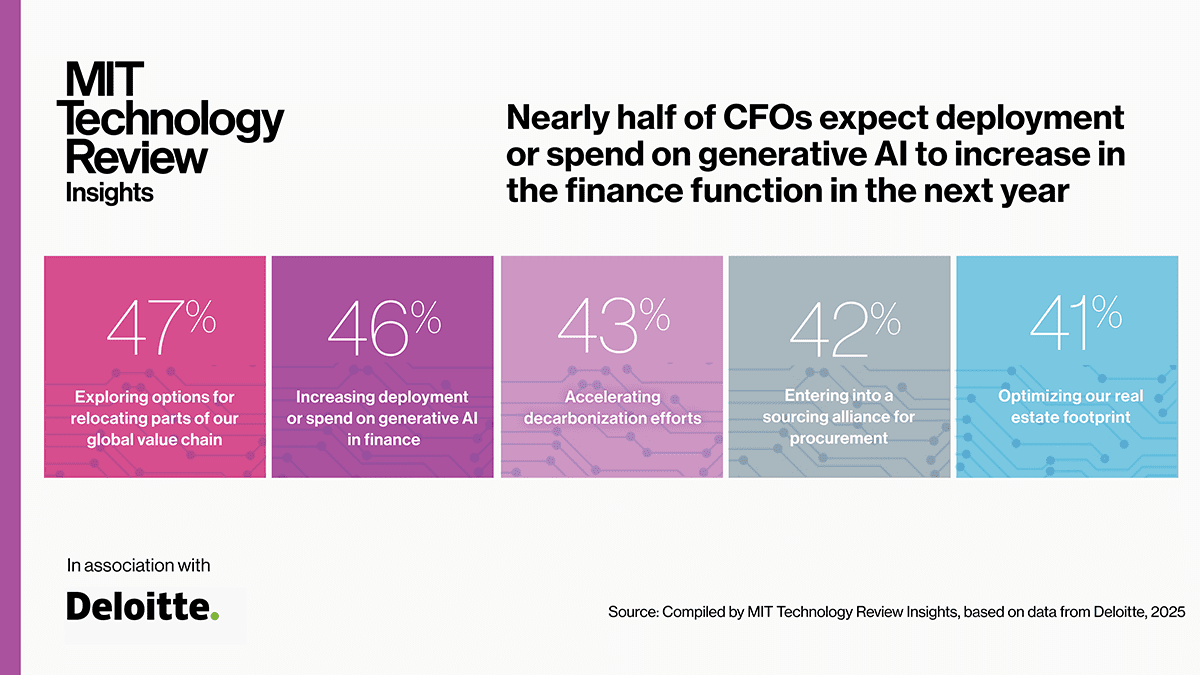

As we see with the Q2 ad platform financial results below, this shift is rapidly reshaping performance advertising. While AI Mode means Google has to rethink how it makes money, it forces us advertisers to rethink something even more fundamental: our entire strategy.

In the article about AI Mode, I laid out how prompts are different from keywords, why “synthetic keywords” are really just a temporary band-aid, and how fewer clicks might just challenge the age-old cost-per-click (CPC) revenue model.

This follow-up is about what these changes truly mean for us as advertisers, and why holding onto that keyword-era mindset could cost us our competitive edge.

The Great Rewiring Of Search

The biggest shift since we first got keyword-targeted online advertising is now in full swing. People are no longer searching with the relatively concise keywords we optimized around how Google used to weigh certain words in a query.

Large language models (LLMs) have pretty much removed the shackles from the search bar. Now, users can fire off prompts with hundreds of words, and add even more context.

Think about the 400,000-token context window of GPT-5, which works out to hundreds of thousands of words. Thankfully, most people don’t need that much space to explain what they want, but they are speaking in full sentences now, stutters and all.
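To put that context window in perspective, here is a rough back-of-the-envelope conversion. It assumes the common rule of thumb of about 0.75 English words per token, which is an approximation I'm supplying for illustration and not a figure from any platform documentation. The function name is hypothetical.

```python
# Rule of thumb (an assumption, not an official figure):
# one token is roughly 0.75 English words.
WORDS_PER_TOKEN = 0.75


def approx_words(tokens: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Estimate how many English words fit in a given number of tokens."""
    return round(tokens * words_per_token)


# A 400,000-token window holds on the order of 300,000 words.
print(approx_words(400_000))
```

Actual counts vary by language and tokenizer, so treat the result as an order-of-magnitude estimate only.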

An internal Google document on ads in AI Mode reports that early testers are asking queries two to three times as long as traditional Google searches.

And thanks to LLMs’ multi-modal capabilities, users are searching with images (Google reports 20 billion Lens searches per month), drawing sketches, and even sending video. They’re finding what they need in entirely new ways.

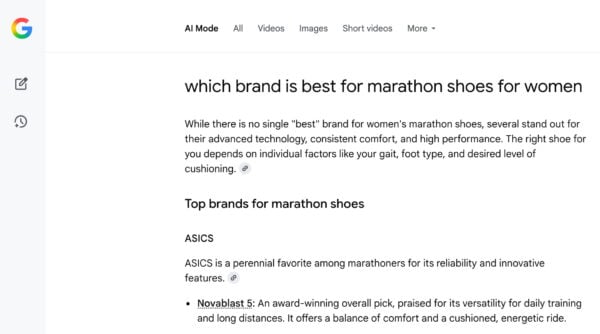

Increasingly, users aren’t just looking for a list of what might be relevant. They expect a guided answer from the AI, one that summarizes options based on their personal preferences. People are asking AI to help them decide, not just to find.

And that fundamental change in user behavior is now reshaping the very platforms where these searches happen, starting with Google.

The Impact On Google As The Main Ads Platform

All of this definitely poses a threat to Google’s primary revenue stream. But as I mentioned in a LinkedIn post, the traffic didn’t vanish; it just moved.

Users didn’t ditch Google; they simply stopped using it the way they did when keywords were king. Plus, we’re seeing new players emerge, and search itself has fragmented.

This creates a fresh challenge for us advertisers: How do we design campaigns that actually perform when intent originates in these wildly new ways?

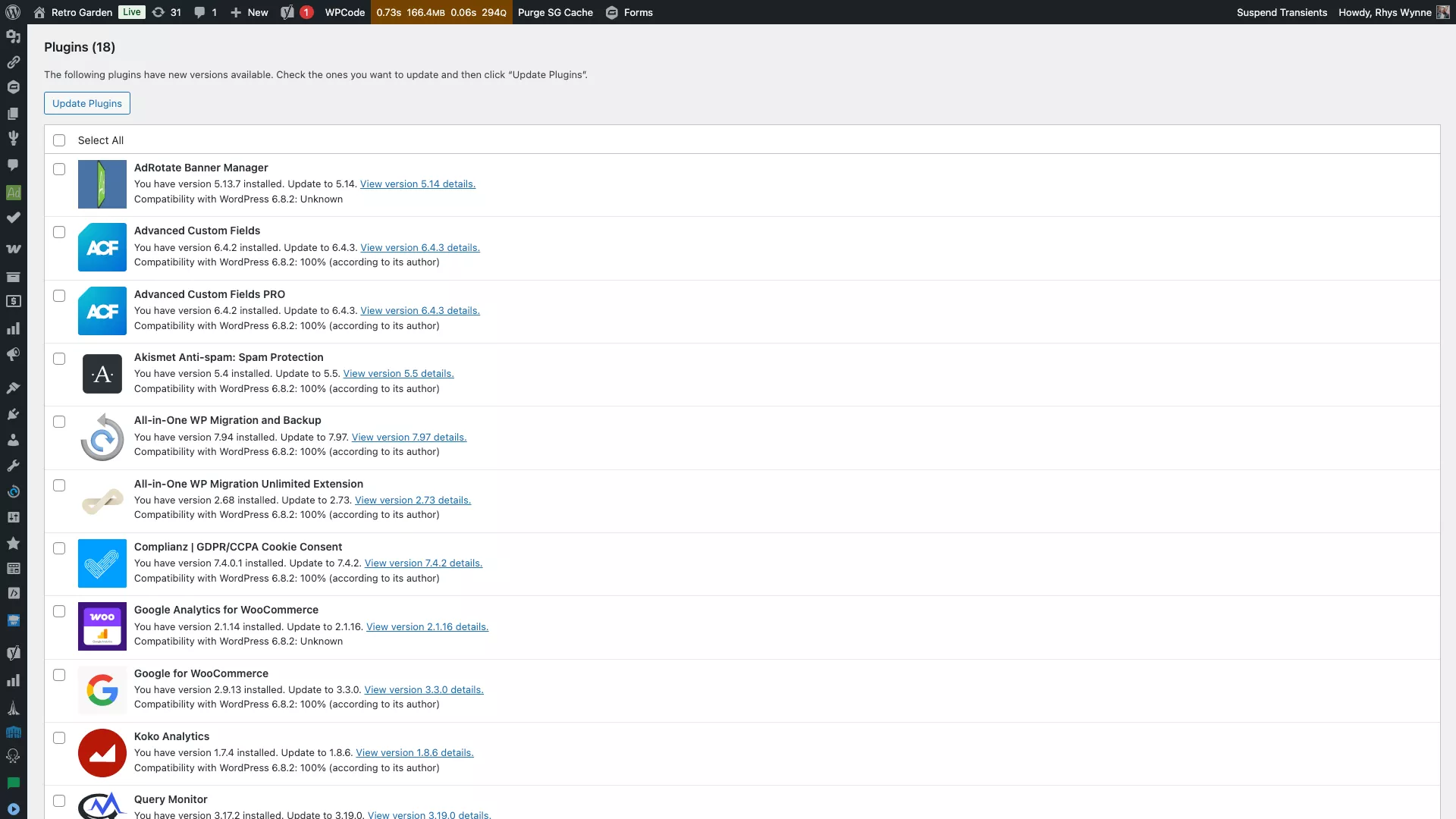

What Q2 Earnings Reports Told Us About AI In Search

The Q2 earnings calls were packed with GenAI details. Some of the most jaw-dropping figures involved the expected infrastructure investments.

Microsoft announced plans to spend an eye-watering $30 billion on capital expenditures in the coming quarter, and Alphabet estimated an $85 billion budget for the next year. I guess we’ll all be clicking a lot of ads to help pay for that. So, where will those ads come from when keywords are slowly being replaced by prompts?

Google shared some numbers to illustrate the scale of this shift. AI Overviews already reach 2 billion users a month. AI Mode itself is up to 100 million. The real question is, how is AI actually enabling better ads, and thus improving monetization?

Google reports:

- Over 90 Performance Max improvements in the past year drove 10%+ more conversions and value.

- Google’s AI Max for Search campaigns show a 27% lift in conversions or value over exact or phrase matches.

Microsoft Ads tells a similar story. In Q2 2025, it reported:

- $13 billion in AI-related ad revenue.

- Copilot-powered ads drove 2.3 times more conversions than traditional formats.

- Users were 53% more likely to convert within 30 minutes.

So, what’s an advertiser to do with all this?

What Advertisers Should Do

As I shared recently in a conversation with Kasim Aslam, these ecosystems are becoming intent originators. That old “search bar” is now a conversation, a screenshot, or even a voice command.

If your campaigns are still relying on waiting for someone to type a query, you’re showing up to the party late. Smart advertisers don’t just respond to intent; they predict it and position for it.

But how? Well, take a look at the Google products that are driving results for advertisers: They’re the newest AI-first offerings. Performance Max, for example, is keywordless advertising driven by feeds, creative, and audiences.

Another vital step for adapting to this shift is AI Max, which I’d call the most unrestrictive form of keyword advertising.

It blends elements of Dynamic Search Ads (DSAs), automatically created assets, and super broad keywords. This allows your ads to show up no matter how people search, even if they’re using those sprawling, multi-part prompts.

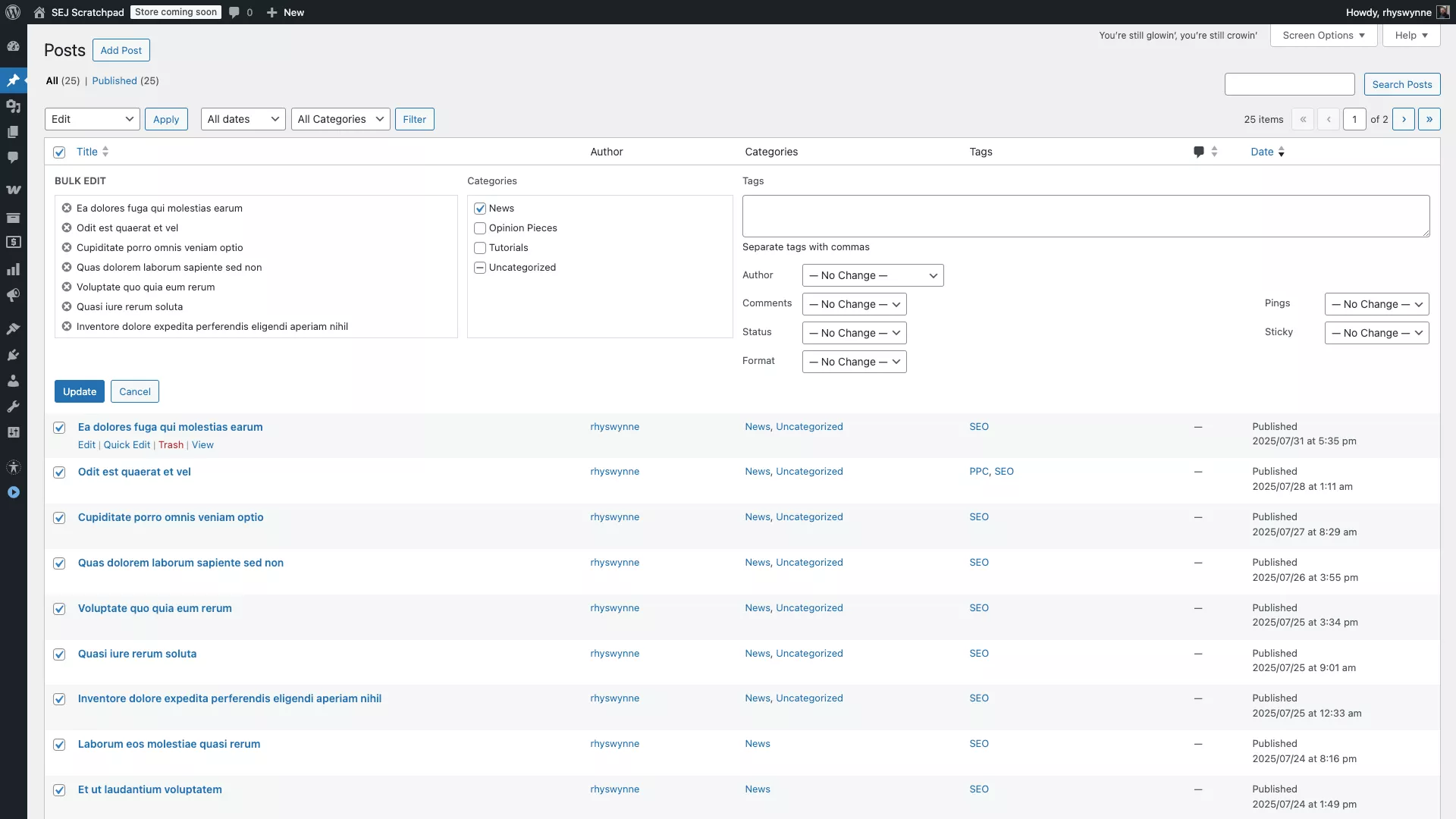

Sure, advertisers can still use today’s best practices, like reviewing search term reports and automatically created assets, then adding negatives or exclusions for the irrelevant ones. But let’s be honest, that’s a short-term, old-model approach.
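For anyone still doing that review by hand, the search term check can be sketched in a few lines. This is a minimal illustration, assuming you have exported search term rows with cost and conversion columns; the `SearchTermRow` class, the `negative_candidates` function, the sample rows, and the spend threshold are all hypothetical and not part of any Google Ads API.

```python
from dataclasses import dataclass


@dataclass
class SearchTermRow:
    """One row of an exported search term report (hypothetical shape)."""
    term: str
    cost: float
    clicks: int
    conversions: float


def negative_candidates(rows, min_cost=20.0):
    """Return terms that spent at least min_cost with zero conversions,
    ordered from most to least wasted spend."""
    wasted = [r for r in rows if r.conversions == 0 and r.cost >= min_cost]
    return [r.term for r in sorted(wasted, key=lambda r: r.cost, reverse=True)]


# Made-up sample data for illustration only.
report = [
    SearchTermRow("free widgets", 42.0, 30, 0),
    SearchTermRow("buy widgets online", 80.0, 25, 4),
    SearchTermRow("widget jobs", 25.0, 12, 0),
    SearchTermRow("widget repair near me", 5.0, 3, 0),
]

for term in negative_candidates(report):
    print(term)
```

The output is only a list of candidates to review. A human still decides which ones actually become negatives, since a term with no conversions yet may simply lack data.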

As AI gains memory and contextual understanding, ads will be shown based on scenarios and user intent that isn’t even explicitly expressed.

Relying solely on negatives won’t cut it. The future demands that advertisers focus on getting involved earlier in the decision-making process and making sure the AI has all the right information to advocate for their brand.

Keywords Aren’t The Lever They Once Were

In the AI Mode era, prompts aren’t just simple queries; they’re rich, multi-turn conversations packed with context.

As I outlined in my last article, these interactions can pull in past sessions, images, and deeply personal preferences. No keyword list in the world can capture that level of nuance.

Tinuiti’s Q2 benchmark report shows Performance Max accounts for 59% of Shopping ad spend and delivers 18% higher click-through rates. This is a clear illustration that the platform is taking control of targeting.

And when structured feeds plus dynamic creative drive a 27% lift in conversions according to Google data, it’s because the creative itself is doing the targeting.

These AI-driven journeys happen out of sight, which is the biggest threat to advertisers whose strategies aren’t evolving.

The Real Danger: Invisible Decisions

One of my key takeaways from the AI Mode discussion was the risk of “zero-click” journeys. If the assistant delivers what a user needs inside the conversation, your brand might never get a visit.

According to Adobe Analytics, AI-powered referrals to U.S. retail sites grew 1,200% between July 2024 and February 2025. Traffic from these sources now doubles every 60 days.

These users:

- Visit 12% more pages per session.

- Bounce 23% less often.

- Spend 45% more time browsing (especially in travel and finance verticals).

Even more importantly, 53% of users say they plan to rely on AI tools for shopping going forward.

In short, users are starting their journeys before they reach a traditional search engine, and they’re more engaged when they do. And winning in this environment means rethinking our levers for influence.
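As a rough sanity check, the Adobe growth figure and the "doubles every 60 days" claim cited above can be compared with a little arithmetic. This sketch assumes steady exponential growth, reads "grew 1,200%" as a 13x multiple, and picks the first day of each month as the endpoints, none of which the source spells out. The function name is hypothetical.

```python
import math
from datetime import date


def doubling_time_days(growth_pct: float, start: date, end: date) -> float:
    """Implied doubling time, assuming constant exponential growth."""
    multiple = 1 + growth_pct / 100      # 1,200% growth -> 13x the starting level
    elapsed_days = (end - start).days    # length of the measurement window
    return elapsed_days * math.log(2) / math.log(multiple)


# Assumed endpoints: July 1, 2024 to February 1, 2025 (215 days).
days = doubling_time_days(1200, date(2024, 7, 1), date(2025, 2, 1))
print(f"Implied doubling time: about {days:.0f} days")
```

Under those assumptions the implied doubling time comes out just under 60 days, so the two statistics are consistent with each other.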

Why This Is An Opportunity, Not A Death Sentence

As I argued before, platforms aren’t killing keyword advertising; they’re evolving it. The advertisers winning now are leaning into the new levers:

Signals Over Keywords

- Use customer relationship management (CRM) data to build high-intent audience lists.

- Layer first-party data into automated campaign types through conversion value adjustments, audiences, or budget settings.

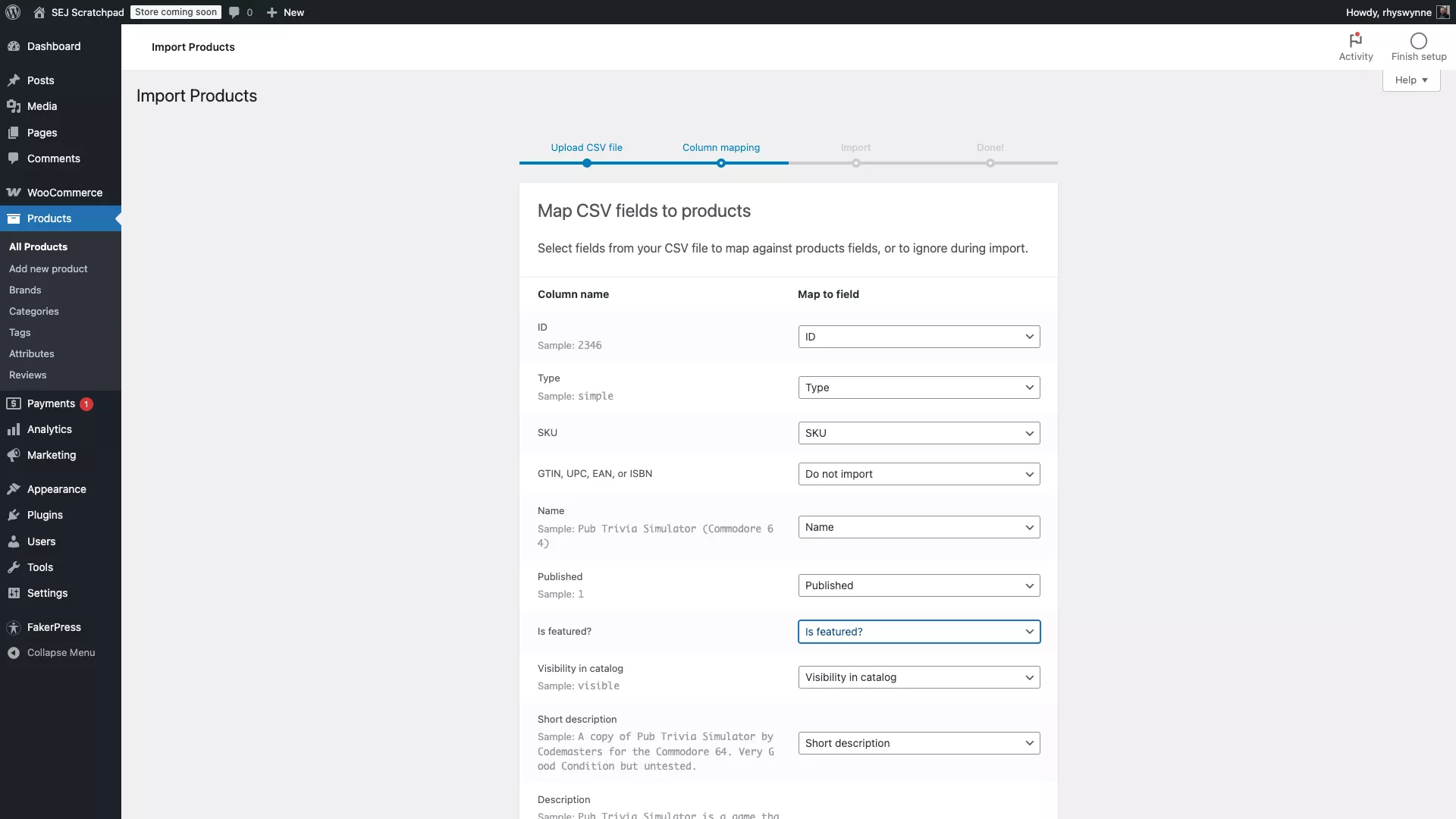

- Optimize your product feed with rich attributes so AI has more to work with and knows exactly which products to recommend.

- Ensure feed hygiene so LLMs have the most current data about your offers.

- Enhance your website with more data for the LLMs to work with, like data tables and schema markup.
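To make the schema point concrete, here is a minimal sketch that builds schema.org `Product` markup as JSON-LD. The property names come from the public schema.org vocabulary, but the helper function, the product, its SKU, price, and URL are all made up for illustration. Which attributes any given AI system actually reads is not something the platforms have confirmed.

```python
import json


def product_jsonld(name, description, sku, price, currency, url, in_stock=True):
    """Build a schema.org Product object with a single Offer (illustrative helper)."""
    availability = "InStock" if in_stock else "OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
            "url": url,
        },
    }


# Hypothetical product data.
markup = product_jsonld(
    name="Trail Runner 2 Waterproof Hiking Shoe",
    description="Lightweight waterproof hiking shoe with a wide toe box.",
    sku="TR2-WP-42",
    price=129,
    currency="USD",
    url="https://www.example.com/products/trail-runner-2",
)

# Embed the result in the page inside a JSON-LD script tag.
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

Generating the markup from the same source as your product feed helps keep the price and availability on the page in sync with what the feed says.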

Creative As Targeting

- Build modular ad assets that AI can assemble dynamically: multiple headlines, descriptions, and images tailored to different audiences.

- Test variations that align with different stages of the buying journey so you’re likely to show in more contextual scenarios across the entire consumer journey, not only at the end.

Measurement Beyond Clicks

- Regularly evaluate the new metrics in Google Ads for AI Max and Performance Max. Changes are rolling out frequently, enabling smarter optimizations.

- Track feed impression share by enabling the relevant extra columns in Google Ads.

- Monitor how often your products are surfaced in AI-driven recommendations, as with the recently updated AI Max report for “search terms and landing pages from AI Max.”

- Focus your measurement on how well users are able to complete tasks, not just clicks.

The future isn’t about bidding on a query. It’s about supplying the AI with the best “raw ingredients” so you win the recommendation at the exact moment of decision.

That mindset shift is the real competitive advantage in the AI-first era.

The Bottom Line

My previous AI Mode post was about the mechanics of the shift. This one is about the mindset change required to survive it.

Keywords aren’t vanishing, but their role is shrinking fast. In an AI-driven, context-first search landscape, the brands that thrive will stop obsessing over what the user types and start shaping what the AI recommends.

If you can win that moment, you won’t just get found. You’ll get chosen.

Featured Image: Smile Studio AP/Shutterstock