A few weeks ago, I fell down a rabbit hole of cottagecore TikTok and Japanese jazz-funk from the ’70s. I didn’t search for it. I didn’t ask for it. But, somehow, my For You Page and Spotify knew. They knew before I did.

That’s the power of what I call B2Me, from broad strokes to a segment of one. And it’s changing everything.

As marketers, we’re moving from static personas to living identity graphs. As audiences, we’ve gone from craving options to craving intuition. We want brands that just get us.

Picture ads that shift based on your inferred mood, product recommendations that feel like they were plucked straight from your subconscious, content around what you were only just thinking about.

We’re marketing to real people in real time. And the brands that get it right get rewarded with clicks, loyalty, and trust.

Demographics Were Always Broken (AI Just Made It Obvious)

For decades, we marketers clung to personas. Those convenient, yet ultimately flawed, cardboard cutouts like “Marketing Mike,” who supposedly loved artisanal everything, skateboarded to work, and breakfasted on avocado toast.

Meanwhile, the real Mike is years past his skateboarding phase, out buying a motorcycle, and loves gas station hot dogs.

“Women aged 25-34 with college degrees who live in New York and work in marketing” tells you nothing about what Natasha actually wants, what she’s struggling with, or what would make her say yes.

For too long, we’ve marketed to people who look like our customers instead of those who act like them.

Even today, many companies claiming “personalized marketing” are still relying on a demographic infrastructure from 2019, if not earlier. It’s a bit like driving forward while looking in the rearview mirror.

Demographics were always stereotypes in a data suit. AI strips that away and sees the person underneath.

That’s the essence of B2Me marketing: connecting with individuals based on observed behavior, not assumed demographics.

Decisions happen in fleeting, emotional moments. AI recognizes intent in real time, often before we do.

When was the last time an algorithm recommended something you didn’t know you wanted, but it was exactly what you wanted? Creepy? Maybe. Useful? Yes.

That’s the emotional layer AI is tapping into. It’s going beyond tracking behavior to interpreting intent. Frustration. Curiosity. Readiness. These are signals. And our job as marketers is to listen to what they’re telling us, often without saying a word.

What True B2Me Looks Like

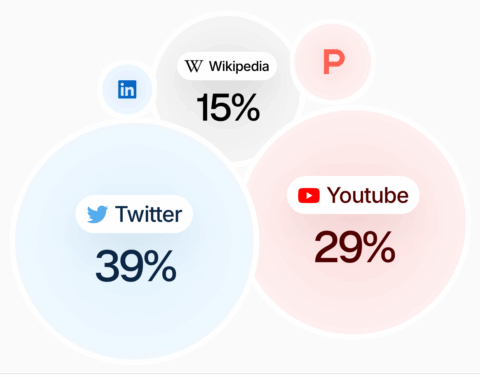

Coca-Cola tested this in Saudi Arabia. Instead of targeting “Millennials,” its AI agent analyzed millions of social posts across platforms like TikTok and LinkedIn, identifying people expressing cravings for fast food.

It then delivered 828,000 personalized coupon ads for discounted Coke products – 20,000 of which were clicked on – all without human intervention.

Overall, it executed roughly 8 million autonomous actions on behalf of its marketing team. That’s behavioral precision at unprecedented scale.
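For a rough sense of scale, the click-through rate implied by those figures can be worked out directly. This is a minimal sketch that assumes each delivered coupon ad counts as one impression; the function and variable names are illustrative and not anything from Coca-Cola’s own tooling.

```python
def click_through_rate(clicks: int, impressions: int) -> float:
    """Return clicks divided by impressions as a fraction between 0 and 1."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return clicks / impressions


# Figures quoted in the campaign description above.
coupon_ads_delivered = 828_000
coupon_ads_clicked = 20_000

ctr = click_through_rate(coupon_ads_clicked, coupon_ads_delivered)
print(f"{ctr:.1%}")  # prints 2.4%
```

On those numbers, roughly one in 41 coupon ads earned a click.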

Meanwhile, a project management software company I observed found that its highest-converting customers weren’t the enterprise IT directors its demographic models targeted.

They were mid-level operations managers, the ones actually wrestling with the workflows. They weren’t filling out forms. But they were driving the deals. The invisible layer of influence was profound.

B2Me strategies create compounding advantages. Each interaction refines AI’s understanding of individual patterns, leading to more precise future targeting. This can translate to:

- Faster, more accurate intent recognition.

- Superior message-market fit.

- Measurably higher conversion rates.

- Enhanced customer lifetime value.

Why Most “B2Me” Efforts Fail

Because they’re not really B2Me. They’re just demographic micro-segmentation with fancier plumbing.

I watched a SaaS company spend six months building an “AI-powered individual targeting system.” Its big breakthrough? Sending different subject lines to “Marketing Managers” versus “Marketing Directors.”

That’s not B2Me. That’s lipstick on a persona.

True B2Me watches behavior. It asks: What are they doing? What are they feeling? What are they trying to solve? And it zeroes in on the behavioral patterns that predict buying intent.

B2Me thrives on living identity graphs that continuously evolve based on what individuals consume, click, purchase, and how they navigate content.

Salesforce, through its focus on comprehensive customer data within frameworks like Customer 360, enables businesses to leverage behavioral signals, such as rapid tool adoption or shifts in company structure, to identify opportunities for digital transformation and improve targeting effectiveness.

These “digital transformation stress signals” convert at significantly higher rates than demographic targeting, regardless of company size.

3 Ways To Implement B2Me

1. Target Behavior, Not Job Titles

Traditional: “Target CISOs at Fortune 500 companies.”

B2Me: “Target individuals researching security compliance solutions.”

Job titles aren’t always accurate predictors of buying behavior. Your best prospects might not match your ideal customer profile (ICP) on paper, but they’re showing you who they are through their actions.
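To make the contrast concrete, here is a minimal sketch of the two targeting approaches side by side. The `Contact` record, its fields, and the sample data are all hypothetical; a real system would pull research topics from whatever intent or engagement data you actually have.

```python
from dataclasses import dataclass, field


@dataclass
class Contact:
    name: str
    job_title: str
    # Topics this person has recently researched (hypothetical intent data).
    researched_topics: list[str] = field(default_factory=list)


def audience_by_title(contacts: list[Contact], title: str) -> list[Contact]:
    """Traditional targeting: match on job title only."""
    return [c for c in contacts if c.job_title == title]


def audience_by_behavior(contacts: list[Contact], topic: str) -> list[Contact]:
    """B2Me-style targeting: match on what people are actually researching."""
    return [c for c in contacts if topic in c.researched_topics]


contacts = [
    Contact("Avery", "CISO", ["cloud cost optimization"]),
    Contact("Blake", "IT Operations Manager", ["security compliance", "audit prep"]),
    Contact("Casey", "CISO", ["security compliance"]),
]

title_audience = audience_by_title(contacts, "CISO")
behavior_audience = audience_by_behavior(contacts, "security compliance")
```

In this made-up data, the title filter includes Avery, who shows no interest in compliance, and misses Blake, who is actively researching it. The behavior filter does the opposite.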

2. Time Messages To Emotional States

AI’s true power lies in its ability to detect human intent and emotional states.

It can sense things like frustration (rapid scrolling, quick exits), curiosity (deep engagement, repeated visits), and buying readiness (pricing page visits, competitor research). This goes beyond what someone does to how they do it.

HubSpot’s platform and integrations support outreach timing based on behavioral frustration signals such as prospects engaging with content about data migration headaches or sales team bottlenecks.
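The signal groups described above can be sketched as a simple rule-based classifier. Everything here is an assumption for illustration: the field names, the thresholds, and the priority order are hypothetical, and a production system would more likely learn them from data than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class SessionSignals:
    scroll_px_per_sec: float      # average scroll speed during the session
    session_seconds: float        # time on site before exit
    visits_last_30_days: int      # repeat visits
    pricing_page_views: int       # views of pricing pages
    competitor_page_views: int    # views of comparison or competitor content


def infer_state(s: SessionSignals) -> str:
    """Map raw session signals to a coarse inferred state.

    Thresholds are illustrative guesses, not benchmarks.
    """
    # Buying readiness: pricing page visits, especially with competitor research.
    if s.pricing_page_views >= 2 or (
        s.pricing_page_views >= 1 and s.competitor_page_views >= 1
    ):
        return "buying_readiness"
    # Frustration: rapid scrolling followed by a quick exit.
    if s.scroll_px_per_sec > 3000 and s.session_seconds < 15:
        return "frustration"
    # Curiosity: deep engagement plus repeated visits.
    if s.session_seconds > 180 and s.visits_last_30_days >= 3:
        return "curiosity"
    return "unknown"


ready = infer_state(SessionSignals(400, 240, 4, 1, 1))
frustrated = infer_state(SessionSignals(5000, 8, 1, 0, 0))
curious = infer_state(SessionSignals(300, 420, 5, 0, 0))
```

Buying readiness is checked first so that a prospect who is both curious and comparing prices gets treated as ready. That ordering is a design choice, not a rule.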

3. Predict Needs Before Searches

Zoom capitalized on early remote work signals to scale rapidly during the pandemic.

It identified “remote work scaling signals,” i.e., companies actively researching collaboration tools, posting jobs for distributed teams, and consuming work-from-home content.

This foresight allowed it to engage prospects and capture demand before competitors even fully recognized the shift.

Getting Started

1. Map Real Customer Behavior

Begin by auditing your current targeting. Most companies, from my observation, are still operating at 80% demographics, 20% behavior. It’s time to work on inverting that ratio.

Document what your actual best customers do before they buy:

- What content truly resonates?

- What questions consistently emerge during sales conversations?

- What research triggers precede their engagement?

- What are their preferred engagement channels?
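One way to start answering those questions is to count which pre-purchase actions show up most often among your best customers. The sketch below assumes you can export events as simple (customer, action) pairs; the event names and customer IDs are invented for illustration.

```python
from collections import Counter

# Hypothetical export: (customer_id, action) pairs recorded before purchase.
events = [
    ("c1", "read_migration_guide"),
    ("c1", "visited_pricing"),
    ("c1", "visited_pricing"),
    ("c2", "read_migration_guide"),
    ("c2", "attended_webinar"),
    ("c3", "read_migration_guide"),
    ("c3", "visited_pricing"),
]

# Hypothetical set of customers you consider your best.
best_customers = {"c1", "c3"}


def top_pre_purchase_actions(events, customers, n=3):
    """Count how many of the given customers took each action before buying.

    Each action is counted at most once per customer, so one very active
    customer cannot dominate the result.
    """
    unique_pairs = {(cid, action) for cid, action in events if cid in customers}
    counts = Counter(action for _, action in unique_pairs)
    return counts.most_common(n)


top_actions = top_pre_purchase_actions(events, best_customers)
```

Actions that nearly every best customer took, and that ordinary prospects rarely take, are good candidates for the behavioral signals you build audiences around.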

2. Build Behavioral Audiences

Build behavioral audiences using the tools you already have in your search and social platforms.

These platforms are already prioritizing behavioral signals over static demographics, so lean into their capabilities.

Brand Still Wins

AI can distill patterns, but it can’t feel. It segments behavior, but it doesn’t grasp human motivation. It predicts clicks, but it can’t forge connection.

This is where brand is essential. It can serve as a definitive advantage in AI-mediated decisions.

When someone asks an AI assistant for customer relationship management (CRM) recommendations, which brands show up? And more importantly, how are they described?

You’re not just competing for human memory anymore. You’re competing for AI memory. And your brand is the shortcut.

When an AI recommends brands, it’s synthesizing reputation and consistency across thousands of complex touchpoints.

We can’t talk about brand without talking about trust.

We’ve always said “trust matters.” Now, AI exposes what trust really is: the gap between what you can do and what you should do.

Remember that Coca-Cola campaign? Millions of social posts analyzed, 828,000 personalized coupons delivered autonomously. Impressive results … and also a few debates about “surveillance marketing.”

AI exposes where trust was always fragile. Take surge pricing. AI can adjust rates based on your browser history, your device, even your cursor hesitation.

But when customers notice? “Smart” becomes “sneaky.” Trust evaporates. Remember, trust isn’t a feature you add later. It’s the foundation.

The Right People At The Right Time With The Right Message

B2Me is fundamentally about understanding your customer better. AI can help us see patterns. But only we can make meaning. Only we can build trust. Only we can decide what matters.

B2Me is empathy at scale, helping you see people, not personas. It empowers you to show up in the moments that matter, even the ones we’ll never see.

B2Me bridges the gap between what’s technically possible and what’s strategically smart.

You don’t need to have it all figured out tomorrow. You just need to start. And start by remembering that the most powerful force in marketing is still a thinking human.

Featured Image: Paulo Bobita/Search Engine Journal