How To Find Competitors’ Keywords: Tips & Tools

This post was sponsored by SE Ranking. The opinions expressed in this article are the sponsor’s own.

Wondering why your competitors rank higher than you?

The secret to your competitors’ SEO success might be as simple as targeting the appropriate keywords.

Since these keywords are successful for your competitors, there’s a good chance they could be valuable for you as well.

In this article, we’ll explore the most effective yet simple ways to find competitors’ keywords so that you can guide your own SEO strategy and potentially outperform your competitors in SERPs.

Benefits Of Competitor Keyword Analysis

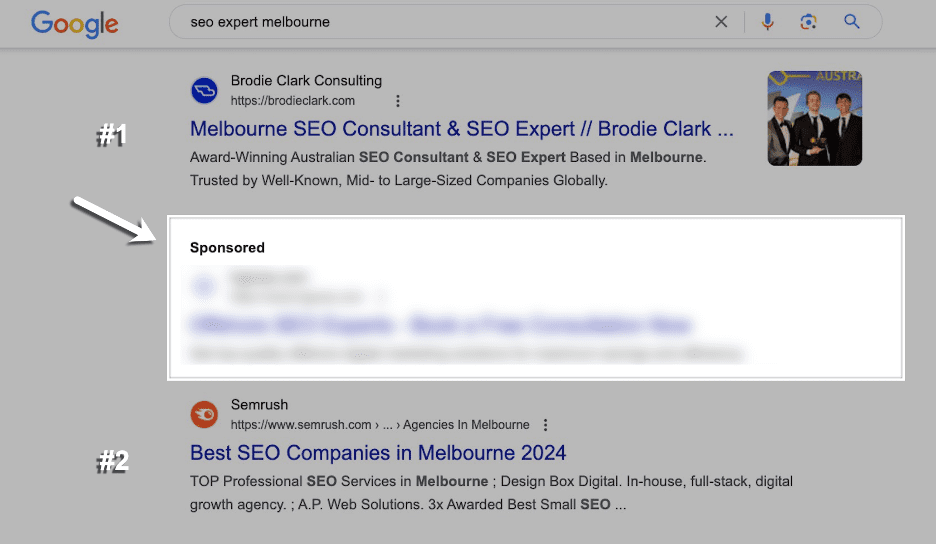

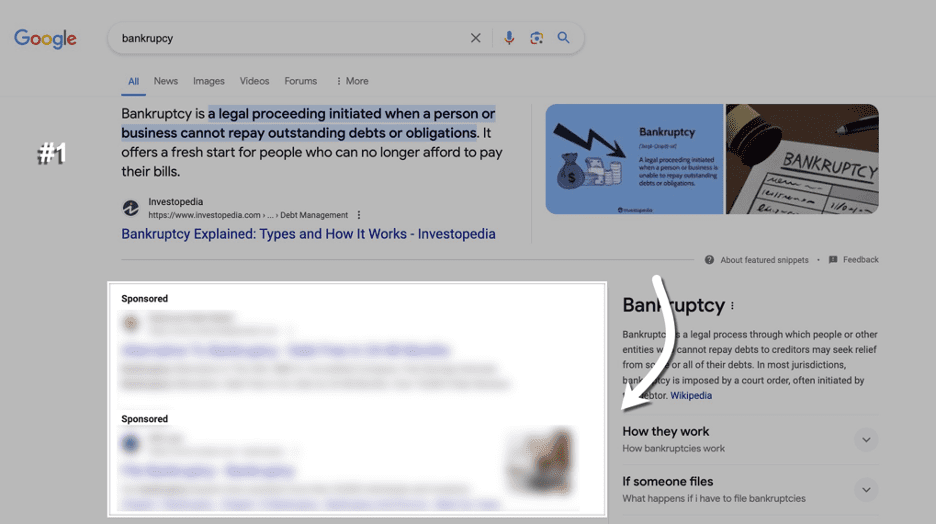

Competitor keywords are the search terms your competitors target within their content to rank high in SERPs, either organically or through paid ads.

Collecting search terms that your competitors rely on can help you:

1. Identify & Close Keyword Gaps.

The list of high-ranking keywords driving traffic to your competitors may include valuable search terms you’re currently missing out on.

To close these keyword gaps, you can either optimize your existing content with these keywords or use them as inspiration for creating new content with high traffic potential.

2. Adapt To Market Trends & Customer Needs.

You may notice a shift in the keywords your competitors optimize content for. This could be a sign that market trends or customer expectations are changing.

Keep track of these keywords to jump on emerging trends and align your content strategy accordingly.

3. Enhance Visibility & Rankings.

Analyzing your competitors’ high-ranking keywords and pages can help you identify their winning patterns (e.g., content format, user intent focus, update frequency, etc.).

Study what works for your rivals (and why) to learn how to adapt these tactics to your website and achieve higher SERP positions.
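To make the trend-tracking idea from point 2 concrete, here is a minimal sketch that compares two keyword snapshots for one competitor and reports which terms appeared and which disappeared. The snapshot lists and the `keyword_shift` helper are hypothetical and only for illustration; in practice the lists would come from whatever export your SEO tool provides.

```python
def keyword_shift(previous, current):
    """Compare two keyword snapshots taken for the same competitor."""
    # Normalize case and whitespace so "SEO Audit" and "seo audit" match.
    prev = {k.strip().lower() for k in previous}
    curr = {k.strip().lower() for k in current}
    return {
        "new": sorted(curr - prev),      # terms the competitor started targeting
        "dropped": sorted(prev - curr),  # terms that no longer appear
    }


# Hypothetical snapshots, e.g. two exports taken a few months apart.
january = ["seo audit", "rank tracker", "backlink checker"]
april = ["SEO audit", "rank tracker", "ai content writer", "ai seo tools"]

shift = keyword_shift(january, april)
print("New:", shift["new"])
print("Dropped:", shift["dropped"])
```

A cluster of related new terms (here, the made-up "ai ..." keywords) is the kind of signal that may point to a shift in market trends or customer expectations.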

How To Identify Your Competitors’ Keywords

There are many ways to find keywords used by competitors within their content. Let’s weigh the pros and cons of the most popular options.

Use SE Ranking

SE Ranking is a complete toolkit that delivers unique data insights. These insights help SEO pros build and maintain successful SEO campaigns.

Here’s the list of pros that the platform offers for agency and in-house SEO professionals:

- Huge databases. SE Ranking has one of the web’s largest keyword databases. It features over 5 billion keywords across 188 regions. Also, the number of keywords in their database is constantly growing, with a 30% increase in 2024 compared to the previous year.

- Reliable data. SE Ranking collects keyword data, analyzes it, and computes core SEO metrics using its proprietary algorithm. The platform also relies on AI-powered traffic estimations that match GSC data by up to 100%.

Thanks to SE Ranking’s recent major data quality update, the platform boasts even fresher and more accurate information on backlinks and referring domains (both new and lost).

As a result, by considering the website’s backlink profile, authority, and SERP competitiveness, SE Ranking now makes highly accurate calculations of keyword difficulty. This makes it easy to see how likely your own website or page is to rank at the top of the SERPs for a particular query.

- Broad feature set. Beyond conducting competitive (& keyword) research, you can also use this tool to track keyword rankings, perform website audits, handle all aspects of on-page optimization, manage local SEO campaigns, optimize your content for search, and much more.

- Great value for money. The tool offers premium features with generous data limits at a fair price. This eliminates the need to choose between functionality and affordability.

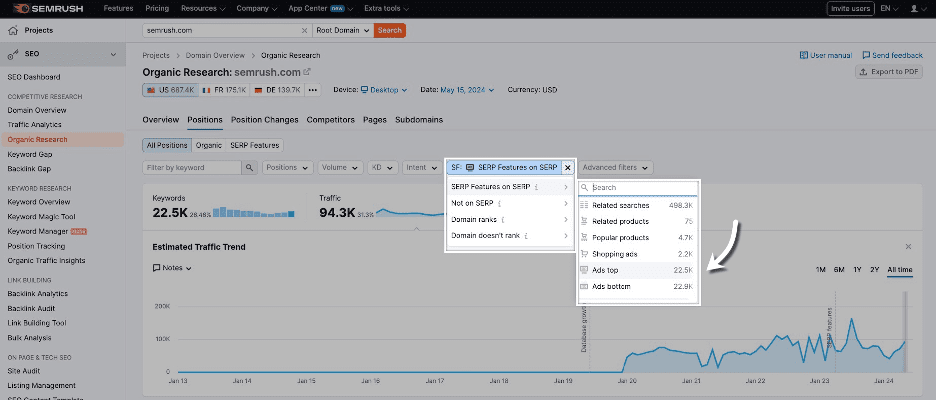

Let’s now review how to use SE Ranking to discover the keywords your competitors are targeting for both organic search and paid advertising.

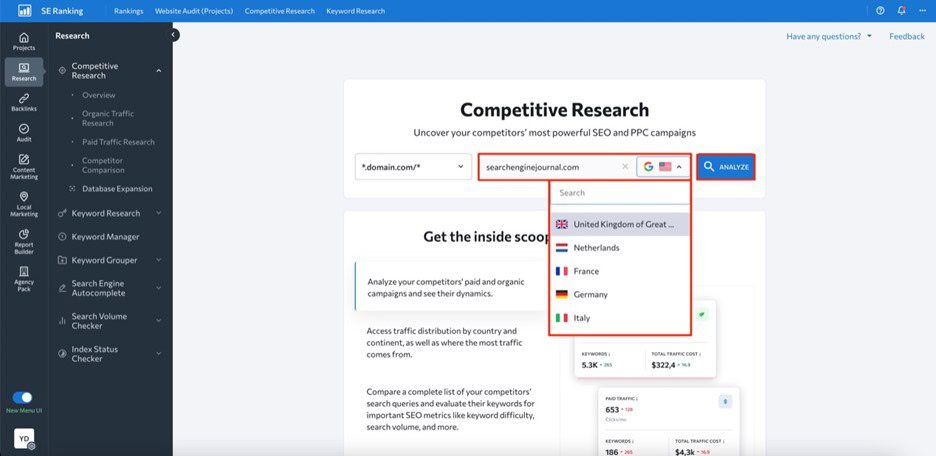

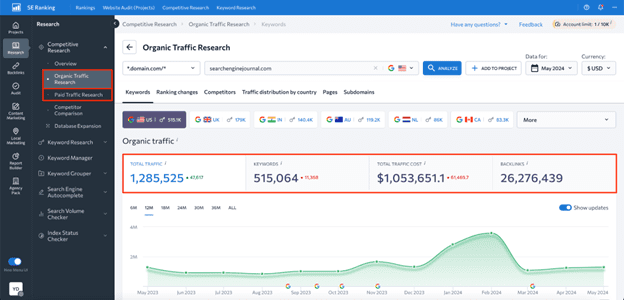

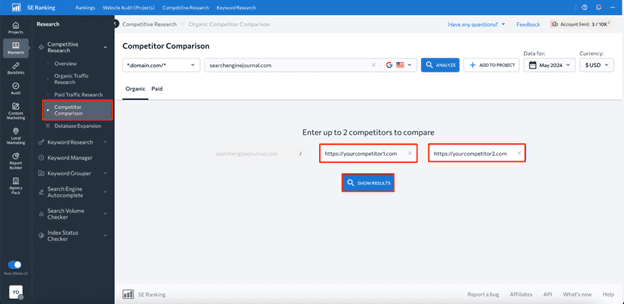

First, open the Competitive Research Tool and input your competitor’s domain name into the search bar. Select a region and click Analyze to initiate analysis of this website.

Image created by SE Ranking, May 2024

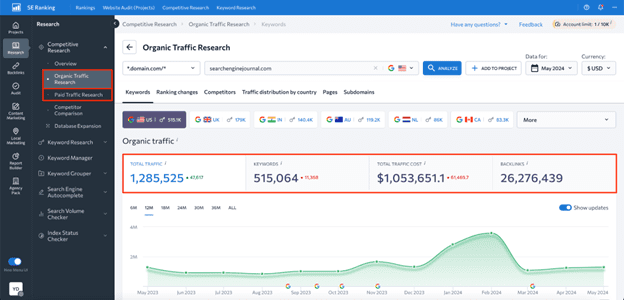

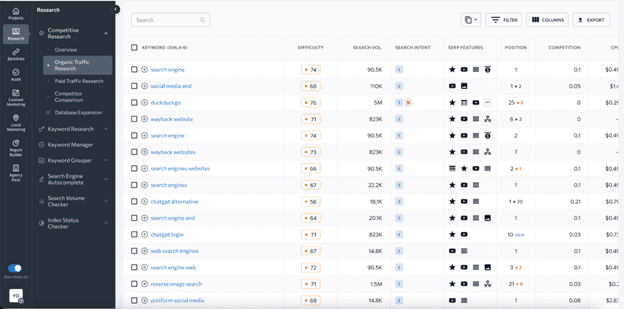

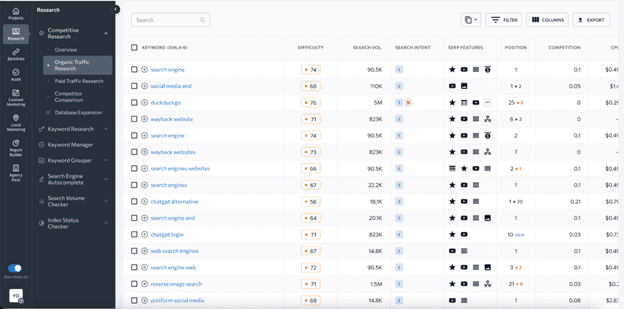

Image created by SE Ranking, May 2024

Depending on your goal, go to either the Organic Traffic Research or the Paid Traffic Research tab in the left-hand navigation menu.

Here, you’ll be able to see data on estimated organic clicks, total number of keywords, traffic cost, and backlinks.

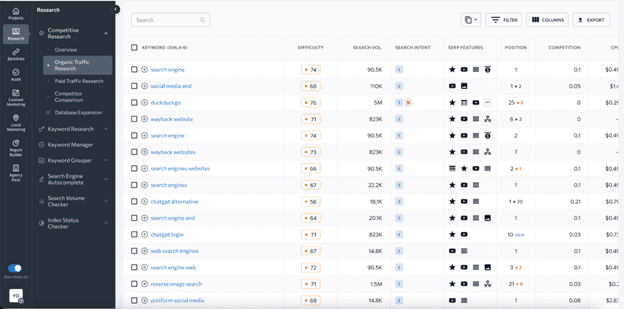

Scroll down the page to see a table with all the keywords the website ranks for, along with data on search volume, keyword difficulty, user intent, SERP features triggered by each keyword, ranking position, the URLs ranking for the analyzed keyword, and more.
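Once a keyword table like this has been exported, a short script can help narrow it down. The sketch below assumes hypothetical field names (`search_volume`, `difficulty`, `position`) and made-up numbers; they do not reflect SE Ranking’s actual export format, and the thresholds are arbitrary starting points.

```python
from dataclasses import dataclass


@dataclass
class KeywordRow:
    keyword: str
    search_volume: int  # estimated monthly searches
    difficulty: int     # 0-100, higher means harder to rank for
    position: int       # competitor's current ranking position


def shortlist(rows, min_volume=500, max_difficulty=40):
    """Keep keywords with enough demand that are still realistic to rank for."""
    picked = [
        r for r in rows
        if r.search_volume >= min_volume and r.difficulty <= max_difficulty
    ]
    # Highest-volume opportunities first.
    return sorted(picked, key=lambda r: r.search_volume, reverse=True)


# Hypothetical rows standing in for an exported competitor keyword table.
rows = [
    KeywordRow("keyword rank tracker", 2400, 35, 3),
    KeywordRow("seo", 110000, 92, 8),
    KeywordRow("free backlink checker", 1900, 38, 5),
    KeywordRow("serp checker tool", 320, 22, 2),
]

for row in shortlist(rows):
    print(row.keyword, row.search_volume, row.difficulty)
```

Adjust `min_volume` and `max_difficulty` to your niche; a newer site would probably want a lower difficulty ceiling.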

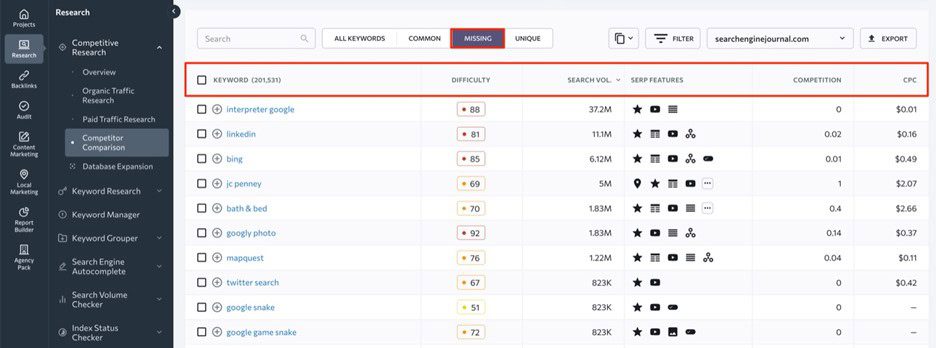

What’s more, the tool allows you to find keywords your competitors rank for but you don’t.

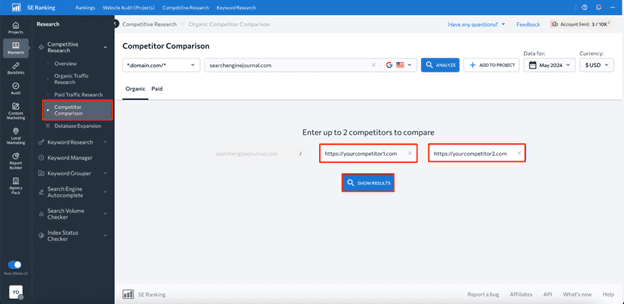

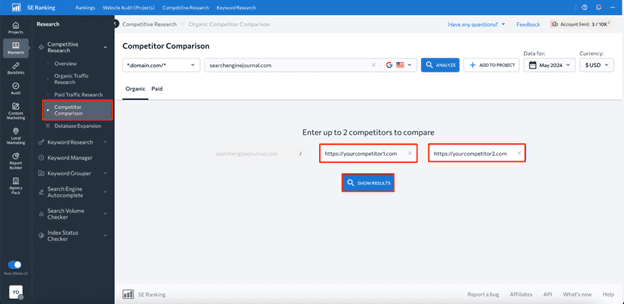

To do this, head to the Competitor Comparison tab and add up to two websites for comparison.

Within the Missing tab, you’ll be able to see existing keyword gaps.
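Conceptually, a keyword gap is a set difference: terms your competitors rank for minus the terms you rank for. The sketch below illustrates that idea with plain Python sets. It is not how SE Ranking computes the Missing tab, and the domains and keyword lists are invented for the example.

```python
def missing_keywords(own, competitors):
    """Map each keyword you lack to the competitors that rank for it."""
    own_set = {k.strip().lower() for k in own}
    gaps = {}
    for name, keywords in competitors.items():
        for kw in keywords:
            kw = kw.strip().lower()
            if kw not in own_set:
                gaps.setdefault(kw, []).append(name)
    return gaps


# Hypothetical keyword lists for your site and two competitors.
own = ["seo audit", "rank tracker"]
competitors = {
    "competitor-a.example": ["seo audit", "keyword gap tool", "Rank Tracker"],
    "competitor-b.example": ["keyword gap tool", "local seo checklist"],
}

gaps = missing_keywords(own, competitors)
for keyword, ranked_by in gaps.items():
    print(f"{keyword}: {', '.join(ranked_by)}")
```

Keywords that several competitors share but you lack are often the most promising gaps to close first.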

While the platform offers many benefits, there are also some downsides to be aware of, such as:

- Higher-priced plans are required for some features. For instance, historical data on keywords is only available to Pro and Business plan users.

- Data is limited to Google only. SE Ranking’s Competitor Research Tool only provides data for Google.

Use Google Keyword Planner

Google Keyword Planner is a free Google service, which you can use to find competitors’ paid keywords.

Here’s the list of benefits this tool offers in terms of competitive keyword analysis:

- Free access. Keyword Planner is completely free to use, which makes it a great option for SEO newbies and businesses with limited budgets.

- Core keyword data. The tool shows core SEO metrics like search volume, competition, and suggested bid prices for each identified keyword.

- Keyword categorization. Keyword Planner allows you to organize keywords into different groups, which may be helpful for creating targeted ad campaigns.

- Historical data. The tool has four years of historical data available.

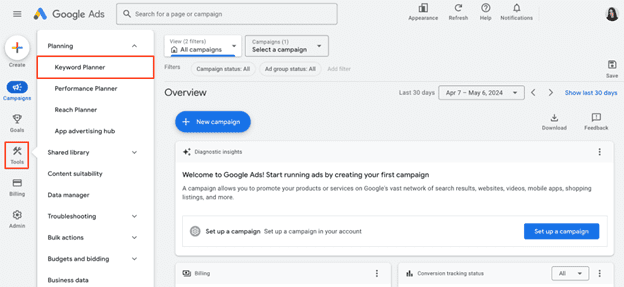

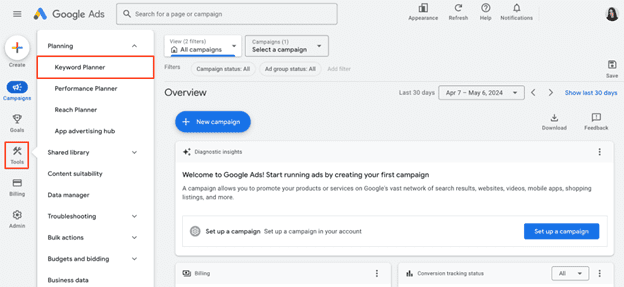

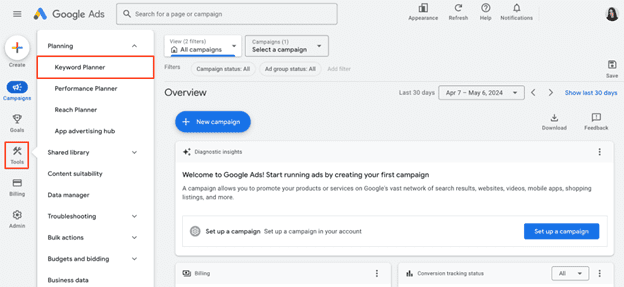

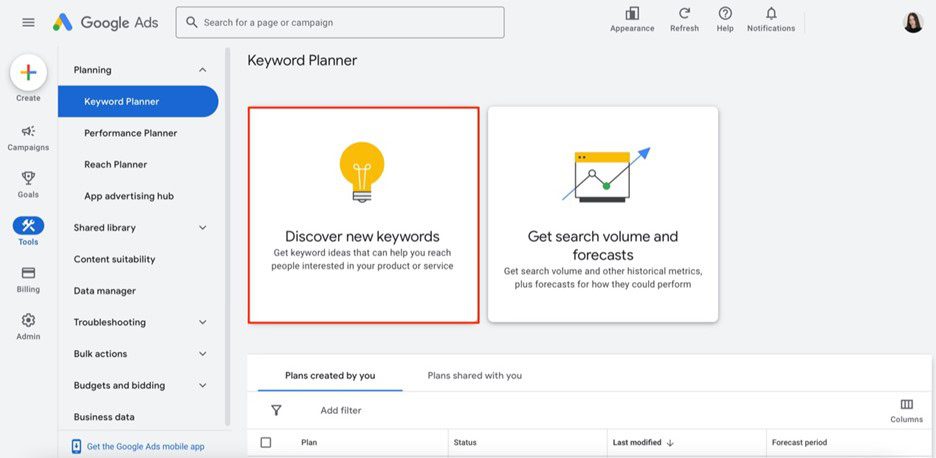

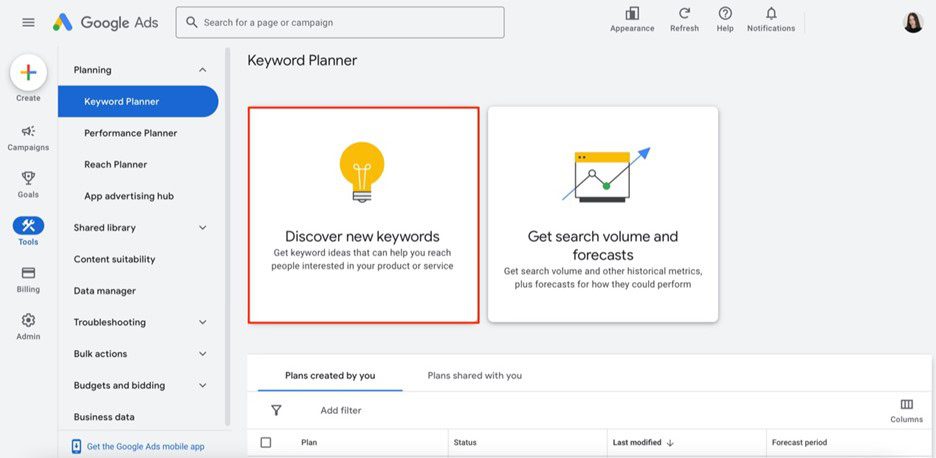

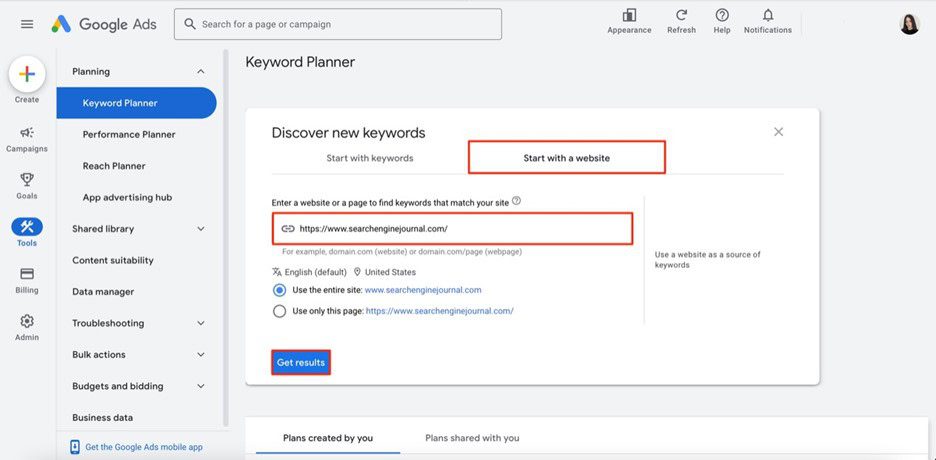

Once you log into your Google Ads account, navigate to the Tools section and select Keyword Planner.

Now, click on the Discover new keywords option.

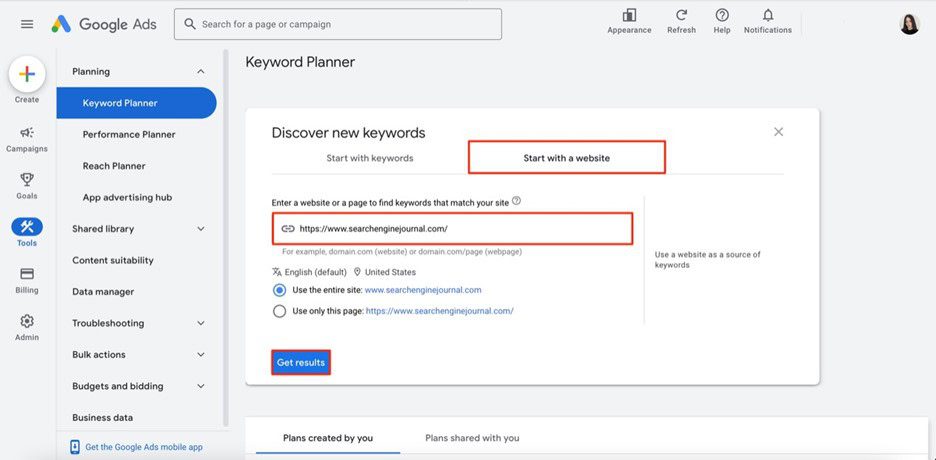

Choose the Start with a website option, enter your competitor’s website domain, region, and language, then choose to analyze the whole site (recommended for deeper insights) or a specific URL.

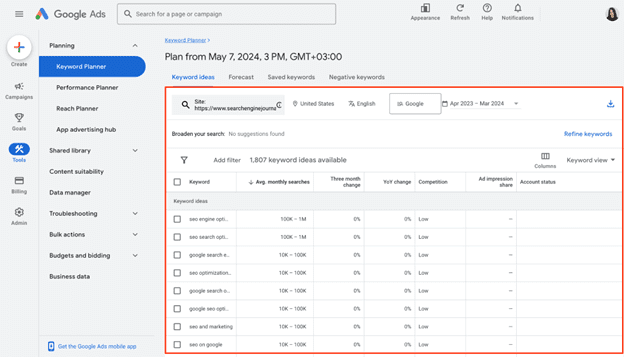

And there you have it: a table of the keywords Keyword Planner associates with the analyzed website, which points to the terms it likely targets in its Google Ads campaigns.

Although Keyword Planner can be helpful, it’s not the most effective and data-rich tool for finding competitors’ keywords. Its main drawbacks are the following:

- No organic data. The tool only offers data on paid keywords, which is mainly suitable for advertising campaigns.

- Broad search volume data. Since search volume is displayed in ranges rather than exact numbers, it can be difficult to precisely assess the demand for identified keywords.

- No keyword gap feature. You cannot compare your keywords with your competitors’ side by side, so the tool won’t show you the keywords you’re missing.

So, if you want to access more reliable and in-depth data on competitors’ keywords, you’ll most likely need to consider other dedicated SEO tools.
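To illustrate why ranged search volumes are hard to act on, the sketch below turns a range label into numeric bounds. It assumes labels in a form such as "1K – 10K" or "100 – 1K"; that format and the `parse_volume_range` helper are assumptions made for this example, not part of any Google API.

```python
import re

# Suffix multipliers assumed for range labels like "1K" or "1M".
_MULTIPLIERS = {"": 1, "K": 1_000, "M": 1_000_000}


def parse_volume_range(text):
    """Turn a label such as '1K – 10K' into numeric (low, high) bounds."""
    parts = re.findall(r"(\d+(?:\.\d+)?)\s*([KM]?)", text.upper())
    if len(parts) != 2:
        raise ValueError(f"Expected two numbers in range label: {text!r}")
    low, high = (int(float(num) * _MULTIPLIERS[suffix]) for num, suffix in parts)
    return low, high


low, high = parse_volume_range("1K – 10K")
print(f"Monthly searches fall somewhere between {low} and {high}")
```

A keyword at the bottom of such a range and one at the top differ by a factor of ten, which is why exact volumes from a dedicated tool make prioritization easier.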

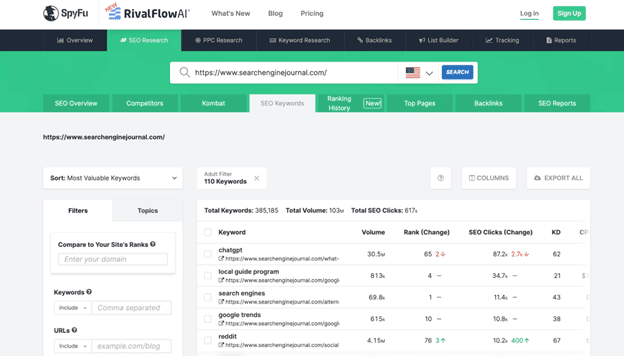

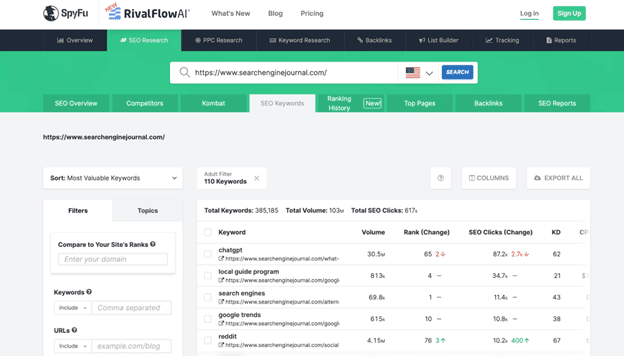

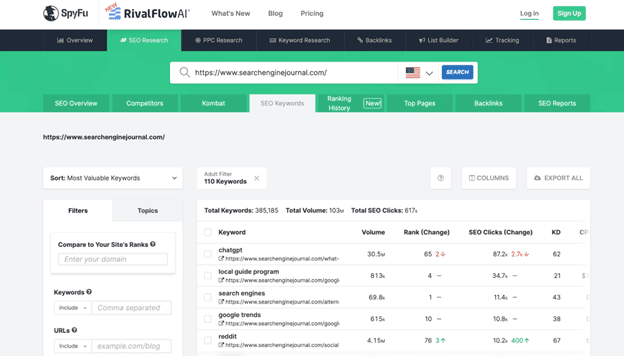

Use SpyFu

SpyFu is a comprehensive SEO and PPC analysis tool created with the idea of “spying” on competitors.

Its main pros in terms of competitor keyword analysis are the following:

- Database with 10+ years of historical data. Although it’s available only on the Professional plan, SpyFu offers long-term insights to help you monitor industry trends and adapt accordingly.

- Keyword gap analysis. Using this tool, you can easily compare your keywords to those of your competitors using metrics like search volume, keyword difficulty, organic clicks, etc.

- Affordability. It’s suitable for businesses on a tight budget.

To explore competitor data, simply visit the SpyFu website and enter your competitor’s domain in the search bar.

You’ll be presented with valuable insights into their SEO performance, from estimated traffic to the list of their top-performing pages and keywords. Navigate to the Top Keywords section and click the View All Organic Keywords button to see the search terms they rank for.

However, the free version shows only the top 5 keywords for a domain, along with metrics like search volume, rank change, and SEO clicks. To perform a more comprehensive analysis, you’ll need to upgrade to a paid plan.

The tool’s main cons are the following:

- Keyword data may be outdated. On average, SpyFu updates data on keyword rankings once a month.

- Limited number of target regions. Keyword data is available for just 14 countries.

Wrapping Up

There’s no doubt that finding competitors’ keywords is a great way to optimize your own content strategy and outperform your rivals in SERPs.

By following the step-by-step instructions described in this article, we’re sure you’ll be able to find high-value keywords you haven’t considered before.

Ready to start optimizing your website? Sign up for SE Ranking and get the data you need to deliver great user experiences.

Image Credits

Featured Image: Image by SE Ranking. Used with permission.