HIV could infect 1,400 infants every day because of US aid disruptions

Around 1,400 infants could be infected with HIV every day as a result of the new US administration’s cuts to funding for AIDS organizations, new modeling suggests.

In an executive order issued January 20, President Donald Trump paused new foreign aid funding to global health programs, and four days later, US Secretary of State Marco Rubio issued a stop-work order on existing foreign aid assistance. Surveys suggest that these changes forced more than a third of the organizations surveyed that provide essential HIV services around the world to close within days of the announcements.

Hundreds of thousands of people are losing access to HIV treatments as a result. Women and girls are missing out on cervical cancer screening and services for gender-based violence, too. A waiver Rubio later issued in an attempt to restore lifesaving services has had very little impact.

“We are in a crisis,” said Jennifer Sherwood, director of research, public policy, at amfAR, the Foundation for AIDS Research, at a data-sharing event on March 17 at Columbia University in New York. “Even funds that had already been appropriated, that were in the field, in people’s bank accounts, [were] frozen.”

Rubio approved a waiver for “life-saving” humanitarian assistance on January 28. “This resumption is temporary in nature, and with limited exceptions as needed to continue life-saving humanitarian assistance programs, no new contracts shall be entered into,” he said in a statement at the time.

The US President’s Emergency Plan for AIDS Relief (PEPFAR), which invests billions of dollars in the global AIDS response every year, was also granted a waiver on February 1 to continue “life-saving” work.

Despite this waiver, there have been devastating reports of the impact on health programs across the many low-income countries that relied for funding on the US Agency for International Development (USAID), which implements much of PEPFAR’s work. To get a better sense of the overall impact, amfAR conducted two surveys of more than 150 organizations that rely on PEPFAR funding in more than 26 countries.

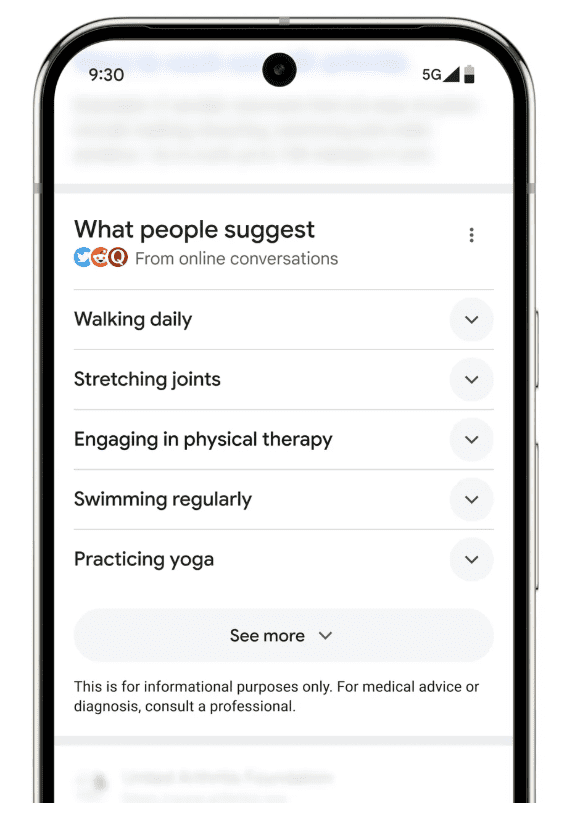

“We found really severe disruptions to HIV services,” said Sherwood, who presented the findings at Columbia. “About 90% of our participants said [the cuts] had severely limited their ability to deliver HIV services.” Specifically, 94% of follow-up services designed to monitor people’s progress were either canceled or disrupted. There were similarly dramatic disruptions to services for HIV testing, treatment, and prevention, and 92% of services for gender-based violence were canceled or disrupted.

The cuts have plunged organizations into a “deep financial crisis,” said Sherwood. Almost two-thirds of respondents said community-based staff were laid off before the end of January. When the team asked these organizations how long they could stay open without US funding, 36% said they had already closed. “Only 14% said that they were able to stay open longer than a month,” said Sherwood. “And … this data was collected longer than a month ago.”

The organizations said tens of thousands of the people they serve would lose HIV treatment within a month. For some organizations, that figure was over 100,000, said Sherwood.

Part of the problem is that the stop-work order came at a time when these organizations were already experiencing “shortages in commodities,” Sherwood said. Typically, centers might give a person a six-month supply of antiretroviral drugs. Before the stop-work order, many organizations were only giving one-month supplies. “Almost all of their clients are due to come back and pick up [more] treatments in this 90-day freeze,” she said. “You can really see the panic this has caused.”

The waiver for “life-saving” treatment did little to remedy this situation. Only 5% of the organizations received funds under the waiver, while the vast majority either were told they didn’t qualify or had not been told they could restart services. “While the waiver might be one important avenue to restart some services, it cannot, on the whole, save the US HIV program,” said Sherwood. “It is very limited in scope, and it has not been widely communicated to the field.”

AmfAR isn’t the only organization tracking the impact of US funding cuts. At the same event, Sara Casey, assistant professor of population and family health at Columbia, presented results of a survey of 101 people who work in organizations reliant on US aid. They reported seeing disruptions to services in humanitarian responses, gender-based violence, mental health, infectious diseases, essential medicines and vaccines, and more. “Many of these should have been eligible for the ‘life-saving’ waivers,” Casey said.

Casey and her colleagues have also been interviewing people in Colombia, Kenya, and Nepal. In those countries, women of reproductive age, newborns and children, people living with HIV, members of the LGBTQI+ community, and migrants are among those most affected by the cuts, she said, and health workers, who are primarily women, are losing their livelihoods.

“There will be really disproportionate impacts on the world’s most vulnerable,” said Sherwood. Women make up 67% of the health-care workforce, according to the World Health Organization. They also make up 63% of PEPFAR clients. PEPFAR has supported gender equality and services for gender-based violence. “We don’t know if other countries or other donors … can or will pick up these types of programs, especially in the face of competing priorities about keeping people on treatment and keeping people alive,” said Sherwood.

Sherwood and her colleagues at amfAR have also done some modeling work to determine the potential impact of cuts to PEPFAR on women and girls, using data from last year to create their estimates. “Each day that the stop-work order is in place, we estimate that there are 1,400 new HIV infections among infants,” she said. And every day, over 7,000 women stand to miss out on cervical cancer screenings.

The funding cuts have also had a dramatic effect on mental-health services, said Farah Arabe, who serves on the advisory board of the Global Mental Health Action Network. Arabe presented the preliminary findings of an ongoing survey of mental-health organizations from 29 countries that receive US aid. “Unfortunately, this is a very grim picture,” she said. “Only 5% of individuals who were receiving services in 2024 will be able to receive services in 2025.”

The same goes for children and adolescents. “This is a particularly sad picture because children … are going through brain development,” she said. “Impacts … at this early stage of life have lifelong impacts on academic achievement, economic productivity, mental health, physical health … even the ability to parent the next generation.”

For now, nonprofits and aid and research organizations are scrambling to try to understand, and potentially limit, the impact of the cuts. Some are hoping to locate new sources of funding, independent of the US.

“I am deeply concerned that progress in disease eradication, poverty reduction, and gender equality is at risk of being reversed,” said Thoai Ngo of Columbia University’s Mailman School of Public Health, who chaired the event. “Without urgent action, preventable deaths will rise, more people will fall into poverty, and as always, women and girls will bear the heaviest burden.”

On March 10, Rubio announced the results of his department’s review of USAID. “After a 6 week review we are officially cancelling 83% of the programs at USAID,” he shared via the social media platform X.