What I learned from the UN’s “AI for Good” summit

This story originally appeared in The Algorithm, our weekly newsletter on AI.

Greetings from Switzerland! I’ve just come back from Geneva, which last week hosted the UN’s AI for Good Summit, organized by the International Telecommunication Union. The summit’s big focus was how AI can be used to meet the UN’s Sustainable Development Goals, such as eradicating poverty and hunger, achieving gender equality, and promoting clean energy and climate action.

The conference featured lots of robots (including one that dispenses wine), but what I liked most of all was how it managed to convene people working in AI from around the globe. There were speakers from China, the Middle East, and Africa, such as Pelonomi Moiloa, the CEO of Lelapa AI, a startup building AI for African languages. AI can be very US-centric and male-dominated, and any effort to make the conversation more global and diverse is laudable.

But honestly, I didn’t leave the conference feeling confident that AI was going to play a meaningful role in advancing any of the UN goals. In fact, the most interesting speeches were about how AI is doing the opposite. Sage Lenier, a climate activist, talked about how we must not let AI accelerate environmental destruction. Tristan Harris, the cofounder of the Center for Humane Technology, gave a compelling talk connecting the dots between our addiction to social media, the tech sector’s financial incentives, and our failure to learn from previous tech booms. And Mia Shah-Dand, the founder of Women in AI Ethics, reminded us that there are still deeply ingrained gender biases in tech.

So while the conference itself was about using AI for “good,” I would have liked to see more talk about how increased transparency, accountability, and inclusion could make AI itself good from development to deployment.

We now know that generating one image with generative AI can use as much energy as charging a smartphone. I would have liked more honest conversations about how to make the technology itself more sustainable in order to meet climate goals. And it felt jarring to hear discussions about how AI can be used to help reduce inequalities when we know that so many of the AI systems we use are built on the backs of human content moderators in the Global South, who sift through traumatizing content while being paid peanuts.

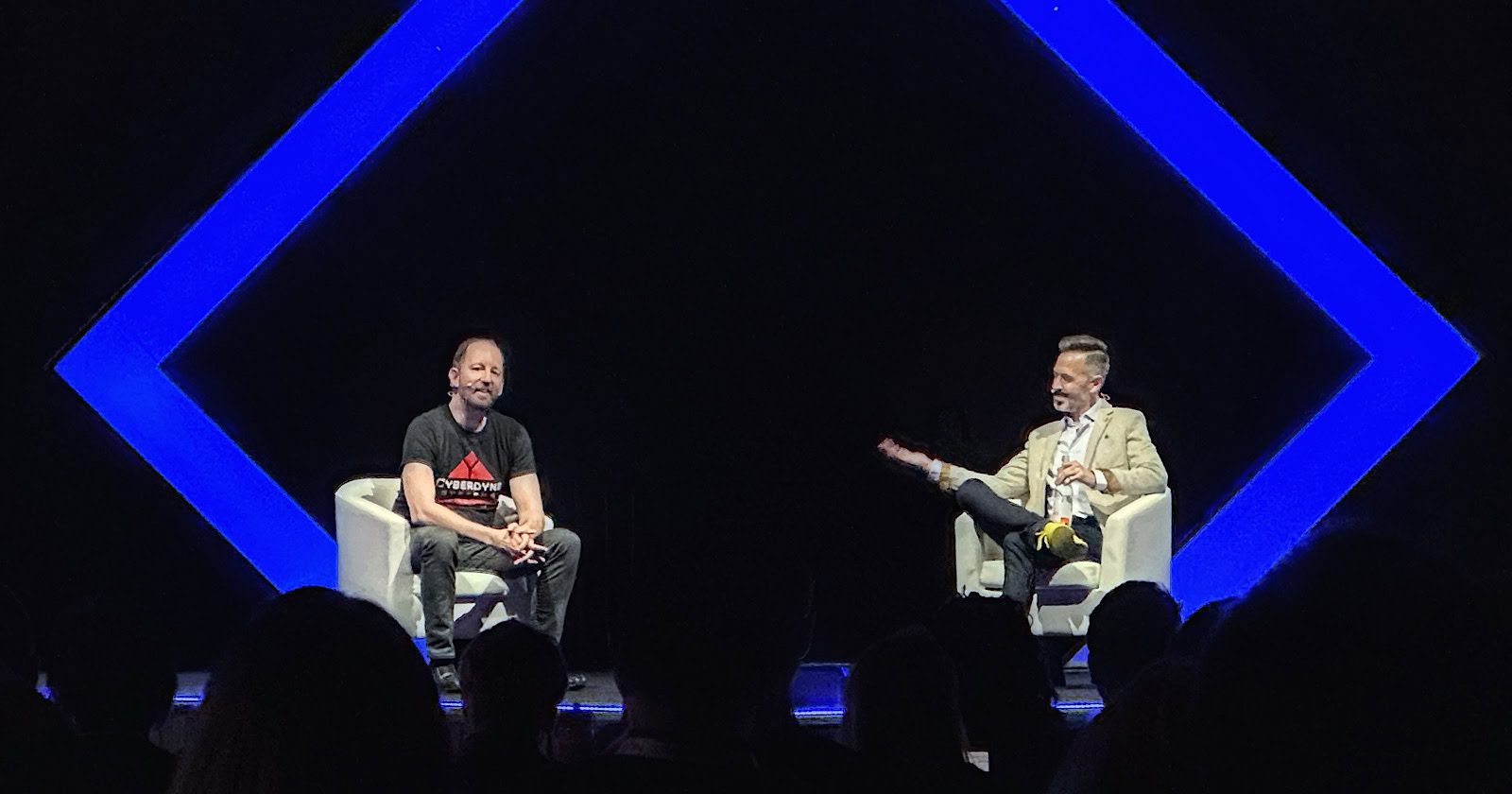

Making the case for the “tremendous benefit” of AI was OpenAI’s CEO Sam Altman, the star speaker of the summit. Altman was interviewed remotely by Nicholas Thompson, the CEO of the Atlantic, which has, incidentally, just announced a deal that lets OpenAI use its content to train new AI models. OpenAI is the company that instigated the current AI boom, and it would have been a great opportunity to ask him about all these issues. Instead, the two had a relatively vague, high-level discussion about safety, leaving the audience none the wiser about what exactly OpenAI is doing to make its systems safer. It seemed the audience was simply supposed to take Altman’s word for it.

Altman’s talk came a week or so after Helen Toner, a researcher at the Georgetown Center for Security and Emerging Technology and a former OpenAI board member, said in an interview that the board found out about the launch of ChatGPT through Twitter, and that Altman had on multiple occasions given the board inaccurate information about the company’s formal safety processes. She has also argued that it is a bad idea to let AI firms govern themselves, because the immense profit incentives will always win. (Altman said he “disagree[s] with her recollection of events.”)

When Thompson asked Altman what the first good thing to come out of generative AI will be, Altman mentioned productivity, citing examples such as software developers who can use AI tools to do their work much faster. “We’ll see different industries become much more productive than they used to be because they can use these tools. And that will have a positive impact on everything,” he said. I think the jury is still out on that one.


Deeper Learning

Why Google’s AI Overviews gets things wrong

Google’s new feature, called AI Overviews, provides brief, AI-generated summaries highlighting key information and links on top of search results. Unfortunately, within days of AI Overviews’ release in the US, users were sharing examples of responses that were strange at best. It suggested that users add glue to pizza or eat at least one small rock a day.

MIT Technology Review explains: In order to understand why AI-powered search engines get things wrong, we need to look at how they work. The models that power them simply predict the next word (or token) in a sequence, which makes them appear fluent but also leaves them prone to making things up. They have no ground truth to rely on, but instead choose each word purely on the basis of a statistical calculation. Worst of all? There’s probably no way to fix things. That’s why you shouldn’t trust AI search engines. Read more from Rhiannon Williams.

Bits and Bytes

OpenAI’s latest blunder shows the challenges facing Chinese AI models

OpenAI’s GPT-4o data set is polluted by Chinese spam websites. But this problem is indicative of a much wider issue for those building Chinese AI services: finding the high-quality data sets needed to train their models is tricky, because of the way China’s internet functions. (MIT Technology Review)

Five ways criminals are using AI

Artificial intelligence has brought a big boost in productivity—to the criminal underworld. Generative AI has made phishing, scamming, and doxxing easier than ever. (MIT Technology Review)

OpenAI is rebooting its robotics team

After disbanding its robotics team in 2020, the company is trying again. The resurrection is in part thanks to rapid advancements in robotics brought by generative AI. (Forbes)

OpenAI found Russian and Chinese groups using its tech for propaganda campaigns

OpenAI said that it caught, and removed, groups from Russia, China, Iran, and Israel that were using its technology to try to influence political discourse around the world. But this is likely just the tip of the iceberg when it comes to how AI is being used to affect this year’s record-breaking number of elections. (The Washington Post)

Inside Anthropic, the AI company betting that safety can be a winning strategy

The AI lab Anthropic, creator of the Claude model, was started by former OpenAI employees who resigned over “trust issues.” This profile is an interesting peek inside one of OpenAI’s competitors, showing how the ideology behind AI safety and effective altruism is guiding business decisions. (Time)

AI-directed drones could help find lost hikers faster

Drones are already used for search and rescue, but planning their search paths is more art than science. AI could change that. (MIT Technology Review)