Water shortages in Southern California made an indelible impression on Evelyn Wang ’00 when she was growing up in Los Angeles. “I was quite young, perhaps in first grade,” she says. “But I remember we weren’t allowed to turn our sprinklers on. And everyone in the neighborhood was given disinfectant tablets for the toilet and encouraged to keep flushing to a minimum. I didn’t understand exactly what was happening. But I saw that everyone in the community was affected by the scarcity of this resource.”

Today, as extreme weather events increasingly affect communities around the world, Wang is leading MIT’s effort to tackle the interlinked challenges of a changing climate and a burgeoning global demand for energy. Last April, after wrapping up a two-year stint directing the US Department of Energy’s Advanced Research Projects Agency–Energy (ARPA-E), she returned to the campus where she’d been both an undergraduate and a faculty member to become the inaugural vice president for energy and climate.

“The accelerating problem of climate change and its countless impacts represent the greatest scientific, technical, and policy challenge of this or any age,” MIT President Sally Kornbluth wrote in a January 2025 letter to the MIT community announcing the appointment. “We are tremendously fortunate that Evelyn Wang has agreed to lead this crucial work.”

A time to lead

MIT has studied and worked on problems of climate and energy for decades. In recent years, with temperatures rising, storms strengthening, and energy demands surging, that work has expanded and intensified, spawning myriad research projects, policy proposals, papers, and startups. The challenges are so urgent that MIT launched several Institute-wide initiatives, including President Rafael Reif’s Climate Grand Challenges (2020) and President Kornbluth’s Climate Project (2024).

But Kornbluth has argued that MIT needs to do even more. Her creation of the new VP-level post that Wang now holds underscores that commitment.

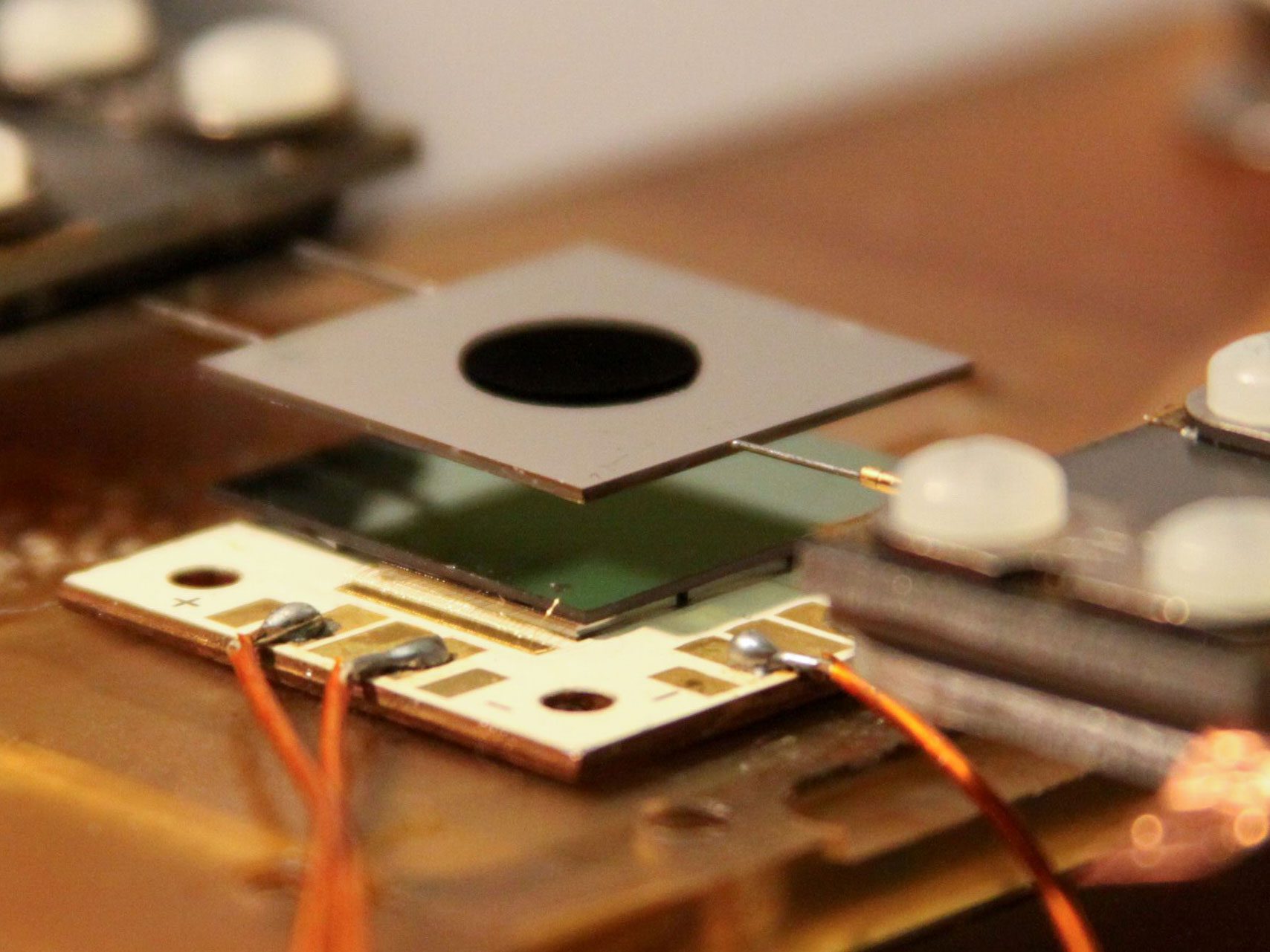

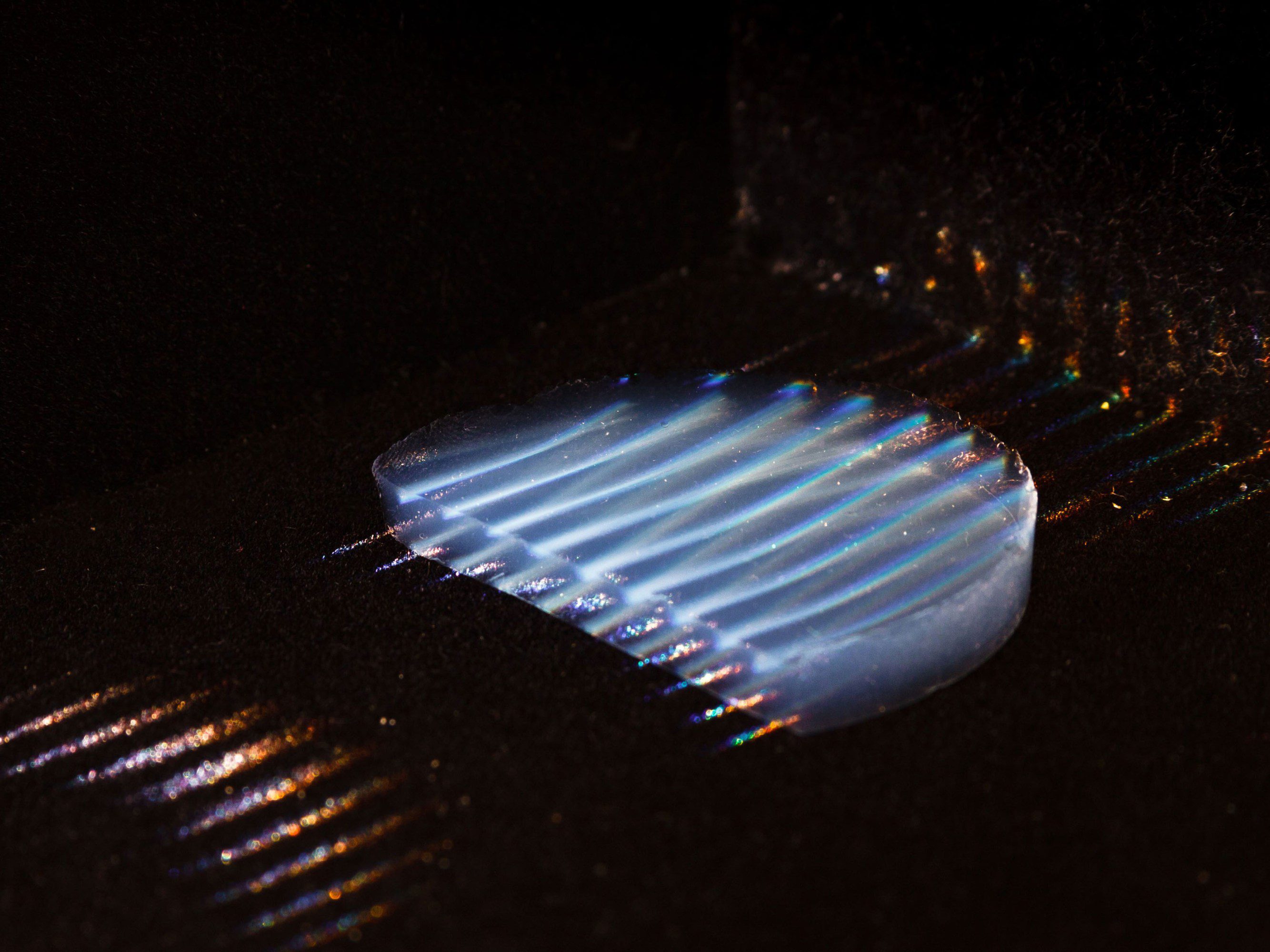

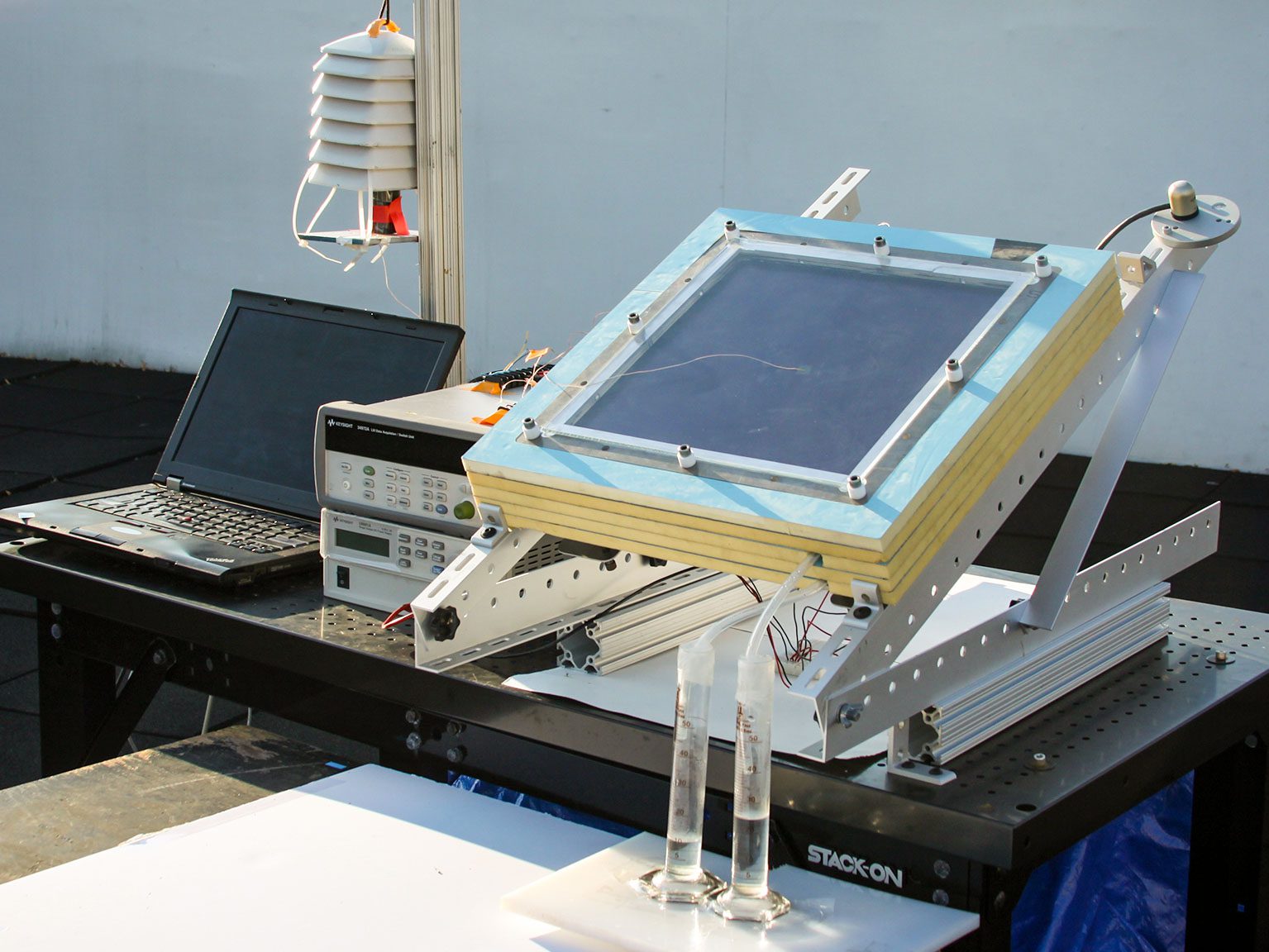

Wang is well suited for the role. The Ford Professor of Engineering at MIT and former head of the Department of Mechanical Engineering, she joined the faculty in 2007, shortly after she completed her PhD at Stanford University. Her research centers on thermal management and energy conversion and storage, but she also works on nano-engineered surfaces and materials, as well as water harvesting and purification. Wang and her colleagues have produced a device based on nanophotonic crystals that could double the efficiency of solar cells—one of MIT Technology Review’s 10 breakthrough technologies of 2017. And the device she invented with Nobel laureate Omar Yaghi for extracting water from very dry air was named one of 2017’s top 10 emerging technologies by Scientific American and the World Economic Forum, and in 2018 earned her the Prince Sultan Bin Abdulaziz International Prize for Water. (See story on this water harvesting research in the January/February issue of MIT Technology Review.)

Wang has a deep knowledge of the Institute—and even deeper roots here. (See “Family Ties,” MIT Alumni News, March/April 2015.) Her parents met at MIT as PhD students from Taiwan in the 1960s; they were married in the MIT chapel. When Wang arrived at MIT in 1996 as a first-year student, her brother Alex ’96, MEng ’97, had just graduated and was working on a master’s degree in electrical engineering. Her other brother, Ben, would earn his PhD at MIT in 2007. She even met her husband, Russell Sammon ’98, at MIT. Apart from her time at ARPA-E, a very brief stint at Bell Labs, and sabbatical work at Google, she has spent her entire professional career at the Institute. So she has a rare perspective on the resources MIT can draw on to respond to the climate and energy challenge.

“The beating heart of MIT is innovation,” she says. “We are innovators. And innovation is something that will help us leapfrog some of the potential hurdles as we work toward climate and energy solutions. Our ability to innovate can enable us to move closer toward energy security, toward sustainable resource development and use.”

The prevailing innovative mindset at MIT is backed by a deep desire to tackle the problem.Many people on campus are passionate about climate and energy, says Wang. “That is why President Kornbluth made this her flagship initiative. We are fortunate to have so many talented students and faculty, and to be able to rely on our infrastructure. I know they will all step up to meet the challenges.” But she is quick to point out that the problems are too large for any one entity—including MIT—to address on its own. So she’s aiming to encourage more collaboration both among MIT researchers and with other institutions.

“If we want to solve this problem of climate change, if we want to change the trajectory in the next decade, we cannot continue to do business as usual,” she says. “That is what is most exciting about this problem and, frankly, is why I came back to campus to take this job.”

Hand in hand

The coupling of climate and energy in Wang’s portfolio is strategic. “Energy and climate are two sides of the same coin,” she explains. “A major reason we are seeing climate change is that we haven’t deployed solutions at a scale necessary to mitigate the CO2 emissions from the energy sector. The ways we generate energy and manage emissions are fundamental to any strategy addressing climate change. At the same time, the world demands more and more energy—a demand we can’t possibly meet through a single means.”

“Zero-emissions and low-carbon approaches will not be enough to supply the necessary energy or to reverse our impact on the climate … We need to do something truly transformational. That is the heart of the challenge.”

What’s more, she contends that switching from fossil fuels to cleaner energy, while fundamental, is only part of the solution. “Zero-emissions and low-carbon approaches will not be enough to supply the necessary energy or to reverse our impact on the climate,” she says. “We need to consider the environmental impacts of these new fuels we develop and deploy. We need to use data analysis to move goods and energy more efficiently and intelligently. We need to consider raising more of our food in water and using food by-products and waste to help sequester carbon. In short, we need to do something truly transformational. That is the heart of the challenge.”

That challenge seems destined to grow more daunting in the coming years. There are still, Wang observes, areas of “energy poverty”—places where people cannot access sufficient energy to sustain their well-being. But solving that problem will only drive up energy production and consumption worldwide. The explosive growth of AI will likely do the same, since the huge data centers that power the technology require enormous quantities of energy for both computation and cooling.

Wang believes that while AI will continue to drive electricity demand, it can also contribute to creating a more sustainable future.“We can use AI to develop climate and energy solutions,” she says. “AI can play a primary role in solution sets, can give us new and improved ways to manage intermittent loads in the energy grid. It can help us develop new catalysts and chemicals or help us stabilize the plasma we’ll use in nuclear fusion. It could augment climate and geospatial modeling that would allow us to predict the impact of potential climate solutions before we implement them. We could even use AI to reduce computational needs and thereby ease cooling demand.”

Change the narrative, change the culture

MIT was humming with climate and energy research long before Wang returned to campus in 2025 after wrapping up her work at ARPA-E. Almost 400 researchers across 90% of MIT’s departments responded to President Reif’s 2020 Climate Grand Challenges initiative. The Institute awarded $2.7 million to 27 finalist teams and identified five flagship projects, including one to create an early warning system to help mitigate the impact of climate disasters, another to predict and prepare for extreme weather events, and an ambitious project to slash nearly half of all industrial carbon emissions.

About 250 MIT faculty and senior researchers are now involved in the Climate Project at MIT, a campus-wide initiative launched in 2024 that works to generate and implement climate solutions, tools, and policy proposals. Conceived to bolster MIT’s already significant efforts as a leading source of technological, behavioral, and policy solutions to global climate issues, the Climate Project has identified six “missions”: decarbonizing energy and industry; preserving the atmosphere, land, and oceans; empowering frontline community action; designing resilient and prosperous cities; enabling new policy approaches; and wild cards, a catch-all category that supports development of unconventional solutions outside the scope of the other missions. Faculty members direct each mission.

With so much climate research already underway,a large part of Wang’s new role is to support and deepen existing projects. But to fully tap into MIT’s unique capabilities, she says, she’s aiming to foster some cultural shifts. And that begins with identifying ways to facilitate cooperation—both across the Institute and with external partners—on a scale that can make “something truly transformational” happen, she says. “At this stage, with the challenges we face in energy and climate, we need to do something ambitious.”

In Wang’s view, getting big results depends on taking a big-picture, holistic approach that will require unprecedented levels of collaboration. “MIT faculty have always treasured their independence and autonomy,” she says. “Traditionally, we’ve tried to let 1,000 flowers bloom. And traditionally we’ve done that well, often with outstanding results. But climate and energy are systems problems, which means we need to create a systems solution. How do we bring these diverse faculty together? How do we align their efforts, not just in technology, but also in policy, science, finance, and social sciences?”

To encourage MIT faculty to collaborate across departments, schools, and disciplines, Wang recently announced that the MIT Climate Project would award grants of $50,000 to $250,000 to collaborative faculty teams that work on six- to 24-month climate research projects. Student teams are invited to apply for research grants of up to $15,000.“We can’t afford to work in silos,” she says. “People from wildly diverse fields are working on the same problems and speaking different professional languages. We need to bring people together in an integrative way so we can attack the problem holistically.”

Wang also wants colleagues to reach beyond campus. MIT, she says, needs to form real, defined partnerships with other universities, as well as with industries, investors, and philanthropists. “This isn’t just an MIT problem,” she says. “Individual efforts and 1,000 flowers alone will not be enough to meet these challenges.”

Thinking holistically—and in terms of systems—will help focus efforts on the areas that will have the greatest impact. At a Climate Project presentation in October, Wang outlined an approach that would focus on building well-being within communities. This will begin with efforts to empower communities by developing coastal resilience, to decarbonize ports and shipping, and to design and build data centers that integrate smoothly and sustainably with nearby communities. She encouraged her colleagues to think in terms of big-picture solutions for the future and then to work on the components needed to build that future.

“As researchers, we sometimes jump to a solution before we have fully defined the problem,” she explains. “Let’s take the problem of decarbonization in transportation. The solution we’ve come up with is to electrify our vehicles. When we run up against the problem of the range of these vehicles, our first thought is to create higher-density batteries. But the real problem we’re facing isn’t about batteries. It’s about increasing the range of these vehicles. And the solution to that problem isn’t necessarily a more powerful battery.”

“Too often the narrative around climate is steeped in doom and gloom … The goal of any climate project is to build and protect well-being. How can we help communities thrive, empower people to live as they wish, even as the climate is changing?”

Wang is confident that her MIT colleagues have both the capacity and the desire to embrace the holistic approach she envisions. “When I was accepted to MIT as an undergraduate and visited the campus, the thing that made me certain I wanted to enroll here was the people,” she recalls. “They weren’t just talented. They had so many different interests. They were passionate about solving big problems. And they were eager to learn from one another. That spirit hasn’t changed. And that’s the spirit I and my team can tap into.”

Wang believes MIT and other institutions working on climate and energy solutions also need to change how we talk about the challenge. “Too often the narrative around climate is steeped in doom and gloom,” she says. “The underlying issue here is our well-being. That’s what we care about, not the climate. The goal of any climate project is to build and protect well-being. How can we help communities thrive, empower people to live as they wish, even as the climate is changing? How can we create conditions of resilience, sustainability, and prosperity? That is the framework I would like us to build on.” For example, in areas where extreme weather threatens homes or rising temperatures are harming human health, we should be developing affordable technologies that make dwellings more resilient and keep people cooler.

Wang’s colleagues at MIT concur with her assessment of the mission ahead. They also have a deep respect for her scholarship and leadership. “I couldn’t think of a better person to represent MIT’s diverse and powerful ability to attack climate,” says Susan Solomon, the Lee and Geraldine Martin Professor of Environmental Studies at MIT and recipient of the US National Medal of Science for her work on the Antarctic ozone hole. “Communicating MIT’s capabilities to provide what the nation needs—not only in engineering but also in … economics, the physical, chemical, and biological sciences, and much more—is an immense challenge.” But she’s confident that Wang will do it justice. “Evelyn is a consummate storyteller,” she says.

“She’s tremendously quick at learning new fields and driving toward what the real nuggets are that need to be addressed in solving hard problems,” says Elsa Olivetti, PhD ’07, the Jerry McAfee Professor in Engineering and director of the MIT Climate Project’s Decarbonizing Energy and Industry mission. “Her direct, meticulous thinking and leadership style mean that she can focus teams within her office to do the work that will be most impactful at scale.”

Wang’s experience at ARPA-E is expected to be especially useful. “The current geopolitical situation and the limited amount of research funding available relative to the scale of the climate problem pose formidable challenges to bringing MIT’s strengths to bear on the problem,” says Rohit Karnik, director of the Abdul Latif Jameel Water and Food Systems Lab (J-WAFS) and a collaborator with Wang on numerous projects and initiatives since both joined the mechanical engineering faculty in 2007. “Evelyn’s leadership experience at MIT and in government, her ability to take a complex situation and define a clear vision, and her passion to make a difference will serve her well in her new role.”

Wang’s new MIT appointment is seen as a good thing beyond the Institute as well. “A role like this requires a skill set that is difficult to find in one individual,” says Krista Walton, vice chancellor for research and innovation at North Carolina State University. Walton and Wang collaborated on multiple projects, including the DARPA work that produced the device for extracting water from very dry air based on the original prototype Wang co-developed. “You need scientific depth, an understanding of the federal and global landscape, a collaborative instinct and the ability to be a convener, and a strategic vision,” Walton says—and she can’t imagine a better pick for the job.

“Evelyn has an extraordinary ability to bridge fundamental science with real-world application,” she says. “She approaches collaboration as a true partnership and not as a transaction.”

A challenging funding climate

Climate scientists explore broad swaths of time, tracking trends in temperature, greenhouse gases, volcanic activity, vegetation, and more across hundreds, thousands, and even millions of years. Even average temperatures and precipitation levels are calculated over periods of three decades.

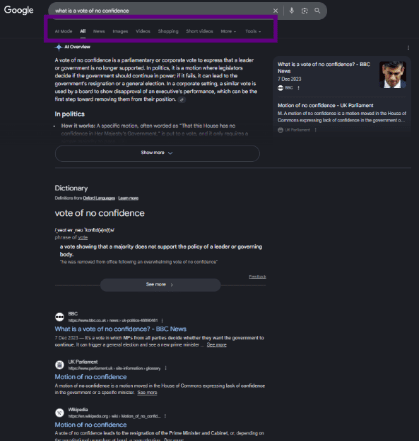

But in the realm of politics, change happens much faster, prompting sudden and sometimes startling shifts in culture and policy. The current US administration has proposed widespread budget cuts in climate and energy research. These have included slicing more than $1.5 billion from the National Oceanic and Atmospheric Administration (NOAA), canceling multiple climate-related missions at NASA, and shuttering the US Global Change Research Program (USGCRP), the agency responsible for publishing the National Climate Assessment. The Trump administration submitted a budget request that would cut the National Science Foundation budget from more than $9 billion to just over $4 billion for 2026. The New York Times reported that NSF grant funding for STEM education from January through mid-May 2025 was down 80% from its 10-year average, while NSF grant awards for math, physics, chemistry, astronomy, and materials science research were down 67%. In September, the US Department of Energy announced it had terminated 223 projects that “did not adequately advance the nation’s energy needs, were not economically viable, and would not provide a positive return on investment of taxpayer dollars.” Among the agencies affected is ARPA-E, the agency Wang directed before returning to MIT. Meanwhile, MIT research programs that rely on government sources of funding will also feel the impact of the cuts.

Acknowledging the difficulties she and MIT may face in the present moment, Wang still prefers to look forward. “Of course this is a challenging time,” she says. “There are near-term challenges and long-term challenges. We need to focus on those long-term challenges. As President Kornbluth has said, we need to continue to advocate for research and education. We need to pursue long-term solutions, to follow our convictions in addressing problems in energy and climate. And we need to be ready to seize the opportunities that reside in these long-term challenges.”

Wang also sees openings for short-term collaboration—areas where MIT and the current administration can find common ground and goals. “There is still a huge area of opportunity for us to align our interests with those of this administration,” she says. “We can move the needle forward together on energy, on national security, on minerals, on economic competitiveness. All these are interests we share, and there are pathways we can follow to meet these challenges to our nation together. MIT is a major force in the nuclear space, in both fission and fusion. These, along with geothermal, could provide the power base we need to meet our energy demands. There are significant opportunities for partnerships with this or any administration to unlock some of these innovations and implement them.”

A moonshot factory

While she views herself as a researcher and an academic, Wang’s relevant government experience should prove especially useful in her VP role at MIT. In her two years as director of ARPA-E, she coordinated a broad array of the US Department of Energy’s early-stage research and development in energy generation, storage, and use. “I think I had the best job in government,” she says. Designed to operate at arm’s length from the Department of Energy, ARPA-E searches for high-risk, high-reward energy innovation projects. “More than one observer has called ARPA-E a moonshot factory,” she says.

Seeking out and facilitating “moonshot”-worthy projects at a national and sometimes global scale gave Wang a broader lens through which to view issues of energy and climate. It also taught her that big ideas do not translate into big solutions automatically. “I learned what it takes to make an impact on energy technology at ARPA-E and I will be forever grateful,” she says. “I saw how game-changing ideas can take a decade to go from concept to deployment. I learned to appreciate the diversity of talent and innovation in the national ecosystem composed of laboratories, startups, and institutions. I saw how that ecosystem could zero in to identify real problems, envision diverse pathways, and create prototypes. And I also saw just how hard that journey is.”

Climate and energy research at MIT

MIT researchers are tackling climate and energy issues from multiple angles, working on everything from decarbonizing energy and industry to designing resilient and prosperous cities. Find out more at climateproject.mit.edu.

While MIT is already an important element in that ecosystem, Wang and her colleagues want the Institute to play an even more prominent role. “We can be a convener and collaborator, first across all of MIT’s departments, and then with industry, the financial world, and governments,” she says. “We need to do aggressive outreach and find like-minded partners.”

“Although the problems of climate and climate change are global, the most effective way MIT can address them is locally,” said Wang at the October presentation of the MIT Climate Project. “Working across schools and disciplines, collaborating with external partners, we will develop targeted solutions for individual places and communities—solutions that can then serve as templates for other places and communities.” But she also cautions against one-size-fits-all solutions. “Solar panels, for example, work wonderfully, but only in areas that have sufficient space and sunlight,” she explains. “Institutions like MIT can showcase a diversity of approaches and determine the best approach for each individual context.”

Most of all, Wang wants her colleagues to be proactive. “Because MIT is a factory of ideas, perhaps even a moonshot factory, we need to think boldly and continue to think boldly so we can make an impact as soon as possible,” she says. She also wants her colleagues to stay hopeful and not feel daunted by a challenge that can at times feel overwhelming. “We will build pilots, one at a time, and demonstrate that these projects are not only possible but practical,” she says. “And that is how we will build a future everyone wants to live in.”