Why does having insights across multiple LLMs matter for brand visibility?

Search today looks very different from what it did even a few years ago. Users are no longer browsing through SERPs to make up their own minds; instead, they are asking AI tools for conclusions, summaries, and recommendations. This shift changes how visibility is earned, how trust is formed, and how brands are evaluated during discovery. In AI-driven search, large language models interpret information, decide what matters, and present a narrative on behalf of the user.

Key takeaways

- Search has evolved; users now rely on AI for conclusions instead of traditional SERPs

- Conversational AI serves as a new discovery layer where users expect quick answers and insights

- Brands must navigate varied interpretations of their presence across different LLMs

- Yoast AI Brand Insights helps track brand mentions and identify gaps in AI visibility across models

- Understanding LLM brand visibility is crucial for modern brand strategy and perception

The rise of conversational AI as a discovery layer

“Assistant engines and wider LLMs are the new gatekeepers between our content and the person discovering that content – our potential new audience.” — Alex Moss

Search is no longer confined to typing queries into a search engine and scanning a list of links. Today’s discovery journey frequently begins with a conversation, whether that’s a typed question in a chatbot, a voice prompt to an AI assistant, or an embedded AI feature inside a platform people use every day.

This shift has made conversational AI a new layer of discovery, where users expect direct answers, recommendations, and curated insights that help them make decisions and build brand perception more quickly and confidently.

Discovery is happening everywhere

Users are now encountering AI-powered discovery across a range of interfaces:

AI chat interfaces

Tools like ChatGPT allow users to ask open-ended questions and follow up in a conversational manner. These interfaces interpret intent and tailor responses in a way that feels natural, making them a go-to for exploratory search.

Also read: What is search intent and why is it important for SEO?

Answer engines

Platforms such as Perplexity synthesize information from multiple sources and often cite them. They act as research helpers, offering concise summaries or explanations to complex queries.

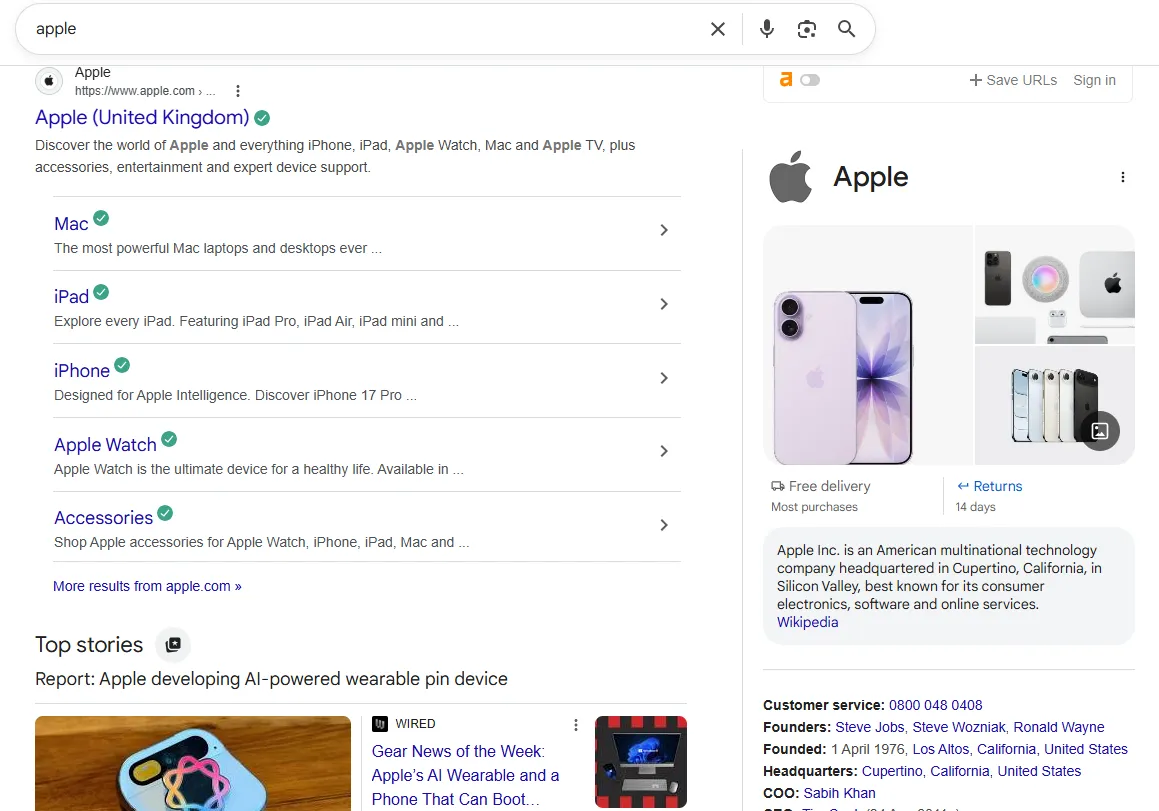

Embedded AI experiences

AI is increasingly built directly into search and discovery environments that people already use. Examples include AI-assisted summaries within search results, such as Google’s AI Overviews, as well as AI features embedded in browsers, operating systems, and apps. In these moments, users may not even think of themselves as “using AI,” yet AI is already influencing what information is surfaced first and how it is interpreted.

This broad distribution of AI discovery surfaces means users now expect information to be accessible wherever they are, whether in a chat, an app, or embedded in the places they work, shop, and explore online.

How people are using AI in their day-to-day discovery

Users interact with conversational AI for a wide range of purposes beyond traditional search. These models increasingly guide decisions, comparisons, and exploration, often earlier in the journey than classic search engines.

Here are some prominent ways people use LLMs today:

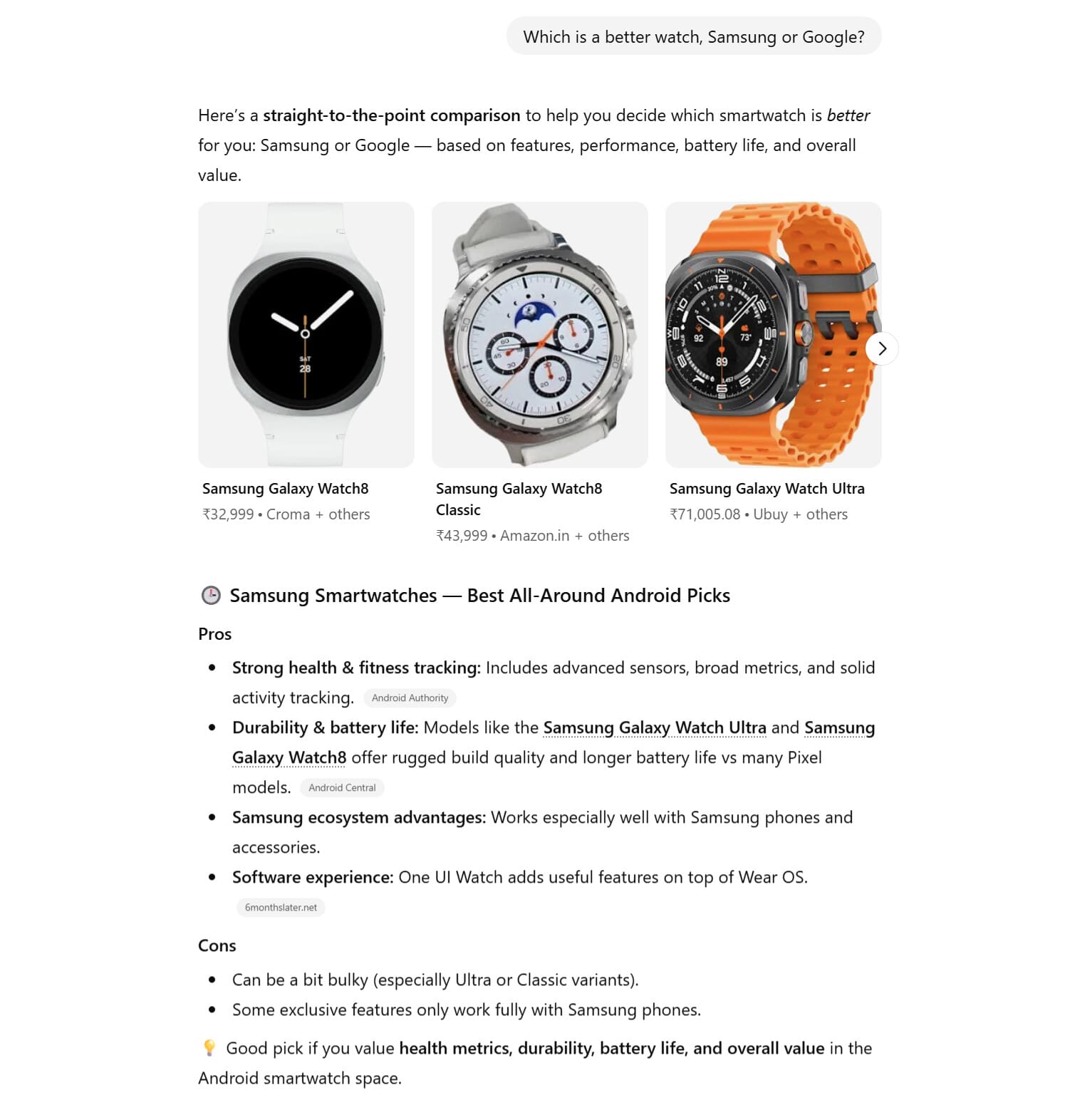

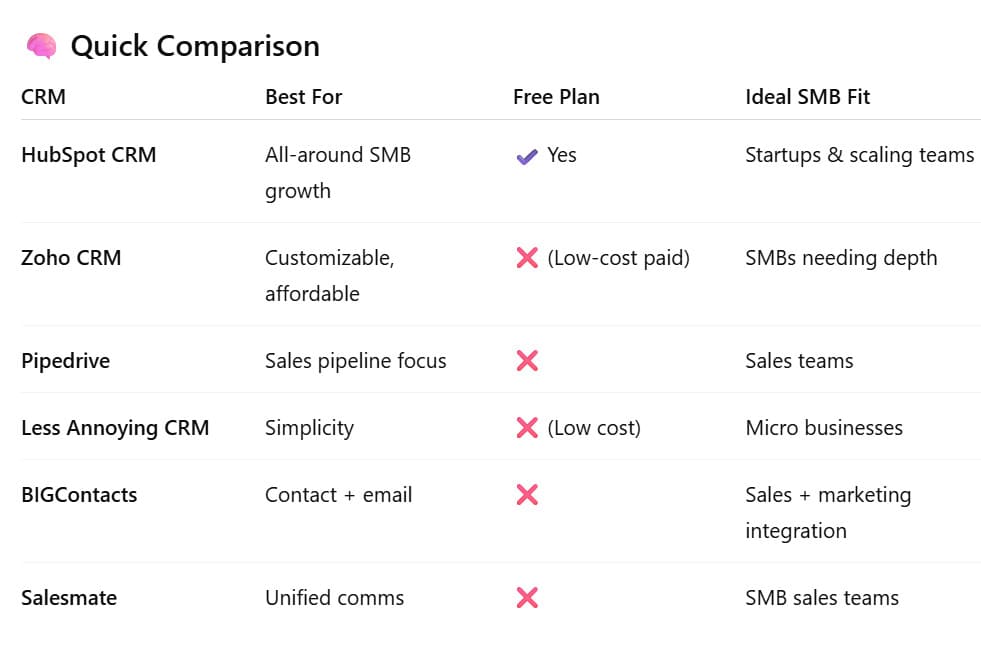

Product comparisons

Rather than visiting multiple sites and aggregating reviews, 54% of users ask AI to compare products or services directly, for example, “How does Brand A compare to Brand B?” or “What are the pros and cons of X vs Y?” AI synthesizes information into a concise summary that often feels more efficient than browsing search results.

“Best tools for…” queries

Did you know 47% of consumers have used AI to help make a purchase decision?

AI users frequently ask for ranked suggestions or curated lists such as “best SEO tools for small businesses” or “top content optimization software.” These queries serve as discovery moments, where brands can be suggested alongside context and reasoning.

Trust and validation checks

Many users prompt AI models to validate decisions or confirm perceptions, for example, “Is Brand X reputable?” or “What do people say about Service Y?” AI responses blend sentiment, context, and summarization into one narrative, affecting how trust is formed.

Also read: Why is summarizing essential for modern content?

Idea generation and research exploration

A study by Yext found that 42% of users employ AI for early-stage exploration, such as brainstorming topics, gathering potential search intents, or understanding broad categories before narrowing down specifics. AI user archetypes range from creators who use AI for ideation to explorers seeking deeper discovery.

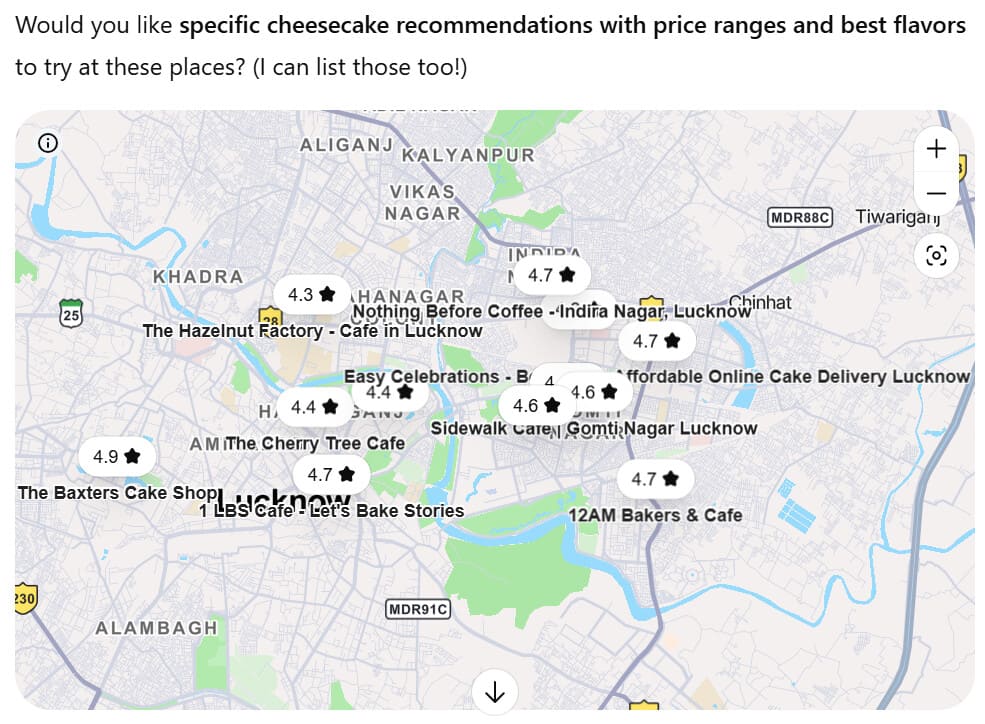

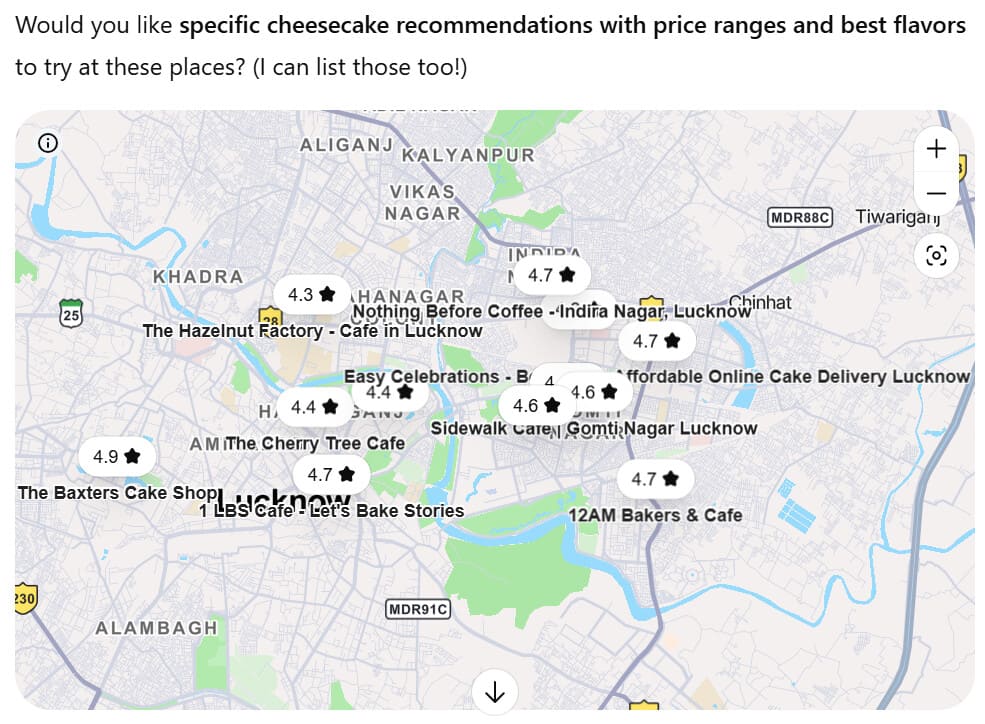

Local discovery and service search

AI is also used for local searches. For example, many users turn to AI tools to research local products or services, such as finding nearby businesses, comparing local options, or understanding community reputations. In a recent AI usage study by Yext, 68% of consumers reported using tools like ChatGPT to research local products or services, even as trust in AI for local information remains lower than traditional search.

In each of these moments, conversational AI doesn’t just surface brands; it frames them by summarizing strengths, weaknesses, use cases, and comparisons in a single response. These narratives become part of how users interpret relevance, trust, and fit far earlier in the decision-making process than in traditional search.

Not all LLMs interpret brands the same way

As conversational AI becomes a discovery layer, one assumption often sneaks in quietly: if your brand shows up well in one AI model, it must be showing up everywhere. In reality, that’s rarely the case. Large language models interpret, retrieve, and present brand information differently, which means relying on a single AI platform can give a very incomplete picture of your brand’s visibility.

To understand why, it helps to look at how some of the most widely used models approach answers and brand mentions.

How ChatGPT interprets brands

ChatGPT is often used as a general-purpose assistant. People turn to it for explanations, comparisons, brainstorming, and decision support. When it mentions brands, it tends to focus on contextual understanding rather than explicit sourcing. Brand mentions are frequently woven into explanations, recommendations, or summaries, sometimes without clear attribution.

From a visibility perspective, this means brands may appear:

- As examples in broader explanations

- As recommendations in “best tools” or comparison-style prompts

- As part of a narrative rather than a cited source

The challenge is that brand mentions can feel correct and authoritative, while still being outdated, incomplete, or inconsistent, depending on how the prompt is phrased.

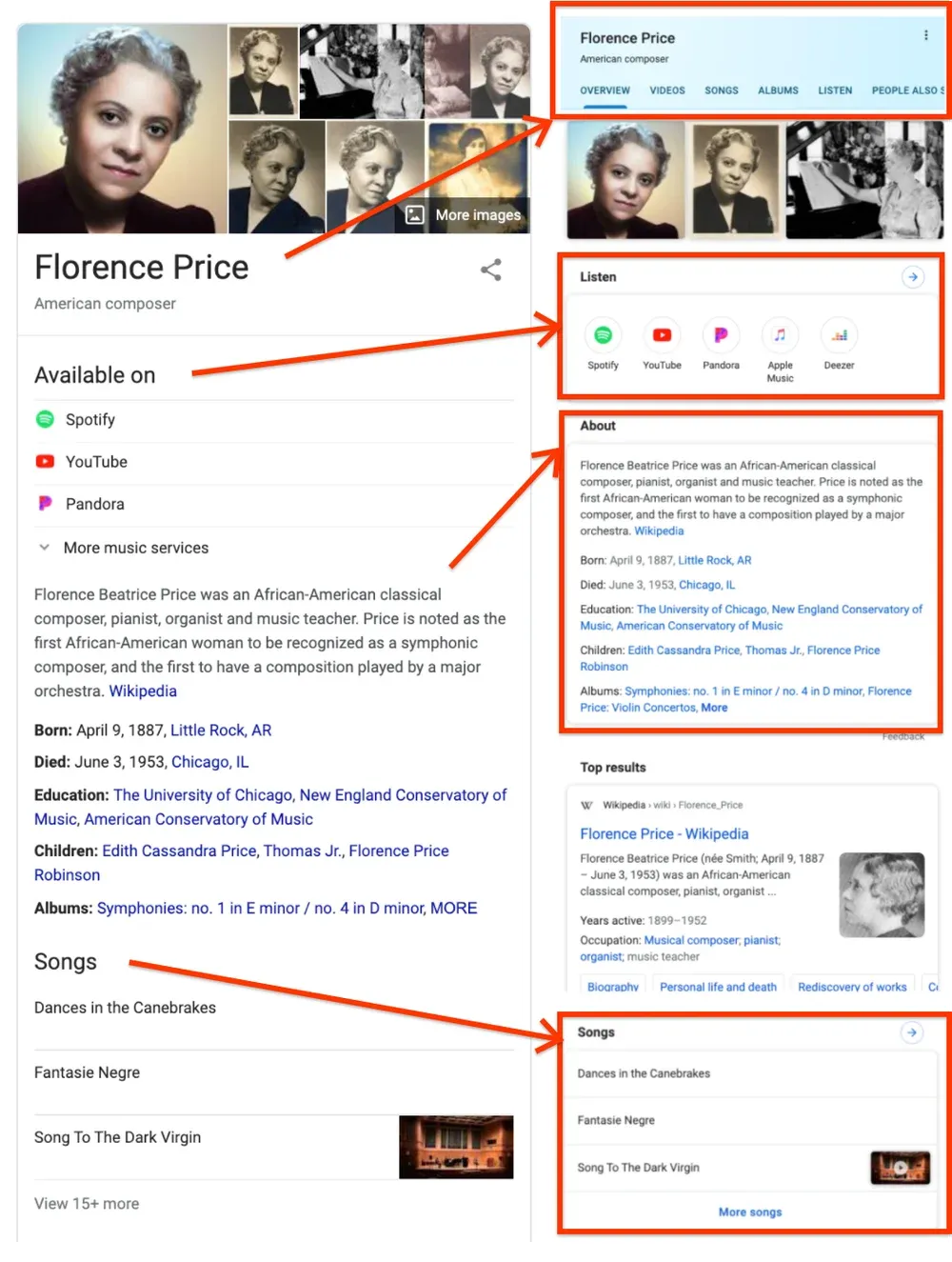

How Gemini interprets brands

Gemini is deeply connected to Google’s ecosystem, which influences how it understands and surfaces brand information. It leans more heavily on entities, structured data, and authoritative sources, and its outputs often reflect signals familiar to traditional SEO teams.

For brands, this means:

- Visibility is closely tied to how well the brand is understood as an entity

- Clear, consistent information across the web plays a bigger role

- Mentions often align more closely with established sources

Gemini can feel more predictable in some cases, but that predictability depends on strong foundational signals and accurate brand representation across trusted platforms.

How Perplexity interprets brands

Perplexity positions itself as an answer engine rather than a general assistant. It emphasizes citations and source-backed responses, which makes it popular for research and comparison queries. When brands appear in Perplexity answers, they are often tied directly to cited articles, reviews, or documentation.

This creates a different visibility dynamic:

- Brands may be surfaced only if they are referenced in cited sources

- Freshness and topical relevance matter more

- Competitors with stronger editorial or PR coverage may appear more often

Here, brand presence is tightly coupled with external content and how frequently that content is used as a reference.

How these models differ at a glance

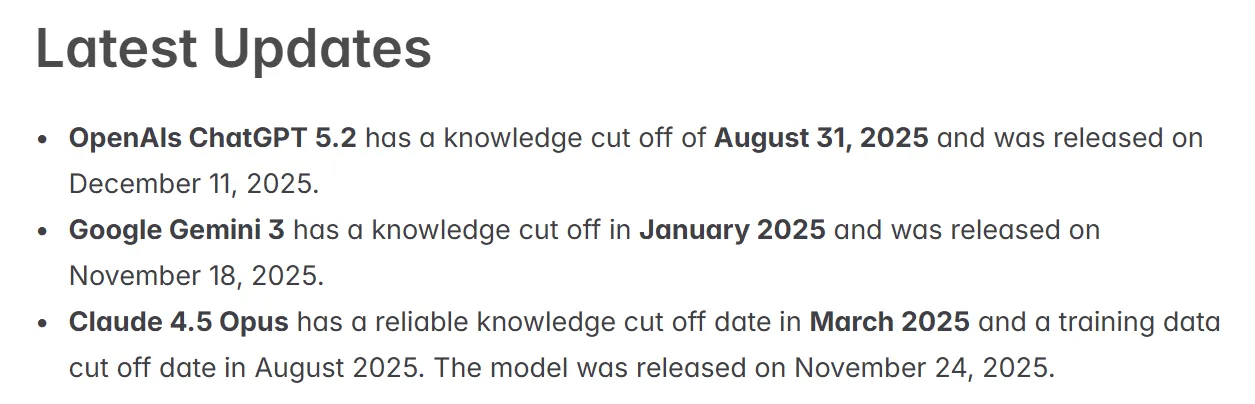

| AI Model | How brands are surfaced | What influences the visibility |
| --- | --- | --- |
| ChatGPT | Contextual mentions within explanations and recommendations | Prompt phrasing, training data, general relevance |
| Gemini | Entity-driven, aligned with authoritative sources | Structured data, brand consistency, trusted signals |
| Perplexity | Citation-based mentions tied to sources | Content coverage, freshness, external references |

Why do brands need insights across multiple LLMs?

Once you see how differently large language models interpret brands, one thing becomes clear: looking at just one AI model gives you an incomplete picture. AI-driven discovery does not produce a single, consistent version of your brand. It produces multiple interpretations, shaped by the model, its data sources, and users’ interactions with it.

Must read: When AI gets your brand wrong: Real examples and how to fix it

Therefore, tracking your brand across multiple LLMs is essential because:

Brand visibility is fragmented by default

Across different LLMs, the same brand can show up in very different ways:

- Correctly represented in one model, where information is accurate and well-contextualized

- Completely missing in another, even for relevant queries

- Partially outdated or misrepresented in a third, depending on the sources being used

This fragmentation happens because each model processes and prioritizes information differently. Without visibility across models, it’s easy to assume your brand is ‘covered’ when, in reality, it may only be visible in one corner of the AI ecosystem.

Different audiences use different AI tools

AI usage is not concentrated in a single platform. People choose tools based on intent:

- Some use conversational assistants for exploration and ideation

- Others rely on citation-led answer engines for research

- Many encounter AI passively through search or embedded experiences

If your brand appears in only one environment, you are effectively visible only to a subset of your audience. This mirrors challenges SEO teams already recognize from traditional search, where performance varies by device, location, and search feature. The difference is that with AI, these variations are less obvious and more challenging to track without dedicated insights.

Blind spots create real business risks

Limited visibility across LLMs doesn’t just affect awareness; it also limits what teams can learn about how their brand is perceived. Over time, it can lead to:

- Inconsistent brand narratives, where AI tools describe your brand differently depending on where users ask

- Missed demand, especially for comparison or “best tools for” queries

- Competitors being recommended instead, simply because they are more visible or better understood by a specific model

These outcomes are rarely intentional, but they can quietly influence brand perception and decision-making long before users reach your website.

Taken together, these points lead to one conclusion: a broader, multi-model view helps build a more complete understanding of brand visibility.

The challenge: LLM visibility is hard to measure

As brands start paying attention to how they appear in AI-generated content, a new problem becomes obvious: LLM visibility doesn’t behave like traditional search visibility. The signals are fragmented, opaque, and constantly changing, which makes tracking and understanding brand presence across AI models far more complex than tracking rankings or traffic.

Below are some key challenges brand marketers might face when trying to understand how their brand appears to large language models.

1. Lack of visibility across AI platforms

Different LLMs, such as ChatGPT, Gemini, and Perplexity, rely on different data sources, retrieval methods, and citation logic. As a result, the same brand may be mentioned prominently in one model, inconsistently in another, or not at all elsewhere.

Without a unified view, it’s difficult to answer basic questions like where your brand shows up, which AI tools mention it, and where the gaps are. This fragmentation makes it easy to overestimate visibility based on a single platform.

2. No clear insight into how AI describes your brand

AI models often mention brands as part of explanations, comparisons, or recommendations, but traditional analytics tools don’t capture how those brands are described. Teams lack visibility into tone, context, sentiment, or whether mentions are positive, neutral, or misleading.

This makes it hard to understand whether AI is reinforcing your intended brand positioning or subtly reshaping it in ways you can’t see.

3. No structured way to measure change over time

AI-generated answers are inherently dynamic. Small changes in prompts, updates to models, or shifts in underlying data can all influence how brands appear. Without consistent, longitudinal tracking, it’s nearly impossible to tell whether visibility is improving, declining, or simply fluctuating.

One-off checks may offer snapshots, but they don’t reveal trends or patterns that matter for long-term strategy.
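To make the idea of longitudinal tracking concrete, here is a minimal Python sketch. It assumes you keep your own log of checks (date, model, answer text) and treats a simple case-insensitive text match as a "mention". The model names, brand names, and answers are invented placeholders, and this is not how any particular tool, including Yoast AI Brand Insights, works internally.

```python
from collections import defaultdict
from datetime import date

# Hypothetical log of manual checks: (check date, model name, answer text).
# All names and answers are invented placeholders, not real model output.
checks = [
    (date(2025, 1, 6), "model_a", "Popular options include ExampleBrand and OtherCo."),
    (date(2025, 1, 6), "model_b", "Many teams choose OtherCo for this."),
    (date(2025, 1, 13), "model_a", "ExampleBrand is a common choice."),
    (date(2025, 1, 13), "model_b", "ExampleBrand and OtherCo are both used."),
]


def mention_rate_by_week(checks, brand):
    """Return {(iso_year, iso_week, model): share of answers mentioning brand}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for day, model, answer in checks:
        iso = day.isocalendar()
        key = (iso[0], iso[1], model)  # group by ISO year, ISO week, and model
        totals[key] += 1
        if brand.lower() in answer.lower():  # naive, case-insensitive match
            hits[key] += 1
    return {key: hits[key] / totals[key] for key in totals}


rates = mention_rate_by_week(checks, "ExampleBrand")
for key in sorted(rates):
    print(key, f"{rates[key]:.0%}")
```

Grouping by week and model turns isolated snapshots into a series you can compare over time. A plain substring match will miss misspellings and will not capture tone, so treat the numbers as a rough signal rather than a precise measurement.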

4. Limited ability to benchmark against competitors

Seeing your brand mentioned in AI answers is a start, but it doesn’t tell you the whole story. The real question is what’s happening around it: which competitors appear more often, how they’re described, and who AI recommends when users are ready to decide.

Without comparative insights, teams struggle to understand whether AI visibility represents a competitive advantage or a missed opportunity.
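One rough way to put a number on competitive visibility is a "share of voice" calculation: of all brand mentions in a set of AI answers, what fraction belongs to each brand? The Python sketch below assumes you have already collected answers to comparison-style prompts. The brand names and answers are hypothetical, and the metric itself is an illustrative choice rather than an established standard.

```python
from collections import Counter

# Hypothetical answers collected for comparison-style prompts.
answers = [
    "For small teams, ExampleBrand and OtherCo are solid picks.",
    "OtherCo is often recommended; ThirdBrand is an alternative.",
    "Most reviewers point to OtherCo.",
]
brands = ["ExampleBrand", "OtherCo", "ThirdBrand"]


def share_of_voice(answers, brands):
    """Return each brand's share of all brand mentions across the answers."""
    counts = Counter()
    for answer in answers:
        text = answer.lower()
        for brand in brands:
            if brand.lower() in text:
                counts[brand] += 1  # count each brand at most once per answer
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}


sov = share_of_voice(answers, brands)
for brand, share in sov.items():
    print(f"{brand}: {share:.0%}")
```

This only counts whether a brand is named. It says nothing about how favorably the brand is described, which still needs a human read or a separate sentiment step.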

5. Missing attribution and source clarity

Some AI models summarize or paraphrase information without clearly attributing sources. When brands are mentioned, it’s not always obvious which pages, articles, or properties influenced the response.

This lack of source visibility makes it difficult to connect AI mentions back to specific content efforts, PR coverage, or SEO work, leaving teams guessing what is actually driving brand representation.

6. Existing tools weren’t built for AI visibility

Traditional SEO and analytics platforms are designed around clicks, impressions, and rankings. They don’t capture AI-powered mentions, sentiment, or visibility trends because AI platforms don’t expose those signals in a structured way.

As a result, teams are left without reliable reporting for one of the fastest-growing discovery channels.

Together, these challenges point to a clear gap: brands need a new way to understand visibility that reflects how AI models surface and interpret information. This is where tools explicitly designed for AI-driven discovery, such as Yoast AI Brand Insights, come into play.

How does Yoast AI Brand Insights help?

AI-driven brand discovery can be fragmented and opaque, which leads to the next practical question: how do brand marketing teams actually make sense of it?

Traditional SEO tools weren’t built to answer that, which is where Yoast AI Brand Insights comes in. It’s designed to help users understand how brands appear in AI-generated answers and is available as part of Yoast SEO AI+.

Rather than focusing on rankings or clicks, Yoast AI Brand Insights focuses on visibility and interpretation across large language models.

Track brand mentions across multiple AI models

One of the biggest gaps in AI visibility is fragmentation. Brands may appear in one AI model but not in another, without any obvious signal to explain why. Yoast AI Brand Insights addresses this by tracking brand mentions across multiple AI platforms, including ChatGPT, Gemini, and Perplexity.

This gives teams a clearer view of where their brand appears, rather than relying on isolated checks or assumptions based on a single model.

Identify gaps, inconsistencies, and opportunities

AI-generated answers don’t just mention brands; they frame them. Yoast AI Brand Insights helps surface patterns in how a brand is described, making it easier to spot:

- Where mentions are missing altogether

- Where descriptions feel outdated or incomplete

- Where competitors appear more frequently or more favorably

These insights turn AI visibility into something teams can actually act on, rather than a black box.

Shared insights for SEO, PR, and content teams

AI-driven discovery sits at the intersection of SEO, content, and brand communication. One of the strengths of Yoast AI Brand Insights is that it provides a shared view of AI visibility that multiple teams can use. SEO teams can connect AI mentions back to site signals, content teams can understand how messaging is interpreted, and PR or brand teams can see how external coverage influences AI narratives.

Instead of working in silos, teams get a common reference point for how the brand appears across AI-driven search experiences.

A natural extension of Yoast’s SEO philosophy

Yoast AI Brand Insights builds on principles Yoast has long emphasized: clarity, consistency, and understanding how search systems interpret content. As AI becomes part of how people discover brands, those same principles now apply beyond traditional search results and into AI-generated answers.

In that sense, Yoast AI Brand Insights isn’t about chasing AI trends. It’s about giving teams a more straightforward way to understand how their brand is represented where discovery is increasingly happening.

From rankings to representation in AI-driven search

AI-driven discovery is no longer an edge case. It’s becoming a regular part of how people explore options, validate decisions, and form opinions about brands. As large language models continue to evolve, the question for brands is not whether they appear in AI-generated answers, but whether they understand how they appear, where they appear, and what story is being told on their behalf. Gaining visibility into that layer is quickly becoming a foundational part of modern brand and search strategy.